In practical machine learning, the journey from raw data to reliable predictions depends heavily on how models are trained, evaluated, and optimized.

Training is the phase where an algorithm learns from labeled or structured data by identifying relationships between input features and target outcomes.

This learning process produces a model capable of making predictions on new data.

Testing, on the other hand, evaluates how well the model generalizes to unseen examples by assessing accuracy, consistency, and robustness.

Without proper separation of training and testing datasets, a model that memorizes patterns instead of learning true relationships can go undetected, only to fail in real-world scenarios.

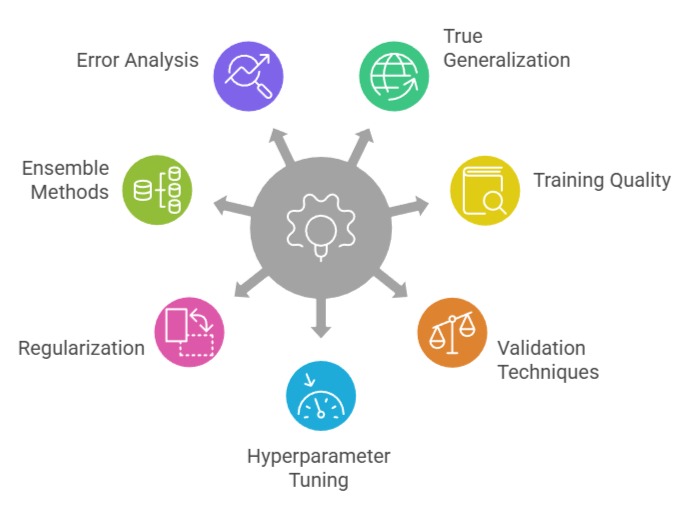

Model improvement is an iterative cycle where performance issues are systematically identified and resolved.

Refinement may involve hyperparameter tuning, feature engineering, data augmentation, addressing imbalance, or choosing more suitable algorithms.

Techniques like regularization, cross-validation, and ensemble learning further strengthen a model’s stability and predictive power.

Importance of Training, Testing and Improving Models

1. Clear Separation of Training and Testing for True Generalization

Separating data into training and testing sets makes it possible to detect whether a model has learned noise or memorized specific examples. If the same samples appear in both phases, performance metrics are inflated and fail to represent real-world behavior.

By isolating the test set, practitioners can objectively measure predictive capability on unseen input, reducing the risk of deploying an overfit model.

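This separation also supports model selection, helping identify which algorithm genuinely performs best rather than appearing strong by chance. Ideally, candidates are compared on a separate validation split so that the test set is used only for the final evaluation.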

Proper split strategies, such as stratification for classification tasks, keep class proportions consistent across the splits. Overall, dataset partitioning lays the foundation for trustworthy evaluation and deployment.
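
A minimal sketch of a stratified split, using only the Python standard library and assuming labels arrive as a plain list. The function name `stratified_split` and the toy labels are hypothetical; libraries such as scikit-learn offer the same idea through `train_test_split(..., stratify=y)`.

```python
import random
from collections import Counter, defaultdict


def stratified_split(labels, test_fraction=0.25, seed=0):
    """Return (train_idx, test_idx) so that each class keeps roughly the
    same proportion in both splits. Indices refer to positions in `labels`."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)

    train_idx, test_idx = [], []
    for idxs in by_class.values():
        rng.shuffle(idxs)  # shuffle within each class
        n_test = round(len(idxs) * test_fraction)
        test_idx.extend(idxs[:n_test])
        train_idx.extend(idxs[n_test:])
    return sorted(train_idx), sorted(test_idx)


# Made-up imbalanced labels: 80 negatives, 20 positives.
labels = [0] * 80 + [1] * 20
train_idx, test_idx = stratified_split(labels, test_fraction=0.25)

print(Counter(labels[i] for i in train_idx))  # Counter({0: 60, 1: 15})
print(Counter(labels[i] for i in test_idx))   # Counter({0: 20, 1: 5})
assert not set(train_idx) & set(test_idx)     # no sample appears in both splits
```

Both splits keep the original 80/20 class ratio, so a rare class cannot vanish from the test set by chance.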

2. Importance of Training Quality and Algorithm Fit

Training quality depends on how well the algorithm matches the structure and behavior of the dataset.

Models trained on insufficient, noisy, or biased data produce unreliable predictions regardless of complexity.

Choosing an appropriate algorithm, whether linear, tree-based, or probabilistic, helps align the learning process with the underlying patterns in the data.

Proper training also involves adjusting learning parameters, handling outliers, managing feature scales, and eliminating redundant features.
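
Managing feature scales is one place where training choices can silently leak test information. The sketch below, a hypothetical stdlib-only helper with made-up values, standardizes a single feature by learning the mean and standard deviation from the training data only and then reusing those statistics on the test data (the same fit-on-train, transform-on-test pattern that scikit-learn's `StandardScaler` follows).

```python
from statistics import mean, pstdev


def fit_standardizer(column):
    """Learn mean and (population) standard deviation from TRAINING values only."""
    mu = mean(column)
    sigma = pstdev(column) or 1.0  # guard against a constant feature
    return mu, sigma


def transform(column, mu, sigma):
    """Apply previously learned statistics; never refit on test data."""
    return [(x - mu) / sigma for x in column]


train_feature = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]  # made-up values
test_feature = [3.0, 10.0]

mu, sigma = fit_standardizer(train_feature)       # mu = 5.0, sigma = 2.0
train_scaled = transform(train_feature, mu, sigma)
test_scaled = transform(test_feature, mu, sigma)  # reuse training statistics
print(test_scaled)  # [-1.0, 2.5]
```

Fitting the scaler on the combined data would let test values shape the training inputs, which is a subtle form of the train/test leakage described earlier.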

High-quality training is crucial because errors made at this stage propagate throughout the entire pipeline.

A thoughtfully trained model performs better, needs fewer downstream corrections, and responds more predictably to later tuning and optimization.

3. Using Validation Techniques to Avoid Overfitting and Underfitting

Validation sets or cross-validation are essential for ensuring that the model is not overly tailored to one particular split of the data.

Overfitting occurs when a model becomes too complex, memorizing noise, while underfitting occurs when it is too simple to capture essential structure.

Techniques like k-fold cross-validation expose the model to various data splits, revealing how consistently it performs.
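
A minimal k-fold sketch in plain Python, assuming a deliberately trivial "model" (predict the training-fold mean) so the fold mechanics stay visible. The helper names and the targets are hypothetical, and the folds are contiguous; real data would normally be shuffled first.

```python
from statistics import mean, pstdev


def k_fold_indices(n, k):
    """Yield (train_idx, val_idx) pairs; every index is used for validation
    exactly once. Folds are contiguous here; shuffle first for real data."""
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val_idx = list(range(start, start + size))
        train_idx = list(range(0, start)) + list(range(start + size, n))
        yield train_idx, val_idx
        start += size


def mean_baseline_mse(y, train_idx, val_idx):
    """Hypothetical 'model': predict the training-fold mean, score by MSE."""
    prediction = mean(y[i] for i in train_idx)
    return mean((y[i] - prediction) ** 2 for i in val_idx)


y = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0]  # made-up targets
scores = [mean_baseline_mse(y, tr, va) for tr, va in k_fold_indices(len(y), k=5)]
print(f"per-fold MSE: {scores}")  # per-fold MSE: [25.25, 6.5, 0.25, 6.5, 25.25]
print(f"mean={mean(scores):.2f}, std={pstdev(scores):.2f}")
```

The spread between folds (0.25 versus 25.25) is exactly what a single train/validation split would hide; reporting the mean together with the standard deviation gives a more honest picture of consistency.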

This helps when tuning the architecture and hyperparameters, such as learning rate, tree depth, and regularization strength.

Using validation effectively strengthens overall reliability, enabling stable performance across different data subsets and reducing the risk of failures in production.

4. Hyperparameter Tuning for Optimized Model Behavior

Hyperparameters govern how models learn, and tuning them precisely can drastically improve results.

Approaches like grid search, random search, or Bayesian optimization allow systematic exploration of parameter combinations.
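
An exhaustive grid search can be sketched in a few lines. Everything here is a hypothetical illustration: a one-dimensional k-nearest-neighbours regressor, a two-parameter grid (`k` and `weighting`), and made-up training and validation data. Random search would sample combinations instead of enumerating them, and Bayesian optimization is not shown; libraries such as scikit-learn package these as `GridSearchCV` and `RandomizedSearchCV`.

```python
import itertools


def knn_predict(train_x, train_y, x, k, weighting):
    """1-D k-NN regression; `weighting` is 'uniform' or 'distance'."""
    # Sort training points by distance to x and keep the k nearest.
    nearest = sorted(zip(train_x, train_y), key=lambda p: abs(p[0] - x))[:k]
    if weighting == "uniform":
        return sum(y for _, y in nearest) / len(nearest)
    # 'distance': closer points count more; epsilon avoids division by zero.
    weights = [1.0 / (abs(px - x) + 1e-9) for px, _ in nearest]
    return sum(w * y for w, (_, y) in zip(weights, nearest)) / sum(weights)


def validation_mse(params, train, val):
    train_x, train_y = train
    errors = [(knn_predict(train_x, train_y, x, **params) - y) ** 2
              for x, y in zip(*val)]
    return sum(errors) / len(errors)


grid = {"k": [1, 3, 5], "weighting": ["uniform", "distance"]}
train = ([0, 1, 2, 3, 4, 5, 6, 7], [0.1, 1.2, 1.9, 3.2, 3.9, 5.1, 6.0, 7.2])
val = ([1.5, 4.5, 6.5], [1.5, 4.5, 6.4])

# Score every combination on the validation split, never on the test set.
results = {}
for combo in itertools.product(*grid.values()):
    params = dict(zip(grid.keys(), combo))
    results[combo] = validation_mse(params, train, val)

best = min(results, key=results.get)
print(f"best (k, weighting) = {best}, MSE = {results[best]:.4f}")
```

The cost grows multiplicatively with every added hyperparameter, which is the main reason random search or Bayesian optimization is preferred for larger search spaces.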

Proper tuning helps avoid convergence to poor minima, stabilizes gradient-based algorithms (for example, through the choice of learning rate), and balances model complexity with generalization ability.
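
How much a single hyperparameter can matter for stability is easy to see on a toy problem. This sketch runs plain gradient descent on f(w) = w² (gradient 2w), an assumption chosen because each update simply multiplies w by (1 − 2·lr): the iteration converges only when 0 < lr < 1 and diverges beyond that.

```python
def gradient_descent(lr, steps=50, w0=5.0):
    """Minimize f(w) = w**2 (gradient 2*w) and return the final w."""
    w = w0
    for _ in range(steps):
        w -= lr * 2 * w  # equivalent to w *= (1 - 2 * lr)
    return w


# lr=0.1 shrinks w steadily, lr=0.9 converges while flipping sign each step,
# lr=1.1 overshoots further every step and diverges.
for lr in (0.1, 0.9, 1.1):
    print(f"lr={lr}: final w = {gradient_descent(lr):.3g}")
```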

For instance, adjusting the number of trees in a Random Forest or the C parameter in SVMs can change predictive outcomes significantly.

Hyperparameter optimization can turn a mediocre model into a strong performer by tailoring its learning dynamics to the characteristics of the dataset.

5. Improving Performance Through Regularization and Constraints

Regularization techniques—such as L1, L2, dropout, and early stopping—help prevent the model from relying excessively on specific features or noise patterns.

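Penalty terms such as L1 and L2 discourage unnecessary complexity directly, while dropout and early stopping constrain it during training; all of them push the model toward simpler, more generalizable solutions.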
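
The effect of an L1 or L2 penalty can be shown in closed form for the simplest possible case. This sketch assumes a single feature, no intercept, and made-up data; it minimizes the squared error plus λ·w² (L2, ridge) or λ·|w| (L1, lasso), whose solutions are w = Σxy / (Σx² + λ) and a soft-thresholded slope respectively. Dropout and early stopping are not covered.

```python
import math


def ridge_slope(xs, ys, lam):
    """Slope minimizing sum((y - w*x)**2) + lam * w**2 (no intercept)."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)


def lasso_slope(xs, ys, lam):
    """Slope minimizing sum((y - w*x)**2) + lam * abs(w): soft-thresholding."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return math.copysign(max(abs(sxy) - lam / 2, 0.0), sxy) / sxx


xs = [1.0, 2.0, 3.0, 4.0]   # made-up feature values
ys = [2.1, 3.9, 6.2, 7.8]   # made-up targets

for lam in (0, 10, 100, 200):
    print(f"lambda={lam:>3}: L2 w={ridge_slope(xs, ys, lam):.3f}  "
          f"L1 w={lasso_slope(xs, ys, lam):.3f}")
```

L2 shrinks the weight smoothly toward zero as λ grows, whereas L1 drives it to exactly zero once λ is large enough, which is why L1 is often used to prune redundant features in high-dimensional data.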

Regularization is especially important in high-dimensional datasets where correlation and redundancy are common.

By encouraging smoother decision boundaries, the model becomes more robust to variations and less sensitive to spurious patterns.

This strengthens long-term performance and enhances stability when deployed in dynamic environments.

6. Boosting Accuracy with Ensemble Methods

Ensemble methods like bagging, boosting, and stacking combine the strengths of multiple algorithms to create more powerful predictors.

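Bagging mainly reduces variance, boosting mainly reduces bias, and stacking learns how to combine different models; together, these approaches correct individual model weaknesses and handle diverse patterns within the dataset.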

For example, boosting trains learners sequentially, with each new learner focusing on the samples its predecessors got wrong, while bagging reduces variance by aggregating learners trained independently on bootstrap samples of the data.
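
Bagging can be sketched with nothing more than bootstrap sampling and a majority vote. The base learner here is a hypothetical one-feature decision stump and the labelled points are made up (including one deliberately noisy label); boosting, which would instead reweight the samples earlier stumps misclassified, is not shown.

```python
import random
from collections import Counter


def fit_stump(xs, ys):
    """Pick threshold t and polarity `pos` minimizing training errors for the
    rule: predict `pos` if x > t else 1 - pos (labels are 0/1)."""
    best = None
    for t in sorted(set(xs)):
        for pos in (0, 1):
            errors = sum((pos if x > t else 1 - pos) != y for x, y in zip(xs, ys))
            if best is None or errors < best[0]:
                best = (errors, t, pos)
    _, t, pos = best
    return lambda x: pos if x > t else 1 - pos


def fit_bagging(xs, ys, n_estimators=25, seed=0):
    rng = random.Random(seed)
    n = len(xs)
    models = []
    for _ in range(n_estimators):
        # Bootstrap: draw n indices with replacement, fit one stump on them.
        idx = [rng.randrange(n) for _ in range(n)]
        models.append(fit_stump([xs[i] for i in idx], [ys[i] for i in idx]))

    def predict(x):
        votes = Counter(m(x) for m in models)
        return votes.most_common(1)[0][0]  # majority vote across stumps
    return predict


xs = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0, 4.5, 5.0]
ys = [0, 0, 0, 0, 1, 0, 1, 1, 1, 1]  # made-up; the label at 3.0 is "noise"
ensemble = fit_bagging(xs, ys)
print([ensemble(x) for x in (0.0, 2.2, 4.8)])
```

Each stump sees a slightly different resample, so its threshold varies; the vote averages out that variation instead of trusting any single, possibly noise-driven, split.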

Ensembles often outperform single models because they mitigate the risk of relying on one algorithm’s assumptions or biases.

Ensembling remains one of the most effective ways to improve performance in competitive ML tasks.

7. Continuous Error Analysis Using Metrics and Residuals

Evaluating errors is more than checking accuracy—it requires examining patterns in misclassifications or residuals.

Continuous analysis reveals whether errors stem from insufficient features, class imbalance, noisy labels, or algorithmic limitations.

By understanding these patterns, practitioners can redesign features, rebalance the dataset, improve preprocessing, or choose better algorithms.

Residual plots, confusion matrices, and metric trends offer insight into where the model struggles most.
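
A small stdlib-only sketch of both diagnostics, using made-up predictions: a confusion matrix with per-class recall for a classifier, and raw residuals for a regressor. The helper names are hypothetical.

```python
from collections import Counter


def confusion_matrix(y_true, y_pred, labels):
    """Rows = true class, columns = predicted class."""
    counts = Counter(zip(y_true, y_pred))
    return [[counts[(t, p)] for p in labels] for t in labels]


def per_class_recall(matrix, labels):
    """Fraction of each true class that was predicted correctly."""
    return {
        label: row[i] / sum(row) if sum(row) else float("nan")
        for i, (label, row) in enumerate(zip(labels, matrix))
    }


labels = ["cat", "dog", "fox"]
y_true = ["cat", "cat", "cat", "dog", "dog", "dog", "fox", "fox"]
y_pred = ["cat", "cat", "dog", "dog", "dog", "dog", "dog", "cat"]

cm = confusion_matrix(y_true, y_pred, labels)
for label, row in zip(labels, cm):
    print(f"{label:>4}: {row}")
print(per_class_recall(cm, labels))

# Regression residuals (observed - predicted) on made-up values; a trend that
# grows with the input suggests a missing feature or nonlinearity.
y_obs = [3.0, 5.0, 7.0, 9.0]
y_hat = [3.5, 5.0, 6.5, 8.0]
residuals = [o - h for o, h in zip(y_obs, y_hat)]
print(residuals)  # [-0.5, 0.0, 0.5, 1.0]
```

Overall accuracy here is 5 of 8 (62.5%), yet the matrix shows that "fox" is never predicted at all, the kind of pattern that points toward rebalancing or new features rather than more tuning.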

This diagnostic process drives targeted improvements instead of guesswork, enabling smarter and more effective refinements.