Machine learning algorithms form the foundation upon which more sophisticated models are built.

These algorithms help machines recognize relationships, classify data, detect patterns, and make informed predictions.

Each algorithm operates on different principles and is suited for distinct problem types—ranging from forecasting numerical outcomes to identifying hidden structures in unlabeled data.

Understanding these core algorithms equips learners with the ability to analyze data behavior, evaluate model performance, and choose the right technique for practical tasks in real-world settings.

They also serve as stepping stones for progressing into advanced techniques like neural networks, ensemble methods, and deep learning architectures.

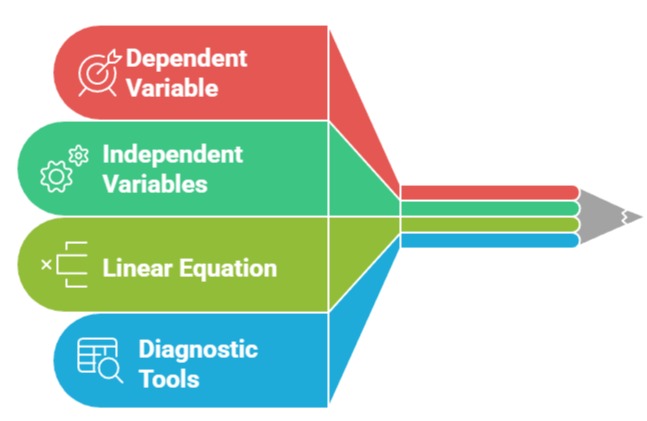

Linear Regression

Linear regression is one of the simplest predictive algorithms used to estimate a continuous numeric value.

It models the relationship between input variables and a target by fitting a straight line (or hyperplane) that minimizes prediction error.

Importance of Linear Regression

1. Linear regression provides a transparent way to understand how independent variables influence an outcome, making it highly useful for interpretation and trend analysis.

2. It is computationally lightweight, making it suitable for large datasets and real-time predictions where speed is essential.

3. The algorithm identifies linear patterns in data, helping analysts detect whether changes in predictors increase or decrease the target value.

4. By examining coefficients, businesses can understand the strength of factors affecting revenue, costs, or demand.

5. The model supports feature selection through statistical metrics like p-values and R², enabling clearer decision-making.

6. It serves as an entry-point algorithm, simplifying the transition to more complex regression methods like Ridge and Lasso.

7. With proper preprocessing, it works well even in multidimensional feature spaces, offering scalable predictions across industries.

Example :Predicting monthly electricity usage based on temperature, number of occupants, and appliance count.

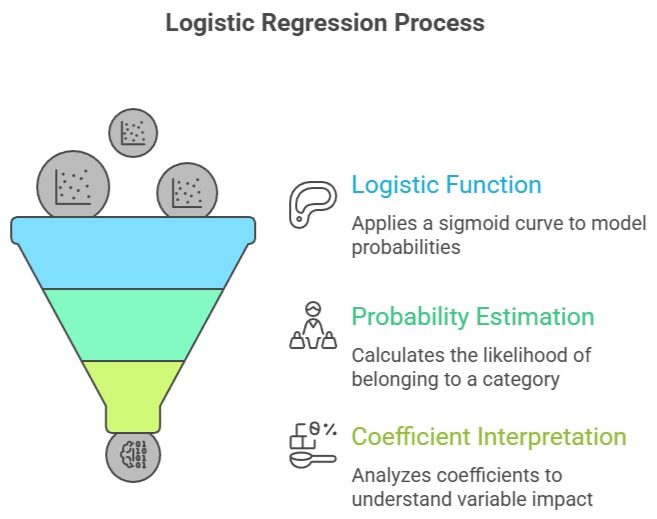

Logistic Regression

Logistic regression is a classification algorithm used for predicting categorical outcomes, especially binary classes.

Instead of forecasting a numeric value, it estimates probabilities using a logistic (sigmoid) function.

Importance of Logistic Regression

1. Logistic regression transforms linear combinations of features into probabilities, providing clear thresholds for decision-making.

2. It is widely used in scenarios requiring outcome probabilities, such as medical diagnosis or risk scoring.

3. The algorithm uses log-odds, making it effective for interpreting how each predictor contributes to increasing or decreasing the likelihood of an event.

4. Because it is less prone to overfitting than many complex models, it performs strongly when datasets are clean and structured.

5. Logistic regression supports regularization, ensuring more stable results even in high-dimensional environments.

6. Its probabilistic nature allows organizations to prioritize cases based on risk levels rather than just binary labels.

7. The method is flexible enough to handle multi-class classification with variants like Softmax Regression.

Example : Predicting whether a customer will renew a subscription (yes/no) based on behavior data.

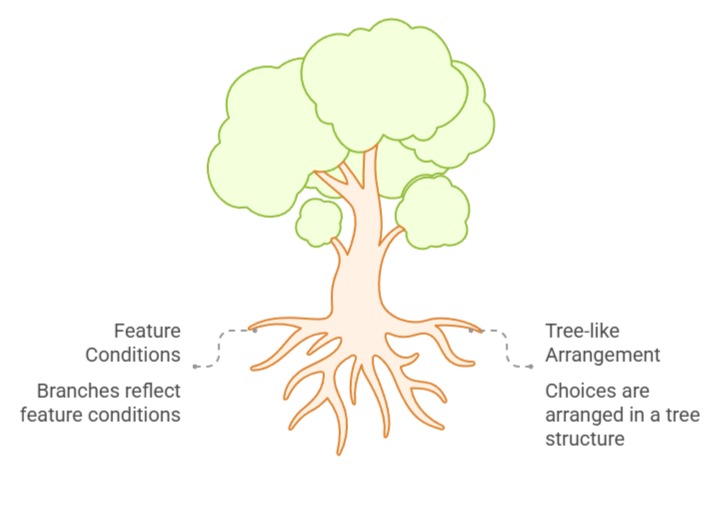

Decision Trees

Decision trees are versatile algorithms that split data into branches based on feature conditions, ultimately forming a tree-like structure of decisions.

They can handle both classification and regression tasks.

Importance of Decision Trees

1. Decision trees mimic human decision processes, making them easy to interpret and communicate to non-technical stakeholders.

2. They adapt well to nonlinear relationships because the branching structure captures complex interactions between variables.

3. Decision trees require minimal data preparation as they can handle missing values, categorical data, and outliers with ease.

4. The model provides visual clarity through tree diagrams, enabling deeper understanding of how predictions are made.

5. Overfitting can occur, but pruning techniques and ensemble extensions (like Random Forests) significantly improve generalization.

6. They are powerful for scenarios involving rules-based segmentation, such as policy automation or eligibility checks.

7. Their hierarchical structure supports granular decision-making, giving organizations insight into feature importance.

Example : Classifying loan applicants into “approved,” “review,” and “rejected” categories based on financial attributes.

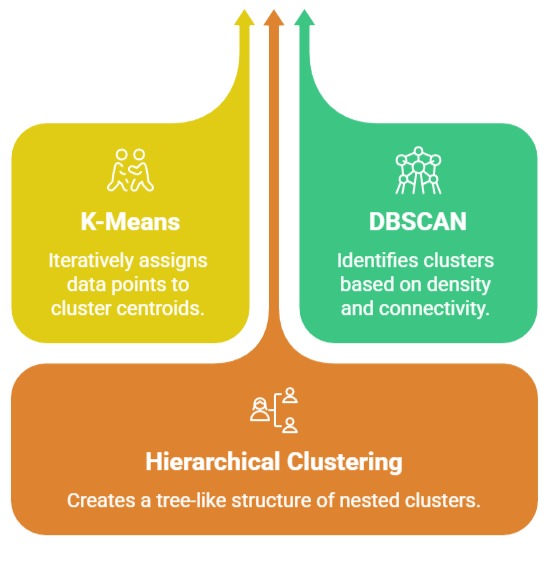

Clustering

Clustering consists of unsupervised learning algorithms that group similar data points into clusters without predefined labels.

Popular techniques include K-Means, DBSCAN, and hierarchical clustering.

Importance of Clustering

1. Clustering reveals natural patterns or structures in data, making it valuable for exploratory analysis and segmentation tasks.

2. It helps organizations categorize large datasets when labels are unavailable or expensive to obtain.

3. Different clustering methods adapt to various data shapes: while K-Means handles spherical clusters, DBSCAN identifies irregular and noise-heavy groups.

4. Clustering enhances personalization by allowing businesses to design targeted strategies for each discovered group.

5. It supports anomaly detection by isolating data points that differ significantly from established clusters.

6. When combined with dimensionality reduction, clustering uncovers deep insights from high-dimensional spaces like text or sensor readings.

7. It enables pre-processing for supervised learning by creating new features or groups that improve downstream model performance.

Example : Grouping customers based on purchasing behavior to design tailored marketing campaigns.

Class Sessions

Sales Campaign

We have a sales campaign on our promoted courses and products. You can purchase 1 products at a discounted price up to 15% discount.