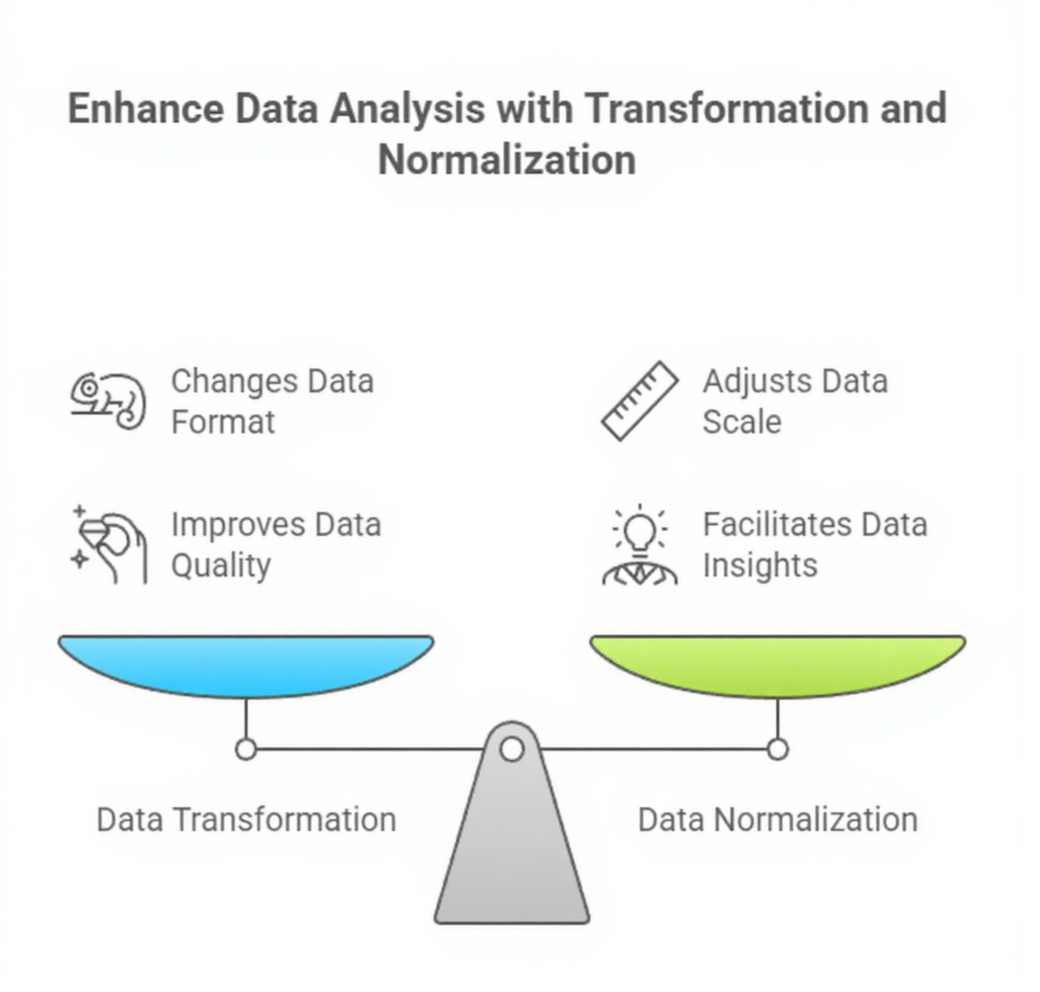

Data transformation and normalization are essential preprocessing steps that shape raw datasets into formats suitable for machine learning algorithms.

Since real-world data rarely comes in a uniform or structured form, transformation techniques help convert existing variables into consistent, meaningful representations.

These adjustments include encoding categorical fields, scaling numeric values, applying mathematical conversions, or restructuring data to highlight relevant relationships.

Normalization focuses specifically on adjusting the range or distribution of numerical features so that no single variable dominates the learning process purely due to its scale.

Distance-based methods such as k-nearest neighbors, and models trained with gradient-based optimization such as neural networks and logistic regression, are particularly sensitive to feature scale and tend to work poorly without it.

Transformations also assist with improving interpretability, reducing skewness, and enhancing model stability.

These techniques ensure that information is presented in a way that reflects underlying patterns rather than superficial variations in measurement units.

With modern data pipelines involving multi-source inputs, normalization becomes even more important to maintain coherence across datasets.

Ultimately, data transformation and normalization work together to create cleaner, more consistent input features, enabling models to train efficiently, converge faster, and produce more dependable results.

Importance of Data Transformation and Normalization

The key reasons data transformation and normalization matter in machine learning are listed below.

1. Ensures Equal Contribution of Features During Model Training

Normalization prevents variables with large numeric ranges from overpowering smaller-scale features.

Without scaling, distance-based algorithms may prioritize high-magnitude attributes even when they hold less predictive value.

Standardization, min–max scaling, and robust scaling help align feature ranges so the model evaluates each variable fairly.

This enhances gradient descent performance, reduces instability, and prevents erratic parameter updates.

When features contribute proportionally, the model is better able to capture true relationships within the data. Overall, normalization supports balanced learning and smoother optimization paths.
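To make the scale problem concrete, here is a minimal sketch using only the Python standard library. The income and age values are hypothetical toy data, and the helper names (`standardize`, `min_max`, `euclidean`) are illustrative. In practice, scikit-learn's `StandardScaler`, `MinMaxScaler`, and `RobustScaler` provide equivalent functionality.

```python
import math
import statistics

def standardize(values):
    """Z-score scaling: subtract the mean, divide by the standard deviation."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)  # population standard deviation
    return [(v - mean) / std for v in values]

def min_max(values):
    """Min-max scaling: map the smallest value to 0 and the largest to 1."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]

def euclidean(a, b):
    """Euclidean distance between two points of equal dimension."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

# Hypothetical toy data: annual income and age for three people.
income = [30_000.0, 32_000.0, 90_000.0]
age = [25.0, 60.0, 27.0]

raw = list(zip(income, age))
scaled = list(zip(standardize(income), standardize(age)))

# On raw values, distances from person 0 are driven almost entirely by income.
raw_d01 = euclidean(raw[0], raw[1])
raw_d02 = euclidean(raw[0], raw[2])

# After standardization, the 35-year age gap counts as much as the income gap.
scaled_d01 = euclidean(scaled[0], scaled[1])
scaled_d02 = euclidean(scaled[0], scaled[2])

print(f"raw:    d(0,1)={raw_d01:.1f}  d(0,2)={raw_d02:.1f}")
print(f"scaled: d(0,1)={scaled_d01:.2f}  d(0,2)={scaled_d02:.2f}")
```

On the raw values, person 1 looks far closer to person 0 than person 2 does, purely because income is measured in larger numbers. After standardization the two distances are of similar size, so a distance-based model would weigh both features.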

2. Improves Convergence Speed for Optimization Algorithms

Many machine learning models rely on gradient-based optimization, which becomes slower when feature scales differ widely.

Large-scale features cause uneven gradient magnitudes, forcing the optimizer to take irregular steps or overshoot minima.

Normalization brings features to similar numerical ranges, which makes the loss surface better conditioned, so that it is no longer far steeper along some directions than others.

This allows algorithms like neural networks and logistic regression to converge more quickly and reliably.

Faster convergence reduces computation time and training cost, making workflows more efficient. Models also exhibit fewer oscillations and greater stability throughout training.
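The effect on convergence can be sketched with plain batch gradient descent on a small least-squares problem. Everything here is an assumption made for illustration: the six data points, the noise-free target, the tolerance, and the step-size rule (one over the trace of the Hessian, a conservative but always stable choice). It is not a tuned optimizer.

```python
import statistics

def standardize(column):
    """Z-score scaling of a single feature column."""
    mean = statistics.fmean(column)
    std = statistics.pstdev(column)
    return [(v - mean) / std for v in column]

def gradient_descent_steps(rows, targets, tol=1e-10, max_steps=10_000):
    """Batch gradient descent for least squares with an intercept.

    Returns the number of steps taken until the mean squared error
    drops below `tol`, or `max_steps` if it never does.
    """
    n = len(rows)
    design = [[1.0, *row] for row in rows]  # prepend the intercept column
    # Step size 1 / trace(Hessian): stable because the trace is at least
    # as large as the largest eigenvalue of the Hessian.
    trace = sum(v * v for row in design for v in row) / n
    lr = 1.0 / trace
    weights = [0.0] * len(design[0])
    for step in range(max_steps):
        errors = [sum(w * v for w, v in zip(weights, row)) - t
                  for row, t in zip(design, targets)]
        if sum(e * e for e in errors) / n < tol:
            return step
        grads = [sum(e * row[j] for e, row in zip(errors, design)) / n
                 for j in range(len(weights))]
        weights = [w - lr * g for w, g in zip(weights, grads)]
    return max_steps

# Hypothetical features on very different scales.
x1 = [0.1, 0.9, 0.3, 0.7, 0.5, 0.2]
x2 = [200.0, 400.0, 900.0, 100.0, 600.0, 800.0]
y = [1.0 + 2.0 * a + 0.003 * b for a, b in zip(x1, x2)]

raw_steps = gradient_descent_steps(list(zip(x1, x2)), y)
scaled_steps = gradient_descent_steps(
    list(zip(standardize(x1), standardize(x2))), y
)

print("steps on raw features:   ", raw_steps)
print("steps on scaled features:", scaled_steps)
```

With the raw features, the large-scale column forces a very small step size, so the run exhausts its step budget without reaching the tolerance. With standardized features, the same procedure reaches the tolerance in far fewer steps.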

3. Helps Resolve Skewed Data Distributions Through Transformations

Transformations such as the logarithm, the square root, and Box-Cox reshape skewed distributions into more symmetric forms.

This is especially important for algorithms assuming normality or linear relationships.

Skewed variables often contain long tails that distort statistical measures and weaken predictive accuracy.

By adjusting these distributions, transformations allow models to better fit underlying patterns without being misled by extreme values.

These techniques improve interpretability and give algorithms a clearer representation of natural variation across the dataset. Handling skewness is crucial when preparing financial, healthcare, or sensor data.
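As a rough illustration of how these transformations reduce skewness, the sketch below computes the skewness of a small right-skewed sample before and after a square-root and a log transform. The amounts are hypothetical, and `skewness` is an illustrative helper using the population form of the third standardized moment. Box-Cox is omitted to keep the example dependency-free; SciPy offers it as `scipy.stats.boxcox`, which requires strictly positive input.

```python
import math
import statistics

def skewness(values):
    """Third standardized moment (population form) of a sample."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    return sum(((v - mean) / std) ** 3 for v in values) / len(values)

# Hypothetical right-skewed data, for example transaction amounts.
amounts = [12.0, 15.0, 18.0, 20.0, 25.0, 30.0, 45.0, 80.0, 250.0, 1200.0]

sqrt_amounts = [math.sqrt(v) for v in amounts]
log_amounts = [math.log1p(v) for v in amounts]  # log(1 + x), safe at zero

raw_skew = skewness(amounts)
sqrt_skew = skewness(sqrt_amounts)
log_skew = skewness(log_amounts)

print(f"raw:  {raw_skew:.2f}")
print(f"sqrt: {sqrt_skew:.2f}")
print(f"log:  {log_skew:.2f}")
```

For this sample the square root reduces the skewness somewhat and the logarithm reduces it further, reflecting that the logarithm compresses the long tail more aggressively.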

4. Supports Categorical Feature Conversion for Model Compatibility

Many machine learning models require numerical input, making transformation of categorical variables essential.

Techniques such as one-hot encoding, label encoding, or target encoding convert text categories into structured numerical formats.

Proper transformation ensures that categorical representations preserve meaning without introducing unintended relationships.

For example, label encoding can imply a spurious order and numeric distance between nominal categories, while one-hot encoding treats every category as equally distinct.

These conversions help models learn patterns from both nominal and ordinal data. Correct categorical treatment broadens the model’s ability to capture diverse real-world information.
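The difference between the two encodings can be seen in a small dependency-free sketch. The `colors` list and both helper functions are illustrative assumptions. Libraries such as pandas (`get_dummies`) and scikit-learn (`OneHotEncoder`, `OrdinalEncoder`) provide production-ready versions.

```python
def label_encode(values):
    """Map each category to an integer index, using sorted category order."""
    categories = sorted(set(values))
    index = {cat: i for i, cat in enumerate(categories)}
    return [index[v] for v in values], categories

def one_hot_encode(values):
    """Map each category to a binary indicator vector."""
    categories = sorted(set(values))
    encoded = [[1 if v == cat else 0 for cat in categories] for v in values]
    return encoded, categories

# Hypothetical nominal feature with no natural order.
colors = ["red", "green", "blue", "green"]

labels, label_categories = label_encode(colors)
one_hot, one_hot_categories = one_hot_encode(colors)

print(label_categories)  # column order used by both encodings
print(labels)
print(one_hot)
```

With label encoding, "red" and "blue" end up two units apart while "red" and "green" are one unit apart, a relationship that does not exist in the data. In the one-hot representation, every pair of distinct categories differs in exactly two positions, so no category is artificially closer to another.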

5. Enhances Feature Comparability Across Multiple Data Sources

When combining data from different platforms, devices, or databases, inconsistencies in scale and unit measurements often occur.

Normalization harmonizes these differences, allowing features from various sources to align seamlessly.

This unification prevents sources with larger ranges from overshadowing others and ensures smooth integration during modeling.

In large pipelines, this step becomes essential for maintaining overall dataset coherence.

Properly normalized inputs allow cross-domain models to generalize more effectively. This enhances reliability in real-world production environments.
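A minimal sketch of harmonizing two sources follows, assuming a hypothetical case in which one source reports temperature in Celsius and the other in Fahrenheit. The readings and function names are invented for illustration. The point is the order of operations: convert to a shared unit first, then scale the combined feature with a single set of statistics.

```python
import statistics

def fahrenheit_to_celsius(f):
    """Convert a Fahrenheit reading to Celsius."""
    return (f - 32.0) * 5.0 / 9.0

def standardize(values):
    """Z-score scaling: subtract the mean, divide by the standard deviation."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    return [(v - mean) / std for v in values]

# Hypothetical readings of the same quantity from two sources.
source_a_celsius = [20.0, 22.0, 25.0]
source_b_fahrenheit = [68.0, 71.6, 77.0]

# Step 1: convert to a shared unit before combining.
source_b_celsius = [fahrenheit_to_celsius(f) for f in source_b_fahrenheit]
combined = source_a_celsius + source_b_celsius

# Step 2: scale the combined feature with one set of statistics.
combined_scaled = standardize(combined)

print([round(v, 2) for v in source_b_celsius])
print([round(v, 2) for v in combined_scaled])
```

Had the Fahrenheit readings been merged without conversion, the second source would appear systematically hotter and would dominate the scaling statistics, even though both sources describe the same temperatures.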

6. Reduces Sensitivity to Noise and Improves Robustness

Unscaled or poorly transformed data can amplify noise, particularly in algorithms that rely on distance metrics, such as clustering methods or SVMs.

Transformations such as the logarithm compress extreme values, and robust scaling limits the influence of outliers on the scaling parameters, making datasets more resilient to minor fluctuations.

When noise sits on a comparable scale across features, the model distinguishes true patterns more easily.

Transformations also highlight consistent behavior by removing unwanted variance caused by unit differences.

This increased robustness directly contributes to higher model accuracy and lower generalization error. Stable inputs produce stable outcomes.
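The sketch below compares min-max scaling with robust scaling (median and interquartile range) on a sample containing a single extreme value. The readings are hypothetical, and the quartile convention (`method="inclusive"` in `statistics.quantiles`) is an assumption; other conventions give slightly different numbers.

```python
import statistics

def min_max(values):
    """Min-max scaling: map the smallest value to 0 and the largest to 1."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]

def robust_scale(values):
    """Center on the median and divide by the interquartile range."""
    q1, median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return [(v - median) / (q3 - q1) for v in values]

# Hypothetical sensor readings with one extreme value.
readings = [10.0, 11.0, 12.0, 13.0, 14.0, 500.0]

mm = min_max(readings)
rb = robust_scale(readings)

print("min-max:", [round(v, 4) for v in mm])
print("robust: ", [round(v, 2) for v in rb])
```

Under min-max scaling, the single extreme reading sets the range, so the five ordinary readings are squeezed into a tiny interval near zero. Under robust scaling, the ordinary readings keep a usable spread because the median and interquartile range ignore the outlier. Note that the outlier itself remains extreme after robust scaling; if it should be pulled in, a compressing transformation or clipping is needed in addition.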
