Data acquisition refers to the systematic process of collecting raw information from various sources so it can be transformed into meaningful insights.

In data science and machine learning, this step is foundational because the quality and relevance of collected data often determine the success of the model.

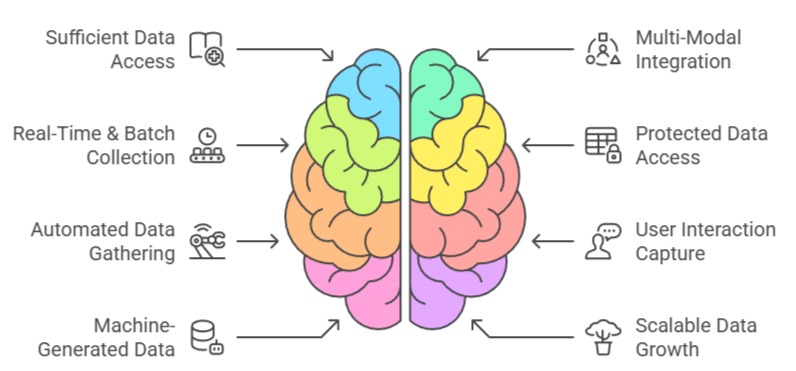

Different acquisition approaches are used depending on the project’s nature, available resources, and required data granularity. Below are the major methods, explained in detail with examples directly related to ML and DS workflows.

Importance of Data Acquisition Methods

1. Ensures Access to Sufficient Data for Building Reliable Models

Data acquisition is the first point at which a project determines whether its machine learning models will have good-quality data to learn from.

A model’s accuracy, generalization ability, and fairness depend largely on how well the collected data reflects real-world conditions.

High-quality acquisition strategies gather diverse, complete, and balanced samples rather than relying on limited or biased sources.

When collection is inadequate, a model has to extrapolate from sparse or skewed examples, which weakens predictive performance on cases the data did not cover.

By carefully selecting acquisition methods—whether logs, sensors, surveys, or APIs—data scientists ensure a strong foundation for every downstream process.
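
As a rough illustration of checking whether collected samples are complete and balanced, the sketch below summarizes a small batch of records. The field names (`amount`, `country`, `is_fraud`) and the `summarize_quality` helper are hypothetical and chosen only for this example.

```python
from collections import Counter

def summarize_quality(rows, label_key, required_keys):
    """Report the share of incomplete records and the label balance."""
    total = len(rows)
    # A record is incomplete if any required field is absent or None.
    incomplete = sum(
        1 for r in rows if any(r.get(k) is None for k in required_keys)
    )
    labels = Counter(
        r[label_key] for r in rows if r.get(label_key) is not None
    )
    labelled = sum(labels.values())
    return {
        "rows": total,
        "incomplete_fraction": incomplete / total if total else 0.0,
        "label_share": (
            {k: v / labelled for k, v in labels.items()} if labelled else {}
        ),
    }

# Hypothetical sample of collected transaction records.
sample = [
    {"amount": 12.5, "country": "DE", "is_fraud": 0},
    {"amount": None, "country": "DE", "is_fraud": 0},
    {"amount": 80.0, "country": "FR", "is_fraud": 1},
    {"amount": 7.2, "country": "DE", "is_fraud": 0},
]
report = summarize_quality(sample, "is_fraud", ["amount", "country"])
print(report)
```

A summary like this only flags obvious gaps. Whether the sample reflects real-world conditions still has to be judged against the population the model will serve.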

2. Allows Integration of Multiple Data Modalities for Richer Insights

Modern analytical projects often require combining text, images, numerical records, and streaming events into a unified dataset.

Effective acquisition methods support this integration by using compatible protocols, consistent formats, and scalable pipelines.

For example, customer data might include transaction history (structured), support chats (text), and profile photos (images).

Bringing these together gives a model several complementary views of the same customer's behavior.

Such multimodal data acquisition supports advanced applications like sentiment analysis, recommendation engines, and fraud detection.
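
One way to picture the customer example above is a single record type that holds all three modalities, keyed by a shared customer identifier. This is a minimal sketch: the `CustomerRecord` class, the `build_records` helper, and the sample values are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class CustomerRecord:
    customer_id: str
    transactions: list = field(default_factory=list)   # structured rows
    chat_messages: list = field(default_factory=list)  # free text
    photo_path: Optional[str] = None                    # reference to an image file

def build_records(transactions, chats, photos):
    """Merge three separately acquired sources into one record per customer."""
    records = {}

    def get(customer_id):
        # Create the record on first sight of a customer, reuse it afterwards.
        if customer_id not in records:
            records[customer_id] = CustomerRecord(customer_id)
        return records[customer_id]

    for t in transactions:
        get(t["customer_id"]).transactions.append(t)
    for c in chats:
        get(c["customer_id"]).chat_messages.append(c["text"])
    for customer_id, path in photos.items():
        get(customer_id).photo_path = path
    return records

# Hypothetical inputs from three different acquisition channels.
transactions = [
    {"customer_id": "c1", "amount": 19.99},
    {"customer_id": "c1", "amount": 5.00},
    {"customer_id": "c2", "amount": 42.00},
]
chats = [{"customer_id": "c1", "text": "My order arrived late."}]
photos = {"c2": "photos/c2.png"}

records = build_records(transactions, chats, photos)
print(records["c1"])
```

The shared key is what makes the integration possible. Sources that lack a consistent identifier need a matching step before they can be combined this way.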

3. Supports Real-Time and Batch-Level Collection for Different Use Cases

Not all machine learning systems operate under the same timing requirements.

Real-time applications—such as anomaly detection in financial transactions—depend on continuous data acquisition pipelines that provide immediate updates.

Batch workflows, on the other hand, collect large amounts of data at scheduled intervals for deeper offline analysis.

Understanding the difference helps teams design pipelines with the right tools, from streaming platforms like Kafka to traditional ETL processes.

This flexibility lets both fast-response and periodic analytical models receive data at the cadence they need.
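
The difference between the two modes can be sketched with plain Python generators, without any streaming platform. In this illustrative example, `event_source` stands in for a live feed, and the threshold and batch size are arbitrary assumptions.

```python
from itertools import islice

def event_source():
    """Stand-in for a live feed; yields (transaction_id, amount) pairs."""
    amounts = [20.0, 35.0, 5000.0, 18.0, 42.0, 27.0]
    for tx_id, amount in enumerate(amounts):
        yield tx_id, amount

def stream_consumer(events, threshold):
    """Inspect each event as it arrives and flag large amounts right away."""
    flagged = []
    for tx_id, amount in events:
        if amount > threshold:
            flagged.append(tx_id)
    return flagged

def batch_consumer(events, batch_size):
    """Collect events into fixed-size batches and compute each batch's mean."""
    means = []
    iterator = iter(events)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            break
        means.append(sum(amount for _, amount in batch) / len(batch))
    return means

flagged = stream_consumer(event_source(), threshold=1000.0)
batch_means = batch_consumer(event_source(), batch_size=3)
print(flagged, batch_means)
```

The streaming consumer can react to the large transaction as soon as it is seen, while the batch consumer only learns about it once the whole batch has been collected.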

4. Provides Mechanisms for Accessing Protected or Internal Enterprise Data

Organizations often store essential information inside secure databases, data warehouses, and cloud repositories.

Data acquisition techniques such as SQL querying, data lake ingestion, and ETL workflows extract these records under controlled permissions.

These processes help sensitive datasets stay compliant with data governance policies while remaining accessible for modeling.

Proper access also reduces fragmentation by allowing teams to consolidate data previously isolated in different departments.

This centralized acquisition makes it easier to keep data consistent and to track its lineage.
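
As a small sketch of SQL-based extraction under restricted access, the example below uses Python's built-in `sqlite3` module with an in-memory database. The table, the view name `customers_for_modeling`, and the sample rows are hypothetical. A production warehouse would enforce permissions through its own roles and grants rather than through a view alone.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE customers (
    id INTEGER PRIMARY KEY,
    email TEXT,
    region TEXT,
    lifetime_value REAL
);
INSERT INTO customers VALUES
    (1, 'a@example.com', 'EU', 120.0),
    (2, 'b@example.com', 'US', 300.0),
    (3, 'c@example.com', 'EU', 80.0);
-- The view leaves out the email column so modeling code never reads it.
CREATE VIEW customers_for_modeling AS
    SELECT id, region, lifetime_value FROM customers;
""")

def extract_region(connection, region):
    """Pull modeling columns for one region using a parameterized query."""
    cursor = connection.execute(
        "SELECT id, region, lifetime_value "
        "FROM customers_for_modeling WHERE region = ? ORDER BY id",
        (region,),
    )
    return cursor.fetchall()

rows = extract_region(conn, "EU")
print(rows)
```

Passing the region as a bound parameter instead of formatting it into the SQL string avoids injection problems when the value comes from user input.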

5. Facilitates Automated Data Gathering Through APIs and Web Services

APIs are now among the most efficient ways to acquire external data, especially when integrating weather updates, financial indicators, or social media trends.

API-based acquisition enables automation, scalability, and consistent updating with minimal manual intervention.

For machine learning tasks that depend on dynamic environments—market forecasting, risk analysis, or recommendation engines—this continuous feed is essential.

By leveraging APIs, organizations can enrich internal datasets with valuable external information, enhancing predictive accuracy and contextual understanding.
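
Automated API acquisition usually needs some tolerance for transient network failures. The sketch below shows one possible retry loop with exponential backoff, using only the standard library. The function names and the example URL are placeholders rather than a real service, and the retry policy is an assumption.

```python
import json
import time
import urllib.request

def http_get_json(url, timeout=10):
    """Fetch a URL and decode the response body as JSON."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))

def fetch_with_retry(url, fetch=http_get_json, attempts=3,
                     base_delay=1.0, sleep=time.sleep):
    """Call fetch(url), retrying on OSError with exponential backoff.

    urllib.error.URLError is a subclass of OSError, so network failures
    raised by http_get_json are retried. Other exceptions propagate.
    """
    last_error = None
    for attempt in range(attempts):
        try:
            return fetch(url)
        except OSError as err:
            last_error = err
            if attempt < attempts - 1:
                # Wait 1x, 2x, 4x ... base_delay between attempts.
                sleep(base_delay * 2 ** attempt)
    raise last_error

# Example call (placeholder URL, not a real endpoint):
# data = fetch_with_retry("https://api.example.com/v1/weather?city=Berlin")
```

The `fetch` and `sleep` parameters are injectable so the retry logic can be exercised in tests without touching the network.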

6. Helps Capture User Interactions and Behavioral Patterns

Web and mobile platforms generate a vast amount of data through user interactions, and acquisition techniques such as event tracking, clickstream logging, and session recording help capture this behavior.

These logs offer detailed insights into preferences, engagement patterns, and usability challenges.

Behavioral acquisition is especially valuable for ML applications like personalization, churn prediction, and marketing analytics.

Capturing these interactions accurately provides a dynamic dataset that reflects evolving user habits, making models more adaptive and responsive.
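
Raw clickstream events are often grouped into sessions before they are used for modeling. The following sketch splits each user's events into sessions whenever the gap between consecutive events exceeds a cutoff. The 30-minute cutoff, the event tuple layout, and the action names are assumptions for this example.

```python
from collections import defaultdict

SESSION_GAP_SECONDS = 30 * 60  # assumed inactivity cutoff

def sessionize(events, gap=SESSION_GAP_SECONDS):
    """Group (user_id, timestamp, action) events into per-user sessions."""
    by_user = defaultdict(list)
    for user_id, timestamp, action in events:
        by_user[user_id].append((timestamp, action))

    sessions = {}
    for user_id, items in by_user.items():
        items.sort()  # order each user's events by timestamp
        user_sessions = []
        current = []
        last_timestamp = None
        for timestamp, action in items:
            # Start a new session after a long period of inactivity.
            if last_timestamp is not None and timestamp - last_timestamp > gap:
                user_sessions.append(current)
                current = []
            current.append(action)
            last_timestamp = timestamp
        if current:
            user_sessions.append(current)
        sessions[user_id] = user_sessions
    return sessions

# Hypothetical events: (user_id, seconds since start of log, action).
events = [
    ("u1", 0, "view_home"),
    ("u1", 120, "view_product"),
    ("u2", 60, "view_home"),
    ("u1", 4000, "view_cart"),
    ("u1", 4100, "checkout"),
]
sessions = sessionize(events)
print(sessions)
```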

7. Enables Collection of Machine-Generated Data From IoT and Sensor Networks

In industries such as healthcare, manufacturing, and transportation, devices and sensors continuously generate high-frequency readings.

Data acquisition systems collect these measurements in real time to support monitoring, forecasting, and anomaly detection applications.

The challenge lies in handling the volume and velocity of these streams while ensuring no critical information is lost.

With robust acquisition pipelines, organizations can convert raw sensor data into actionable insights for maintenance, safety, and operational efficiency.

This expands machine learning applications into complex physical environments.
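
To make the concern about lost readings concrete, the sketch below scans a stream of sensor readings, reports gaps in the sequence numbers, and flags values that deviate strongly from a rolling window. The `(seq, value)` layout, the window size, and the z-score threshold are illustrative assumptions, not a recommended configuration.

```python
from collections import deque
from statistics import mean, pstdev

def monitor(readings, window=5, z_threshold=3.0):
    """Scan (seq, value) readings; return missing sequence numbers and outliers."""
    recent = deque(maxlen=window)  # rolling window of the latest values
    missing, outliers = [], []
    expected = None
    for seq, value in readings:
        # A jump in the sequence number means readings were dropped.
        if expected is not None and seq > expected:
            missing.extend(range(expected, seq))
        expected = seq + 1
        # Only score a value once the window is full.
        if len(recent) == window:
            mu, sigma = mean(recent), pstdev(recent)
            if sigma > 0 and abs(value - mu) / sigma > z_threshold:
                outliers.append(seq)
        recent.append(value)
    return missing, outliers

# Hypothetical temperature readings; sequence number 5 never arrived.
readings = [
    (0, 20.0), (1, 20.5), (2, 19.5), (3, 20.0), (4, 20.5),
    (6, 19.5), (7, 35.0), (8, 20.0),
]
missing, outliers = monitor(readings)
print(missing, outliers)
```

Note that a flagged value still enters the rolling window here, so it widens the spread used to score the next few readings. A more careful design might exclude outliers from the window.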

8. Supports Scalable Data Growth for Long-Term ML Lifecycle Needs

As machine learning systems evolve, the amount and variety of data they require typically grow.

Effective acquisition methods are designed to scale smoothly, whether expanding into additional data sources, increasing sampling frequency, or adapting to new formats.

Scalable data intake ensures that future analyses do not face bottlenecks due to outdated collection mechanisms.

This adaptability also prepares organizations for the integration of new technologies, such as cloud-based pipelines, advanced telemetry, or real-time dashboards.

Scalable acquisition supports the longevity and reliability of the entire ML pipeline.
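
One common way to keep an intake layer open to new formats is a small parser registry, so that adding a format means registering one function rather than changing the ingestion code. The sketch below is only an illustration of that idea: the `register` decorator, the `ingest` function, and the two formats shown are assumptions.

```python
import csv
import io
import json

PARSERS = {}  # maps a format name to its parser function

def register(fmt):
    """Decorator that adds a parser function to the registry."""
    def decorator(func):
        PARSERS[fmt] = func
        return func
    return decorator

@register("csv")
def parse_csv(text):
    # csv.DictReader yields one dict per row, with all values as strings.
    return list(csv.DictReader(io.StringIO(text)))

@register("jsonl")
def parse_jsonl(text):
    # One JSON object per non-empty line.
    return [json.loads(line) for line in text.splitlines() if line.strip()]

def ingest(fmt, text):
    """Parse raw text with whichever parser is registered for fmt."""
    try:
        parser = PARSERS[fmt]
    except KeyError:
        raise ValueError(f"no parser registered for format {fmt!r}") from None
    return parser(text)

csv_rows = ingest("csv", "id,value\n1,3.5\n")
jsonl_rows = ingest("jsonl", '{"id": 2, "value": 4.0}\n')
print(csv_rows, jsonl_rows)
```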