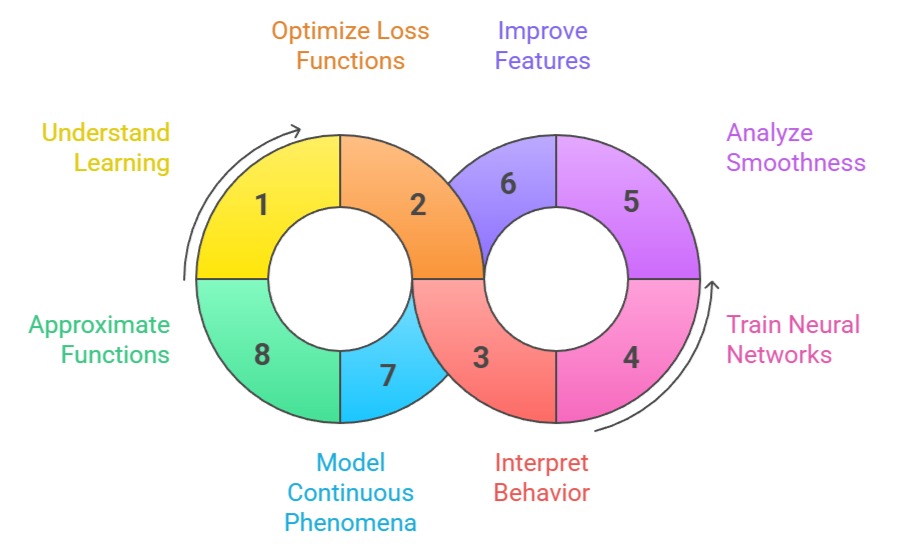

Calculus plays a foundational role in shaping how machine learning models learn, adapt, and optimize their performance.

At the core of most algorithms lies the need to understand how small changes in parameters influence the output or loss value.

Concepts such as derivatives, partial derivatives, gradients, and optimization help quantify the rate of change and guide the model during training.

In data science, calculus also helps explain the shapes of functions, behavior of cost curves, curvature of optimization surfaces, and the stability of learning dynamics.

Whether training linear models or deep neural networks, calculus provides the mathematical engine that allows models to improve over time through systematic parameter updates.

Examples

1. Using derivatives to compute the slope of a loss function in gradient descent.

2. Applying partial derivatives to determine how each weight affects prediction error.

3. Using gradients, computed by backpropagation, to adjust weights in neural networks.

4. Using calculus-based optimization algorithms to minimize loss functions such as MSE, cross-entropy, or hinge loss.

Key Importance Points

1. Enables Understanding of How ML Models Learn Through Gradients

Calculus makes it possible to compute the direction in which a small parameter adjustment reduces the error most quickly: the negative of the gradient.

Gradients, derived using partial derivatives, indicate how sensitive the loss is to each parameter.

This allows the algorithm to make systematic updates rather than random guesses.

Without derivatives, gradient descent and its numerous variants would not function, leaving models unable to improve through iterative learning.

Understanding this relationship gives practitioners deeper insight into why certain models converge faster while others struggle.
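The update loop described above can be sketched in a few lines. This is a minimal illustration, not a production implementation: the toy data, the learning rate of 0.05, and the names `mse`, `mse_gradient`, `w`, and `b` are all assumptions chosen for the example.

```python
# Fit y ≈ w * x + b by gradient descent on mean squared error (MSE).
# Toy data generated from y = 2x + 1 (an assumption for illustration).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

def mse(w, b):
    """Mean squared error of the line w * x + b on the toy data."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def mse_gradient(w, b):
    """Partial derivatives of the MSE with respect to w and b."""
    n = len(xs)
    dw = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
    db = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
    return dw, db

w, b = 0.0, 0.0
learning_rate = 0.05  # small enough for this data; an illustrative choice
for step in range(2000):
    dw, db = mse_gradient(w, b)
    # Move against the gradient: the direction of steepest local decrease.
    w -= learning_rate * dw
    b -= learning_rate * db

print(f"w = {w:.4f}, b = {b:.4f}, loss = {mse(w, b):.2e}")
```

Each partial derivative says how much the loss changes per unit change in one parameter, so the two parameters are updated by different amounts at every step.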

2. Supports Optimization of Complex Loss Functions Across High Dimensions

Most machine learning problems require minimizing functions that depend on thousands or millions of parameters.

Calculus provides tools for navigating these high-dimensional surfaces efficiently.

Derivatives help measure the steepness of the loss curve, while second-order concepts like curvature assist in determining how quickly to move along particular directions.

Optimization algorithms exploit these properties to avoid overshooting, stagnation, or divergence. This makes calculus indispensable for handling difficult optimization landscapes encountered in deep learning.
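A one-dimensional sketch can make overshooting and divergence concrete. It assumes the toy loss f(w) = w², whose derivative is 2w; the function name `descend` and the two learning rates are illustrative choices.

```python
def descend(learning_rate, steps=50, start=1.0):
    """Run gradient descent on f(w) = w**2, whose derivative is 2 * w."""
    w = start
    for _ in range(steps):
        w -= learning_rate * 2 * w
    return w

# With learning_rate = 0.1 each step multiplies w by 0.8, so w shrinks toward 0.
small_step = descend(0.1)

# With learning_rate = 1.1 each step multiplies w by -1.2, so w overshoots
# the minimum, flips sign, and grows in magnitude: the run diverges.
large_step = descend(1.1)

print(small_step, large_step)
```

The same gradient information drives both runs; only the step size differs, which is why the steepness of the loss matters when choosing a learning rate.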

3. Helps Interpret Model Behavior Through Function Analysis

Many machine learning models rely on smoothly varying functions such as activation functions, error surfaces, or probability distributions.

Calculus allows analysts to explore their shapes, identify increasing or decreasing regions, find turning points, and understand asymptotic behavior.

This level of insight is valuable when selecting activation functions, adjusting regularization strategies, or diagnosing issues like vanishing gradients.

By analyzing these behaviors mathematically, practitioners can make informed decisions to improve training stability.
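As an example of this kind of function analysis, the sketch below evaluates the derivative of the sigmoid activation at a few points. The sample points and the ten-layer depth are assumptions for illustration, and the bound at the end ignores the effect of the weights.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def sigmoid_derivative(x):
    """d/dx sigmoid(x) = sigmoid(x) * (1 - sigmoid(x))."""
    s = sigmoid(x)
    return s * (1.0 - s)

# The derivative peaks at x = 0 and flattens toward 0 for large |x|.
for x in (-10.0, -2.0, 0.0, 2.0, 10.0):
    print(f"x = {x:6.1f}  sigmoid'(x) = {sigmoid_derivative(x):.6f}")

# The chain rule multiplies one such factor per layer. Since each factor is
# at most 0.25, ten stacked sigmoids contribute at most 0.25 ** 10.
upper_bound_10_layers = 0.25 ** 10
print(f"upper bound over 10 layers: {upper_bound_10_layers:.2e}")
```

The flat tails and the small maximum slope are one way to see why deep stacks of sigmoid units are prone to vanishing gradients.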

4. Drives the Training of Neural Networks Through Backpropagation

Backpropagation is fundamentally built on the chain rule of calculus, allowing gradients to flow from the output layer to all previous layers.

This mechanism gives each parameter an update proportional to the partial derivative of the loss with respect to that parameter, a local measure of its contribution to the error.

The chain rule enables the composition of multiple transformations, making deep architectures possible.

Without this calculus-based propagation mechanism, training deep learning models such as CNNs and transformers would be impractical at scale. In this sense, calculus forms the backbone of modern AI.
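The chain rule can be traced by hand on a very small network. The sketch below assumes a hypothetical two-weight model, y = w2 · tanh(w1 · x) with a squared-error loss, and checks the hand-derived gradients against a finite-difference estimate. All names and numeric values are illustrative.

```python
import math

def forward(w1, w2, x, target):
    """Forward pass: z = w1 * x, h = tanh(z), y = w2 * h, loss = (y - target)^2."""
    z = w1 * x
    h = math.tanh(z)
    y = w2 * h
    loss = (y - target) ** 2
    return h, y, loss

def backward(w1, w2, x, target):
    """Backward pass: apply the chain rule from the loss back to each weight."""
    h, y, _ = forward(w1, w2, x, target)
    dloss_dy = 2.0 * (y - target)
    dloss_dw2 = dloss_dy * h               # y = w2 * h
    dloss_dh = dloss_dy * w2
    dloss_dz = dloss_dh * (1.0 - h ** 2)   # derivative of tanh(z) is 1 - tanh(z)^2
    dloss_dw1 = dloss_dz * x               # z = w1 * x
    return dloss_dw1, dloss_dw2

def numerical_gradient(w1, w2, x, target, eps=1e-5):
    """Central finite differences, used only to check the analytic result."""
    loss = lambda a, b: forward(a, b, x, target)[2]
    d1 = (loss(w1 + eps, w2) - loss(w1 - eps, w2)) / (2 * eps)
    d2 = (loss(w1, w2 + eps) - loss(w1, w2 - eps)) / (2 * eps)
    return d1, d2

w1, w2, x, target = 0.5, -1.5, 2.0, 1.0
analytic = backward(w1, w2, x, target)
numeric = numerical_gradient(w1, w2, x, target)
print("analytic:", analytic)
print("numeric: ", numeric)
```

Automatic differentiation libraries carry out the same bookkeeping for every layer, reusing intermediate values from the forward pass instead of re-deriving each gradient from scratch.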

5. Essential for Understanding Smoothness, Curvature, and Convergence Behavior

Second-order calculus concepts such as Hessians and curvature help explain how sharply or smoothly a function changes across different directions.

These characteristics influence step sizes, learning rates, and how optimization algorithms behave near minima or saddle points.

Understanding curvature helps differentiate between stable and unstable regions in the loss landscape, guiding model adjustments.

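This knowledge is particularly important when comparing optimizers: Newton's method uses the Hessian directly, while adaptive first-order methods such as RMSprop rescale each parameter's step using a running average of squared gradients. Reasoning about curvature helps keep optimization from becoming arbitrary or unstable.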
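The effect of curvature on step sizes shows up even on a simple quadratic. The sketch below assumes the toy function f(x, y) = 0.5 · (x² + 100y²), whose Hessian is diagonal and constant, and compares plain gradient descent with a single Newton step. The function, learning rate, and starting point are illustrative assumptions.

```python
# f(x, y) = 0.5 * (x**2 + 100 * y**2): gentle curvature along x, steep along y.
def gradient(x, y):
    return x, 100.0 * y

HESSIAN_DIAGONAL = (1.0, 100.0)  # second derivatives along x and y

def gradient_descent(x, y, learning_rate=0.01, steps=100):
    """One shared step size; it must stay below 2 / 100 to be stable along y."""
    for _ in range(steps):
        gx, gy = gradient(x, y)
        x -= learning_rate * gx
        y -= learning_rate * gy
    return x, y

def newton_step(x, y):
    """Divide each gradient component by the curvature in that direction."""
    gx, gy = gradient(x, y)
    hx, hy = HESSIAN_DIAGONAL
    return x - gx / hx, y - gy / hy

gd_point = gradient_descent(1.0, 1.0)
newton_point = newton_step(1.0, 1.0)
print("gradient descent after 100 steps:", gd_point)
print("one Newton step:                 ", newton_point)
```

The steep direction caps the learning rate, so gradient descent crawls along the flat direction, while the curvature-aware step reaches the minimum of this quadratic at once. For real models the Hessian is neither diagonal nor cheap to compute, which is one reason first-order methods remain common.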

6. Improves Feature Engineering Through Sensitivity and Rate-of-Change Analysis

Calculus helps evaluate how sensitive an output variable is to changes in specific features.

By analyzing derivatives, data scientists can identify which inputs have the strongest influence on predictions.

This can support feature selection and noise reduction by flagging inputs with little local influence, although derivatives alone do not reveal multicollinearity or redundancy among attributes.

Understanding the rate of change also supports model interpretation and explainability, making predictions more transparent.

This calculus-driven reasoning strengthens the overall reliability of analytical outcomes.
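A rough sketch of local sensitivity analysis follows. The `predict` function is a hypothetical stand-in for a trained model, and the evaluation point is arbitrary; the partial derivatives are estimated with central finite differences rather than taken from any particular library.

```python
def predict(features):
    """Hypothetical model used only for illustration."""
    x0, x1, x2 = features
    return 3.0 * x0 + 0.5 * x1 ** 2 + 0.0 * x2

def sensitivities(model, features, eps=1e-5):
    """Estimate the partial derivative of the output for each feature."""
    result = []
    for i in range(len(features)):
        up = list(features)
        down = list(features)
        up[i] += eps
        down[i] -= eps
        result.append((model(up) - model(down)) / (2 * eps))
    return result

point = [1.0, 2.0, 5.0]
local_sensitivity = sensitivities(predict, point)
print(local_sensitivity)
```

These values are local: they describe the model near `point` only and depend on the units of each feature, so features are usually put on a comparable scale before the numbers are compared.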

7. Facilitates Modeling Continuous Phenomena and Real-Time Predictions

Many real-world processes—such as changes in demand, temperature fluctuations, stock price movements, or sensor readings—evolve continuously.

Calculus offers a formal framework to capture these dynamics through continuous functions and differential equations.

Models can adapt by analyzing instantaneous rates of change, allowing more accurate forecasting or anomaly detection.

This continuous modeling capability becomes crucial in fields like finance, energy, climate science, and healthcare analytics.
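As a small illustration of modeling with a differential equation, the sketch below assumes Newton's law of cooling, dT/dt = -k (T - T_ambient), and steps it forward with Euler's method. The constants and the names `cooling_rate` and `euler_simulate` are illustrative assumptions.

```python
import math

def cooling_rate(temp, ambient, k):
    """Instantaneous rate of change dT/dt under Newton's law of cooling."""
    return -k * (temp - ambient)

def euler_simulate(temp0, ambient, k, dt, steps):
    """Advance the temperature with repeated small steps of size dt."""
    temp = temp0
    for _ in range(steps):
        temp += dt * cooling_rate(temp, ambient, k)
    return temp

temp0, ambient, k = 90.0, 20.0, 0.5
dt, steps = 0.01, 200  # simulates 2.0 time units in total

approx = euler_simulate(temp0, ambient, k, dt, steps)
# This equation has a closed-form solution, which allows a direct comparison.
exact = ambient + (temp0 - ambient) * math.exp(-k * dt * steps)
print(f"Euler: {approx:.3f}, exact: {exact:.3f}")
```

Most practical systems have no closed-form solution, so numerical stepping of this kind, usually with more accurate schemes than Euler's method, is what forecasting code tends to rely on.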

8. Powers Smooth Function Approximation in ML Models

Machine learning models, especially neural networks, operate by approximating unknown functions that map inputs to outputs.

This approximation relies on combining differentiable transformations whose gradients can be computed efficiently.

Calculus provides the tools to check whether these approximations remain stable, smooth, and expressive enough to fit complex data patterns.

Training relies on activation functions, loss functions, and regularizers being differentiable, or at least differentiable almost everywhere as with ReLU, which makes calculus fundamental to every stage of model training.
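Differentiability in practice is often piecewise. The sketch below looks at ReLU, which has no derivative at exactly 0 because its left and right slopes differ there. Returning 0 at that point is a common convention and is assumed here; the names `relu` and `relu_grad` are illustrative.

```python
def relu(x):
    return max(0.0, x)

def relu_grad(x):
    """Slope of ReLU, using the assumed convention of 0 at x == 0."""
    return 1.0 if x > 0 else 0.0

h = 1e-6
# One-sided difference quotients at 0 disagree, so no single derivative exists.
right_slope = (relu(0.0 + h) - relu(0.0)) / h
left_slope = (relu(0.0) - relu(0.0 - h)) / h
print(right_slope, left_slope)

# Away from 0 the slope is well defined, which is what training relies on.
print(relu_grad(2.0), relu_grad(-2.0), relu_grad(0.0))
```

Because the kink sits at a single point, gradient-based training generally behaves as if the function were differentiable everywhere.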
