Risk Assessment and Ethical Impact Analysis is a critical component of responsible data science, ensuring that algorithms, data pipelines, and automated decision-making processes do not unintentionally harm individuals, communities, or societal structures.

As AI deployment grows across healthcare, finance, education, transportation, and public service systems, understanding potential risks (such as privacy violations, discriminatory outcomes, and systemic inequalities) has become essential.

Ethical impact analysis goes beyond technical evaluation and considers how models influence people’s lives, social norms, distribution of power, and economic opportunities.

It requires assessing risks along multiple dimensions, including fairness, transparency, bias, misuse, accountability gaps, and long-term societal consequences.

Ethical Impact Analysis and Risk Mitigation in Data Science

1. Identifying Potential Ethical Risks

Conducting risk assessment starts with identifying privacy, fairness, safety, and societal risks that may emerge from data collection, model behaviors, or decision outputs.

This involves examining data sources for potential biases or lack of representation, evaluating the context of model usage, and understanding how errors could disproportionately harm vulnerable populations.

Analysts must also examine risks associated with data misuse, unauthorized access, and unintended secondary uses.

By mapping out these risks early, organizations can prevent downstream harms and reduce the need for costly corrective measures later.

This step sets the foundation for ethical, transparent, and reliable AI practices.
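
One way to make the check for "lack of representation" concrete is to compare each group's share of the dataset against a reference share. The sketch below is illustrative only: the function name `representation_gaps`, the toy records, the reference shares, and the 10-point threshold are all assumptions, not part of any standard.

```python
from collections import Counter

def representation_gaps(records, group_key, reference_shares):
    """Return observed share minus reference share for each group."""
    counts = Counter(r[group_key] for r in records)
    total = sum(counts.values())
    gaps = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total if total else 0.0
        gaps[group] = observed - expected
    return gaps

# Hypothetical toy data: 8 records from group "A", 2 from group "B".
records = [{"group": "A"}] * 8 + [{"group": "B"}] * 2
# Assumed reference population shares (illustrative only).
reference = {"A": 0.6, "B": 0.4}

gaps = representation_gaps(records, "group", reference)
# Flag groups whose share falls more than 10 points below the reference.
under_represented = [g for g, gap in gaps.items() if gap < -0.10]
print(under_represented)
```

A negative gap only signals that a group is thinner in the data than expected; deciding whether that matters still depends on the context of model usage described above.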

2. Evaluating Harm to Individuals and Communities

Ethical impact analysis examines not only technical risks but also the real-world harm that models may cause—such as discriminatory credit scoring, unfair medical triage, or biased hiring decisions.

It evaluates how specific groups might be disadvantaged or excluded by model predictions.

This includes marginalized communities, people with disabilities, or economically vulnerable populations.

Understanding these impacts requires qualitative and quantitative assessments, including stakeholder discussions and impact forecasting.

This allows organizations to avoid reinforcing inequalities and ensure their systems contribute positively to social well-being.
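
For the quantitative side of this assessment, a simple starting point is to compare favourable-outcome rates across groups. The following is a minimal sketch under assumed data; `selection_rates` and `disparate_impact_ratio` are hypothetical helper names, and the toy outcomes are invented for illustration.

```python
def selection_rates(outcomes):
    """outcomes: iterable of (group, approved) pairs -> approval rate per group."""
    totals, approved = {}, {}
    for group, ok in outcomes:
        totals[group] = totals.get(group, 0) + 1
        approved[group] = approved.get(group, 0) + (1 if ok else 0)
    return {g: approved[g] / totals[g] for g in totals}

def disparate_impact_ratio(rates):
    """Lowest group rate divided by highest group rate (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0

# Hypothetical credit decisions: group A approved 6 of 10, group B 3 of 10.
outcomes = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)
rates = selection_rates(outcomes)
ratio = disparate_impact_ratio(rates)
print(rates, ratio)
```

A ratio well below 1.0 suggests one group receives favourable outcomes much less often. Some practitioners use 0.8 as a rule-of-thumb cutoff, but any threshold is a judgment call, and a single metric does not replace the qualitative stakeholder input mentioned above.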

3. Scenario-Based Risk Forecasting

Scenario modeling explores best-case, worst-case, and edge-case outcomes of AI deployment.

It helps teams anticipate rare but damaging events such as biased model drift, data leakage incidents, or harmful decision loops.

These scenarios also help identify adversarial threats, such as model manipulation or malicious data poisoning.

By forecasting multiple possible futures, organizations design more resilient systems that remain ethical and secure under unpredictable conditions.

It also promotes responsible innovation by encouraging thoughtful planning rather than rushed deployment.
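
Scenario forecasting is often recorded in a lightweight risk register. The sketch below scores each scenario as likelihood times severity on assumed 1 to 5 scales; the `Scenario` class, the scales, the scores assigned to each scenario, and the threshold of 10 are all illustrative assumptions.

```python
from dataclasses import dataclass

@dataclass
class Scenario:
    name: str
    likelihood: int  # 1 (rare) to 5 (almost certain), assumed scale
    severity: int    # 1 (minor) to 5 (critical), assumed scale

    @property
    def score(self) -> int:
        return self.likelihood * self.severity

def prioritize(scenarios, threshold=10):
    """Return scenarios at or above the threshold, highest score first."""
    flagged = [s for s in scenarios if s.score >= threshold]
    return sorted(flagged, key=lambda s: s.score, reverse=True)

# Scenario names come from the text; the numbers are invented for illustration.
scenarios = [
    Scenario("biased model drift", likelihood=3, severity=4),
    Scenario("data leakage incident", likelihood=2, severity=5),
    Scenario("harmful decision loop", likelihood=2, severity=3),
    Scenario("malicious data poisoning", likelihood=1, severity=5),
]

for s in prioritize(scenarios):
    print(s.name, s.score)
```

Note that a multiplicative score ranks rare but critical events (such as the data poisoning entry here) low, so teams may also want to review every scenario with maximum severity regardless of its score.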

4. Stakeholder and User Impact Consultation

Ethical impact analysis requires listening to those directly affected by AI decisions—customers, employees, subjects of profiling systems, or community groups.

Stakeholder consultation uncovers concerns that technical teams might overlook, especially regarding lived experiences and localized socio-cultural contexts.

Such engagement fosters transparency, accountability, and trust between organizations and the communities they serve.

It also ensures that design choices reflect diverse needs rather than assumptions made by technical teams, reducing ethical blind spots.

5. Establishing Ethical Risk Mitigation Strategies

After risks are identified, teams must design safeguards such as fairness constraints, differential privacy, human-in-the-loop oversight, or strict access control protocols.

Mitigation strategies aim to reduce harm while maintaining model performance and utility.

This includes revising data pipelines, enhancing documentation, applying robustness tests, or implementing explainability tools.

A strong mitigation plan ensures that models operate responsibly under various conditions and that ethical obligations are met consistently throughout the system lifecycle.
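
Of the safeguards listed above, differential privacy is the easiest to show in a few lines. The sketch below applies the Laplace mechanism to a counting query, assuming that adding or removing one person changes the count by at most 1 (sensitivity 1). The function names and the toy ages are hypothetical, and this is a teaching sketch rather than a production-grade implementation.

```python
import random

def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_count(values, predicate, epsilon, rng=None):
    """Noisy count; with sensitivity 1 the noise scale is 1 / epsilon."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if rng is None:
        rng = random.Random()
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)

# Hypothetical ages; the true number of people aged 65 or over is 3.
ages = [23, 67, 45, 71, 80, 34]
noisy = private_count(ages, lambda a: a >= 65, epsilon=1.0, rng=random.Random(42))
print(round(noisy, 2))
```

Smaller values of `epsilon` add more noise and give stronger privacy at the cost of accuracy, which is one instance of the trade-off between harm reduction and model utility noted above.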

6. Integrating Compliance and Regulatory Alignment

Risk assessment includes evaluating whether systems comply with laws and regulations such as the GDPR and the EU AI Act, as well as with emerging AI governance standards.

Ethical impact analysis checks whether data usage aligns with consent requirements, retention limits, and transparency obligations.

Compliance reduces legal risk and enhances organizational credibility.

It also ensures that evolving regulatory expectations are incorporated into ongoing system development, preventing future liabilities or public backlash.
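
Checks on consent and retention limits can be partly automated. The sketch below flags records that lack consent, have an unrecognized purpose, or are older than an assumed retention period. The record fields, the `RETENTION_DAYS` table, and the periods in it are hypothetical; real limits come from policy and legal review, not from code.

```python
from datetime import date, timedelta

# Assumed, illustrative retention periods in days, keyed by processing purpose.
RETENTION_DAYS = {"marketing": 365, "support_ticket": 730}

def records_needing_review(records, today):
    """Return ids of records that lack consent, have an unknown purpose,
    or were collected longer ago than the assumed retention period."""
    flagged = []
    for rec in records:
        limit = RETENTION_DAYS.get(rec["purpose"])
        if limit is None or not rec["consent"]:
            flagged.append(rec["id"])
        elif today - rec["collected"] > timedelta(days=limit):
            flagged.append(rec["id"])
    return flagged

# Hypothetical records for illustration.
records = [
    {"id": 1, "purpose": "marketing", "collected": date(2023, 1, 1), "consent": True},
    {"id": 2, "purpose": "support_ticket", "collected": date(2023, 1, 1), "consent": True},
    {"id": 3, "purpose": "marketing", "collected": date(2024, 5, 1), "consent": False},
    {"id": 4, "purpose": "analytics", "collected": date(2024, 5, 1), "consent": True},
]
print(records_needing_review(records, today=date(2024, 6, 1)))
```

A script like this only surfaces candidates for review; whether a given record actually violates a legal obligation remains a question for compliance staff.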

7. Monitoring Long-Term Societal Impact

Ethical impact analysis is not limited to immediate risks; it examines how systems influence long-term societal patterns such as job displacement, surveillance expansion, or social inequality.

This involves considering cumulative impacts across multiple deployments and understanding how certain demographics may face amplified consequences.

Continuous monitoring ensures AI remains beneficial and does not slowly reinforce harmful systemic biases over time.

It also helps organizations adapt to changing norms and expectations.
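
Continuous monitoring can start with something as simple as tracking the gap in favourable-outcome rates between groups over time. The sketch below raises an alert when the gap exceeds a tolerance; the function name `gap_alerts`, the quarterly figures, and the 0.10 tolerance are assumptions chosen for illustration.

```python
def gap_alerts(rate_history, tolerance=0.10):
    """rate_history: list of (period, {group: favourable-outcome rate}).
    Returns (period, gap) for each period whose max-min gap exceeds tolerance."""
    alerts = []
    for period, rates in rate_history:
        gap = max(rates.values()) - min(rates.values())
        if gap > tolerance:
            alerts.append((period, round(gap, 3)))
    return alerts

# Hypothetical history in which the gap between groups widens each quarter.
history = [
    ("2024-Q1", {"A": 0.52, "B": 0.50}),
    ("2024-Q2", {"A": 0.55, "B": 0.48}),
    ("2024-Q3", {"A": 0.58, "B": 0.44}),
]
alerts = gap_alerts(history)
print(alerts)
```

In this toy history no single quarter looks dramatic, yet the gap grows from 2 to 14 percentage points, which is the kind of slow reinforcement of bias that a one-time audit would miss.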

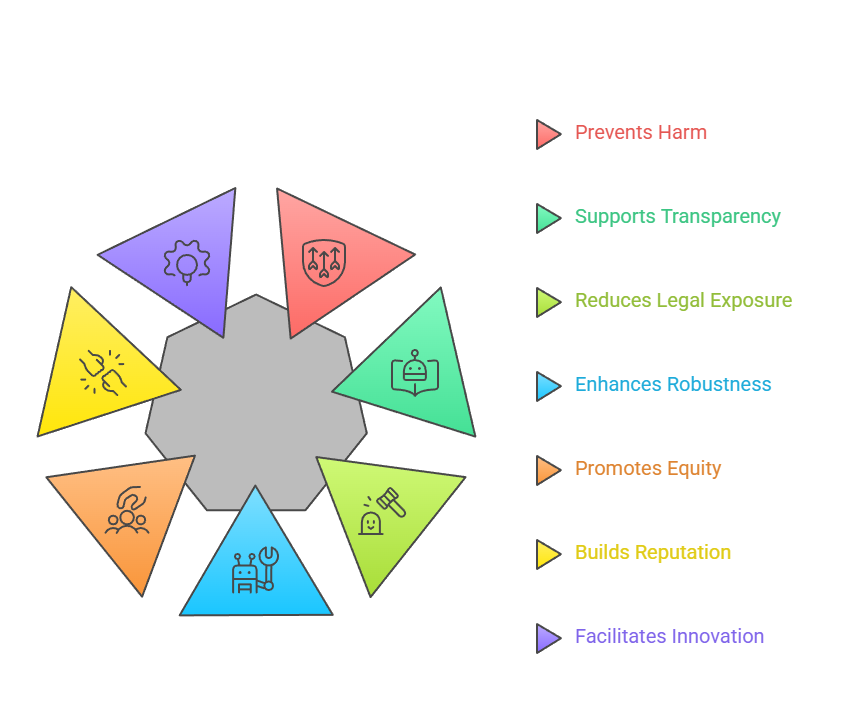

Importance of Risk Assessment & Ethical Impact Analysis

1. Prevents Unintended Harm

Ethical analysis helps ensure that systems do not produce harmful outcomes for marginalized groups, reduce access to opportunities, or reinforce stereotypes.

By anticipating risks early, organizations avoid public scandals, reputational damage, and loss of user trust.

This proactive approach protects individuals’ rights and dignity.

2. Supports Transparent and Trustworthy AI

Transparent risk evaluation helps users understand how decisions are made, which builds trust.

Organizations that clearly communicate risks and safeguards are more likely to gain public acceptance and regulatory approval.

This transparency also improves internal accountability.

3. Reduces Legal and Regulatory Exposure

Ethical impact assessments help organizations comply with privacy, discrimination, and algorithmic accountability laws.

By proactively documenting risks and mitigations, companies reduce the likelihood of lawsuits, penalties, and compliance failures.

4. Enhances Model Robustness and Reliability

Risk identification leads to more resilient systems that withstand unexpected conditions, adversarial attacks, or model drift.

Ethical assessment thus improves a system's technical performance as well as its ethical standing.

5. Promotes Equity and Inclusion

Ethical impact analysis identifies how different communities are affected by AI decisions, helping ensure fair outcomes.

It prevents systematic disadvantage and promotes inclusive system design.

6. Builds Organizational Reputation and Public Trust

Companies known for ethical governance attract customers, partners, and talent.

Ethical diligence reduces the risk of public controversy, increasing long-term institutional credibility.

7. Facilitates Responsible Innovation

Risk assessment empowers organizations to innovate without compromising safety or ethics.

It encourages creativity while establishing guardrails that prevent irresponsible experimentation.

Challenges in Risk Assessment & Ethical Impact Analysis

1. Difficulty Predicting Long-Term Outcomes

Many AI systems evolve unpredictably due to data drift, user behavior changes, or external factors.

Organizations struggle to forecast long-term societal, cultural, or economic impacts. This uncertainty makes it challenging to design complete mitigation strategies.

2. Limited Availability of High-Quality, Fair Data

Biases in real-world datasets make risk assessment difficult, as flawed data can obscure ethical issues or produce misleading analysis.

Under-representation of minority groups makes it hard to detect differential harms early.
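
The effect of under-representation on detection can be seen in the width of a confidence interval. The sketch below uses the Wilson score interval for a proportion; the sample sizes and error counts are invented, and `wilson_interval` is a hypothetical helper written for this example.

```python
import math

def wilson_interval(successes, n, z=1.96):
    """Approximate 95% Wilson score interval for a proportion."""
    if n == 0:
        return (0.0, 1.0)
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return (centre - half, centre + half)

# Same observed error rate (20%), very different sample sizes (assumed figures).
small_group = wilson_interval(4, 20)       # under-represented group
large_group = wilson_interval(400, 2000)   # well-represented group

print("small group:", small_group)
print("large group:", large_group)
```

With only 20 records, the plausible range for the small group's error rate is several times wider than for the large group, so a real difference in harm between groups can be hard to distinguish from noise.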

3. Lack of Standardized Ethical Assessment Frameworks

Organizations often operate without consistent ethical guidelines or risk review processes.

This leads to varied interpretations of what constitutes "acceptable risk," complicating evaluation and accountability efforts.

4. Conflicts Between Business Objectives and Ethics

Commercial pressures often emphasize speed, profitability, or model accuracy over ethical considerations. Teams may deprioritize risk evaluations due to deadlines or competitive pressures.

5. Difficulty Communicating Risks Across Teams

Technical teams may struggle to communicate ethical concerns to executives, policy teams, or non-technical stakeholders.

This misalignment slows decision-making and weakens governance effectiveness.

6. Limited Stakeholder Participation

Engaging diverse stakeholder groups can be logistically challenging.

Without proper consultation, ethical blind spots remain undetected, leading to incomplete risk evaluations.
