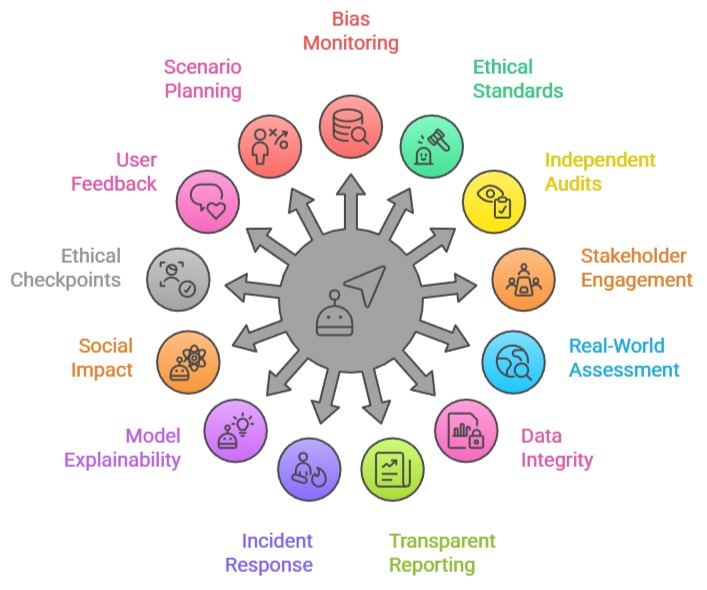

Continuous evaluation of ethical implications refers to the ongoing process of monitoring, assessing, and improving the ethical impact of data science systems throughout their lifecycle—from data collection to deployment and long-term use.

Since AI models evolve, environments change, and data patterns shift, ethical risks cannot be addressed just once at the beginning; they require constant oversight.

Modern data science applications—such as recommender systems, predictive policing, medical algorithms, or financial risk models—can unintentionally reinforce biases, threaten privacy, or cause societal harm if not regularly reassessed.

Continuous evaluation ensures that issues like unfair outcomes, model drift, discrimination, misuse, transparency gaps, and unintended consequences are identified early and corrected responsibly.

It also allows organizations to adapt to evolving laws, community expectations, user needs, and new risks emerging from real-world deployment.

Ethical evaluation is not a one-time audit but a sustained practice involving data scientists, product managers, legal teams, and domain experts.

Continuous Monitoring, Auditing, and Accountability in AI

1. Monitoring Models for Bias and Drift

Continuous evaluation includes real-time monitoring of models to detect bias, unfair treatment, or performance degradation.

Over time, data distributions change (called model drift), which can result in inaccurate or discriminatory predictions.

Tracking these shifts ensures models stay aligned with ethical standards and do not unintentionally harm specific groups.

Automated dashboards, fairness metrics, and performance logs help identify discrepancies early.

This constant oversight allows data scientists to retrain or update the model before issues escalate.

Monitoring also reveals hidden trends or ethical risks that were not visible during testing. Ultimately, continuous evaluation protects users from unfair or unsafe model behavior.

2. Updating Ethical Standards Based on Regulations and Industry Trends

Laws and regulations such as GDPR, CCPA, and AI Act are continually updated, requiring AI systems to adapt to new compliance rules.

Continuous evaluation ensures that models do not violate emerging privacy, transparency, or fairness guidelines.

Industry standards like ISO/IEC AI governance frameworks also evolve, demanding regular reassessment of internal policies.

Ethical norms shift as society becomes more aware of AI risks, so organizations must keep their ethical frameworks current.

This approach prevents legal penalties, public backlash, and operational disruptions.

As expectations evolve, ethical evaluation helps maintain alignment with global best practices.

3. Conducting Periodic Independent Audits

In addition to internal review, external audits provide unbiased evaluation of ethical risks.

Independent auditors can identify flaws that internal teams may overlook due to familiarity or organizational pressure.

These audits assess everything from data governance to fairness testing, model documentation, privacy safeguards, and transparency practices.

Periodic audits help ensure that AI systems are inspected from diverse perspectives, increasing credibility.

They also demonstrate accountability to regulators, customers, and partners. Continuous auditing fosters a culture of responsibility rather than one-time compliance checks.

4. Engaging Stakeholders and Impacted Communities

Continuous ethical evaluation includes actively collecting feedback from users, affected communities, and external stakeholders.

Many ethical risks only become visible when real users interact with the system in dynamic environments.

Community involvement helps teams understand cultural, social, and contextual impacts that quantitative metrics may miss.

Stakeholder feedback reveals concerns about fairness, trust, privacy, usability, or psychological harm.

Integrated feedback loops ensure AI evolves responsibly and remains aligned with societal expectations.

This engagement builds transparency, reduces harm, and improves long-term adoption.

5. Assessing Real-World Consequences After Deployment

While pre-deployment testing predicts risks, real-world deployment often reveals entirely new ethical consequences.

Continuous evaluation helps identify emergent harms—such as disproportionate errors, feedback loops, or misinterpretations of results.

For instance, predictive policing systems may reinforce crime patterns if not regularly reassessed.

Real-world analysis ensures that model decisions improve user welfare rather than worsen inequalities.

This kind of evaluation transforms AI from a static tool into a dynamically managed system that adapts responsibly.

6. Ensuring Data Quality, Security, and Privacy Over Time

Ethical evaluation includes reviewing whether data remains accurate, representative, and securely stored throughout the system’s life.

Poor data quality can create biased or unreliable outputs, making ongoing data hygiene essential.

Security audits ensure no sensitive information becomes vulnerable to breaches or misuse.

As new data is added, privacy expectations may change, requiring new anonymization or encryption methods. Continuous monitoring helps address these evolving risks proactively.

7. Transparent Documentation and Reporting

Continuous evaluation requires clear and updated documentation covering model behavior, versions, decisions, and ethical assessments.

Transparent reporting builds trust and helps auditors understand how models evolve.

Documentation creates accountability by clearly recording biases found, actions taken, and improvements made.

It also supports cross-team collaboration, enabling legal, ethical, and technical teams to work cohesively.

Over time, transparency reduces confusion, improves explainability, and strengthens governance.

8. Establishing an Ethical Incident Response Plan

Continuous evaluation involves preparing for potential ethical failures and setting up protocols for quick response.

Incident response plans outline procedures for identifying issues, halting harmful models, notifying stakeholders, and correcting errors.

This proactive structure reduces damage when ethical failures occur. Incident analysis also provides insights for improving future systems.

This approach ensures that the organization is not reactive but strategically prepared to handle ethical challenges.

9. Continual Review of Model Explainability and Interpretability

Continuous ethical evaluation includes reassessing how understandable and interpretable a model remains as it evolves.

Models often grow more complex during retraining, which can reduce the clarity of decision-making processes.

Ensuring consistent interpretability is essential for informed consent, trust-building, and regulatory compliance.

Techniques like SHAP, LIME, and counterfactual explanations must be updated to reflect the latest model versions.

If explanations degrade over time, users may misunderstand risks or misinterpret predictions.

Regular interpretability reviews help address transparency gaps promptly. This approach ensures that AI is accountable and explainable at every stage of its lifecycle.

10. Evaluating the Long-Term Social Impact of AI Systems

Some ethical risks only become visible after prolonged use—not during initial testing.

Continuous evaluation helps uncover long-term societal impacts such as discrimination, polarization, exclusion, or harmful behavioral shifts.

For Example, recommender systems may create addictive patterns or reinforce ideological echo chambers.

Long-term impact monitoring ensures AI serves public well-being rather than causing subtle but widespread harm.

This evaluation requires interdisciplinary collaboration with sociologists, psychologists, and policy experts.

Ongoing analysis helps ensure technological benefits remain balanced with societal values.

11. Implementing Ethical Checkpoints Across the ML Pipeline

Continuous evaluation is strengthened by embedding ethical checkpoints at data collection, preprocessing, modeling, deployment, and maintenance stages.

Each checkpoint ensures that no stage introduces new risk—such as biased sampling or unethical feature engineering.

Checklists and governance frameworks help teams document ethical considerations at each milestone.

These checkpoints create traceability and accountability throughout the pipeline.

Ethical reviews become part of routine workflows rather than afterthoughts. This fosters a culture where ethics is a continuous responsibility rather than a compliance requirement.

12. Integrating User Feedback and Incident Reports into Model Updates

Users often detect harms or inconsistencies before developers do.

Continuous evaluation encourages the integration of user complaints, feedback, or anomaly reports into model improvement cycles.

This feedback loop helps identify real-world ethical issues that metrics may overlook. Incident logs are analyzed to understand patterns of bias, misinformation, or safety risks.

As feedback accumulates, the system evolves ethically to meet user expectations. This user-centered approach keeps the model’s behavior aligned with human values and practical needs.

13. Scenario Planning and Ethical Stress Testing

Continuous evaluation includes testing extreme or unexpected scenarios to assess potential failures.

Ethical stress-testing examines how models behave under adversarial inputs, shifting environments, or unusual user behaviors.

This prevents catastrophic failures, such as discriminatory outputs or security breaches.

Scenario planning helps identify blind spots that standard testing misses. This forward-thinking approach ensures resilience, fairness, and safety under real-world variability.

Real-World Case Studies of Ethical Evaluation

Case Study 1: Google Photos’ Image Labeling Failure (2015–Ongoing)

Google Photos’ image recognition system once mislabeled Black individuals as “gorillas.”

Although the model was immediately patched, Google continued to evaluate its ML systems for years to prevent similar racial misclassification.

The case showed that one-time fixes are insufficient—continuous ethical auditing is required.

Post-incident evaluations included diverse data collection, regular fairness audits, and testing with broader demographic samples.

This case emphasized ongoing bias detection, long-term monitoring, and transparent improvement cycles.

Case Study 2: LinkedIn’s AI Recruiter Fairness Evaluation (2019–Present)

LinkedIn discovered that its job recommendation system favored male candidates over female candidates due to historical hiring biases.

The company conducted continuous ethical evaluations involving fairness metrics, explainability checks, and bias-mitigation retraining.

Rather than a single audit, LinkedIn implemented an ongoing fairness pipeline that revalidates models after every update.

This long-term approach improved model equity and set new industry standards for ethical HR analytics.

Case Study 3: Amazon’s Failed Hiring Algorithm (2014–2017)

Amazon scrapped its hiring algorithm after discovering it consistently penalized women’s resumes. Initially, the system passed technical evaluations but failed ethically once deployed on real data.

Continuous evaluation revealed that the model adapted bias from historical male-dominant data.

Amazon’s failure highlighted the need for ongoing monitoring even after deployment, because ethical issues can emerge as models observe new patterns.

The company shifted toward human-AI hybrid hiring assessments and implemented long-term fairness governance.

Case Study 4: Twitter/X Image Cropping Algorithm Bias (2020–2021)

Users discovered that Twitter’s automatic image cropping algorithm favored lighter-skinned faces and men over women.

After public scrutiny, Twitter conducted months of continuous ethical evaluations, testing thousands of images across ethnicities, genders, and facial features.

Regular audits showed skewed saliency detection, prompting Twitter to remove automated cropping entirely.

This case illustrates how continuous evaluation—not one-time testing—reveals hidden real-world harms.

Case Study 5: Spotify’s Recommendation Bias & Behavioral Impact Reviews

Spotify continuously evaluates its recommender algorithms for psychological, behavioral, and fairness impacts.

Ethical evaluations revealed that recommending similar genres repeatedly creates “musical bubbles” that reduce exposure to diverse artists.

Updated practices now include long-term impact analysis, feedback loops, and diversity-enhancing recommendation strategies.

This ongoing evaluation addresses both fairness and societal influence.

Class Sessions

Sales Campaign

We have a sales campaign on our promoted courses and products. You can purchase 1 products at a discounted price up to 15% discount.