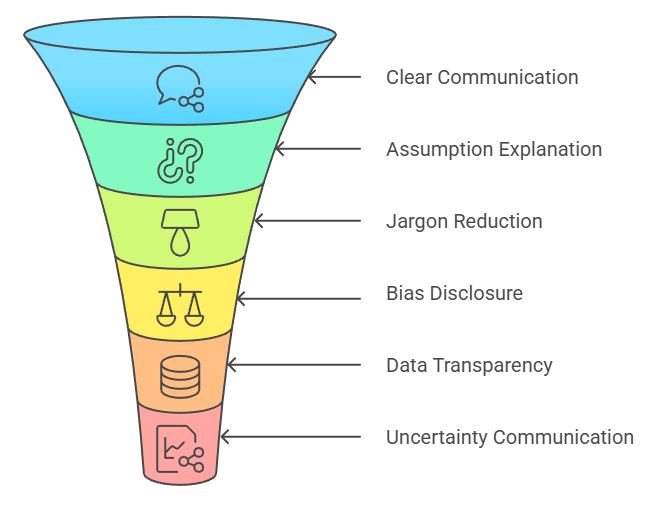

Responsible communication and transparency are essential ethical principles in data science because they ensure that insights, models, and decisions are shared accurately, clearly, and honestly with all stakeholders.

Data science outcomes influence business strategy, public policies, financial decisions, customer experiences, and even legal judgments.

Therefore, data scientists must communicate not only technical results but also the limitations, assumptions, uncertainties, and risks associated with them.

Transparency ensures that users and decision-makers understand how insights were generated and prevents blind trust in models that may contain errors or biases.

At a time when many machine learning systems operate as “black boxes,” transparent communication becomes even more critical.

It bridges the gap between technical teams and non-technical stakeholders, enabling better decision-making, reducing confusion, and preventing misinterpretation of analytical results.

Communicating Data Science Methods and Results Transparently

1. Communicating Results Clearly and Honestly

Data scientists must communicate their findings in a way that is truthful, precise, and easy to understand for non-technical stakeholders.

This includes avoiding exaggeration, misleading claims, or selective presentation of results that favor a certain narrative.

Clear communication means explaining what the analysis shows, what it does not show, and what uncertainties still exist.

Visualizations should be accurate and avoid manipulation through scale distortion or deceptive design choices.

Honesty helps prevent flawed decisions that could arise from misunderstood or misrepresented insights.

Ethical communication builds long-term trust and positions analytics as a reliable decision-making tool.

2. Explaining Assumptions, Limitations, and Risks

Every model is built on assumptions about data quality, sampling methods, variable selection, and algorithmic constraints.

Data scientists must clearly explain these assumptions to ensure stakeholders understand where the model might fail.

Similarly, limitations such as missing data, low sample sizes, biased sources, or model instability must be openly disclosed.

Transparency also requires discussing risks such as potential bias, privacy concerns, or unintended consequences of deploying the model.

Explaining limitations prevents blind over-reliance on analytics and encourages responsible decision-making. This level of clarity ensures the ethical and safe use of data-driven insights.

3. Avoiding Technical Jargon and Improving Interpretability

Responsible communication means translating complex technical concepts—like hyperparameters, overfitting, or model drift—into language that non-experts can easily understand.

Overuse of jargon alienates stakeholders and increases the risk of misinterpretation.

Ethical communication requires simplifying explanations without losing accuracy, possibly using analogies, examples, or visual aids.

Interpretability also involves choosing model types or tools (e.g., SHAP, LIME) that make predictions transparent when needed.
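As a rough illustration of what a model-agnostic interpretability check does, the sketch below uses permutation importance, a simpler technique than SHAP or LIME, swapped in here because it needs only the standard library. The `predict` function, the feature names, and the data are all hypothetical; the idea is only that shuffling a feature the model relies on should hurt its predictions more than shuffling one it ignores.

```python
import random

def predict(row):
    """Hypothetical scoring model: income matters a lot, age a little, noise not at all."""
    return 0.8 * row["income"] + 0.1 * row["age"] + 0.0 * row["noise"]

def mean_abs_error(rows, targets):
    """Average absolute gap between model output and target."""
    return sum(abs(predict(r) - t) for r, t in zip(rows, targets)) / len(rows)

def permutation_importance(rows, targets, feature, rng, repeats=20):
    """Average rise in error when one feature's values are shuffled across rows."""
    baseline = mean_abs_error(rows, targets)
    increases = []
    for _ in range(repeats):
        shuffled = [r[feature] for r in rows]
        rng.shuffle(shuffled)
        permuted = [{**r, feature: v} for r, v in zip(rows, shuffled)]
        increases.append(mean_abs_error(permuted, targets) - baseline)
    return sum(increases) / repeats

rng = random.Random(0)  # fixed seed so the run is repeatable
rows = [
    {"income": rng.uniform(20, 100), "age": rng.uniform(18, 70), "noise": rng.uniform(0, 1)}
    for _ in range(200)
]
# Synthetic targets generated by the model itself, so the baseline error is zero.
targets = [predict(r) for r in rows]

importance = {f: permutation_importance(rows, targets, f, rng) for f in ("income", "age", "noise")}
for name, score in sorted(importance.items(), key=lambda kv: -kv[1]):
    print(f"{name}: error rises by {score:.2f} when shuffled")
```

A ranked list like this can be easier to present to a non-technical audience than raw coefficients, provided it comes with the caveat that importance scores describe the model, not cause and effect in the real world.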

Making models understandable empowers decision-makers and ensures accountability.

Clarity in communication strengthens cross-functional collaboration and reduces stakeholder frustration.

4. Disclosing Biases and Fairness Concerns in Models

Data scientists must transparently communicate any detected biases in the dataset or algorithm, along with how these biases may influence outcomes.

This includes describing any known disparities across gender, race, region, or socioeconomic status.

Ethical communication requires describing the fairness metrics used, the mitigation steps taken, and the limitations of those interventions.

Bringing these concerns forward prevents organizations from inadvertently deploying discriminatory systems.

Openness around bias also enables stakeholders to evaluate the ethical trade-offs involved. Transparency fosters fairness and reduces harm to vulnerable populations.
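To make the idea of reporting fairness metrics concrete, here is a minimal sketch that computes two common ones, the demographic parity difference and the disparate impact ratio, from a list of decisions. The group labels and approval counts are made up for illustration, and real assessments usually need more than these two numbers.

```python
from collections import defaultdict

def selection_rates(records):
    """Share of positive decisions per group. Each record is (group, approved)."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        positives[group] += int(approved)
    return {g: positives[g] / totals[g] for g in totals}

# Made-up decisions: group A is approved 60 times out of 100, group B 30 out of 100.
records = (
    [("A", True)] * 60 + [("A", False)] * 40
    + [("B", True)] * 30 + [("B", False)] * 70
)

rates = selection_rates(records)
# Demographic parity difference: gap between the highest and lowest selection rate.
parity_difference = max(rates.values()) - min(rates.values())
# Disparate impact ratio: lowest selection rate divided by the highest.
impact_ratio = min(rates.values()) / max(rates.values())

for group, rate in sorted(rates.items()):
    print(f"group {group}: selection rate {rate:.2f}")
print(f"parity difference: {parity_difference:.2f}")
print(f"disparate impact ratio: {impact_ratio:.2f}")
```

A ratio below 0.8 is often used as a screening threshold (the "four-fifths rule"), but it is a prompt for further investigation rather than proof of discrimination, and that caveat belongs in the report alongside the number.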

5. Ensuring Transparency in Data Sources and Methods

Transparency requires disclosing where the data came from, how it was collected, and what preprocessing steps were applied.

Stakeholders should know whether data was acquired ethically, whether consent was obtained, and whether any sensitive attributes were used.

Communicating methodology—feature selection, model choice, validation strategy, and performance metrics—helps stakeholders verify the model’s integrity.

Transparency also prevents accusations of manipulation or data misuse.

Clear documentation enables audits, reproducibility, and compliance with regulatory standards, which strengthens organizational accountability.
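One lightweight way to keep this documentation consistent is a structured record stored next to the model. The sketch below is an assumption about what such a record might contain; the `ModelCard` class, its field names, and every value in the example are hypothetical and should be adapted to the organization's own audit requirements.

```python
import json
from dataclasses import dataclass, field, asdict

@dataclass
class ModelCard:
    """Hypothetical documentation record covering data sources and methods."""
    model_name: str
    data_source: str
    collection_method: str
    consent_obtained: bool
    sensitive_attributes_used: list = field(default_factory=list)
    preprocessing_steps: list = field(default_factory=list)
    validation_strategy: str = ""
    metrics: dict = field(default_factory=dict)
    known_limitations: list = field(default_factory=list)

# Illustrative values only.
card = ModelCard(
    model_name="churn-classifier-v1",
    data_source="internal CRM export",
    collection_method="account activity logged with user consent",
    consent_obtained=True,
    sensitive_attributes_used=[],
    preprocessing_steps=["dropped rows with missing tenure", "standardized numeric features"],
    validation_strategy="5-fold cross-validation",
    metrics={"accuracy": 0.87, "recall": 0.71},
    known_limitations=["trained on one region only", "no data from the most recent quarter"],
)

# Serialize to JSON so the record can be versioned and audited with the model.
card_json = json.dumps(asdict(card), indent=2)
print(card_json)
```

Because the record is plain JSON, it can be reviewed by auditors and non-technical stakeholders without access to the training code.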

6. Communicating Uncertainty and Avoiding Overconfidence

Data science results always carry uncertainty, whether due to data variability, modeling assumptions, or real-world unpredictability.

Ethical communication requires quantifying and clearly explaining uncertainty—through confidence intervals, error rates, prediction ranges, and scenario analysis.
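As one possible way to produce such an interval, the sketch below computes a percentile bootstrap confidence interval for a mean using only the standard library. The weekly revenue figures are invented, and the percentile bootstrap is just one of several reasonable methods; with samples this small the interval is itself only approximate.

```python
import random
import statistics

def bootstrap_ci(values, stat=statistics.mean, n_resamples=2000, confidence=0.95, seed=0):
    """Percentile bootstrap interval for a statistic of the sample."""
    rng = random.Random(seed)  # fixed seed so the result is repeatable
    estimates = sorted(
        stat(rng.choices(values, k=len(values)))  # resample with replacement
        for _ in range(n_resamples)
    )
    alpha = (1 - confidence) / 2
    lower = estimates[int(alpha * n_resamples)]
    upper = estimates[int((1 - alpha) * n_resamples) - 1]
    return lower, upper

# Invented weekly revenue figures (in thousands).
weekly_revenue = [102, 98, 110, 95, 105, 120, 99, 101, 97, 108, 93, 115]

point_estimate = statistics.mean(weekly_revenue)
lower, upper = bootstrap_ci(weekly_revenue)
print(f"mean weekly revenue: {point_estimate:.1f}")
print(f"95% bootstrap interval: {lower:.1f} to {upper:.1f}")
```

Reporting the range alongside the point estimate lets a decision-maker see at a glance how much the figure could plausibly move.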

Data scientists must avoid presenting models as perfect or definitive, especially in high-risk domains such as healthcare or finance.

Acknowledging uncertainty prevents unrealistic expectations and encourages cautious decision-making.

Responsible communication helps stakeholders understand the limits of predictions and reduces the risk of harmful consequences.

7. Providing Actionable and Ethical Recommendations

Responsible communication involves delivering not just results but also practical, ethical, and balanced recommendations.

Data scientists must consider the social implications of their suggestions and avoid advocating for actions that compromise privacy, fairness, or user rights.

Recommendations should include ethical alternatives, risk mitigations, and long-term considerations.

They must be presented in a way that helps leaders make responsible decisions rather than simply following algorithmic outputs.

Offering nuanced and ethical guidance reinforces trust and positions data scientists as strategic partners rather than just technical analysts.

Real-World Cases of Responsible Communication & Transparency

Real-world cases show that failures in communication and transparency can be as damaging as technical flaws in data and AI systems. Clear, honest, and timely communication about data use, model logic, risks, and limitations is essential for maintaining public trust and accountability.

1. Cambridge Analytica & Facebook Scandal (2018)

Cambridge Analytica harvested data from up to an estimated 87 million Facebook users without informed consent and used psychographic profiling for political targeting.

The major ethical issue was not just data misuse but the lack of transparency about how user data was being collected, analyzed, and applied.

Facebook failed to communicate the risks and scope of data access to users and regulators, causing massive public distrust.

This case underscores that transparent communication is crucial when explaining data usage policies and the downstream effects of AI-driven targeting.

It also highlights the need for responsible disclosure when algorithms impact public opinion, elections, and democracy.

2. Apple Card Gender Bias Controversy (2019)

Users reported that Apple’s credit card algorithm assigned significantly lower credit limits to women, even when they had stronger financial histories than men.

The communication failure occurred because Apple and Goldman Sachs did not explain how the model made decisions or what variables were used.

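Stakeholders were left confused, and regulators demanded transparency. Without a clear explanation, many observers assumed intentional discrimination. A later review by New York's financial regulator reported no evidence of unlawful discrimination but criticized shortcomings in transparency and customer communication.

The case shows that communicating model logic, fairness assessments, and evaluation methods can prevent reputational harm and build trust.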

3. Zillow Home Pricing Algorithm Collapse (2021)

Zillow’s AI-based home-buying model overestimated real estate prices, leading the company to purchase thousands of overpriced houses and suffer more than $300 million in losses.

The organizational failure stemmed from poor communication about model uncertainty and risk assumptions.

Data scientists had reportedly warned leadership about volatility and low confidence in predictions, but these warnings were not communicated clearly or acted upon.

This case illustrates the need for transparent communication of model limitations, confidence intervals, and worst-case scenarios—especially when multi-billion-dollar decisions rely on predictions.

4. Uber Self-Driving Car Accident (2018)

A self-driving Uber test vehicle struck and killed a pedestrian after its perception system failed to classify her correctly. Investigations also pointed to failures in communication between engineering teams, safety teams, and executives.

Model risk, detection uncertainty, and safety concerns had not been adequately communicated to decision-makers.

Misalignment and lack of transparency about model limitations contributed to a tragic outcome.

This highlights the importance of transparent reporting about model risks, especially in safety-critical systems.

5. UK A-Level Grading Algorithm Failure (2020)

When exams were canceled during COVID-19, the UK used an algorithm to predict student grades.

The system downgraded many students unfairly, especially those from disadvantaged areas.

The failure occurred because authorities did not communicate how the algorithm worked, what data it used, or the biases it might create.

Students, parents, and schools felt blindsided, leading to nationwide protests and a reversal of the decision.

This case stresses the importance of openly communicating model criteria, fairness challenges, and potential bias before deployment.

Major Communication Failures in Data Science

Major communication failures in data science occur when insights are presented without clarity, context, or honesty. These failures show why transparent reporting, clear explanations, and ethical communication are just as critical as technical accuracy in data-driven decision-making.

1. Not Reporting Uncertainty or Error Levels

Presenting predictions as absolute truths is a major ethical mistake.

For example, forecasting revenue without confidence intervals creates unrealistic expectations and pressures teams into wrong strategies.

Failure to communicate uncertainty often leads to costly decisions, such as overinvesting or misallocating resources.

2. Hiding Bias or Negative Findings

Sometimes analysts only present favorable results to please leadership or avoid criticism.

But hiding bias, skewed data, or weak performance is unethical and risky.

This failure causes stakeholders to assume the model is reliable when, in reality, it may discriminate or fail catastrophically in real use.

3. Misleading Visualizations

Charts with distorted axes, incomplete labels, cherry-picked time ranges, or manipulated scales misrepresent the truth.

Organizations may unintentionally make harmful decisions because a visualization “looked good” but hid important details.

Visual deception—intentional or accidental—breaks ethical transparency.
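The effect of a truncated axis can be quantified with Tufte's "lie factor": the size of the effect shown in the graphic divided by the size of the effect in the data. The sketch below applies it to two bars in a bar chart; the values and the `axis_start` parameter are illustrative, and a lie factor near 1 indicates a faithful chart.

```python
def lie_factor(value_a, value_b, axis_start):
    """Lie factor for two bars drawn upward from a baseline at axis_start."""
    if axis_start >= min(value_a, value_b) or value_a == value_b:
        raise ValueError("baseline must sit below both values, and the values must differ")
    # Relative change in the underlying data.
    data_effect = (value_b - value_a) / value_a
    # Relative change in the drawn bar heights.
    drawn_a = value_a - axis_start
    drawn_b = value_b - axis_start
    visual_effect = (drawn_b - drawn_a) / drawn_a
    return visual_effect / data_effect

# A 10% increase (50 to 55) drawn with two different baselines.
honest = lie_factor(50, 55, axis_start=0)
truncated = lie_factor(50, 55, axis_start=45)

print(f"axis starting at 0:  lie factor {honest:.1f}")
print(f"axis starting at 45: lie factor {truncated:.1f}")
```

With the axis starting at 45, the second bar is drawn twice as tall as the first even though the data grew by only 10%, so the chart overstates the change roughly tenfold.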

4. Poor Documentation and Opaque Workflows

Models without proper documentation, audit trails, or transparency reports make it impossible for future teams to understand how decisions were made.

Lack of clear communication leads to compliance violations, data misuse, and an inability to explain model behavior to regulators or customers.

5. Failure to Communicate Data Quality Issues

When analysts don't disclose missing data, sampling bias, or errors in data collection, stakeholders trust results that may be fundamentally flawed.

This communication failure leads to decisions built on weak foundations, often resulting in financial losses or customer dissatisfaction.
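A short data quality summary attached to every analysis is one way to make such issues visible. The sketch below reports the share of missing values per column and flags columns above a threshold; the column names, the rows, and the 25% threshold are all assumptions chosen for illustration.

```python
def missing_rates(rows, columns):
    """Fraction of rows where each column is absent, None, or an empty string."""
    n = len(rows)
    return {
        c: sum(1 for r in rows if r.get(c) in (None, "")) / n
        for c in columns
    }

# Invented customer records with gaps in age and income.
rows = [
    {"customer_id": 1, "age": 34, "income": 52000},
    {"customer_id": 2, "age": None, "income": 61000},
    {"customer_id": 3, "age": 45, "income": None},
    {"customer_id": 4, "age": None, "income": ""},
    {"customer_id": 5, "age": 29},  # income key missing entirely
]

rates = missing_rates(rows, ["customer_id", "age", "income"])
threshold = 0.25  # assumed cut-off for raising a warning
flagged = [c for c, rate in rates.items() if rate > threshold]

for column, rate in rates.items():
    print(f"{column}: {rate:.0%} missing")
print("columns to disclose to stakeholders:", flagged)
```

The flagged list is a starting point for disclosure, not a full audit: it says nothing about sampling bias or measurement errors, which still need to be described in words.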

6. Overconfidence in Model Accuracy

Communicating a model as “highly accurate” without explaining the test conditions, constraints, or real-world variability causes over-trust.

When deployed, reality often differs from controlled test environments.

Overconfidence leads to ethical breaches when models fail in unexpected scenarios.
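Two simple checks can temper a headline accuracy figure: comparing it with a majority-class baseline and attaching a confidence interval that reflects the size of the test set. The sketch below uses the Wilson score interval with invented labels and predictions; it is one reasonable choice among several, not a complete evaluation.

```python
import math

def wilson_interval(correct, total, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95%)."""
    p = correct / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return center - half, center + half

# Invented imbalanced test set: 90 negatives followed by 10 positives.
labels = [0] * 90 + [1] * 10
# Invented predictions: 88 of the negatives and 4 of the positives are correct.
predictions = [0] * 88 + [1] * 2 + [1] * 4 + [0] * 6

correct = sum(1 for y, y_hat in zip(labels, predictions) if y == y_hat)
accuracy = correct / len(labels)
# Accuracy of always predicting the most common class.
majority_baseline = max(labels.count(0), labels.count(1)) / len(labels)
low, high = wilson_interval(correct, len(labels))

print(f"accuracy: {accuracy:.2f}")
print(f"majority-class baseline: {majority_baseline:.2f}")
print(f"95% interval for accuracy: {low:.2f} to {high:.2f}")
```

In this made-up case the baseline falls inside the interval, so describing the model as "92% accurate" without that context would overstate what the test actually demonstrates.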

7. Over-reliance on Jargon

Many teams fail to simplify AI concepts, leading stakeholders to misunderstand results.

When terms like “model drift,” “L1 regularization,” or “hyperparameters” are used without explanation, decision-makers may misjudge what the results mean and how much weight to give them.

This communication gap leads to flawed business decisions, wrong deployments, or loss of trust in analytics teams.