Data privacy laws and regulations establish the legal framework that governs how organizations collect, use, store, share, and protect personal data.

As data becomes central to modern digital services, governments worldwide have implemented strict regulations to safeguard individual rights and prevent misuse of sensitive information.

These laws outline what constitutes personal data, define consent requirements, enforce transparency, and set penalties for violations.

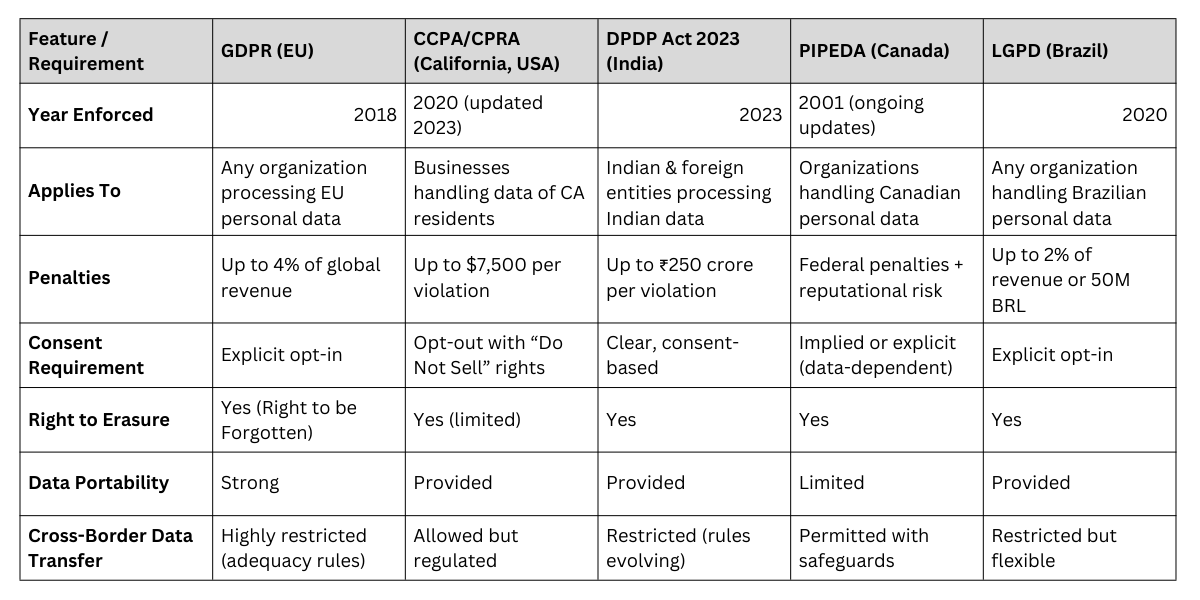

Regulations such as the General Data Protection Regulation (GDPR) in Europe, the California Consumer Privacy Act (CCPA) in the United States, the Digital Personal Data Protection (DPDP) Act, 2023 in India, and similar frameworks across Asia and the Middle East reflect a global shift toward stronger data governance.

These laws are essential because organizations today handle unprecedented volumes of user data—from browsing patterns and biometrics to financial details and location history.

Without clear regulations, companies may exploit data for profit, surveillance, or discriminatory practices.

Data privacy laws ensure accountability by mandating privacy-by-design, ethical data handling, secure storage practices, and user empowerment through rights like data access, correction, and deletion.

For data scientists, understanding these legal requirements is critical to building compliant, transparent, and trustworthy systems.

Data Privacy, Compliance, and Governance in Modern AI Systems

1. Global Evolution of Data Privacy Regulations and Why They Matter

Over the past decade, the explosive growth of digital platforms has forced governments worldwide to strengthen privacy regulations.

Laws like GDPR set global benchmarks by requiring explicit consent, clear communication of data usage, and strong user rights.

Countries including India, Brazil, Japan, and South Korea have adopted or updated comparable frameworks.

This global trend reflects a growing recognition that personal data deserves protection comparable to that given to physical property. Regulations aim to ensure that data is not collected excessively or used for unethical profiling.

For companies operating across borders, compliance requires understanding multiple legal frameworks and adapting systems accordingly.

2. Key Principles of Modern Privacy Laws (Consent, Transparency, Purpose Limitation)

Most data protection regulations share core principles aimed at protecting individuals.

Consent must be freely given, informed, and revocable at any time.

Transparency requires organizations to clearly disclose what data they collect, how it is used, and who it is shared with.

Purpose limitation ensures that data collected for one reason cannot be repurposed for an unrelated use without fresh consent or another valid legal basis.

These principles prevent hidden data harvesting, reduce misuse, and give individuals greater control.

Data scientists must incorporate these principles into data pipelines, ensuring ethical and compliant model development.
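The consent and purpose-limitation principles above can be sketched as a filter that runs before any data reaches a model. This is a minimal illustration, not a reference implementation: `ConsentRecord`, `may_process`, `filter_rows`, and the purpose names are all hypothetical, and a real system would read consent from an audited store.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentRecord:
    """Hypothetical record of what one user has agreed to."""
    user_id: str
    purposes: set = field(default_factory=set)  # purposes the user consented to
    revoked: bool = False  # set to True when the user withdraws consent


def may_process(record, purpose):
    """Purpose limitation: allow only purposes the user agreed to and has not revoked."""
    return not record.revoked and purpose in record.purposes


def filter_rows(rows, consents, purpose):
    """Keep rows whose owner has an active consent covering this purpose."""
    return [
        row for row in rows
        if row["user_id"] in consents and may_process(consents[row["user_id"]], purpose)
    ]


consents = {
    "u1": ConsentRecord("u1", {"analytics", "recommendations"}),
    "u2": ConsentRecord("u2", {"analytics"}),
    "u3": ConsentRecord("u3", {"analytics", "recommendations"}, revoked=True),
}
rows = [
    {"user_id": "u1", "clicks": 12},
    {"user_id": "u2", "clicks": 7},
    {"user_id": "u3", "clicks": 3},
]
training_rows = filter_rows(rows, consents, purpose="recommendations")
print(training_rows)
```

Only the row for `u1` survives here: `u2` never agreed to the `recommendations` purpose, and `u3` has withdrawn consent. Users with no consent record at all are excluded by default.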

3. Data Subject Rights: Access, Correction, Portability & Erasure

Modern laws empower individuals with comprehensive rights over their data.

The right to access allows users to know what data an organization holds.

The right to rectification lets them correct inaccuracies. Data portability enables users to transfer their data to another service provider.

The right to erasure, popularly known as the “right to be forgotten,” allows individuals to request deletion of their data.

These rights strengthen user autonomy and require organizations to maintain robust data management systems.

Data scientists must design models and datasets to respect user requests without compromising system integrity.
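As a rough sketch of how the four rights above might map onto operations in a data store, consider the following. The `UserDataStore` class and its method names are assumptions made for illustration; an actual system would also have to reach backups, caches, and datasets derived from the original records.

```python
import copy
import json


class UserDataStore:
    """Hypothetical in-memory store keyed by user ID."""

    def __init__(self):
        self._records = {}

    def upsert(self, user_id, data):
        self._records.setdefault(user_id, {}).update(data)

    def access(self, user_id):
        """Right of access: return a copy of everything held about the user, or None."""
        record = self._records.get(user_id)
        return copy.deepcopy(record) if record is not None else None

    def rectify(self, user_id, field_name, value):
        """Right to rectification: correct one field; False if the user is unknown."""
        if user_id not in self._records:
            return False
        self._records[user_id][field_name] = value
        return True

    def export(self, user_id):
        """Data portability: return the record in a machine-readable format (JSON here)."""
        record = self._records.get(user_id)
        return json.dumps(record, sort_keys=True) if record is not None else None

    def erase(self, user_id):
        """Right to erasure: delete the record; True if something was removed."""
        return self._records.pop(user_id, None) is not None


store = UserDataStore()
store.upsert("u1", {"email": "a@example.com", "city": "Pune"})
store.rectify("u1", "city", "Mumbai")
exported = store.export("u1")
erased = store.erase("u1")
```

Keying every record by a stable user identifier is the design choice that makes these requests cheap to serve; datasets that lose that link early are much harder to correct or erase later.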

4. Security Requirements and Breach Notification Standards

Privacy regulations mandate strong security controls such as encryption, access restrictions, anonymization, and regular audits.

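Organizations must protect data from unauthorized access, theft, or accidental exposure. In many regions, data breaches must be reported to authorities and affected individuals within strict timelines, often 24 to 72 hours. GDPR, for example, requires notifying the supervisory authority within 72 hours of becoming aware of a breach, where feasible.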

Failure to disclose breaches can lead to severe penalties. These requirements push companies to adopt proactive risk management.

For data scientists, secure data handling practices—from preprocessing to model deployment—are essential for maintaining compliance.
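One common preprocessing safeguard is to replace direct identifiers with keyed hashes before data enters an analysis pipeline. The sketch below uses Python's standard `hmac` and `hashlib` modules. Note that this is pseudonymization rather than anonymization, since anyone holding the key can recompute the mapping. The key value and field names are placeholders.

```python
import hashlib
import hmac


def pseudonymize(value, key):
    """Replace an identifier with a keyed SHA-256 digest (HMAC)."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


# Hypothetical key for illustration; in practice it would come from a secrets manager.
SECRET_KEY = b"replace-with-a-managed-secret"

rows = [
    {"email": "asha@example.com", "purchases": 3},
    {"email": "ravi@example.com", "purchases": 5},
    {"email": "asha@example.com", "purchases": 1},
]

# The same email always maps to the same token, so joins and aggregations still work.
safe_rows = [
    {"user_token": pseudonymize(row["email"], SECRET_KEY), "purchases": row["purchases"]}
    for row in rows
]
```

A keyed hash is used instead of a plain hash because email addresses are easy to guess and hash in bulk; without the key, an attacker cannot rebuild the lookup table.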

5. Penalties, Enforcement, and Organizational Accountability

Privacy laws include heavy financial penalties to ensure strict adherence. Under GDPR, fines for the most serious infringements can reach €20 million or 4% of total worldwide annual turnover for the preceding financial year, whichever is higher.
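The "whichever is higher" rule is easy to misread, so here is the arithmetic as a small sketch. The function name is hypothetical, and the result is only the statutory ceiling for this fine tier, not a prediction of what a regulator would actually impose.

```python
def gdpr_max_fine_eur(annual_global_turnover_eur):
    """Ceiling for the higher GDPR fine tier: the greater of EUR 20 million or 4% of turnover."""
    return max(20_000_000, 0.04 * annual_global_turnover_eur)


# Turnover of EUR 100 million: 4% is EUR 4 million, so the EUR 20 million figure applies.
small_company_cap = gdpr_max_fine_eur(100_000_000)

# Turnover of EUR 2 billion: 4% is EUR 80 million, which exceeds EUR 20 million.
large_company_cap = gdpr_max_fine_eur(2_000_000_000)

print(small_company_cap, large_company_cap)
```

The two figures cross at a turnover of €500 million; above that, the percentage-based ceiling is the one that applies.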

India’s DPDP Act imposes significant penalties for data leaks, misuse, or non-compliance.

Depending on the law and the scale of processing, organizations may be required to appoint Data Protection Officers (DPOs), conduct impact assessments, and adopt privacy-by-design frameworks.

This accountability ensures data protection is not just a legal requirement but a fundamental business responsibility.

For data scientists, documenting data sources, consent logs, and model decisions is crucial for audits and regulatory reviews.
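A lightweight way to keep such documentation is an append-only log with one structured entry per training run. The following is a sketch under assumed field names (`dataset`, `legal_basis`, `model_version`, and so on); the fields an auditor actually expects depend on the regulation and the organization.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ProcessingRecord:
    """Hypothetical audit entry linking a dataset to its source, legal basis, and model."""
    dataset: str
    source: str
    legal_basis: str
    purpose: str
    model_version: str
    recorded_at: str


def make_record(dataset, source, legal_basis, purpose, model_version, now=None):
    """Build an immutable audit entry stamped with a UTC time."""
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    return ProcessingRecord(dataset, source, legal_basis, purpose, model_version, timestamp)


def to_jsonl(records):
    """Serialize entries as JSON Lines, one entry per line, for an append-only log."""
    return "\n".join(json.dumps(asdict(r), sort_keys=True) for r in records)


record = make_record(
    dataset="churn_features_v3",
    source="crm_export",
    legal_basis="consent",
    purpose="churn_prediction",
    model_version="1.4.0",
    now=datetime(2024, 1, 15, 9, 30, tzinfo=timezone.utc),
)
log_text = to_jsonl([record])
```

The dataclass is frozen so that an entry cannot be altered after it is created, which matches how an audit trail is meant to behave.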

6. Cross-Border Data Transfer Restrictions and Compliance Challenges

Many laws restrict transferring personal data across borders unless the receiving country ensures adequate protection. GDPR permits such transfers on the basis of an adequacy decision or appropriate safeguards such as standard contractual clauses, while India’s DPDP Act has specific rules for data processing outside the country.

These restrictions impact global companies, cloud storage decisions, and data science workflows.

Organizations must choose compliant storage providers and ensure international partners follow privacy standards.

Data scientists working with global datasets must be mindful of residency requirements, anonymization rules, and jurisdictional limitations.
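Residency rules can be checked mechanically before a dataset is written to storage. The sketch below assumes a hand-maintained policy table; the jurisdiction codes, region names, and dataset names are placeholders, and the real policy would come from legal review rather than from code.

```python
# Hypothetical policy: which storage regions are acceptable for data from each jurisdiction.
RESIDENCY_POLICY = {
    "EU": {"eu-region-1", "eu-region-2"},
    "IN": {"in-region-1"},
}


def storage_allowed(jurisdiction, region, policy=RESIDENCY_POLICY):
    """Return True only if the policy explicitly lists this region for the jurisdiction."""
    return region in policy.get(jurisdiction, set())


def find_violations(datasets, policy=RESIDENCY_POLICY):
    """List the names of datasets stored in a region their jurisdiction does not allow."""
    return [
        d["name"] for d in datasets
        if not storage_allowed(d["jurisdiction"], d["region"], policy)
    ]


datasets = [
    {"name": "eu_customers", "jurisdiction": "EU", "region": "eu-region-1"},
    {"name": "in_payments", "jurisdiction": "IN", "region": "eu-region-2"},
    {"name": "br_signups", "jurisdiction": "BR", "region": "eu-region-1"},
]
violations = find_violations(datasets)
print(violations)
```

Jurisdictions missing from the policy are treated as violations rather than silently allowed, so a new market cannot be onboarded without someone making an explicit decision.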

7. Emerging Trends: AI Governance, Algorithmic Transparency & Automated Decision Rights

Newer privacy regulations increasingly focus on algorithmic accountability. GDPR includes rights related to solely automated decision-making, including the ability to request human intervention and contest the outcome.

Many countries are developing AI-specific legislation that requires model transparency, bias audits, and explainability.

As AI becomes more integrated into finance, healthcare, and public services, these rules are likely to become stricter.

Data scientists must prepare for a future where AI governance, ethical audits, and transparent model explanation are legally mandatory, not optional.

8. Data Minimization and Storage Limitation Requirements

Most global privacy laws require organizations to collect only the data that is necessary for a specific purpose, a principle known as data minimization.

This prevents excessive or irrelevant data collection that increases risk. Storage limitation ensures that data is not kept longer than required, reducing the exposure window for breaches.

Data scientists must design pipelines that automatically delete or anonymize data after its intended use.

This controls dataset size, reduces cost, and helps maintain compliance. These principles encourage more efficient and ethical data practices across industries.
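A retention rule of this kind can be sketched as a function that runs on a schedule. The one-year window, the `collected_at` field, and the list of identifier fields below are assumptions chosen for the example. Stripping direct identifiers is shown as one option, though on its own it may not meet a legal standard for anonymization.

```python
from datetime import datetime, timedelta, timezone

IDENTIFIER_FIELDS = ("user_id", "email")  # hypothetical direct identifiers


def apply_retention(records, retention, now, anonymize=False):
    """Keep records inside the retention window; delete or strip identifiers from the rest."""
    cutoff = now - retention
    kept = []
    for record in records:
        if record["collected_at"] >= cutoff:
            kept.append(record)
        elif anonymize:
            # Expired record: keep only the non-identifying fields.
            kept.append({k: v for k, v in record.items() if k not in IDENTIFIER_FIELDS})
    return kept


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
records = [
    {"user_id": "u1", "email": "a@example.com", "spend": 40,
     "collected_at": now - timedelta(days=10)},
    {"user_id": "u2", "email": "b@example.com", "spend": 75,
     "collected_at": now - timedelta(days=400)},
]
deleted_view = apply_retention(records, timedelta(days=365), now)
stripped_view = apply_retention(records, timedelta(days=365), now, anonymize=True)
```

Passing `now` explicitly, rather than reading the clock inside the function, keeps the rule deterministic and easy to test.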

9. Privacy-by-Design and Privacy-by-Default in Data Science Pipelines

Privacy-by-design requires embedding privacy safeguards into systems from the earliest stages rather than treating them as add-ons.

This includes secure architecture, limited access permissions, anonymized datasets, and documented consent mechanisms.

Privacy-by-default requires settings that favor users’ privacy without needing manual adjustment.

For data scientists, this means building models that do not rely on unnecessary personal identifiers and ensuring that system configurations prioritize minimal exposure of sensitive data.

This approach strengthens public trust and reduces legal liability.
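In a feature pipeline, one way to approximate privacy-by-default is an allowlist: only columns that have been explicitly approved reach the model, so anything new or unexpected is excluded automatically. The column names and the `select_features` helper below are illustrative assumptions.

```python
# Hypothetical allowlist: only these columns may reach the model.
FEATURE_ALLOWLIST = ("age_band", "plan", "monthly_usage_gb")


def select_features(row, allowlist=FEATURE_ALLOWLIST):
    """Keep allowlisted columns only, so new or unexpected fields are excluded by default."""
    return {name: row[name] for name in allowlist if name in row}


raw_row = {
    "name": "Asha Rao",
    "email": "asha@example.com",
    "age_band": "25-34",
    "plan": "premium",
    "monthly_usage_gb": 18.5,
    "device_id": "abc-123",
}
model_input = select_features(raw_row)
print(model_input)
```

An allowlist is preferred here over a blocklist of known identifiers because a blocklist fails open: a newly added column such as `device_id` would pass through until someone remembered to block it.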

10. Special Protections for Sensitive Personal Data

Many regulations classify certain categories of data as “sensitive” and require stronger safeguards.

These include biometric data, financial information, health records, political beliefs, sexual orientation, and children’s data.

Mishandling sensitive data can lead to severe harm and higher penalties.

Data scientists working in healthcare, finance, or biometric identification must use stricter encryption, anonymization, and access controls.

Models trained on sensitive categories often require explicit consent and additional documentation during audits.

11. Ethical Challenges in Interpreting Privacy Laws for AI Models

AI systems often need large datasets, but privacy laws restrict collection, sharing, and retention—creating practical conflicts.

Regulators expect organizations to justify why personal data is needed and whether synthetic or anonymized data can be used instead.

For data scientists, interpreting what is legally permissible during feature engineering, model training, and experimentation can be complex.

Ethical tension arises when business goals demand more data than the law allows, requiring strong governance and responsible decision-making.

12. Role of Third-Party Vendors and Cloud Platforms in Compliance

Many companies rely on external tools, cloud services, analytics platforms, or API providers.

Privacy laws typically treat these third-party vendors as “processors,” and the organizations that engage them remain accountable for ensuring they follow the law.

This includes verifying encryption standards, geographical storage location, data access logs, and breach response procedures.

Data scientists must ensure that external tools used for modeling or storage comply with jurisdictional requirements.

Vendor agreements now routinely include privacy assessments, stricter contracts, and ongoing audits.

Major Global Data Privacy Laws