Algorithmic bias refers to systematic and unfair discrimination that occurs when machine-learning models produce outcomes that disproportionately harm or benefit certain groups.

These biases often emerge from skewed training data, flawed feature selection, historical inequalities, or unmonitored model behavior.

As data-driven systems increasingly power decisions in hiring, lending, policing, healthcare, and social services, algorithmic bias has become a critical ethical concern.

Modern AI systems learn patterns from real-world data, but if that data reflects past discrimination or societal imbalances, the models may reinforce or even amplify those disparities.

Even well-intentioned developers can unknowingly introduce bias by overlooking edge cases, misinterpreting correlations, or failing to test fairness across demographic segments.

As a result, automated decisions can affect millions of people without transparency or accountability.

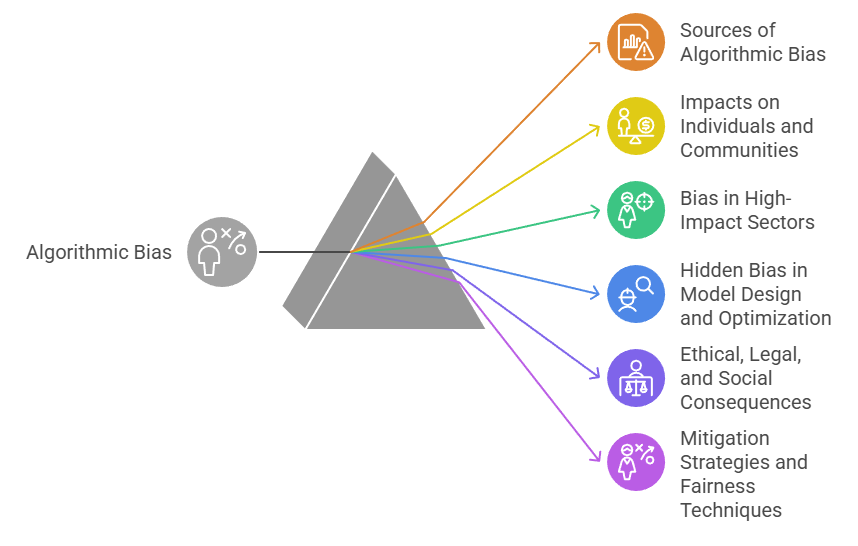

Understanding Algorithmic Bias and Fairness in AI

1. Sources of Algorithmic Bias

Algorithmic bias can arise from imbalanced datasets, underrepresented groups, or poor labeling quality, leading algorithms to learn inaccurate patterns.
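One way to check for the imbalance described above is to tabulate, before training, each group's share of the dataset and its rate of positive labels. The sketch below is a minimal illustration only: the `group` and `label` field names and the toy records are hypothetical, not drawn from any real dataset.

```python
from collections import Counter

def group_summary(records, group_key="group", label_key="label"):
    """Return {group: (share_of_dataset, positive_label_rate)}."""
    counts = Counter(r[group_key] for r in records)
    positives = Counter(r[group_key] for r in records if r[label_key] == 1)
    total = len(records)
    return {g: (n / total, positives[g] / n) for g, n in counts.items()}

# Hypothetical toy data: group "B" is both rare and rarely labeled positive.
records = (
    [{"group": "A", "label": 1}] * 60
    + [{"group": "A", "label": 0}] * 20
    + [{"group": "B", "label": 1}] * 5
    + [{"group": "B", "label": 0}] * 15
)

summary = group_summary(records)
for g, (share, pos_rate) in sorted(summary.items()):
    print(f"group={g} share={share:.2f} positive_rate={pos_rate:.2f}")
```

A large gap in either column does not prove the data is biased, but it marks where a model is most likely to learn inaccurate patterns and where closer review is worthwhile.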

It may also emerge from historical biases embedded in past decisions—such as hiring or policing records—that train models to repeat unfair judgments.

Feature engineering choices can unintentionally favor certain populations, while lack of domain expertise may cause data scientists to overlook sensitive variables.

Additionally, data pipelines may inherit structural inequalities already present in society.

Bias also arises when algorithms are optimized solely for accuracy without considering fairness metrics.

2. Impacts on Individuals and Communities

Algorithmic bias can lead to wrongful job rejections, unfair loan denials, biased medical treatments, and disproportionate policing of minority groups.

Communities may face long-term exclusion when algorithms repeatedly reinforce discriminatory patterns.

These effects can reduce public trust in AI systems and discourage engagement with institutions that rely on automated decisions.

Over time, repeated harm deepens socioeconomic gaps and perpetuates cycles of disadvantage.

In extreme cases, biased systems can influence life-altering outcomes such as prison sentencing or healthcare prioritization.

The cumulative impacts are often invisible yet profoundly damaging, especially for marginalized communities.

3. Bias in High-Impact Sectors (Hiring, Finance, Healthcare, Policing)

In hiring, biased screening algorithms may filter resumes based on gendered vocabulary or past workforce disparities.

In finance, credit scoring models may rely on neighborhood data linked to historical segregation.

Healthcare algorithms may under-diagnose chronic conditions in minority groups if training data underrepresents them.

Predictive policing systems may direct law-enforcement resources toward areas with biased historical crime data, creating a feedback loop of over-policing.
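The feedback loop can be illustrated with a deliberately simplified simulation. It assumes two hypothetical districts with identical underlying incident rates, a single patrol per day sent wherever the records are highest, and incidents recorded only where the patrol is present. None of these assumptions describes a real deployed system; the point is only to show how a small initial difference in the records can grow.

```python
def simulate_patrols(recorded, incidents_per_patrol, days):
    """Send each day's patrol to the district with the most recorded incidents.

    Incidents are only recorded where a patrol is present, so the record
    reflects where police looked, not where incidents actually occurred.
    """
    recorded = dict(recorded)  # copy so the caller's data is left untouched
    for _ in range(days):
        target = max(recorded, key=recorded.get)
        recorded[target] += incidents_per_patrol[target]
    return recorded

# Hypothetical starting point: both districts have the same underlying rate,
# but "north" happens to have one more historical record.
history = {"north": 11, "south": 10}
same_rate = {"north": 1, "south": 1}

after = simulate_patrols(history, same_rate, days=30)
north_before = history["north"] / sum(history.values())
north_after = after["north"] / sum(after.values())
print(after)
print(f"north share of records: {north_before:.0%} -> {north_after:.0%}")
```

In this toy setup, "south" never receives a patrol, so its record never changes and the data appears to confirm the original allocation even though the two districts are identical by construction.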

Insurance risk models may set higher premiums for disadvantaged groups when rating factors correlate with group membership rather than with actual risk.

These failures highlight how bias affects essential services and carries significant ethical consequences.

4. Hidden Bias in Model Design and Optimization

Many algorithmic biases remain hidden because models are optimized primarily for accuracy or profit, not fairness.

If developers overlook fairness metrics or cross-group performance evaluation, systemic disparities remain unseen.
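A cross-group evaluation can be as simple as computing the same metric separately for each group. The following sketch uses hypothetical labels, predictions, and group names to show how a reasonable-looking aggregate accuracy can conceal a much weaker result for a smaller group.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def accuracy_by_group(y_true, y_pred, groups):
    """Return {group: accuracy computed only on that group's rows}."""
    result = {}
    for g in sorted(set(groups)):
        idx = [i for i, gi in enumerate(groups) if gi == g]
        result[g] = accuracy([y_true[i] for i in idx], [y_pred[i] for i in idx])
    return result

# Hypothetical toy data: the model is perfect on group "A" but predicts
# the negative class for every member of the smaller group "B".
groups = ["A"] * 8 + ["B"] * 4
y_true = [1, 1, 1, 1, 0, 0, 0, 0] + [1, 1, 0, 0]
y_pred = [1, 1, 1, 1, 0, 0, 0, 0] + [0, 0, 0, 0]

overall = accuracy(y_true, y_pred)
per_group = accuracy_by_group(y_true, y_pred, groups)
print(f"overall accuracy: {overall:.2f}")
for g, acc in per_group.items():
    print(f"group {g}: {acc:.2f}")
```

Here the overall figure is about 0.83, while group "B" sits at 0.50, which is the kind of disparity that stays unseen when only the aggregate is reported.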

Feature-importance analyses can miss variables that act as proxies for protected attributes, such as ZIP codes that correlate with race or socioeconomic status.
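A rough way to screen a categorical feature for proxy behavior is to ask how well it predicts the protected attribute on its own, compared with always guessing the most common group. The sketch below is one simple heuristic under that assumption, not a standard test; the ZIP labels `Z1` and `Z2` and the groups `X` and `Y` are hypothetical.

```python
from collections import Counter, defaultdict

def proxy_strength(feature_values, protected_values):
    """Return (baseline_accuracy, accuracy_using_feature).

    baseline: always guess the most common protected group.
    using feature: guess the most common protected group within each
    feature value. A large gap suggests the feature encodes the attribute.
    """
    n = len(protected_values)
    baseline = Counter(protected_values).most_common(1)[0][1] / n
    by_value = defaultdict(Counter)
    for f, p in zip(feature_values, protected_values):
        by_value[f][p] += 1
    correct = sum(c.most_common(1)[0][1] for c in by_value.values())
    return baseline, correct / n

# Hypothetical data: area Z1 is mostly group X, area Z2 is mostly group Y.
zip_codes = ["Z1"] * 10 + ["Z2"] * 10
protected = ["X"] * 9 + ["Y"] * 1 + ["X"] * 2 + ["Y"] * 8

baseline, with_feature = proxy_strength(zip_codes, protected)
print(f"baseline={baseline:.2f} using_zip={with_feature:.2f}")
```

In this example the ZIP feature lifts the guess rate from 0.55 to 0.85, so dropping the protected attribute from the model would not remove the information it carries.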

Model explainability challenges make it difficult for organizations to detect embedded discrimination.

Additionally, a lack of diverse teams reduces awareness of potential bias. The result can be a model that is technically accurate yet ethically harmful.

5. Ethical, Legal, and Social Consequences

Biased algorithms can expose organizations to legal liability, especially under frameworks such as the EU's GDPR and AI Act, as well as emerging AI fairness regulations elsewhere.

Beyond legal exposure, the consequences include reputational damage, public backlash, and loss of consumer trust.

Socially, algorithmic discrimination reinforces inequality, reduces access to opportunities, and damages civic participation.

When automated systems perpetuate unfair outcomes at scale, they weaken democratic values and institutional legitimacy.

The cumulative effects highlight the need for responsible AI governance and transparent accountability structures.

6. Mitigation Strategies and Fairness Techniques

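Mitigation requires a multi-step approach: understanding data distributions, auditing models across demographic groups, and applying fairness constraints such as demographic parity (equal rates of positive predictions across groups) or equalized odds (equal true-positive and false-positive rates across groups).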
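To make the two fairness criteria concrete, the sketch below measures how far a set of predictions is from each one. It takes the demographic parity difference to be the gap in positive-prediction rates between groups, and the equalized odds difference to be the larger of the true-positive-rate gap and the false-positive-rate gap. The data and group names are hypothetical.

```python
def group_rates(y_true, y_pred, groups):
    """Return {group: {"selection_rate", "tpr", "fpr"}}.

    Assumes every group contains at least one positive and one negative
    true label; otherwise the corresponding rate is undefined.
    """
    out = {}
    for g in sorted(set(groups)):
        rows = [(t, p) for t, p, gi in zip(y_true, y_pred, groups) if gi == g]
        on_positives = [p for t, p in rows if t == 1]
        on_negatives = [p for t, p in rows if t == 0]
        out[g] = {
            "selection_rate": sum(p for _, p in rows) / len(rows),
            "tpr": sum(on_positives) / len(on_positives),
            "fpr": sum(on_negatives) / len(on_negatives),
        }
    return out

def gap(rates, key):
    """Largest minus smallest value of one rate across groups."""
    values = [r[key] for r in rates.values()]
    return max(values) - min(values)

# Hypothetical predictions for two groups with identical true labels.
groups = ["A"] * 4 + ["B"] * 4
y_true = [1, 1, 0, 0] + [1, 1, 0, 0]
y_pred = [1, 1, 1, 0] + [1, 0, 0, 0]

rates = group_rates(y_true, y_pred, groups)
dp_diff = gap(rates, "selection_rate")
eo_diff = max(gap(rates, "tpr"), gap(rates, "fpr"))
print(f"demographic parity difference: {dp_diff:.2f}")
print(f"equalized odds difference: {eo_diff:.2f}")
```

A value of 0 on either measure means the corresponding criterion is met exactly. The two criteria generally cannot both be satisfied when base rates differ between groups, so which one to target is a policy decision as much as a technical one.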

Techniques such as rebalancing datasets, removing proxy variables, and correcting biased labels can reduce harmful patterns.
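One way to rebalance a dataset without discarding rows is to assign sample weights. The sketch below follows the reweighing idea described by Kamiran and Calders: each (group, label) combination receives the weight it would need for group and label to look statistically independent. It is an illustration on hypothetical data, not a drop-in preprocessing step.

```python
from collections import Counter

def reweighing_weights(groups, labels):
    """Return {(group, label): weight} with
    weight = P(group) * P(label) / P(group, label)."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return {
        (g, y): (group_counts[g] * label_counts[y]) / (n * joint)
        for (g, y), joint in joint_counts.items()
    }

def weighted_positive_rate(group, groups, labels, weights):
    """Positive-label rate within one group after applying the weights."""
    pos = sum(weights[(g, y)] for g, y in zip(groups, labels) if g == group and y == 1)
    total = sum(weights[(g, y)] for g, y in zip(groups, labels) if g == group)
    return pos / total

# Hypothetical data: group "A" is labeled positive far more often than "B".
groups = ["A"] * 80 + ["B"] * 20
labels = [1] * 60 + [0] * 20 + [1] * 5 + [0] * 15

weights = reweighing_weights(groups, labels)
rate_a = weighted_positive_rate("A", groups, labels, weights)
rate_b = weighted_positive_rate("B", groups, labels, weights)
print(f"weighted positive rate: A={rate_a:.2f} B={rate_b:.2f}")
```

After weighting, both groups show the dataset-wide positive rate of 0.65, so a learner that honors sample weights no longer sees group membership as predictive of the label. This addresses only the label imbalance; it does not repair labels that are themselves wrong.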

Post-processing adjustments modify a trained model's outputs, for example by adjusting decision thresholds, to reduce disparities between groups. Interdisciplinary reviews and stakeholder consultations help align the system with societal norms.
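As an illustration of post-processing, the sketch below leaves the model's scores untouched and instead picks a separate decision threshold per group so that each group is selected at the same target rate. The scores, group names, and target rate are hypothetical, and this is only one of several possible post-processing schemes.

```python
def selection_rates(scores, groups, thresholds):
    """Fraction of each group whose score meets that group's threshold."""
    rates = {}
    for g in sorted(set(groups)):
        g_scores = [s for s, gi in zip(scores, groups) if gi == g]
        rates[g] = sum(s >= thresholds[g] for s in g_scores) / len(g_scores)
    return rates

def thresholds_for_target_rate(scores, groups, target_rate):
    """Pick, per group, the score of the k-th highest-ranked member,
    where k is the number of selections implied by target_rate.
    Assumes scores are distinct within each group (ties could select more)."""
    thresholds = {}
    for g in sorted(set(groups)):
        ranked = sorted((s for s, gi in zip(scores, groups) if gi == g), reverse=True)
        k = round(target_rate * len(ranked))
        thresholds[g] = ranked[k - 1] if k > 0 else float("inf")
    return thresholds

# Hypothetical scores: group "B" is scored systematically lower.
scores = [0.9, 0.8, 0.7, 0.6, 0.6, 0.5, 0.4, 0.3]
groups = ["A"] * 4 + ["B"] * 4

single = selection_rates(scores, groups, {"A": 0.65, "B": 0.65})
per_group = thresholds_for_target_rate(scores, groups, target_rate=0.5)
adjusted = selection_rates(scores, groups, per_group)
print("single threshold:", single)
print("per-group thresholds:", per_group)
print("adjusted rates:", adjusted)
```

With one shared threshold of 0.65, group "A" is selected at a rate of 0.75 and group "B" not at all; with per-group thresholds both are selected at 0.5. The trade-off is that two people with the same score can now receive different decisions, which is why this choice usually calls for the kind of review described above.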

Continuous monitoring is essential because bias can reappear as data drifts or population patterns change.
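Monitoring can reuse the same fairness measures on recent production decisions. The sketch below computes the gap in positive-prediction rates for each time window and flags windows where it exceeds a tolerance. The window names, the data, and the 0.2 tolerance are hypothetical choices for illustration.

```python
def parity_gap(preds, groups):
    """Largest minus smallest positive-prediction rate across groups."""
    rates = []
    for g in set(groups):
        g_preds = [p for p, gi in zip(preds, groups) if gi == g]
        rates.append(sum(g_preds) / len(g_preds))
    return max(rates) - min(rates)

def flag_windows(windows, max_gap=0.2):
    """Return the names of windows whose parity gap exceeds max_gap.

    `windows` is a list of (name, predictions, groups) tuples.
    """
    return [name for name, preds, groups in windows if parity_gap(preds, groups) > max_gap]

# Hypothetical weekly batches of decisions for groups "A" and "B".
windows = [
    ("week_1", [1, 0, 1, 0], ["A", "A", "B", "B"]),  # gap 0.0
    ("week_2", [1, 1, 1, 0], ["A", "A", "B", "B"]),  # gap 0.5
    ("week_3", [1, 1, 0, 0], ["A", "A", "B", "B"]),  # gap 1.0
]

flagged = flag_windows(windows)
print("windows needing review:", flagged)
```

In practice the windows would need to be large enough for the rates to be stable, and a flagged window is a prompt for investigation rather than proof that the model has become unfair.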

Responsible AI practices embed fairness throughout the full lifecycle—not only during model development.

Real-World Failures

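1. Amazon’s Hiring AI (2018): An experimental recruiting tool, reported in 2018 to have been scrapped, learned from past male-dominated hiring data and systematically penalized resumes containing the word “women’s”.

2. COMPAS Recidivism Tool: This risk-assessment tool is used in U.S. courts to inform bail and sentencing decisions. A 2016 ProPublica analysis found that it wrongly labeled Black defendants who did not reoffend as higher risk at nearly twice the rate of white defendants, raising concerns about worsening inequalities in the legal system.

3. Apple Card Gender Bias Incident: In 2019, customers reported that women were assigned lower credit limits than men with similar financial profiles, and the opaque scoring logic made the disparities hard to explain. A later investigation by the New York Department of Financial Services found no violation of fair lending law but pointed to shortcomings in transparency.

4. Healthcare Risk Algorithms: A widely used U.S. hospital algorithm under-identified high-risk Black patients because it used healthcare costs, a biased metric, as a proxy for health needs.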

Success Stories

1. LinkedIn’s Fairness Interventions reduced gender bias in job recommendations by auditing model behavior across demographic segments.

2. Microsoft’s Fairlearn Toolkit, an open-source Python library, provides fairness metrics and mitigation algorithms that help teams assess and reduce disparities between groups.

3. Google’s Inclusive ML Approach uses diverse datasets and rigorous fairness evaluations to reduce representation bias in vision models.