Ethical Issues in Artificial Intelligence examines the moral challenges that arise when AI systems influence human decisions and social outcomes.

In responsible data science, this topic focuses on risks such as bias, privacy violations, lack of transparency, and unintended harm.

Addressing these issues helps ensure that AI systems are fair, accountable, and aligned with human values.

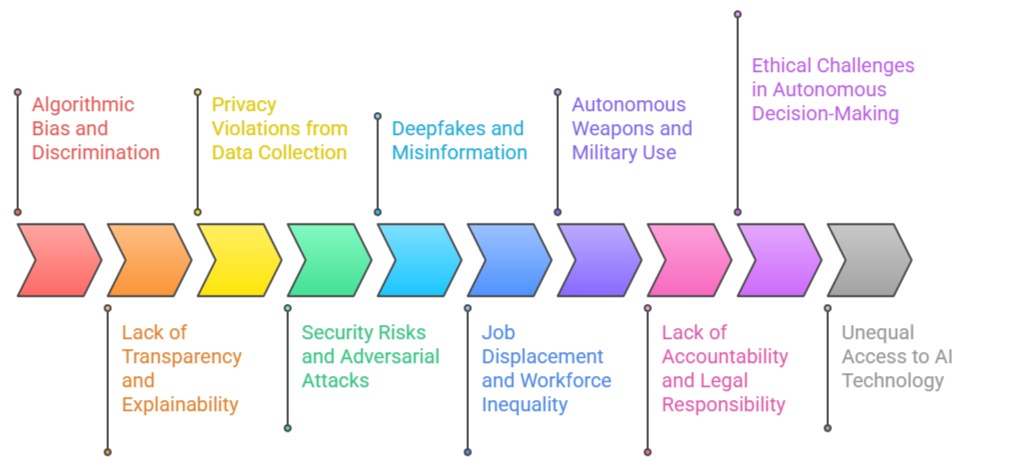

Important Ethical Issues in Artificial Intelligence

1. Algorithmic Bias and Discrimination

AI systems often inherit biases from the data they are trained on, leading to unfair outcomes for certain groups.

These biases can manifest in hiring tools, credit scoring, healthcare decisions, or criminal justice predictions.

Even small biases in training data can be amplified after deployment, causing large-scale discrimination.

AI bias falls hardest on marginalized communities that are underrepresented in datasets.

As AI becomes more widely used, the impact of unfair outcomes grows with it. Continual fairness testing and bias mitigation strategies are therefore required. The challenge lies in reducing both explicit and hidden biases across complex datasets.
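One way to make fairness testing concrete is to compare how often each group receives a favourable decision. The sketch below is only an illustration: the function names, the group labels "A" and "B", and the hiring decisions are all hypothetical, and the two metrics shown (demographic parity difference and disparate impact ratio) are just two of many fairness measures.

```python
from collections import defaultdict

def selection_rates(records):
    """Return the share of favourable decisions per group.

    `records` is an iterable of (group, decision) pairs, decision is 1 or 0.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, decision in records:
        totals[group] += 1
        positives[group] += decision
    return {group: positives[group] / totals[group] for group in totals}

def demographic_parity_difference(rates):
    """Gap between the highest and lowest selection rate (0 means equal rates)."""
    return max(rates.values()) - min(rates.values())

def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1 means equal rates)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0

# Hypothetical decisions from a hiring tool: 10 applicants per group.
decisions = [("A", 1)] * 6 + [("A", 0)] * 4 + [("B", 1)] * 3 + [("B", 0)] * 7

rates = selection_rates(decisions)
print("selection rates:", rates)
print("parity difference:", round(demographic_parity_difference(rates), 3))
print("impact ratio:", round(disparate_impact_ratio(rates), 3))
```

A ratio well below 1 signals that one group is selected far less often than another. A threshold of 0.8 (the "four-fifths rule") is a commonly used screening heuristic, but a low ratio is a prompt for investigation rather than proof of discrimination.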

2. Lack of Transparency and Explainability

Many AI models, especially deep learning systems, operate as “black boxes,” making it difficult to understand why they make certain decisions.

This lack of explainability reduces trust, limits accountability, and creates legal challenges.

For example, if an AI denies a loan or predicts a disease incorrectly, the affected individual deserves an explanation.

Lack of transparency also makes it harder to detect hidden bias, unethical data usage, or model errors.

Explainable AI (XAI) frameworks exist but remain limited in complex applications. Ensuring interpretability becomes increasingly important as AI influences high-stakes decisions.
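For a simple model, an explanation of a loan denial can be computed directly. The following is a minimal sketch, assuming a hypothetical linear scoring model in which a score of zero or above means approval. The feature names, weights, and applicant values are invented for illustration. It shows two basic explanation styles: per-feature contributions, and single-feature counterfactuals (the value a feature would need to reach the decision boundary with everything else unchanged).

```python
def score(weights, bias, applicant):
    """Linear score; in this sketch, score >= 0 means the loan is approved."""
    return sum(weights[name] * applicant[name] for name in weights) + bias

def feature_contributions(weights, applicant):
    """How much each feature adds to or subtracts from the score."""
    return {name: weights[name] * applicant[name] for name in weights}

def single_feature_counterfactuals(weights, bias, applicant):
    """For each feature, the value that would move the score exactly to 0
    if every other feature stayed the same."""
    current = score(weights, bias, applicant)
    return {
        name: applicant[name] - current / w
        for name, w in weights.items()
        if w != 0
    }

# Hypothetical model and applicant (all numbers are made up).
weights = {"income_k": 0.05, "debt_ratio": -4.0, "years_employed": 0.3}
bias = -3.0
applicant = {"income_k": 40, "debt_ratio": 0.5, "years_employed": 2}

print("score:", round(score(weights, bias, applicant), 3))
print("contributions:", feature_contributions(weights, applicant))
for name, value in single_feature_counterfactuals(weights, bias, applicant).items():
    print(f"approval boundary reached if {name} were {value:.2f}")
```

Some counterfactuals produced this way are not achievable in practice (a negative debt ratio, for example), so a real explanation would need to filter for feasible changes. Deep models do not decompose this cleanly, which is why approximation tools are used for them.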

3. Privacy Violations from Data Collection

AI systems often rely on massive amounts of user data, which raises concerns about surveillance, consent, and privacy rights.

Companies may collect more data than necessary to improve model accuracy, leading to intrusive profiling.

Facial recognition tools can identify individuals without consent, creating a risk of misuse by governments or corporations.

AI-powered tracking systems can monitor behavior, location, and personal preferences.

These issues make privacy protection a major ethical challenge. Ensuring secure storage, anonymization, and informed user consent becomes essential as AI adoption increases.
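As a rough illustration of what anonymization can involve, the sketch below replaces direct identifiers with keyed hashes, coarsens ages into bands, and then checks the smallest group of records that share the same quasi-identifiers (a basic k-anonymity check). The field names, the key, and the records are hypothetical. Keyed hashing is pseudonymization rather than full anonymization, because anyone holding the key can recompute the mapping.

```python
import hashlib
import hmac
from collections import Counter

def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Replace a direct identifier with a keyed SHA-256 digest."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()

def generalize_age(age: int, bucket: int = 10) -> str:
    """Coarsen an exact age into a band such as '20-29'."""
    low = (age // bucket) * bucket
    return f"{low}-{low + bucket - 1}"

def smallest_group_size(rows, quasi_identifiers):
    """Size of the smallest group sharing the same quasi-identifier values.

    A dataset is k-anonymous for these columns if this value is at least k.
    """
    counts = Counter(tuple(row[q] for q in quasi_identifiers) for row in rows)
    return min(counts.values())

# Hypothetical raw records and key, for illustration only.
SECRET_KEY = b"replace-with-a-randomly-generated-key"
raw = [
    {"user_id": "u1", "age": 23, "zip3": "100"},
    {"user_id": "u2", "age": 27, "zip3": "100"},
    {"user_id": "u3", "age": 31, "zip3": "945"},
    {"user_id": "u4", "age": 38, "zip3": "945"},
]

released = [
    {
        "pid": pseudonymize(r["user_id"], SECRET_KEY),
        "age_band": generalize_age(r["age"]),
        "zip3": r["zip3"],
    }
    for r in raw
]

print("k =", smallest_group_size(released, ["age_band", "zip3"]))
```

A larger k makes it harder to single out an individual, at the cost of less detailed data.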

4. Security Risks and Adversarial Attacks

AI models are vulnerable to adversarial attacks where small, deliberate modifications to inputs can cause large errors.

For example, altering a few pixels can fool image recognition systems, posing risks in cybersecurity and autonomous driving.

Attackers can also poison training data or exploit model weaknesses to cause harmful outcomes.

These vulnerabilities create ethical challenges because they threaten user safety and trust.

Continuous security testing and robust model hardening are necessary. AI security is becoming a top priority as systems are increasingly used in critical infrastructure.
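The idea of a small, deliberate input change can be shown on a toy model. The sketch below applies a sign-of-gradient perturbation (in the style of the fast gradient sign method) to a hypothetical three-feature logistic regression classifier. The weights and input are invented, and real attacks target far larger models, so this is only meant to show the mechanism.

```python
import math

def predict_proba(weights, bias, x):
    """Probability of class 1 under a logistic regression model."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def sign(value):
    """Return -1, 0, or 1."""
    return (value > 0) - (value < 0)

def fgsm_perturb(weights, bias, x, true_label, epsilon):
    """Shift every feature by at most `epsilon` in the direction that
    increases the log loss for `true_label`."""
    p = predict_proba(weights, bias, x)
    # For logistic regression, d(log loss)/dx = (p - y) * w.
    gradient = [(p - true_label) * w for w in weights]
    return [xi + epsilon * sign(g) for xi, g in zip(x, gradient)]

# Hypothetical model and input (all numbers are made up).
weights = [2.0, -3.0, 1.0]
bias = 0.0
x = [0.5, 0.2, 0.1]

x_adv = fgsm_perturb(weights, bias, x, true_label=1, epsilon=0.1)

print("original prediction:", predict_proba(weights, bias, x) >= 0.5)
print("perturbed prediction:", predict_proba(weights, bias, x_adv) >= 0.5)
```

In this example no feature moves by more than 0.1, yet the predicted class changes. Model hardening techniques such as adversarial training aim to reduce this sensitivity.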

5. Deepfakes and Misinformation

AI-generated deepfakes can create highly realistic fake images, videos, or audio that mislead the public.

These tools enable identity theft, political manipulation, defamation, and misinformation at unprecedented scale.

Deepfakes can distort elections, damage reputations, or create social conflict. Detecting them becomes harder as generative AI improves.

The ethical challenge lies in balancing creative uses of AI with the need to prevent malicious exploitation.

Strong verification, authentication, and content moderation systems are essential to combat this threat.

6. Job Displacement and Workforce Inequality

AI automation threatens jobs in manufacturing, retail, customer service, finance, logistics, and even knowledge professions.

While new jobs may emerge, displaced workers may lack the skills needed for future roles.

This creates economic inequality and social instability. Ethical challenges involve helping societies transition to AI-driven economies through reskilling and fair labor policies.

If unmanaged, AI-driven automation may widen the gap between wealthy tech-driven businesses and low-skill workers. Ensuring equitable distribution of AI benefits becomes critical.

7. Autonomous Weapons and Military Use

AI-powered weapons raise severe ethical concerns because they can make life-or-death decisions without human oversight.

Autonomous drones, robotic soldiers, and AI targeting systems create risks of accidental escalation and loss of human control.

Malfunctioning or hacked systems could cause catastrophic outcomes.

Many global organizations demand bans or strict regulations on lethal autonomous weapons.

However, nations continue to invest heavily in AI for military advantage. This remains one of the most urgent ethical debates surrounding AI.

8. Lack of Accountability and Legal Responsibility

When AI systems cause harm, determining who is legally responsible becomes complex.

Is it the developer, the user, the vendor, or the organization deploying the model? This lack of clarity creates ethical and legal gaps.

For example, if an autonomous car causes an accident, accountability may be shared across multiple parties.

Clear accountability frameworks and policies are needed.

Without them, victims may not receive justice, and organizations may avoid responsibility. Establishing liability in AI failures is essential for public trust.

9. Ethical Challenges in Autonomous Decision-Making

AI systems making independent decisions raise concerns about moral reasoning and value alignment.

Autonomous vehicles, for instance, may encounter dilemmas where human judgment would normally apply.

AI cannot fully understand human values, emotions, or context, leading to morally questionable outcomes.

This creates ethical risks in healthcare, finance, and governance.

Ensuring AI systems follow human-centric ethics is an ongoing challenge. Value alignment research continues but remains far from perfect.

10. Unequal Access to AI Technology

Advanced AI systems are expensive and controlled by a few major corporations, leading to inequality between countries, companies, and communities.

AI access gaps can widen global digital divides, leaving developing nations behind. Social inequalities worsen when only wealthy societies benefit from AI advancements.

Ethical challenges involve making AI accessible, safe, and affordable for all. Without fair access, AI may reinforce systemic inequalities rather than improve lives universally.

Future Trends & Predictions in AI Ethics

1. Global AI Governance Frameworks Will Become Standard

Countries are increasingly aligning on common ethical principles for AI. In the future, we will see the creation of international AI governance treaties—similar to climate agreements—that enforce transparency, accountability, safety testing, and responsible use.

These frameworks will address cross-border concerns like data transfers, AI weaponization, and global bias, making ethical compliance a global norm rather than a regional choice.

2. Mandatory Algorithmic Audits

Governments and enterprises will implement routine, legally required audits to assess model fairness, bias, explainability, privacy safeguards, and environmental impact.

AI audits will become as essential as financial audits, with independent organizations certifying whether a model meets ethical standards, especially in high-risk fields like banking, hiring, healthcare, and law enforcement.

3. Rise of “Ethical AI Officer” and Specialized Teams

Organizations will create dedicated roles such as Chief AI Ethics Officer, AI Risk Manager, and Ethics Review Committees.

These teams will oversee model lifecycle governance, ensure regulatory compliance, conduct impact assessments, and serve as a bridge between technical and non-technical stakeholders.

4. Explainable AI Will Become a Legal Requirement

As black-box systems become more problematic, regulations will demand interpretable and transparent models—especially in critical sectors.

Explainability tools (e.g., SHAP, LIME, counterfactual explanations) will evolve to become more intuitive, domain-specific, and standardized for public reporting.

5. Ethical Generative AI Controls

Generative AI systems will be subject to stricter safeguards against misinformation, deepfakes, election interference, synthetic identities, and automated propaganda.

Future ethical frameworks will require digital watermarking, source verification tools, and real-time detection systems to maintain information integrity.
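Source verification can be illustrated with a simple authenticity tag. The sketch below is a simplification under stated assumptions: the publisher and the verifier share a secret key (the key and content shown are hypothetical), and the tag is an HMAC over the content bytes. Real provenance systems typically rely on public-key signatures so that anyone can verify without being able to forge, and this sketch does not implement watermarking.

```python
import hashlib
import hmac

def sign_content(content: bytes, key: bytes) -> str:
    """Produce an authenticity tag for a piece of content."""
    return hmac.new(key, content, hashlib.sha256).hexdigest()

def verify_content(content: bytes, tag: str, key: bytes) -> bool:
    """Check the tag using a constant-time comparison."""
    return hmac.compare_digest(sign_content(content, key), tag)

# Hypothetical key and content, for illustration only.
publisher_key = b"replace-with-a-randomly-generated-key"
original = b"Official statement: polling stations open at 8 am."
tag = sign_content(original, publisher_key)

tampered = b"Official statement: polling stations open at 8 pm."

print("original verifies:", verify_content(original, tag, publisher_key))
print("tampered verifies:", verify_content(tampered, tag, publisher_key))
```

Any change to the content, even a single character, causes verification to fail, which is the property that verification tools build on.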

6. Emotion-Aware & Neuro-AI Regulation

As new systems can read emotions, brain signals, or intimate behavioral patterns, future ethics policies will protect cognitive freedom, mental privacy, and individual autonomy.

“Neuro-rights” will emerge as a new branch of digital human rights, preventing unauthorized manipulation of human thoughts or emotions.

7. Environmental Sustainability in AI

Future AI ethics will evaluate the carbon footprint of model training and deployment.

Organizations will be required to disclose energy usage, use greener data centers, and consider sustainability metrics before building large-scale models.
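A first-order estimate of training emissions multiplies the energy drawn by the hardware by the carbon intensity of the electricity used. The sketch below assumes that simplified formula. The function name, the parameters, and every number in the example are hypothetical, and a real disclosure would use measured energy data.

```python
def training_emissions_kg(
    num_accelerators: int,
    avg_power_watts: float,
    hours: float,
    pue: float,
    grid_kg_co2_per_kwh: float,
) -> float:
    """Estimate CO2-equivalent emissions of a training run in kilograms.

    energy (kWh) = accelerators * average power (kW) * hours * PUE
    emissions    = energy * grid carbon intensity (kg CO2e per kWh)

    PUE (power usage effectiveness) scales hardware energy up to account
    for data-centre overhead such as cooling.
    """
    energy_kwh = num_accelerators * (avg_power_watts / 1000.0) * hours * pue
    return energy_kwh * grid_kg_co2_per_kwh

# Hypothetical run: 8 accelerators at 300 W for 100 hours,
# PUE of 1.2, grid intensity of 0.4 kg CO2e per kWh.
estimate = training_emissions_kg(8, 300, 100, 1.2, 0.4)
print(f"estimated emissions: {estimate:.1f} kg CO2e")
```

With these inputs the run uses 288 kWh and the estimate comes to about 115 kg CO2e. The same run on a lower-carbon grid would score proportionally lower, which is why data-centre location is part of the sustainability picture.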

8. Shift Toward Human-Centered & Value-Aligned AI

AI systems will increasingly be designed around human values, cultural diversity, accessibility, and inclusion.

Ethical AI will prioritize human empowerment rather than automation for automation’s sake, focusing on augmenting—not replacing—human judgment.

9. AI-Powered Ethical Decision-Support Tools

Ironically, AI itself will be used to detect ethical risks—monitoring bias trends, flagging harmful outputs, identifying drift, and predicting unintended consequences.

These meta-AI systems will act as “ethical guardians” for other algorithms.

10. Dynamic & Continuous Ethical Compliance

Future ethics will move away from one-time evaluations and adopt real-time, continuous risk assessment.

Models will be monitored throughout their lifecycle for bias drift, performance degradation, or ethical violations, ensuring ongoing safety.
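Continuous monitoring usually means recomputing a small set of statistics on fresh data and raising an alert when they move. One widely used drift statistic is the population stability index (PSI), which compares the distribution of a score or feature in production against a baseline. The sketch below is a minimal pure-Python version. The bin edges, the scores, and the alert threshold are hypothetical choices for illustration.

```python
import math

def bin_shares(values, edges, floor=1e-6):
    """Share of values in each bin defined by sorted `edges`.

    Empty bins are floored to a tiny share so the logarithm stays defined.
    """
    counts = [0] * (len(edges) + 1)
    for v in values:
        index = sum(v >= edge for edge in edges)  # number of edges at or below v
        counts[index] += 1
    return [max(c / len(values), floor) for c in counts]

def population_stability_index(baseline, current, edges):
    """PSI = sum over bins of (current - baseline) * ln(current / baseline)."""
    base = bin_shares(baseline, edges)
    curr = bin_shares(current, edges)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, curr))

# Hypothetical model scores at validation time and in production.
edges = [0.25, 0.5, 0.75]
baseline_scores = [0.1, 0.2, 0.3, 0.4, 0.55, 0.6, 0.8, 0.9]
production_scores = [0.3, 0.6, 0.65, 0.7, 0.8, 0.85, 0.9, 0.95]

psi = population_stability_index(baseline_scores, production_scores, edges)
ALERT_THRESHOLD = 0.25  # a commonly cited rule of thumb, not a standard
print("drift alert:", psi > ALERT_THRESHOLD)
```

The same pattern applies to fairness metrics: compute the metric per time window and alert when it crosses an agreed limit, rather than checking once before launch.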

11. Personalized AI Ethics Based on Use-Case Categories

Different sectors (healthcare, HR, law, finance, defense, education) will have customized ethics rules.

A one-size-fits-all framework won’t work; instead, domain-specific ethical guidelines will become mandatory for context-sensitive applications.

12. Stronger Focus on Accountability & Liability

Legal systems will define clear responsibility for unethical AI outcomes—whether the blame falls on developers, deployers, data providers, or vendors. AI liability laws will ensure that harm caused by autonomous or semi-autonomous systems is traceable and compensable.

13. Public Awareness and Digital Literacy Programs

As AI becomes more embedded in everyday life, public education about algorithmic fairness, misinformation, deepfake awareness, and privacy rights will grow.

Countries may introduce digital literacy as a core curriculum, empowering citizens to understand and challenge AI-driven decisions.

14. Ethical Safeguards for Autonomous AI Agents

With the rise of autonomous agents that execute tasks, make purchases, negotiate, or generate code, ethics will address concerns related to free will, autonomy, malicious use, and the delegation of human authority to machines.

Guardrails will ensure that autonomous agents cannot engage in harmful or manipulative behaviors.

15. AI Ethics Benchmarks & Standardization

The future will bring globally accepted ethical benchmarks for evaluating fairness, robustness, safety, and environmental sustainability. These standards will shape the entire AI industry and help organizations measure ethical maturity.
