Privacy, bias, and fairness are three foundational pillars of ethical data science, especially as AI systems become deeply integrated into societal functions such as hiring, lending, policing, healthcare, and digital services.

Privacy ensures that individuals maintain control over their personal information, preventing misuse, unauthorized surveillance, and exploitation.

As datasets grow larger and more detailed, ethical data management becomes essential for protecting sensitive information and preserving personal autonomy.

Bias, on the other hand, refers to systematic errors or unfair patterns in data or algorithms that disadvantage certain groups.

Because historical datasets often reflect social inequalities, models trained on them can unintentionally reproduce and even amplify discrimination.

Recognizing, detecting, and mitigating bias is therefore a critical responsibility in ethical data science.

Fairness ensures that algorithms treat all individuals and groups equitably, providing equal access, opportunity, and outcomes regardless of demographic background.

As AI-driven decisions increasingly affect human lives, fairness is essential for maintaining trust, social justice, and legal compliance.

Understanding Privacy, Bias, and Fairness in Responsible Data Science

Responsible data science goes beyond technical accuracy to address how data and algorithms impact people and society.

Understanding privacy, bias, and fairness helps ensure that data-driven systems are ethical, trustworthy, and aligned with legal and social responsibilities.

1. Privacy Protects Individuals from Surveillance and Misuse of Data

Privacy ensures that individuals have control over how their personal information is collected, stored, processed, and shared.

As organizations gather large datasets from apps, sensors, transactions, and online behavior, privacy safeguards prevent intrusive surveillance and profiling.

Without ethical privacy practices, sensitive information may be exploited by corporations, governments, or malicious actors, leading to identity theft, discrimination, or manipulation.

Privacy also supports legal compliance with regulations such as GDPR, CCPA, and India’s DPDP Act, which set strict rules on consent and data protection.

Ethical privacy practices encourage transparency, user control, and secure data handling. By prioritizing privacy, data scientists help preserve human dignity and autonomy in a digital age.

2. Ethical Privacy Requires Informed Consent and Data Minimization

Informed consent ensures that users understand what data is being collected, why it’s needed, and how it will be used, rather than being tricked by vague or complex policies.

Ethical data minimization means collecting only the information necessary for a specific purpose, avoiding over-collection that could increase risk.

This reduces exposure to breaches, prevents misuse, and limits the impact if data is compromised.
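Data minimization can be enforced mechanically when data is ingested. The sketch below is a minimal illustration, assuming a hypothetical allowlist that maps each declared purpose to the fields it needs. The purpose names, field names, and the `minimize` helper are illustrative, not part of any regulation or library.

```python
# Hypothetical allowlist: each declared purpose lists only the fields it needs.
ALLOWED_FIELDS = {
    "shipping": {"name", "street", "city", "postal_code"},
    "age_check": {"birth_year"},
}

def minimize(record: dict, purpose: str) -> dict:
    """Return a copy of `record` containing only the fields allowed for `purpose`."""
    if purpose not in ALLOWED_FIELDS:
        # Refuse processing for purposes that were never declared to the user.
        raise ValueError(f"undeclared purpose: {purpose!r}")
    allowed = ALLOWED_FIELDS[purpose]
    return {key: value for key, value in record.items() if key in allowed}

signup = {
    "name": "A. User",
    "street": "1 Example Road",
    "city": "Springfield",
    "postal_code": "12345",
    "birth_year": 1990,
    "phone_contacts": ["+1-555-0100"],  # over-collected: no declared purpose needs it
}

print(minimize(signup, "age_check"))  # {'birth_year': 1990}
```

Rejecting undeclared purposes, rather than silently passing data through, keeps processing tied to what users were actually told.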

Consent and minimization build trust between users and organizations, showing respect for personal boundaries.

This approach also supports better data governance by reducing storage burdens and focusing on high-quality, relevant information.

Ultimately, ethical consent practices reinforce transparency and fairness in data-driven operations.

3. Bias Distorts Algorithms and Reinforces Existing Inequalities

Bias arises when datasets contain unbalanced, incomplete, or historically discriminatory information, causing models to produce unfair results.

This can lead to disproportionate disadvantages for certain groups—for example, unfair loan rejections, biased hiring recommendations, or discriminatory policing predictions.

Bias often goes unnoticed because it is embedded in real-world data that reflects systemic inequalities.

Ethical awareness requires data scientists to critically examine the sources, structure, and assumptions behind datasets.

Fairness metrics, such as comparisons of approval rates or error rates across demographic groups, help detect hidden patterns that may harm marginalized communities.
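As a concrete example of such a metric, the sketch below computes each group's selection rate (the share of positive decisions) and the ratio of the lowest rate to the highest, often called the disparate impact ratio. The decisions are toy values, the helper names are hypothetical, and the 0.8 threshold mirrors the commonly cited "four-fifths" rule of thumb rather than a universal legal test.

```python
from collections import defaultdict

def selection_rates(decisions, groups):
    """Share of positive decisions (1 = approved) for each group."""
    positives = defaultdict(int)
    totals = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        positives[group] += decision
    return {group: positives[group] / totals[group] for group in totals}

def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    # If no group was selected at all, treat the groups as equal (ratio 1.0).
    return min(rates.values()) / highest if highest else 1.0

# Toy decisions for two groups of five applicants each (illustrative only).
decisions = [1, 1, 1, 0, 1, 0, 1, 0, 0, 0]
groups = ["A"] * 5 + ["B"] * 5

rates = selection_rates(decisions, groups)
ratio = disparate_impact_ratio(rates)
print(rates)            # {'A': 0.8, 'B': 0.2}
print(round(ratio, 2))  # 0.25
if ratio < 0.8:
    print("Selection rates differ sharply across groups; investigate.")
```

A low ratio does not prove discrimination on its own, but it marks where a closer look at the data and features is needed.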

By addressing bias at every stage, organizations prevent the replication of social injustices. Bias mitigation is therefore essential for building trustworthy and equitable AI systems.

4. Bias Can Be Introduced at Multiple Stages of the Data Pipeline

Bias does not only originate in datasets—it can appear during data collection, labeling, feature engineering, model selection, or even interpretation of results.

Collection bias arises when certain groups are underrepresented; labeling bias occurs when humans apply subjective judgments; and algorithmic bias emerges from optimization choices, such as maximizing overall accuracy, that can favor the majority group at the expense of smaller ones.
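Collection bias can often be spotted before any model is trained by comparing each group's share of the dataset with its share of the population the system will serve. The sketch below assumes hypothetical reference shares and flags any group whose dataset share falls below a chosen fraction of its reference share; the numbers, the 0.8 tolerance, and the helper name are all illustrative.

```python
from collections import Counter

def underrepresented_groups(groups, reference_shares, tolerance=0.8):
    """Return groups whose dataset share is below tolerance * reference share."""
    counts = Counter(groups)
    n = len(groups)
    flagged = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / n
        if observed < tolerance * expected:
            # Report (observed share, expected share) for each flagged group.
            flagged[group] = (round(observed, 3), expected)
    return flagged

# Illustrative sample: 90 records from group X, 10 from group Y.
sample = ["X"] * 90 + ["Y"] * 10
# Hypothetical shares of the population the system is meant to serve.
population = {"X": 0.6, "Y": 0.4}

print(underrepresented_groups(sample, population))  # {'Y': (0.1, 0.4)}
```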

Understanding these sources helps data scientists pinpoint where issues originate.

Ethical frameworks encourage testing models across demographic groups, examining imbalanced outcomes, and adjusting methodologies accordingly.
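One methodological adjustment for imbalanced outcomes is reweighing, a pre-processing technique from the fairness literature that weights each training example by P(group) × P(label) / P(group, label), so that the weighted positive-label rate is the same in every group. The sketch below assumes binary labels and a single group attribute; the data and function name are illustrative.

```python
from collections import Counter

def reweighing_weights(groups, labels):
    """Weight each example by P(group) * P(label) / P(group, label).

    After weighting, the share of positive labels is identical across groups,
    so label frequencies no longer encode group membership.
    """
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] / n) * (label_counts[y] / n) / (joint_counts[(g, y)] / n)
        for g, y in zip(groups, labels)
    ]

# Toy data: group A has mostly positive labels, group B mostly negative ones.
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
labels = [1, 1, 1, 0, 1, 0, 0, 0]

weights = reweighing_weights(groups, labels)
print([round(w, 2) for w in weights])
# [0.67, 0.67, 0.67, 2.0, 2.0, 0.67, 0.67, 0.67]
```

The resulting weights can typically be passed to any learner that accepts per-sample weights, for example the `sample_weight` argument that many scikit-learn estimators accept in `fit`.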

Recognizing that bias can appear anywhere in the pipeline empowers teams to build safer, more inclusive systems. This perspective promotes long-term vigilance and continuous ethical improvement.

5. Fairness Ensures Equal Treatment and Equal Access to Opportunities

Fairness in data science ensures that AI systems do not favor or disadvantage individuals based on attributes such as race, gender, age, income, or location.

Ethical fairness examines whether outcomes, predictions, and recommendations are equitable across all groups.

This involves evaluating error rates, predictive accuracy, and the distribution of benefits or risks among different populations.
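The sketch below, using made-up labels and a hypothetical `group_error_rates` helper, shows why error rates should be broken down by type and by group: both groups get the same accuracy, but one absorbs all the false positives and the other all the false negatives.

```python
def group_error_rates(y_true, y_pred, groups):
    """Accuracy, false-positive rate and false-negative rate for each group."""
    report = {}
    for group in sorted(set(groups)):
        pairs = [(t, p) for t, p, g in zip(y_true, y_pred, groups) if g == group]
        tp = sum(1 for t, p in pairs if t == 1 and p == 1)
        tn = sum(1 for t, p in pairs if t == 0 and p == 0)
        fp = sum(1 for t, p in pairs if t == 0 and p == 1)
        fn = sum(1 for t, p in pairs if t == 1 and p == 0)
        report[group] = {
            "accuracy": (tp + tn) / len(pairs),
            # NaN when a group has no actual negatives / positives.
            "fpr": fp / (fp + tn) if fp + tn else float("nan"),
            "fnr": fn / (fn + tp) if fn + tp else float("nan"),
        }
    return report

# Made-up labels: 1 = positive outcome, 0 = negative.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 0, 1, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

for group, metrics in group_error_rates(y_true, y_pred, groups).items():
    print(group, metrics)
# A {'accuracy': 0.75, 'fpr': 0.5, 'fnr': 0.0}
# B {'accuracy': 0.75, 'fpr': 0.0, 'fnr': 0.5}
```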

Fairness also requires understanding cultural and contextual differences, ensuring that models serve diverse communities with sensitivity.

By prioritizing fairness, organizations create AI systems that improve societal equity rather than reinforcing discrimination.

Ultimately, fairness is a moral, social, and legal requirement for responsible data science.

6. Fairness Is Context-Dependent and Requires Careful Trade-Offs

Fairness is not a one-size-fits-all concept—the appropriate fairness metric depends on the use case, societal context, and potential consequences of decision errors.

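For instance, job screening may prioritize similar selection rates across groups (demographic parity), while healthcare diagnostics may prioritize equal false-negative rates, so that no group's conditions are missed more often than another's. Equal false-negative rates are equivalent to "equal opportunity," which requires equal true-positive rates across groups.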
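Stated formally, with Ŷ the model's decision, Y the true outcome, and A the group attribute, three widely used criteria are as follows (the notation is a common convention, not taken from this article):

```latex
\begin{aligned}
\text{Demographic parity:} \quad & P(\hat{Y}=1 \mid A=a) = P(\hat{Y}=1 \mid A=b) \\
\text{Equal opportunity:} \quad & P(\hat{Y}=1 \mid Y=1, A=a) = P(\hat{Y}=1 \mid Y=1, A=b) \\
\text{Equal false-negative rates:} \quad & P(\hat{Y}=0 \mid Y=1, A=a) = P(\hat{Y}=0 \mid Y=1, A=b)
\end{aligned}
```

Because P(Ŷ=0 | Y=1, A) = 1 − P(Ŷ=1 | Y=1, A), equal opportunity and equal false-negative rates are the same constraint. Demographic parity, by contrast, ignores Y: when groups have different base rates P(Y=1 | A), even a perfect classifier satisfies equal opportunity while violating demographic parity, which is one source of the trade-offs discussed here.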

Ethical decision-making involves balancing these fairness goals with accuracy, business needs, and user expectations.

Trade-offs must be made transparently and documented clearly to avoid hidden biases.

Engaging diverse stakeholders helps ensure that fairness aligns with community values. This dynamic understanding of fairness fosters responsible, human-centered AI design.

7. Privacy, Bias, and Fairness Are Interconnected Ethical Pillars

These three principles are deeply connected—protecting privacy reduces the risk of biased profiling, mitigating bias supports fairness, and fairness ensures that privacy protections benefit all groups.

For example, improper handling of sensitive data may introduce bias, and a lack of fairness may lead to discriminatory privacy practices.

Ethical frameworks encourage data scientists to consider these principles together rather than in isolation.

This holistic approach helps build robust systems that are safe, transparent, and trustworthy.

When integrated properly, these pillars protect individuals’ rights while enabling responsible innovation. Addressing them collectively strengthens the overall integrity of data science.

8. Privacy Enhances User Trust and Supports Ethical AI Adoption

Strong privacy practices help organizations build long-term trust with users, ensuring they feel safe sharing their data.

When users trust that their information will not be misused, manipulated, or sold without consent, they are more likely to participate in digital services.

Privacy also strengthens brand loyalty and reduces backlash caused by scandals such as unauthorized data sharing or breaches.

Companies that demonstrate strong privacy values often avoid costly legal consequences and maintain competitive advantage.

Ethical privacy practices include transparent communication, user control, data encryption, and secure storage.

Ultimately, privacy-driven trust accelerates responsible AI adoption and supports a healthier digital ecosystem.

9. Bias Analysis Encourages Better Data Quality and Model Reliability

Addressing bias improves the quality, robustness, and reliability of machine learning models.

When datasets are balanced, diverse, and accurately representative, models perform better across real-world scenarios.

This reduces errors, improves generalization, and prevents harmful edge-case failures.

Ethical bias evaluation encourages data scientists to use fairness metrics, stratified sampling, and bias-aware algorithms.

These practices also reveal hidden assumptions or flawed data-collection methods that undermine accuracy.
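Stratified sampling keeps small groups from vanishing from a training or evaluation split by chance. Below is a minimal standard-library sketch, assuming records are (group, label) tuples and using a hypothetical `stratified_split` helper; in practice, scikit-learn's `train_test_split` offers the same behavior through its `stratify` argument.

```python
import random
from collections import defaultdict

def stratified_split(records, key, test_fraction=0.2, seed=0):
    """Split records so each stratum appears in test in the same proportion."""
    rng = random.Random(seed)
    strata = defaultdict(list)
    for record in records:
        strata[key(record)].append(record)
    train, test = [], []
    for members in strata.values():
        rng.shuffle(members)  # shuffles the per-stratum copy, not `records`
        n_test = round(len(members) * test_fraction)
        test.extend(members[:n_test])
        train.extend(members[n_test:])
    return train, test

# Toy records: (group, label); group "Y" is a small minority of 10 out of 100.
records = [("X", i % 2) for i in range(90)] + [("Y", i % 2) for i in range(10)]

train, test = stratified_split(records, key=lambda record: record[0])
print(sum(1 for group, _ in test if group == "Y"), len(test))  # 2 20
```

A purely random 20% split could, by chance, include few or no "Y" records, leaving the minority group's performance unmeasured.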

By reducing bias, organizations avoid reputational damage and legal scrutiny linked to discriminatory outcomes.

Ultimately, bias mitigation strengthens both ethical and technical performance.

10. Fairness Improves Social Outcomes and Supports Inclusive Innovation

Fairness ensures that AI benefits society equally rather than creating or strengthening inequalities. Inclusive models can improve access to healthcare, education, finance, and public services for underserved groups.

For example, fair algorithms in credit scoring can open opportunities for individuals with limited financial history but strong repayment behavior.

Fairness-driven innovation also helps companies reach wider audiences and operate ethically across cultures and markets.

As AI becomes global, fairness guidelines prevent unintentional discrimination caused by cultural biases.

This approach supports social well-being, protects vulnerable communities, and fosters sustainable AI adoption worldwide.

11. Privacy, Bias, and Fairness Reduce Regulatory and Compliance Risks

Governments worldwide are implementing strict rules for AI transparency, data protection, and algorithmic fairness.

Ethical implementation of privacy, bias mitigation, and fairness helps organizations comply with laws such as GDPR, the EU AI Act, India’s DPDP Act, and US state-level AI transparency policies.

Failure to address these principles can lead to lawsuits, fines, product bans, and reputational damage.

Ethical compliance also prepares teams for audits, documentation requirements, and external evaluations.

Integrating these principles into workflows ensures smoother regulatory approval for AI systems.

Ultimately, ethical adherence protects organizations from legal uncertainties and operational disruptions.

12. Fairness Requires Continuous Monitoring, Not One-Time Evaluation

Fairness is not guaranteed permanently—models can become unfair over time due to shifts in population, behavioral patterns, or data collection changes.

Ethical fairness therefore requires ongoing tracking, periodic auditing, and recalibration of models.

Monitoring ensures that error rates remain equitable and that no demographic groups are disproportionately affected.

This is especially important for high-impact decisions in hiring, policing, healthcare, and lending.

Continuous fairness checks also help detect model drift, unintended bias, or changes in input distributions.
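A continuous check can be as simple as recomputing per-group false-negative rates on each new window of predictions whose outcomes are now known, and alerting when the gap between groups exceeds a tolerance. The sketch assumes hypothetical weekly windows, a single binary outcome, and an illustrative 0.1 tolerance; real monitoring would also need enough samples per group for the rates to be stable.

```python
import math

def false_negative_rate(y_true, y_pred):
    """Share of actual positives the model missed (NaN if there are none)."""
    missed = [pred == 0 for true, pred in zip(y_true, y_pred) if true == 1]
    return sum(missed) / len(missed) if missed else math.nan

def check_window(window, max_gap=0.1):
    """Return (alert, per-group FNRs) for one monitoring window.

    `window` maps each group to (y_true, y_pred) for predictions whose real
    outcomes have since been observed. Groups with no positives are skipped
    when computing the gap because their FNR is undefined.
    """
    rates = {g: false_negative_rate(t, p) for g, (t, p) in window.items()}
    known = [r for r in rates.values() if not math.isnan(r)]
    gap = max(known) - min(known) if len(known) >= 2 else 0.0
    return gap > max_gap, rates

# Hypothetical windows of (true outcomes, predictions) per group.
week_1 = {"A": ([1, 1, 1, 1, 0], [1, 1, 1, 0, 0]),
          "B": ([1, 1, 1, 1, 0], [1, 1, 1, 0, 0])}
week_9 = {"A": ([1, 1, 1, 1, 0], [1, 1, 1, 0, 0]),
          "B": ([1, 1, 1, 1, 0], [1, 0, 0, 0, 0])}

for name, window in [("week 1", week_1), ("week 9", week_9)]:
    alert, rates = check_window(window)
    print(name, rates, "ALERT" if alert else "ok")
# week 1 {'A': 0.25, 'B': 0.25} ok
# week 9 {'A': 0.25, 'B': 0.75} ALERT
```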

Ethical vigilance ensures long-term stability, accuracy, and fairness in real-world environments.

Real-World Examples

1. Google Photos Mislabeling Case (Bias)

Google Photos mislabeled photos of Black individuals as “gorillas,” an error widely attributed to training data that did not adequately represent darker-skinned faces.

This incident highlighted how racial bias in datasets can cause highly offensive and harmful outcomes.

It pushed companies globally to re-evaluate dataset diversity, labeling procedures, and fairness standards.

2. Cambridge Analytica Scandal (Privacy & Manipulation)

Cambridge Analytica harvested millions of Facebook users’ data without consent and used it to influence political campaigns.

This raised worldwide concerns about digital privacy, surveillance, and manipulation. The scandal broke shortly before GDPR took effect in May 2018 and added momentum to its enforcement and to further privacy reforms worldwide.

3. Amazon’s Biased Hiring Algorithm (Bias & Fairness)

Amazon developed an AI hiring tool that favored male candidates because it learned from past hiring data dominated by men.

The algorithm automatically downgraded résumés containing the word “women’s.” Amazon scrapped the tool, illustrating how historical bias creates discriminatory outcomes.

4. Apple Card Gender Discrimination Case (Fairness)

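Users reported that Apple Card offered significantly lower credit limits to women than to men with similar financial profiles.

The complaints triggered an investigation by the New York State Department of Financial Services into Goldman Sachs, the card's issuer. The regulator reported in 2021 that it found no unlawful discrimination, but the case exposed how opaque credit-scoring algorithms make fairness difficult to verify and explain.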

5. Healthcare Risk Prediction Bias (Fairness & Bias)

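A widely adopted health risk model in US hospitals underestimated the needs of Black patients because it used healthcare spending as a proxy for health needs.

Because less money had historically been spent on Black patients with the same level of need, often reflecting unequal access to care, the model rated them as healthier than equally sick white patients and flagged fewer of them for additional care.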

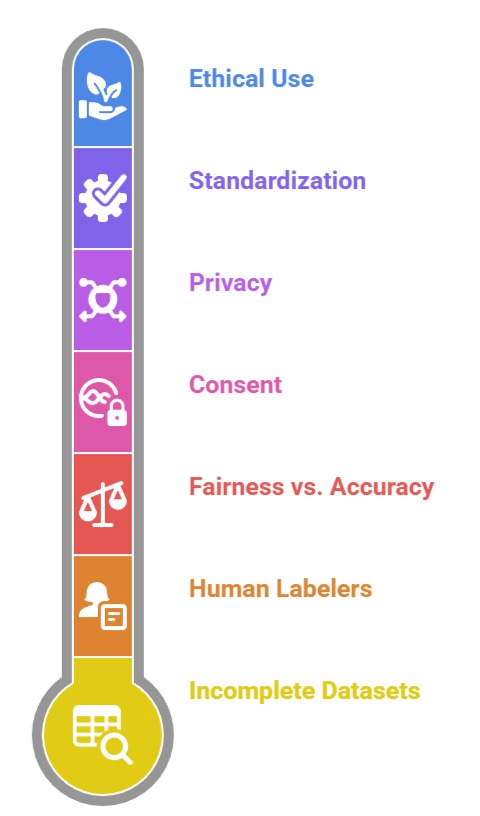

Practical Challenges

1. Dealing with Incomplete or Skewed Datasets: Many real-world datasets contain missing values, imbalance across demographic groups, or traces of historical discrimination. Identifying these biases takes time, and fixing them requires specialized techniques such as re-sampling, reweighting, or fairness-aware modeling.

2. Balancing Fairness with Accuracy and Business Goals: Improving fairness can reduce accuracy or shift optimization trade-offs. Organizations sometimes struggle to justify fairness decisions when they conflict with business objectives, creating ethical dilemmas for data teams.

3. Difficulty Obtaining Consent in Complex Systems: Users often do not read or understand privacy policies, making “informed consent” difficult to achieve. Large-scale systems like IoT devices or apps collect data continuously, complicating transparency.

4. Lack of Standardized Fairness Guidelines Across Industries: Different industries need different fairness metrics—what works in finance may not work in healthcare. This lack of universal fairness standards creates confusion about which metrics are appropriate.

5. Hidden Bias Introduced by Human Labelers: Datasets labeled by humans may contain subjective judgments, stereotypes, or cultural biases. These human biases silently propagate into the models unless carefully monitored.

6. Ensuring Ethical Use After Deployment: Even if a model is built ethically, it may be misused by others after deployment—for surveillance, profiling, or manipulation. Controlling downstream use remains a difficult ethical challenge.

7. Technical Complexity of Ensuring Privacy: Advanced privacy techniques—such as differential privacy, encryption, and anonymization—require specialized expertise. Improper implementation may give a false sense of security while still exposing private data.
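To make the differential privacy item concrete, the sketch below applies the Laplace mechanism to a counting query: because adding or removing one person changes a count by at most 1, adding Laplace noise with scale 1/ε makes the released count ε-differentially private. The helper names and the toy ages are illustrative assumptions.

```python
import random

def laplace_noise(scale, rng):
    """Laplace(0, scale) sample: the difference of two exponentials with mean `scale`."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)

def private_count(values, predicate, epsilon, rng=None):
    """Release a count with epsilon-differential privacy via the Laplace mechanism.

    Adding or removing one person's record changes a count by at most 1
    (sensitivity 1), so Laplace noise with scale 1 / epsilon is sufficient.
    Smaller epsilon means more noise and stronger privacy.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if rng is None:
        rng = random.Random()
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1 / epsilon, rng)

# Toy ages; the query asks how many people are 65 or older (true answer: 2).
ages = [23, 35, 41, 52, 67, 29, 38, 71]
noisy = private_count(ages, lambda age: age >= 65, epsilon=0.5, rng=random.Random(7))
print(round(noisy, 2))
```

This is a teaching sketch, and it also illustrates the pitfall described in item 7: naive floating-point noise sampling is known to leak information, and every released query spends part of the privacy budget. Production systems should rely on vetted libraries such as OpenDP or Google's differential-privacy library.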