Audit Trails and Model Monitoring for Responsible AI

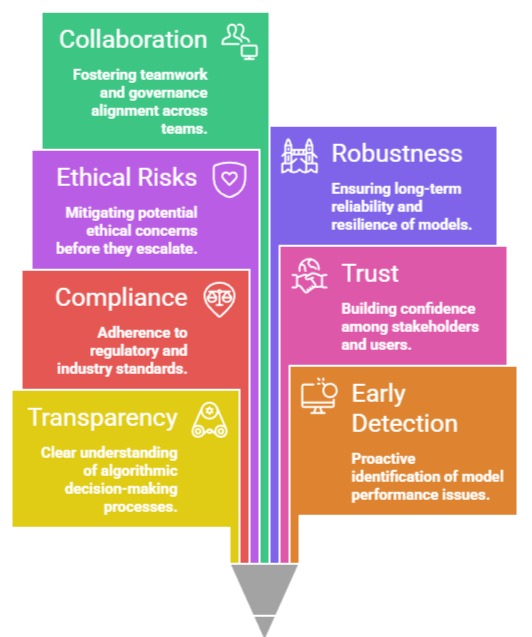

1. Ensures Transparency in Algorithmic Decision-Making

Auditability provides a clear documentation trail that allows organizations to see how models reached specific decisions, which makes it possible to verify whether outcomes align with ethical and legal expectations.

It enables teams to identify which variables influenced predictions and which data sources were used.

This transparency is critical when working in domains like finance or healthcare where decisions have direct impacts on lives.

It also allows regulators to review processes without exposing sensitive intellectual property.

Ultimately, transparency reinforces user trust, reduces the risk of hidden biases, and ensures that models operate according to intended policies.
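One way to picture such a documentation trail is a structured record written for every prediction. The following is a minimal sketch, not a standard schema: the field names, the `build_audit_record` and `top_influences` helpers, the model name, and the attribution scores are all hypothetical, and the attributions are assumed to come from whatever explainability tool the team already uses.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_audit_record(model_version, features, prediction, data_sources, attributions):
    """Return one JSON-serializable audit entry for a single prediction (hypothetical schema)."""
    # Hash the canonical (sorted-key) feature payload so the record can be
    # matched to its exact input later.
    payload = json.dumps(features, sort_keys=True).encode("utf-8")
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "input_hash": hashlib.sha256(payload).hexdigest(),
        "features": features,
        "prediction": prediction,
        "data_sources": data_sources,
        # Per-feature influence scores, assumed to be supplied by an explainer.
        "attributions": attributions,
    }


def top_influences(record, k=2):
    """List the k features with the largest absolute attribution."""
    ranked = sorted(record["attributions"].items(), key=lambda kv: abs(kv[1]), reverse=True)
    return [name for name, _ in ranked[:k]]


# Illustrative values only; none of these come from a real system.
record = build_audit_record(
    model_version="credit-risk-1.4.0",
    features={"income": 52000, "debt_ratio": 0.41, "tenure_months": 18},
    prediction="declined",
    data_sources=["applications_db", "bureau_feed"],
    attributions={"income": -0.12, "debt_ratio": 0.57, "tenure_months": -0.05},
)
print(json.dumps(record, indent=2))
print(top_influences(record))  # ['debt_ratio', 'income']
```

A record like this answers the two questions raised above: which variables influenced the prediction and which data sources fed it. Storing a hash of the input alongside the model version makes it possible to reproduce a disputed decision later.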

2. Enables Early Detection of Model Drift and Performance Degradation

Model monitoring continuously tracks model behavior to detect any drop in accuracy, precision, or fairness caused by changes in real-world data.

Drift may emerge due to societal shifts, market changes, seasonality, or evolving user behavior, leading the model to produce unreliable or biased outcomes.

Monitoring systems alert data scientists before the drift significantly impacts service quality.

This early detection helps avoid harmful decisions, financial losses, or reputational damage.

By quickly triggering retraining pipelines or model updates, organizations maintain operational stability and ethical performance.
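As an illustration of how a drift check might work, the sketch below computes the Population Stability Index (PSI) between a baseline sample and a recent sample of one numeric feature. PSI is only one of several possible drift metrics and is used here as an assumption, not as the method this text prescribes. The 0.2 alert threshold is a commonly quoted rule of thumb, and the function names are hypothetical.

```python
import math


def population_stability_index(baseline, current, bins=10):
    """PSI between two samples of one numeric feature, using equal-width bins."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the baseline range land in the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        eps = 1e-6  # floor to avoid log(0) and division by zero for empty bins
        return [max(c / len(values), eps) for c in counts]

    p = proportions(baseline)
    q = proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def drift_detected(baseline, current, threshold=0.2):
    """Flag drift when PSI exceeds the (assumed) threshold."""
    return population_stability_index(baseline, current) > threshold


baseline = [i / 100 for i in range(100)]         # roughly uniform on [0, 1)
shifted = [0.5 + i / 200 for i in range(100)]    # mass moved to the upper half

print(drift_detected(baseline, baseline))  # False
print(drift_detected(baseline, shifted))   # True
```

In practice a check like this would run on a schedule for each monitored feature and for the model's output scores, and a positive result would raise an alert or start a retraining job.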

3. Supports Compliance with Regulations and Industry Standards

Audit logs and monitoring reports help organizations meet legal obligations related to fairness, explainability, and risk mitigation.

Many emerging laws require organizations to demonstrate how AI makes decisions and maintain detailed records for audits.

Proper auditability ensures traceability across the entire lifecycle: data collection, preprocessing, model training, validation, deployment, and updates.

This lowers the risk of regulatory penalties and makes compliance reviews smoother.

It also helps companies adhere to internal ethics policies, certification frameworks, and quality assurance standards.
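Lifecycle traceability is easier to defend in an audit when the log itself is tamper-evident. The sketch below assumes one simple approach, a hash chain in which each entry stores the hash of the previous one, so any later change to an earlier entry breaks verification. The stage names, actors, and helper functions are hypothetical and are not tied to any particular regulation or tool.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body):
    """SHA-256 over the canonical JSON form of an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_event(trail, stage, actor, details):
    """Append a lifecycle event that is linked to the previous entry by hash."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS_HASH
    body = {"stage": stage, "actor": actor, "details": details, "prev_hash": prev_hash}
    trail.append({**body, "hash": _digest(body)})
    return trail


def verify_trail(trail):
    """Return True only if every entry is unmodified and correctly linked."""
    prev_hash = GENESIS_HASH
    for entry in trail:
        body = {k: entry[k] for k in ("stage", "actor", "details", "prev_hash")}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


# Illustrative lifecycle; all values are made up.
trail = []
append_event(trail, "data_collection", "data-eng", {"rows": 120000})
append_event(trail, "training", "ml-team", {"model_version": "1.4.0"})
append_event(trail, "deployment", "release-manager", {"approved": True})

print(verify_trail(trail))         # True
trail[0]["details"]["rows"] = 999  # simulate tampering with an early entry
print(verify_trail(trail))         # False
```

Recording the actor at each stage also gives reviewers a direct answer to who performed or approved each step.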

4. Builds Trust with Stakeholders and End Users

Transparent monitoring and auditing practices show users that the organization is committed to fairness and accountability.

Stakeholders—including customers, employees, and regulators—gain reassurance that automated systems are not being used irresponsibly.

Documented evidence of monitoring increases confidence in the system’s reliability and ethical foundation.

This helps mitigate fears of “black box” decision-making.

Over time, clear communication backed by audit data becomes a competitive advantage as trust becomes a key differentiator for companies using AI.

5. Helps Identify Ethical Risks Before They Escalate

Monitoring tools detect inequities, biases, or unfair outcomes in real time, preventing discriminatory impact from spreading across large populations.

Issues such as unequal rejection rates, gender bias, or unfair prioritization often begin subtly and worsen without intervention.

Audit logs act as evidence to investigate root causes, from data imbalance to flawed feature engineering.

Identifying risks early prevents reputational damage and avoids user harm, lawsuits, or non-compliance.

This creates a proactive ethical system instead of a reactive one.
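A check for unequal rejection rates can be sketched in a few lines. The example below computes the approval rate per group and the ratio of the lowest to the highest rate, often called the disparate impact ratio. The 0.8 cutoff follows the widely cited "four-fifths" rule of thumb and is an assumption here, as are the group labels, the data, and the function names.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, outcome in decisions:
        totals[group] += 1
        if outcome:
            approved[group] += 1
    return {g: approved[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Lowest group rate divided by highest group rate (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Made-up decisions: group A approved 8 of 10 times, group B 5 of 10 times.
decisions = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates)
print(ratio)
if ratio < 0.8:  # assumed threshold
    print("Fairness alert: review decisions for the lowest-rate group")
```

Running this over a sliding window of recent decisions, rather than once, is what turns it from an audit into monitoring. A single ratio is a coarse signal, so a flagged result is a prompt for investigation, not proof of discrimination.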

6. Improves Model Robustness and Long-Term Reliability

Auditability provides insights into where models fail, why they fail, and which scenarios cause errors.

Continuous monitoring reveals patterns of misprediction or vulnerabilities such as adversarial inputs.

These insights guide improvements in feature selection, model architecture, or data engineering.

Over time, models become more adaptable, consistent, and resilient.

Continuous feedback loops allow organizations to update models with better training data and more reliable algorithms, ensuring that systems remain effective throughout their lifecycle.

7. Facilitates Cross-Team Collaboration and Governance Alignment

Auditability creates shared documentation and monitoring dashboards that different departments—legal, compliance, engineering, risk, operations—can understand.

This ensures alignment across technical and non-technical teams regarding how models should behave.

Standardized audit logs and monitoring frameworks help organizations implement uniform governance rules.

This cross-functional visibility helps reduce misunderstandings, streamline decision-making, and ensure that ethical principles are integrated throughout model development and deployment.

Importance of Auditability and Monitoring

1. Prevents Ethical Failures and Public Harm

Robust audit systems allow organizations to catch unfair or harmful outcomes before they affect large numbers of users.

For example, if a hiring algorithm starts rejecting candidates from a minority group disproportionately, continuous monitoring can flag the issue early.

This prevents discrimination from becoming systemic and protects vulnerable groups from inequitable treatment. Ethical prevention is more cost-effective than damage control after a crisis.

2. Strengthens Organizational Accountability Frameworks

With clear audit trails, it becomes easier to identify who built, approved, and deployed a model, ensuring accountability across the AI lifecycle.

This clarity prevents blame-shifting during failures and promotes responsible development practices.

It also encourages leadership to prioritize fairness and governance as part of their core responsibilities.

3. Enhances Decision Interpretability for End Users

Companies can use audit data to provide understandable explanations to individuals affected by automated decisions.

This supports user rights, such as the right to an explanation of automated decisions that is commonly associated with the GDPR. Transparent reporting also helps reduce user frustration and supports ethical communication.

4. Minimizes Operational and Legal Risks

Monitoring reduces legal exposure arising from biased or inaccurate outcomes.

Early warnings can help companies adjust models before regulators intervene.

This reduces penalties, service disruptions, or lawsuits from individuals citing discriminatory outcomes.

5. Facilitates Responsible Innovation Rather Than Restrictive Caution

Organizations with strong monitoring feel more confident deploying innovative AI systems because they know they have safeguards.

This creates an environment where innovation and ethics coexist rather than conflict.

Challenges of Auditability & Model Monitoring

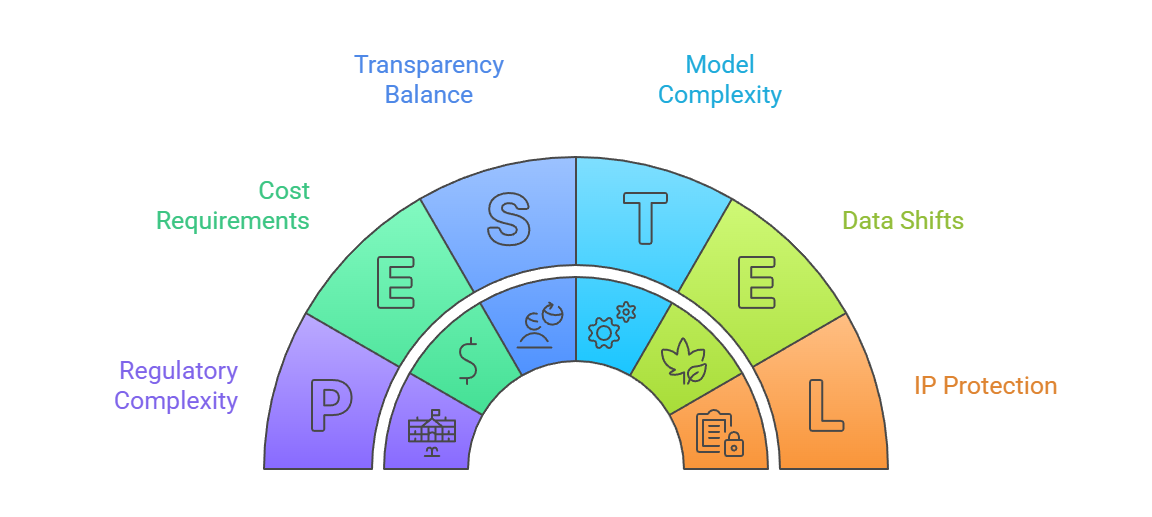

1. Complexity of Modern ML Models

Advanced deep learning and ensemble models are often opaque, making it difficult to generate clear and interpretable audit trails.

Unlike simple models, their internal decision structures are not easily understood, which limits transparency.

Organizations struggle to balance performance and interpretability.

This complexity increases the burden on monitoring systems and demands better explainable AI tools.

2. Cost and Resource Requirements

Setting up monitoring infrastructure, logging systems, and audit pipelines requires considerable investment.

Smaller organizations may lack the budget or expertise to implement full-scale systems.

Additionally, storing audit logs securely while complying with data privacy laws increases operational overhead.

3. Data Quality and Context Shifts

Monitoring can only detect issues; it cannot fix poor-quality or biased data upstream.

When user behavior changes rapidly, drift detection becomes harder to fine-tune.

Misconfigured alerts may lead to false positives or negatives, reducing trust in the monitoring system.
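One common way to cut false positives is to require several consecutive threshold breaches before an alert fires. The sketch below assumes that approach. The `DebouncedAlert` class, the threshold, and the readings are hypothetical. The trade-off is slower detection, so the number of required breaches has to be tuned against how quickly drift can cause harm.

```python
class DebouncedAlert:
    """Fire only after `required_breaches` consecutive readings exceed `threshold`."""

    def __init__(self, threshold, required_breaches=3):
        self.threshold = threshold
        self.required_breaches = required_breaches
        self._streak = 0  # current run of consecutive breaches

    def observe(self, value):
        """Record one metric reading and return True if the alert should fire."""
        if value > self.threshold:
            self._streak += 1
        else:
            self._streak = 0  # any healthy reading resets the run
        return self._streak >= self.required_breaches


# Made-up drift scores: one isolated spike, then a sustained breach.
alert = DebouncedAlert(threshold=0.2, required_breaches=3)
readings = [0.25, 0.10, 0.22, 0.30, 0.27, 0.05]
fired = [alert.observe(r) for r in readings]
print(fired)  # [False, False, False, False, True, False]
```

The isolated spike at the first reading is ignored, while the sustained run in readings three to five triggers the alert.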

4. Balancing Transparency and Intellectual Property

Companies struggle to provide transparency without exposing trade secrets or proprietary model details.

Regulators expect explainability, while businesses aim to protect competitive advantage.

Achieving both simultaneously is difficult and requires careful governance planning.

5. Regulatory Complexity Across Regions

Different countries impose different transparency requirements, making global compliance challenging.

Auditability standards across regions vary significantly, requiring organizations to adapt systems based on jurisdiction.
