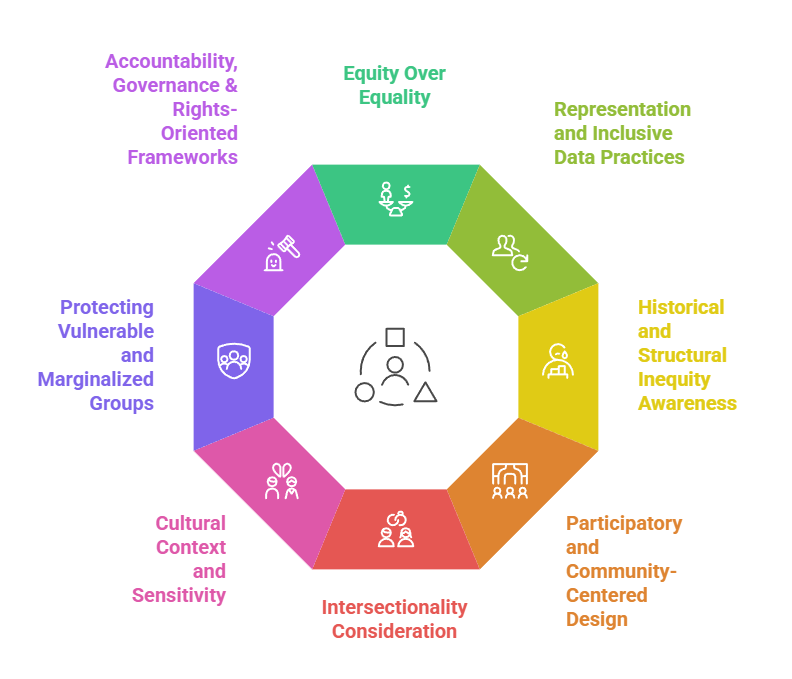

Equity and Social Justice Principles focus on ensuring that data-driven systems do not reinforce existing social inequalities or marginalize vulnerable groups.

In responsible data science, these principles require models and datasets to promote fair access, equal opportunity, and inclusive outcomes across diverse populations.

Ethical decision-making must actively consider historical disadvantage and societal impact, not just technical performance.

Principles of Equity & Social Justice in Data Science

1. Equity Over Equality

Equity means giving people what they need to achieve fair outcomes, rather than treating everyone the same.

In data science, this means adjusting models, decision thresholds, or training strategies to compensate for the disadvantages that some groups face.

Equality would apply identical rules to everyone, but this often amplifies historical unfairness.

Equity acknowledges the unequal starting points created by discrimination, poverty, colonization, or marginalization.

Ethical AI systems must identify these disparities and build compensatory mechanisms. This ensures the technology works fairly for all communities, not just majority groups.
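One way to picture a compensatory training strategy is sample reweighing: each training example gets the weight P(group) × P(label) / P(group, label), so that group membership and the favorable label look statistically independent to the learner. The sketch below is a minimal illustration under stated assumptions, not a recommended pipeline. The function name `reweighing_weights` and the toy data are hypothetical.

```python
from collections import Counter

def reweighing_weights(groups, labels):
    """Return one weight per sample: P(group) * P(label) / P(group, label)."""
    n = len(groups)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    weights = []
    for g, y in zip(groups, labels):
        expected = (group_counts[g] / n) * (label_counts[y] / n)  # share if independent
        observed = joint_counts[(g, y)] / n                       # share actually seen
        weights.append(expected / observed)
    return weights

# Hypothetical toy data: group "b" rarely receives the favorable label 1.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
labels = [1, 1, 1, 0, 1, 0, 0, 0]
weights = reweighing_weights(groups, labels)

for g, y, w in zip(groups, labels, weights):
    print(g, y, round(w, 3))
```

Rare combinations, such as a favorable label in group "b", receive weights above 1, and common combinations receive weights below 1. The weights can then be passed to any learner that accepts per-sample weights. Whether this is the right remedy depends on why the disparity exists in the first place.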

2. Representation and Inclusive Data Practices

This principle focuses on ensuring datasets accurately reflect all groups, especially minorities, people with disabilities, rural populations, and culturally diverse communities.

Inclusive data reduces errors and prevents systems from being biased toward majority populations. It requires deliberate efforts in data collection, annotation, and evaluation to ensure fairness.

Missing or underrepresented groups must be identified early through sampling audits.

Without fair representation in the data, ML models cannot deliver socially just outcomes. Proper representation also includes culturally meaningful context, not just numeric counts.
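A sampling audit can start as a simple comparison between each group's share of the dataset and its share of a reference population. The sketch below assumes such reference shares are available (for example from a census). The function name `representation_audit`, the 0.8 tolerance, and all figures are hypothetical choices for illustration.

```python
from collections import Counter

def representation_audit(sample_groups, reference_shares, tolerance=0.8):
    """Flag groups whose sample share falls below tolerance * reference share."""
    n = len(sample_groups)
    counts = Counter(sample_groups)
    report = {}
    for group, ref_share in reference_shares.items():
        sample_share = counts.get(group, 0) / n
        report[group] = {
            "sample_share": sample_share,
            "reference_share": ref_share,
            "underrepresented": sample_share < tolerance * ref_share,
        }
    return report

# Hypothetical figures for illustration only.
sample = ["urban"] * 85 + ["rural"] * 15
reference = {"urban": 0.65, "rural": 0.35}

audit = representation_audit(sample, reference)
flagged = [g for g, row in audit.items() if row["underrepresented"]]
print(flagged)
```

A count-based check like this only catches numeric gaps. It says nothing about whether the records for a group carry the cultural context mentioned above.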

3. Historical and Structural Inequity Awareness

AI systems must recognize that data often reflects decades of discrimination, colonial patterns, racial profiling, and social inequality.

Models trained on such data risk reinforcing these injustices unless corrective actions are taken.

This principle requires identifying systemic bias sources—such as biased policing data or unequal healthcare access—and adjusting models accordingly.

Ethical developers must analyze why disparities exist rather than treating them as objective patterns. This leads to more socially responsible predictions. Fair models must actively counteract, not reproduce, historical injustice.

4. Participatory and Community-Centered Design

Communities directly affected by AI systems must be included in the design, testing, and deployment stages.

This principle emphasizes consultation with marginalized groups to understand context-specific risks, cultural norms, and fairness expectations. Community involvement helps uncover biases that engineers may overlook.

It also builds trust, transparency, and legitimacy for the technology. Participatory design ensures that AI serves real human needs rather than institutional convenience.

Ethical systems must reflect the lived experiences of the people they impact.

5. Intersectionality Consideration

Individuals often belong to multiple identity categories—gender, race, caste, disability, age, income—which together shape their risk of discrimination.

Data science must evaluate how models perform across these intersections instead of looking at single factors.

Intersectionality helps reveal hidden biases affecting groups like Black women, Indigenous LGBTQ+ individuals, or low-income elderly populations.

Ethical AI must analyze multilayered identity impacts to prevent compounding harms.

Without intersectionality, fairness evaluations remain incomplete and may still disadvantage vulnerable subgroups.
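The gap between single-factor and intersectional evaluation can be shown with a small sketch. The toy records below are hypothetical and deliberately constructed so that accuracy looks identical when split by gender alone or by income alone, while two intersections perform far worse. The helper names `accuracy_by` and `rows` are illustrative assumptions.

```python
from collections import defaultdict

def accuracy_by(records, keys):
    """Accuracy for each combination of the given attribute keys."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for r in records:
        subgroup = tuple(r[k] for k in keys)
        totals[subgroup] += 1
        hits[subgroup] += int(r["y_true"] == r["y_pred"])
    return {sg: hits[sg] / totals[sg] for sg in totals}

def rows(gender, income, n_correct, n_wrong):
    """Build toy records with a given number of correct and wrong predictions."""
    base = {"gender": gender, "income": income, "y_true": 1}
    return [dict(base, y_pred=1)] * n_correct + [dict(base, y_pred=0)] * n_wrong

# Hypothetical data: errors are concentrated in two intersections.
records = (
    rows("F", "low", 4, 6) + rows("F", "high", 10, 0)
    + rows("M", "low", 10, 0) + rows("M", "high", 4, 6)
)

by_gender = accuracy_by(records, ["gender"])
by_income = accuracy_by(records, ["income"])
by_both = accuracy_by(records, ["gender", "income"])

print(by_gender)
print(by_income)
print(by_both)
```

Here every single-factor split reports the same accuracy, so an audit that stops there would find nothing. Only the combined split exposes the subgroups that the model serves poorly.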

6. Cultural Context and Sensitivity

AI systems must respect cultural differences, linguistic variations, social norms, and community-specific contexts.

Without cultural sensitivity, models may misinterpret language, gestures, or behavior from certain populations and make unfair predictions.

This principle demands regionalized data, multilingual models, and input from cultural experts.

Culturally aware design prevents harmful stereotypes, misclassification, and miscommunication.

It ensures AI systems do not impose dominant cultural norms as “default” or universal. Culture-aware data science supports global inclusiveness.

7. Protecting Vulnerable and Marginalized Groups

Vulnerable populations—such as refugees, children, minorities, rural groups, and low-income households—require additional protections.

AI systems must not exploit their data or expose them to harm through decisions that affect essential services.

Ethical data stewardship includes strict consent, secure storage, and careful limitations on data reuse.

Vulnerable groups often lack the power to challenge algorithmic decisions, making responsible design crucial.

This principle ensures AI does not deepen existing inequalities but instead shields those most at risk.

8. Accountability, Governance & Rights-Oriented Frameworks

Ethical AI requires oversight structures such as fairness audits, risk assessments, transparency reports, and bias documentation.

Accountability mechanisms ensure that equity isn’t optional but a formal responsibility.

Rights-based frameworks emphasize human dignity, non-discrimination, and legal protections across regions.

Algorithms influencing critical life decisions must be explainable, contestable, and regularly reviewed.

Governance ensures long-term social justice by maintaining ethical standards throughout the AI lifecycle.

It also supports cross-disciplinary collaboration between engineers, ethicists, policymakers, and civil society.

Practical Challenges in Implementing Equity & Social Justice

Implementing equity and social justice in data-driven systems is complex and extends far beyond technical model design. These challenges highlight the need to balance ethical responsibility, organizational constraints, and real-world social dynamics when building fair and inclusive AI solutions.

1. Lack of High-Quality, Diverse, and Inclusive Data

Many AI systems rely on datasets that disproportionately represent majority groups, urban populations, or digitally active users, while marginalizing rural communities, ethnic minorities, and low-income groups.

Without adequate representation, algorithms learn patterns that favor dominant groups and misinterpret others.

Collecting diverse data is often costly due to logistical barriers, language limitations, cultural differences, and legal restrictions.

Some communities may also distrust data collection due to historical misuse. As a result, developers struggle to balance inclusiveness with privacy and compliance.

This creates a foundational challenge in building equitable models.

2. Conflicts Between Model Accuracy and Equity Goals

Improving equity can reduce short-term accuracy or other performance metrics, which leads some organizations to resist equity measures.

For example, adjusting thresholds to reduce discrimination in loan models may slightly increase false positives or false negatives.
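The trade-off can be made concrete by measuring error rates at two thresholds. This is a minimal sketch with hypothetical scores and labels for a single applicant group, where label 1 means the loan was repaid. The function name `error_rates` is an illustrative assumption.

```python
def error_rates(scores, labels, threshold):
    """Return (false_positive_rate, false_negative_rate) at a score threshold."""
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y == 1)
    negatives = labels.count(0)
    positives = labels.count(1)
    return fp / negatives, fn / positives

# Hypothetical model scores and outcomes (1 = repaid, 0 = defaulted).
scores = [0.2, 0.35, 0.4, 0.45, 0.55, 0.6, 0.7, 0.9]
labels = [0, 0, 1, 0, 1, 0, 1, 1]

for threshold in (0.5, 0.4):
    fpr, fnr = error_rates(scores, labels, threshold)
    print(f"threshold={threshold}: FPR={fpr:.2f}, FNR={fnr:.2f}")
```

Lowering the threshold approves more creditworthy applicants (fewer false negatives) at the price of approving more applicants who default (more false positives). Which error matters more is a policy question, not one the code can answer.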

Businesses may prioritize efficiency, profit, or speed over socially just outcomes.

Teams must navigate trade-offs between technical optimization and ethical responsibility.

Many practitioners lack frameworks to balance these priorities, making equity seem like a “cost” rather than a societal obligation. This tension slows down the adoption of equity-driven designs.

3. Difficulty in Measuring Equity and Social Justice

Unlike accuracy or precision, equity does not have a single universal metric.

It requires analyzing fairness across multiple demographic and intersectional subgroups, which complicates evaluation.

Some forms of systemic inequality—such as caste bias, racial profiling, or educational disparity—cannot be fully captured through quantitative measures.

Intersectional fairness is even harder because sample sizes for many subgroups may be small.

Social justice also requires qualitative assessments, community insights, and contextual understanding. This makes equity measurement both technically and socially complex.
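The small-sample problem can be quantified with a confidence interval around each subgroup's rate. The sketch below uses the Wilson score interval, one common choice among several. The function name `wilson_interval` and the counts are hypothetical.

```python
import math

def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95% coverage)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half

# Same observed rate (80%), very different subgroup sizes.
small = wilson_interval(8, 10)
large = wilson_interval(800, 1000)

print(f"n=10:   {small[0]:.3f} to {small[1]:.3f}")
print(f"n=1000: {large[0]:.3f} to {large[1]:.3f}")
```

With only ten people in a subgroup, the interval is so wide that an apparent gap between groups may be noise, and an apparent absence of a gap proves little. This is one reason quantitative checks need to be paired with the qualitative evidence described above.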

4. Institutional, Political, and Cultural Resistance

Organizations may resist fairness and equity measures because they require transparency, increased accountability, and potential admission of existing biases.

Some sectors—like policing, credit scoring, or hiring—may oppose changes that reveal structural discrimination.

Political systems may resist equity-driven AI because it disrupts power hierarchies or exposes unfair policies.

Cultural beliefs may shape norms around gender, caste, or ethnicity, influencing decisions about fairness.

This resistance makes it challenging for data science teams to push equity reforms without organizational or political support.

5. Limited Community Engagement and Participation

Many AI systems are built by developers who have no lived experience with the communities affected by the algorithms.

Without consulting these communities, teams fail to understand cultural nuances, social vulnerabilities, or local challenges.

Lack of engagement leads to solutions that may seem “accurate” from a technical perspective but harmful in real-world contexts.

Communities may also feel excluded, powerless, or mistrustful of AI systems imposed on them.

Participatory design requires time, resources, and interdisciplinary collaboration, which many projects overlook. This creates a gap between technological design and social impact.

6. Embedded Historical and Structural Inequalities in Data

Data often encodes decades of discriminatory practices—racial profiling, caste segregation, gender inequality, colonization patterns, or socio-economic disparities.

Removing such embedded bias is extremely challenging because these patterns are subtle, systemic, and deeply intertwined with social structures.

Models built on such data risk legitimizing injustice as “objective” or “predictive.”

Correcting structural bias requires historical awareness, ethical judgment, and sometimes the removal of entire datasets.

Many organizations lack the expertise or willingness to address these deep-rooted inequities.

7. Lack of Explainability and Transparency in AI Models

Many powerful models—especially deep learning systems—operate as black boxes, making their decision-making difficult to interpret.

When models lack transparency, affected individuals cannot challenge or understand biased outcomes. Without explainability, equity violations remain hidden and difficult to prove.

Some fairness techniques are easier to apply and verify on interpretable models, which may not align with industry preferences for high-performance black-box models. Developers struggle to balance interpretability, accuracy, and fairness, especially in high-risk sectors like finance and healthcare.

8. Legal, Compliance, and Ethical Ambiguities

Laws around fairness, discrimination, and data rights vary widely between countries, and many regions lack clear guidance for algorithmic equity. Data scientists often face uncertainty about what is legally permissible or ethically acceptable.

Some fairness strategies—such as collecting sensitive demographic data for analysis—may conflict with privacy laws.

Legal ambiguity creates a risk-averse environment where organizations avoid equity interventions.

Without strong regulatory frameworks, fairness becomes optional and inconsistently applied across sectors.

9. Resource Limitations and Skill Gap

Implementing equity requires advanced expertise in ethics, fairness metrics, sociology, law, and machine learning.

Many teams lack the interdisciplinary skills needed to approach equity holistically.

Smaller organizations may not have the budget or tools for fairness audits, bias testing, or ethical oversight.

Training teams on equity principles is time-intensive and costly, causing delays. Equity-related practices are often deprioritized due to deadlines, budget constraints, and business pressure.

10. Unintended Consequences and Overcorrection Risk

Bias corrections can overcompensate and create new forms of unfairness.

For example, modifying decision thresholds to help disadvantaged groups may disadvantage others if the change is not carefully analyzed.

Overcorrection can also lead to political or public backlash, reducing trust in the system.

This challenge shows why equity is not simply a mathematical adjustment but a continuous, careful balancing act. Ethical oversight and iterative testing are necessary to avoid new inequities.
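A simple iterative test for overcorrection is to recompute each group's selection rate after every threshold change and watch the ratio between the lowest and highest rate. The sketch below is one crude check under stated assumptions, not a complete fairness evaluation. The scores, thresholds, and function names (`parity_ratio`, `decide`, `evaluate`) are hypothetical.

```python
def selection_rate(decisions):
    """Share of positive (1) decisions."""
    return sum(decisions) / len(decisions)

def parity_ratio(decisions_by_group):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    rates = {g: selection_rate(d) for g, d in decisions_by_group.items()}
    return min(rates.values()) / max(rates.values()), rates

def decide(scores, threshold):
    return [int(s >= threshold) for s in scores]

# Hypothetical model scores per group.
scores = {
    "group_a": [0.3, 0.5, 0.6, 0.7, 0.8],
    "group_b": [0.2, 0.4, 0.45, 0.55, 0.65],
}

def evaluate(thresholds):
    decisions = {g: decide(scores[g], thresholds[g]) for g in scores}
    return parity_ratio(decisions)

baseline = evaluate({"group_a": 0.5, "group_b": 0.5})
adjusted = evaluate({"group_a": 0.5, "group_b": 0.4})
overcorrected = evaluate({"group_a": 0.75, "group_b": 0.3})

for name, (ratio, rates) in [("baseline", baseline), ("adjusted", adjusted),
                             ("overcorrected", overcorrected)]:
    print(name, round(ratio, 2), rates)
```

In the last configuration the ratio is worse than at baseline, but the disadvantaged group has flipped. A single summary number hides that reversal, so the per-group rates need to be inspected at every iteration.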
