As data-driven systems increasingly influence decisions in hiring, healthcare, banking, policing, and education, detecting and mitigating bias has become a central pillar of ethical data science.

Bias can originate from unbalanced datasets, flawed labeling, poor feature engineering, or historical inequalities reflected in the data.

Without proper detection mechanisms, biased models can create harmful, unfair, and discriminatory outcomes at scale, often unnoticed until damage has been done.

Bias detection involves systematically evaluating models and datasets to identify disparities across demographic groups such as gender, race, age, geography, or socioeconomic status. Mitigation,

on the other hand, includes strategies that reduce, control, or eliminate unfair patterns using preprocessing adjustments, in-model constraints, or post-processing corrections.

Modern bias mitigation goes beyond technical fixes it includes ethical considerations, stakeholder involvement, impacts on affected groups, and alignment with fairness regulations.

Organizations increasingly adopt fairness dashboards, automated audits, interpretability tools, and continuous monitoring frameworks as part of Responsible AI governance.

Operational Bias Mitigation in Machine Learning Systems

Operational bias mitigation focuses on embedding fairness checks and controls throughout the entire machine learning lifecycle.

By systematically detecting, measuring, and correcting bias at data, model, and deployment stages, organizations can build reliable, ethical, and inclusive AI systems.

1. Dataset Bias Detection Techniques

Dataset bias detection includes analyzing the representation of different demographic groups to identify under-sampled or overrepresented segments.

Tools such as distribution charts, data profiling, and fairness dashboards help uncover imbalances early.

Label bias is examined through cross-group consistency checks to ensure that labels are not influenced by stereotypes or historical inequalities.

Data scientists must check for proxy variables like ZIP codes or language patterns that indirectly reflect sensitive attributes.

Statistical metrics such as disparate impact ratios help quantify representation fairness.

Regular audits ensure that datasets remain balanced even as data drifts. These techniques prevent unfairness before models are even trained.

2. Model-Level Bias Auditing & Fairness Metrics

Bias detection continues during model evaluation using fairness metrics such as demographic parity, equal opportunity, disparate impact, and predictive equality.

These metrics compare model behavior across demographic segments to identify disparities in error rates, false positives, or false negatives.

Tools like AIF360, FairLearn, and Google’s What-If Tool help visualize fairness issues and identify patterns hidden by aggregate accuracy scores.

Audits also examine feature importance to ensure sensitive proxies do not heavily influence predictions.

Continuous evaluation is essential because fairness can degrade when incoming data changes. Model auditing ensures equitable performance regardless of group identity.

3. Preprocessing Mitigation (Data Rebalancing & Cleaning)

Preprocessing mitigation involves modifying datasets before model training to reduce systematic bias.

Techniques include oversampling minority classes, undersampling majority classes, or using synthetic methods like SMOTE to create balanced datasets.

Fair representation learning methods transform data into protected attribute-neutral formats.

Data cleaning also ensures labels are free from human bias by using cross-validation, expert review, or de-biasing filters.

Removing or masking sensitive variables and their proxies helps prevent models from learning unfair correlations.

These preprocessing steps aim to create a fairer foundation for machine learning models.

4. In-Model Mitigation (Fairness-Aware Algorithms)

In-model mitigation integrates fairness constraints directly into the training process.

Algorithms can be modified to penalize discriminatory predictions or optimize for multiple fairness metrics alongside accuracy.

Techniques like adversarial debiasing train a secondary model to detect and remove bias while the main model learns predictions.

Regularization-based approaches discourage reliance on sensitive attributes or proxy variables.

Fair-loss functions ensure that error rates remain consistent across demographic groups.

These approaches make fairness an inherent part of the model rather than an afterthought applied post-training.

5. Post-Processing Mitigation (Adjusting Outputs)

Post-processing techniques adjust model predictions after training when direct modifications to data or algorithm are not possible.

Methods like equalized odds post-processing modify decision thresholds for specific groups to align performance metrics.

Reject option classification allows model decisions to be corrected manually when confidence is low and fairness risk is high.

Score calibration ensures consistent interpretation of probabilities across demographic segments.

Rule-based overrides can intervene when predictions produce disproportionate harm. These techniques help organizations fix unfair outcomes without retraining entire models.

6. Explainability & Interpretability as Bias Detection Tools

Explainability tools such as SHAP, LIME, and feature attribution methods play a critical role in uncovering hidden bias.

They help data scientists understand why a model makes certain decisions and whether those decisions rely on unfair or sensitive features.

Interpretability also improves transparency for stakeholders, enabling external review of potentially discriminatory logic.

When explanations show overreliance on a specific demographic indicator, corrective action can be taken.

Explainability acts as both a bias-diagnosis mechanism and a validation tool for fairness claims. Increasing interpretability is essential for regulatory compliance and user trust.

7. Continuous Monitoring & Human Oversight

Bias mitigation is not a one-time activity; it requires continuous monitoring as new data flows into production systems.

Real-time dashboards track fairness metrics to detect drift or emerging disparities.

Human oversight committees review outputs in high-stakes domains such as healthcare, finance, and hiring.

Feedback loops enable affected users to report unfair outcomes, helping improve model accountability.

Periodic re-training ensures fairness remains stable over time.

Continuous governance prevents slow, unnoticed increases in inequality caused by changes in population or data patterns.

8. Cross-Demographic Performance Evaluation

Evaluating model performance solely on overall accuracy hides disparities between demographic segments.

Cross-group analysis compares false positives, false negatives, precision, recall, and risk scores across different attributes like gender, age, and race.

This helps detect hidden disparities even when the model appears reliable in aggregate.

A fair system must ensure consistent performance regardless of a user’s background, and deviations signal underlying bias.

Cross-performance evaluation also reveals intersectional bias (e.g., impacts on “Black women” vs “women” overall), which is often overlooked.

This granular approach ensures that fairness is evaluated holistically.

9. Bias-Resistant Feature Engineering

Feature engineering plays a powerful role in bias reduction by removing or transforming problematic attributes.

Proxy variables such as location, name, device type, or browsing patterns may encode sensitive information.

Engineers must critically analyze correlations to prevent models from learning discriminatory shortcuts.

Techniques like feature clustering, fairness-driven feature elimination, and correlation heatmaps help identify features that transmit unintended bias.

Ethical feature engineering builds a transparent feature set aligned with fairness goals and reduces the risk of harmful model behavior.

10. Fairness-Aware Model Selection and Benchmarking

Choosing models that naturally support fairness improves mitigation effectiveness.

Linear models are easier to audit, while tree-based models handle nonlinear patterns but can encode more hidden bias.

Some advanced models offer built-in fairness control mechanisms or interpretable decision boundaries.

Benchmarking multiple algorithms helps identify the most ethically suitable option rather than optimizing only for accuracy.

Fairness must be a selection criterion alongside performance, scalability, and domain fit.

11. Stakeholder and Domain Expert Involvement

Involving domain experts (e.g., doctors, HR specialists, financial analysts) ensures fairness decisions align with real-world practices and community values.

Stakeholders help validate assumptions, highlight sensitive variables, and provide context for interpreting model behavior.

Their involvement prevents technical teams from misinterpreting fairness requirements or overlooking societal impacts.

Ethical AI development becomes collaborative rather than purely mathematical, leading to more human-centered and inclusive systems.

12. Regulatory and Compliance-Based Bias Audits

Governments are introducing strong rules (EU AI Act, FTC AI Guidelines, India’s upcoming DPDP applications) requiring formal fairness assessments.

Organizations must document datasets, modeling decisions, fairness metrics, and risk analyses.

External audits may verify compliance for high-risk AI systems such as credit scoring or hiring tools.

Compliance frameworks make continuous fairness monitoring and transparent reporting mandatory, shaping how businesses design and deploy ethical AI systems.

Real-World Case Studies

1. LinkedIn Job Recommendation Bias Reduction

LinkedIn discovered that its job recommendation system was favoring male profiles due to engagement-based ranking signals.

By conducting fairness audits, analyzing gender-segmented performance, and adding fairness constraints, LinkedIn reduced gender disparities in job exposure.

This success demonstrates the impact of fairness-aware ranking and continuous monitoring in real-world recommender systems.

2. Google Photos Misclassification Incident

Google Photos once mislabeled African-American individuals as “gorillas,” exposing severe representation bias in its training datasets.

Google implemented stricter fairness testing, increased dataset diversity, and introduced additional layers of validation for sensitive categories.

The incident reinforced the importance of demographic balance and fine-grained bias detection in computer vision systems.

3. Healthcare Risk Prediction Algorithm Bias (U.S.)

A widely used algorithm that predicted which patients needed extra medical support under-identified Black patients.

The algorithm used historical healthcare spending as a proxy for health risk—an inherently biased assumption because marginalized groups often spend less due to systemic barriers.

After audits revealed the bias, developers replaced the proxy variable and recalibrated the model. This case highlights the danger of hidden proxy variables and the need for domain expert review.

4. Credit Scoring and Apple Card Gender Bias

Reports indicated women received lower credit limits compared to men with similar financial profiles.

This led regulators to audit the system, exposing the need for fairness testing across demographic groups before deployment.

This case emphasizes the importance of transparency and continuous fairness audits in financial decision-making systems.

5. YouTube Recommendation Filter Bubble Issues

Bias was detected in YouTube’s recommendation algorithm, which amplified extreme content for specific groups due to engagement-optimization.

Mitigation involved adjusting ranking criteria, adding human review, and building safety layers to reduce harmful spirals.

This demonstrates how fairness concerns extend beyond discrimination to societal wellbeing.

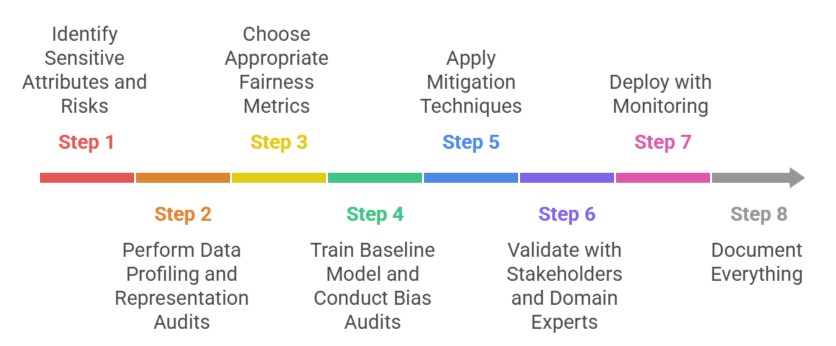

Practical Implementation Steps

Step 1: Identify Sensitive Attributes and Risks

Define which demographic dimensions (gender, race, age, etc.) require fairness checks. Conduct risk assessments to determine potential harm.

Step 2: Perform Data Profiling and Representation Audits

Analyze dataset distributions, identify imbalances, check for missing groups, and uncover proxy variables. Use fairness dashboards for visualization.

Step 3: Choose Appropriate Fairness Metrics

Select metrics such as demographic parity, equal opportunity, or equalized odds based on domain requirements. Different sectors require different fairness standards.

Step 4: Train Baseline Model and Conduct Bias Audits

Evaluate model performance for each demographic group. Compare false-positive/negative rates, prediction calibration, and output fairness.

Step 5: Apply Mitigation Techniques

Preprocessing: balancing datasets, removing sensitive features, correcting biased labels.

In-Model: fairness constraints, adversarial debiasing, regularization strategies.

Post-Processing: threshold adjustments, calibrated outputs, human-in-the-loop interventions.

Step 6: Validate with Stakeholders and Domain Experts

Ensure that fairness decisions make sense in real-world conditions and not only mathematically.

Step 7: Deploy with Monitoring

Activate real-time fairness monitoring, alerts for bias drift, and periodic re-evaluation of models.

Step 8: Document Everything

Maintain transparency by recording datasets used, fairness metrics applied, mitigation methods, and audit results—crucial for compliance and accountability.