The Extract, Transform, and Load (ETL) process is a foundational element within Business Intelligence (BI) that enables organizations to combine and prepare data from multiple, diverse sources. ETL transforms raw data into structured and unified formats suitable for analysis, reporting, and decision-making. As data volumes and variety increase, effective ETL processes ensure data accuracy, quality, and timeliness, forming the backbone of reliable BI systems.

Extract: Collecting Data from Multiple Sources

The extraction phase involves retrieving relevant data from various source systems, which may include databases, files, APIs, cloud services, or external feeds. The primary goal is to collect data without altering the source systems while ensuring minimal disruption.

1. Sources include internal systems such as ERP, CRM, and financial platforms, as well as external sources like social media, market data, and IoT devices.

2. Data extraction can be performed in batch mode at scheduled intervals or in real time for continuous data flows.

3. During extraction, basic validation checks can be applied to ensure the data meets expected schemas or formats.
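The extraction steps above can be sketched as a small batch reader that applies basic schema checks while it collects rows. This is only an illustration under stated assumptions: the column names, the `EXPECTED_SCHEMA` mapping, and the `extract_rows` helper are hypothetical, and a CSV export stands in for whatever source system is actually used.

```python
import csv
import io

# Hypothetical schema: each expected column and the type its values must parse as.
EXPECTED_SCHEMA = {"order_id": int, "customer": str, "amount": float}


def extract_rows(source, schema=EXPECTED_SCHEMA):
    """Read CSV rows from a file-like source without modifying it.

    Returns (valid, rejected): rows that fit the schema, converted to the
    expected types, and the raw rows that failed conversion.
    """
    reader = csv.DictReader(source)
    missing = set(schema) - set(reader.fieldnames or [])
    if missing:
        raise ValueError(f"source is missing columns: {sorted(missing)}")

    valid, rejected = [], []
    for row in reader:
        try:
            valid.append({col: cast(row[col]) for col, cast in schema.items()})
        except (TypeError, ValueError):
            # Keep the bad row for later inspection instead of dropping it silently.
            rejected.append(row)
    return valid, rejected


# Illustrative data only; the second row has a malformed amount.
SAMPLE = "order_id,customer,amount\n1,Acme,19.99\n2,Globex,not-a-number\n"
valid, rejected = extract_rows(io.StringIO(SAMPLE))
print(len(valid), "valid;", len(rejected), "rejected")
```

Keeping rejected rows separate, rather than raising on the first bad record, is one possible design choice: it lets a batch continue while still surfacing data that did not match the expected format.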

Transform: Cleaning and Preparing Data

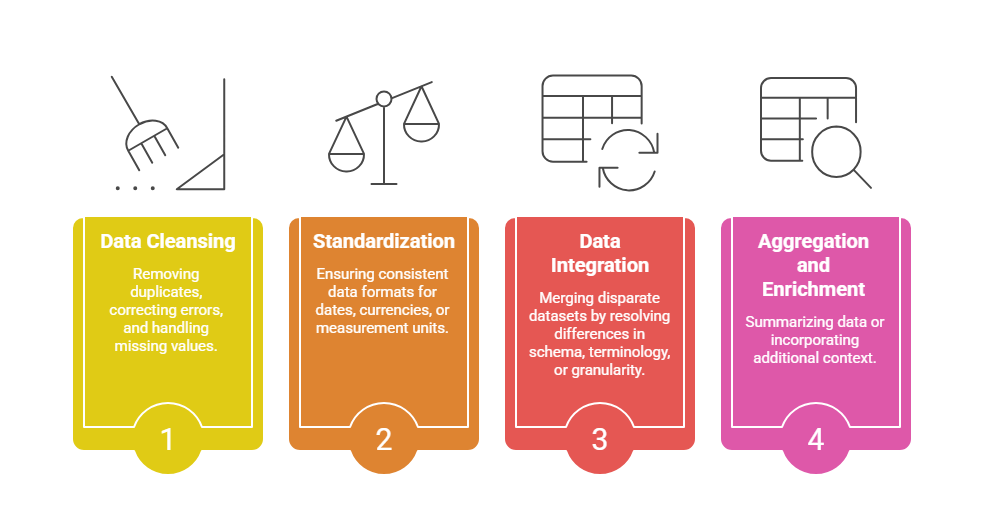

Transformation is the core, and often the most complex, step of the process: raw extracted data is cleansed, validated, and converted into a consistent format for analysis.

Business rules and calculations are applied to derive new metrics or categorize data. This stage enhances data quality and usability, enabling accurate analytics and reporting.
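As a rough sketch of what cleansing and applying business rules can look like, the function below removes duplicates, normalizes a text field, derives a new metric, and assigns a category. The field names, the `transform` helper, and the `large_order_threshold` rule are assumptions made for illustration, not rules taken from any particular BI system.

```python
def transform(records, large_order_threshold=100.0):
    """Clean raw order records and derive a total and a size category."""
    seen = set()
    cleaned = []
    for rec in records:
        order_id = rec.get("order_id")
        if order_id is None or order_id in seen:
            continue  # drop records without a key, and duplicates of earlier records
        seen.add(order_id)

        # Cleansing: trim whitespace and standardize capitalization.
        customer = (rec.get("customer") or "").strip().title()

        # Conversion: source values may arrive as strings.
        quantity = int(rec.get("quantity") or 0)
        unit_price = float(rec.get("unit_price") or 0.0)

        # Business rules: derive a metric, then categorize on it.
        total = round(quantity * unit_price, 2)
        cleaned.append({
            "order_id": order_id,
            "customer": customer,
            "total": total,
            "size": "large" if total >= large_order_threshold else "small",
        })
    return cleaned


# Illustrative input: the second record duplicates the first order_id.
raw = [
    {"order_id": 1, "customer": "  acme corp ", "quantity": "3", "unit_price": "40"},
    {"order_id": 1, "customer": "Acme Corp", "quantity": "3", "unit_price": "40"},
    {"order_id": 2, "customer": "globex", "quantity": "1", "unit_price": "9.5"},
]
for row in transform(raw):
    print(row)
```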

Load: Delivering Data into Target Systems

The final stage is loading the transformed data into the destination system, usually a data warehouse, data lake, or database, optimized for querying and analysis.

1. Load processes can be full loads (replacing all existing data) or incremental loads (applying only new or changed records, which reduces system load).

2. Data loading strategies depend on latency requirements—some BI applications require real-time updates, whereas others operate on batch intervals.

3. Ensuring referential integrity and conformity with the target schema is vital during loading.
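The difference between a full load and an incremental load can be illustrated with Python's built-in `sqlite3` module standing in for a data warehouse. The `orders` table, its columns, and the helper names are hypothetical. The incremental variant uses SQLite's `INSERT OR REPLACE`, which is just one way to apply changed rows; production warehouses typically offer their own merge or upsert statements.

```python
import sqlite3

INSERT_COLUMNS = "(order_id, customer, total) VALUES (:order_id, :customer, :total)"


def create_target(conn):
    # The primary key and NOT NULL constraints make the target schema enforce conformity.
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders ("
        "order_id INTEGER PRIMARY KEY, customer TEXT NOT NULL, total REAL NOT NULL)"
    )


def full_load(conn, rows):
    """Replace the entire table contents with the supplied rows."""
    with conn:  # commits on success, rolls back if any statement fails
        conn.execute("DELETE FROM orders")
        conn.executemany("INSERT INTO orders " + INSERT_COLUMNS, rows)


def incremental_load(conn, rows):
    """Insert new rows and overwrite rows whose key already exists."""
    with conn:
        conn.executemany("INSERT OR REPLACE INTO orders " + INSERT_COLUMNS, rows)


conn = sqlite3.connect(":memory:")
create_target(conn)
full_load(conn, [
    {"order_id": 1, "customer": "Acme", "total": 120.0},
    {"order_id": 2, "customer": "Globex", "total": 9.5},
])
# Only the changed order (2) and the new order (3) are sent the second time.
incremental_load(conn, [
    {"order_id": 2, "customer": "Globex", "total": 12.0},
    {"order_id": 3, "customer": "Initech", "total": 55.0},
])
loaded = conn.execute(
    "SELECT order_id, customer, total FROM orders ORDER BY order_id"
).fetchall()
print(loaded)
```

Wrapping each load in a transaction means a failed batch leaves the target table as it was, which is one simple way to protect integrity during loading.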

Modern ETL Practices and Evolution

The ETL process has evolved with technological advances to support larger volumes of varied data types and more agile BI environments.

1. Automation and Orchestration: Modern ETL tools automate workflows, minimize manual intervention, and enable scheduled or event-driven processing.

2. Cloud-Native ETL: Cloud platforms allow scalable ETL with elastic compute resources and integration with cloud data warehouses.

3. Real-Time and Streaming ETL: Technologies like Apache Kafka enable continuous data ingestion and processing for real-time analytics.

4. Data Quality and Governance: Integration of validation rules, lineage tracking, and compliance monitoring ensures data trustworthiness and regulatory adherence.

5. ELT Variation: In this related approach, data is first loaded into the target system and then transformed within it, leveraging the compute power of modern data warehouses.
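To make the ELT variation concrete, the sketch below loads raw, untyped values into a staging table first and only then transforms them with SQL inside the target database. SQLite, via Python's `sqlite3` module, stands in for a cloud data warehouse here, and the table and column names are hypothetical.

```python
import sqlite3

warehouse = sqlite3.connect(":memory:")

# Load: raw values land in a staging table exactly as they were extracted.
warehouse.execute(
    "CREATE TABLE raw_orders (order_id TEXT, customer TEXT, quantity TEXT, unit_price TEXT)"
)
warehouse.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?, ?)",
    [
        ("1", "  acme ", "3", "40"),
        ("2", "globex", "1", "9.5"),
    ],
)

# Transform: the database engine does the cleaning and the calculation.
warehouse.execute(
    """
    CREATE TABLE orders AS
    SELECT CAST(order_id AS INTEGER) AS order_id,
           UPPER(TRIM(customer)) AS customer,
           CAST(quantity AS INTEGER) * CAST(unit_price AS REAL) AS total
    FROM raw_orders
    """
)

elt_rows = warehouse.execute(
    "SELECT order_id, customer, total FROM orders ORDER BY order_id"
).fetchall()
print(elt_rows)
```

Because the raw table is kept, the transformation can be rerun or revised later without extracting from the source systems again.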

Importance of ETL in Business Intelligence

ETL plays a vital role in business intelligence by ensuring that data from multiple sources is unified, accurate, and readily available for decision-making. It significantly reduces manual preparation efforts, speeding up access to clean and usable information.

By supporting everything from routine reporting to advanced predictive analytics, ETL enables organizations to meet diverse analytical needs. It also brings legacy and modern data sources into a single pipeline, creating a cohesive and reliable BI environment.