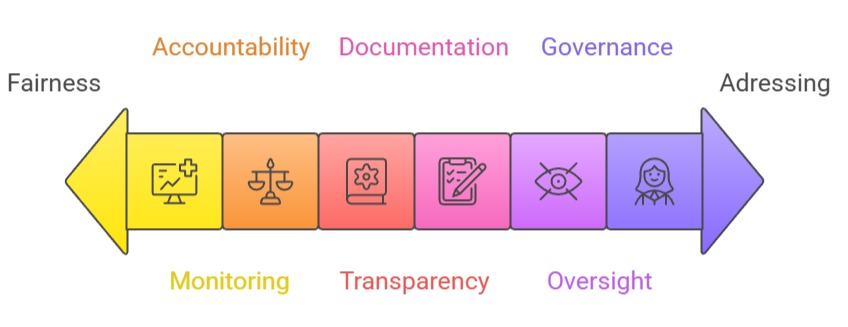

Fairness, accountability, and transparency (FAT) are core ethical pillars guiding the responsible development and deployment of healthcare analytics and AI systems.

As hospitals adopt predictive models, clinical decision support tools, and automated diagnostic algorithms, ensuring that these technologies operate equitably and reliably becomes essential.

Fairness focuses on reducing bias and preventing discrimination against particular demographic groups, especially marginalized populations that have historically had unequal access to care.

Accountability ensures that healthcare organizations, developers, and clinicians remain responsible for AI outcomes, particularly in high-stakes situations where errors can directly harm patients.

Transparency emphasizes explainability and clarity, allowing clinicians, patients, and oversight authorities to understand how analytics systems generate predictions.

These principles protect patient rights, maintain trust in AI-driven healthcare tools, and promote safer decision-making.

Transparent systems help clinicians interpret model outputs, while accountability frameworks ensure clear ownership when models fail or produce misleading results.

Fairness ensures that health analytics support equitable treatment, irrespective of age, gender, race, socioeconomic status, or disability.

Together, FAT principles create a strong ethical foundation that allows advanced analytics and AI to enhance care delivery without reinforcing inequalities or compromising patient safety.

Ethical Governance of Healthcare Analytics and AI

1. Ensuring Fairness in Healthcare Analytics Models

Fairness requires that predictive models operate consistently across diverse patient populations without favoring or disadvantaging specific groups.

Historical datasets often contain biases, such as the underrepresentation of rural communities, women, or certain ethnic groups, which can distort model performance.

Fairness analysis involves evaluating model outcomes across demographic subgroups to detect disparities in accuracy, false-positive rates, and false-negative rates.
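A minimal sketch of such a subgroup analysis in plain Python, assuming binary labels and predictions (1 = condition present or flagged) and one subgroup label per patient. The function name and toy data are illustrative, not taken from any particular fairness toolkit.

```python
from collections import defaultdict

def subgroup_error_rates(y_true, y_pred, groups):
    """Accuracy, false-positive rate (FPR), and false-negative rate (FNR) per subgroup."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for truth, pred, group in zip(y_true, y_pred, groups):
        c = counts[group]
        if truth == 1:
            c["tp" if pred == 1 else "fn"] += 1
        else:
            c["fp" if pred == 1 else "tn"] += 1

    report = {}
    for group, c in counts.items():
        positives = c["tp"] + c["fn"]
        negatives = c["fp"] + c["tn"]
        report[group] = {
            "n": positives + negatives,
            "accuracy": (c["tp"] + c["tn"]) / (positives + negatives),
            # FPR: share of truly negative patients the model flagged
            "fpr": c["fp"] / negatives if negatives else float("nan"),
            # FNR: share of truly positive patients the model missed
            "fnr": c["fn"] / positives if positives else float("nan"),
        }
    return report

# Toy example: two subgroups of four patients each
y_true = [1, 0, 1, 0, 1, 0, 1, 0]
y_pred = [1, 0, 1, 1, 0, 0, 0, 1]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
report = subgroup_error_rates(y_true, y_pred, groups)
print(report["A"])  # {'n': 4, 'accuracy': 0.75, 'fpr': 0.5, 'fnr': 0.0}
print(report["B"])  # {'n': 4, 'accuracy': 0.25, 'fpr': 0.5, 'fnr': 1.0}
```

In the toy data both groups share the same false-positive rate, but the model misses every positive case in group B, a disparity that a single overall accuracy figure (0.5 here) would hide.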

Techniques such as rebalancing training data, fairness-aware algorithms, and subgroup-specific calibration help mitigate these issues.
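One simple form of rebalancing is to reweight records so that each subgroup carries equal total weight during training. The sketch below assumes a list of subgroup labels; the formula n / (n_groups × count) mirrors scikit-learn's "balanced" class-weight heuristic, applied here to demographic subgroups rather than outcome classes. The function name is illustrative.

```python
from collections import Counter

def inverse_frequency_weights(groups):
    """One training weight per record so every subgroup contributes the same
    total weight; the weights average to 1.0 across the dataset."""
    counts = Counter(groups)
    n, n_groups = len(groups), len(counts)
    # Each group's weights sum to n / n_groups, whatever the group's size
    return [n / (n_groups * counts[g]) for g in groups]

# Toy example: rural patients are underrepresented 2-to-6
groups = ["urban"] * 6 + ["rural"] * 2
weights = inverse_frequency_weights(groups)
print(weights[0], weights[-1])  # 0.6666666666666666 2.0
```

The resulting list can be passed as `sample_weight` to estimators whose `fit` method accepts it, such as scikit-learn's `LogisticRegression`. Reweighting leaves the data unchanged, which avoids duplicating or discarding patient records as oversampling or undersampling would.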

Ensuring fairness is essential to maintaining equitable care and preventing the reinforcement of long-standing healthcare inequalities.

2. Addressing Algorithmic Bias and Clinical Equity Concerns

Biases in healthcare algorithms can emerge from data, model assumptions, or real-world clinical workflows.

For example, risk-scoring tools may misclassify minority patients if the training data does not reflect their health patterns.

This can lead to unequal allocation of treatment, misdiagnosis, or an increased risk of harm. Addressing algorithmic bias involves continuous monitoring, bias testing, and open review of which features a model relies on.
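One common bias test checks whether a risk score is equally calibrated across groups: patients with similar scores should show similar observed event rates regardless of group. A minimal sketch, assuming scores in [0, 1] and binary observed outcomes; the equal-width binning scheme and the names are illustrative.

```python
from collections import defaultdict

def calibration_by_group(scores, outcomes, groups, n_bins=5):
    """Map (group, bin) -> (mean predicted risk, observed event rate, count).

    Scores are assumed to lie in [0, 1]; outcomes are 0/1.
    """
    cells = defaultdict(lambda: [0.0, 0, 0])  # [sum of scores, events, count]
    for score, outcome, group in zip(scores, outcomes, groups):
        # Equal-width bins; a score of exactly 1.0 goes into the top bin
        b = min(int(score * n_bins), n_bins - 1)
        cell = cells[(group, b)]
        cell[0] += score
        cell[1] += outcome
        cell[2] += 1
    return {key: (s / n, events / n, n) for key, (s, events, n) in cells.items()}

# Toy example: identical scores, different observed outcomes
scores = [0.3, 0.3, 0.3, 0.3]
outcomes = [0, 1, 1, 1]
groups = ["A", "A", "B", "B"]
table = calibration_by_group(scores, outcomes, groups)
for key in sorted(table):
    print(key, table[key])
```

In the toy data both groups receive the same score, yet group B's observed event rate (1.0) is twice group A's (0.5), so the score understates group B's risk. Real audits would use far larger samples and confidence intervals before drawing conclusions.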

Clinicians must be trained to recognize when AI predictions may harbor bias. Equitable AI systems promote better health outcomes by ensuring all patients receive accurate and fair assessments.

3. Accountability Frameworks in Healthcare AI Deployment

Accountability ensures that healthcare institutions and developers remain responsible for AI-driven decisions and outcomes.

This includes clearly defining who is accountable when errors occur: software developers, model designers, hospital administrators, or clinicians.

Accountability also involves maintaining audit trails, version control, and documentation that tracks how models were trained, updated, and validated.
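As an illustration of an audit trail tied to version control, the sketch below records each prediction with the model version and a SHA-256 fingerprint of the exact model artifact, appended to a JSON Lines file. The field names, the file layout, and the idea of fingerprinting the serialized model are assumptions for illustration, not a regulatory format.

```python
import hashlib
import json
from datetime import datetime, timezone

def fingerprint(model_bytes: bytes) -> str:
    """SHA-256 digest identifying the exact model artifact that produced a prediction."""
    return hashlib.sha256(model_bytes).hexdigest()

def audit_record(model_name, model_version, model_bytes, patient_ref, prediction, user):
    """Build one audit-log entry (all field names are hypothetical)."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_name": model_name,
        "model_version": model_version,
        "model_sha256": fingerprint(model_bytes),
        "patient_ref": patient_ref,  # a pseudonymous ID, not direct identifiers
        "prediction": prediction,
        "requested_by": user,
    }

def append_to_log(path, record):
    # JSON Lines: one record per line; the file is only ever appended to
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record, sort_keys=True) + "\n")
```

Because the hash changes whenever the model file changes, an investigator can later tie any disputed prediction to the specific trained artifact, and from there to its training and validation documentation.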

Regulatory bodies increasingly expect healthcare organizations to maintain governance structures for AI oversight. Accountability builds trust by ensuring that AI tools do not operate autonomously without human responsibility.

4. Importance of Human Oversight and Human-in-the-Loop Systems

Even the most advanced AI systems require human judgment, especially in healthcare, where decisions affect patient safety. Human-in-the-loop frameworks require clinicians to review, confirm, or override AI recommendations.

This reduces risks such as automation bias, where clinicians follow incorrect predictions without question.
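A human-in-the-loop requirement can be enforced in software as well as in policy. In this hypothetical sketch, an AI recommendation cannot be released until a clinician records a decision, and any override must carry a written rationale; the class, the function names, and the rationale rule are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Recommendation:
    """An AI suggestion awaiting clinician review (fields are illustrative)."""
    patient_ref: str
    ai_suggestion: str
    ai_confidence: float
    clinician_id: Optional[str] = None
    clinician_decision: Optional[str] = None
    rationale: Optional[str] = None

    @property
    def overridden(self) -> bool:
        return (self.clinician_decision is not None
                and self.clinician_decision != self.ai_suggestion)

def review(rec: Recommendation, clinician_id: str, decision: str,
           rationale: str = "") -> Recommendation:
    """Record the clinician's decision; overriding the AI requires a rationale."""
    if decision != rec.ai_suggestion and not rationale.strip():
        raise ValueError("An override must include a documented rationale")
    rec.clinician_id = clinician_id
    rec.clinician_decision = decision
    rec.rationale = rationale.strip() or None
    return rec

def release(rec: Recommendation) -> str:
    """Only a clinician-reviewed decision leaves the system; the AI never acts alone."""
    if rec.clinician_decision is None:
        raise PermissionError("Clinician review is required before any action")
    return rec.clinician_decision
```

Requiring a rationale only for overrides is one design choice; an organization could also require explicit confirmation of accepted suggestions to further counter automation bias.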

Oversight ensures that AI supports clinical decisions rather than replacing critical thinking.

Integrating AI outputs with clinical expertise maintains safety, encourages ethical decision-making, and ensures AI models are used responsibly.

5. Transparency Through Explainable AI (XAI) Techniques

Transparency helps clinicians understand how and why an AI model makes a specific prediction.

Techniques such as SHAP (SHapley Additive exPlanations), LIME (Local Interpretable Model-agnostic Explanations), feature attribution maps, and attention visualizations help reveal the factors influencing a model's decision.
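For a linear or logistic regression model whose features are treated as independent, SHAP values have a closed form: each feature contributes w_i × (x_i − mean_i) on the model's raw (log-odds) scale, and the contributions sum to the difference between this patient's score and the average score. The sketch below computes that directly; the feature names, coefficients, and cohort means are made up for illustration and are not clinical values.

```python
def linear_shap(weights, patient, feature_means):
    """SHAP values for a linear/logistic model with independent features:
    phi_i = w_i * (x_i - mean_i), on the model's raw (log-odds) scale."""
    return {f: weights[f] * (patient[f] - feature_means[f]) for f in weights}

# Hypothetical coefficients and cohort means, for illustration only
weights = {"age": 0.04, "lactate": 0.8, "systolic_bp": -0.02}
feature_means = {"age": 60, "lactate": 1.5, "systolic_bp": 120}
patient = {"age": 70, "lactate": 4.0, "systolic_bp": 90}

contributions = linear_shap(weights, patient, feature_means)
# Rank features by how strongly they push this patient's predicted risk
for name, value in sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True):
    print(f"{name:12s} {value:+.2f}")
```

Here elevated lactate dominates the explanation, and the negative coefficient on systolic blood pressure means a below-average reading pushes risk up. For non-linear models, libraries such as `shap` estimate these attributions instead of computing them in closed form.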

Transparency is essential for validating model reliability, identifying bias, and ensuring models align with clinical reasoning.

Explainability builds clinician trust and supports compliance with regulatory requirements that demand clear documentation of AI decision-making processes. Transparent models are easier to audit, validate, and improve over time.

6. Model Documentation, Reporting, and Ethical Communication

Ethical analytics requires detailed documentation of model design, data sources, limitations, and validation strategies.

Reporting frameworks such as Model Cards, Datasheets for Datasets, and TRIPOD guidelines help communicate critical information to clinicians, researchers, regulators, and patients.

Clear documentation ensures that users understand a model’s intended purpose, performance boundaries, and potential risks.
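Model Cards organize this information into standard sections such as intended use, training and evaluation data, subgroup performance, and caveats. The sketch below captures a small subset of those sections as a Python dataclass and renders them as Markdown; the field names and layout are a simplification, not the official template.

```python
from dataclasses import dataclass, field
from typing import Dict, List

@dataclass
class ModelCard:
    """A small, illustrative subset of Model Card sections."""
    name: str
    version: str
    intended_use: str
    out_of_scope_uses: List[str]
    training_data: str
    subgroup_metrics: Dict[str, Dict[str, float]]
    limitations: List[str] = field(default_factory=list)

    def to_markdown(self) -> str:
        lines = [f"# Model Card: {self.name} (v{self.version})",
                 "## Intended use", self.intended_use,
                 "## Out-of-scope uses", *[f"- {u}" for u in self.out_of_scope_uses],
                 "## Training data", self.training_data,
                 "## Performance by subgroup"]
        # One line per subgroup so performance boundaries are visible at a glance
        for group, metrics in self.subgroup_metrics.items():
            summary = ", ".join(f"{k}={v:.2f}" for k, v in metrics.items())
            lines.append(f"- {group}: {summary}")
        lines.append("## Limitations")
        lines.extend(f"- {item}" for item in self.limitations)
        return "\n".join(lines)
```

Keeping the card as structured data, rather than free text, lets a team regenerate it automatically each time the model is retrained and revalidated.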

Transparent communication prevents misuse, supports informed decision-making, and enhances the ethical deployment of AI tools in clinical environments.

7. Monitoring, Auditing, and Continuous Evaluation of Models

Healthcare environments change rapidly as disease patterns evolve, new treatments emerge, and population demographics shift.

Continuous monitoring ensures AI models remain accurate, unbiased, and clinically reliable over time.

Regular audits examine model performance across subgroups, detect drift, and identify potential fairness issues.
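One widely used drift check is the Population Stability Index (PSI), which compares the distribution of a feature or risk score in recent data against a baseline: PSI = Σ (a_i − e_i) ln(a_i / e_i), where e_i and a_i are the baseline and recent proportions in bin i. A minimal sketch, assuming numeric values and caller-chosen bin edges; values outside the edges are clamped into the end bins, and the function name is illustrative.

```python
import bisect
import math

def population_stability_index(baseline, recent, edges):
    """PSI = sum over bins of (a - e) * ln(a / e), where e and a are the
    baseline and recent proportions per bin. `edges` must be sorted."""
    def proportions(values):
        counts = [0] * (len(edges) - 1)
        for v in values:
            # Locate the bin; clamp out-of-range values into the end bins
            i = bisect.bisect_right(edges, v) - 1
            counts[min(max(i, 0), len(counts) - 1)] += 1
        total = len(values)
        # A small floor keeps the log finite when a bin is empty
        return [max(c / total, 1e-6) for c in counts]

    e, a = proportions(baseline), proportions(recent)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Toy example: risk scores shift upward after deployment
baseline = [0.1, 0.2, 0.3, 0.6, 0.7, 0.8]
recent = [0.2, 0.6, 0.7, 0.8, 0.9, 0.95]
print(round(population_stability_index(baseline, recent, [0.0, 0.5, 1.0]), 3))  # 0.536
```

A common rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, though any alert threshold should be set by the team monitoring the specific model. PSI detects changes in inputs or scores; confirming that performance has actually degraded still requires outcome data.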

Monitoring also includes real-world outcome evaluation to confirm that models align with clinical reality.

Continuous evaluation ensures long-term accountability, improves safety, and sustains the model’s relevance in changing healthcare environments.

8. Governance, Ethical Oversight, and Regulatory Compliance

Governance structures, such as ethics committees, AI oversight boards, and regulatory review processes, ensure responsible AI development and deployment.

These bodies evaluate model fairness, privacy compliance, performance reliability, and risk management.
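Parts of such a review can be made explicit and repeatable. The hypothetical gate below checks a candidate model's results against thresholds an oversight board might set for performance, subgroup fairness, privacy review, and risk assessment; the metric names and thresholds are placeholders, and the final decision still rests with the board.

```python
def review_gate(metrics, thresholds):
    """Compare a candidate model's evaluation results against board-set
    thresholds. Returns (approved, findings); all keys are hypothetical."""
    findings = []
    # Performance reliability
    if metrics["auroc"] < thresholds["min_auroc"]:
        findings.append(f"AUROC {metrics['auroc']:.2f} below {thresholds['min_auroc']:.2f}")
    # Fairness: largest gap in false-negative rate between subgroups
    fnr = metrics["fnr_by_group"].values()
    gap = max(fnr) - min(fnr)
    if gap > thresholds["max_fnr_gap"]:
        findings.append(f"False-negative-rate gap {gap:.2f} exceeds {thresholds['max_fnr_gap']:.2f}")
    # Privacy compliance and risk management are recorded as completed reviews
    if not metrics["privacy_review_passed"]:
        findings.append("Privacy/data-protection review not completed")
    if not metrics["risk_assessment_filed"]:
        findings.append("Risk assessment missing")
    return (not findings, findings)
```

Returning the list of findings, not just a pass/fail flag, gives the board a written record of why a model was held back, which supports the accountability goals described earlier.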

Governance frameworks help organizations comply with health-authority regulations, data protection laws, and AI-specific standards.

Ethical oversight prevents harmful applications, reinforces accountability, and ensures that analytics solutions serve patient interests.

Strong governance is essential for maintaining public trust in healthcare AI.