Evaluating and validating machine learning models in healthcare requires more rigor than in many other industries because clinical decisions directly influence human lives.

Unlike general ML applications that may rely heavily on accuracy or simple validation methods, healthcare demands metrics that capture real-world clinical priorities such as identifying high-risk patients, minimizing false negatives, assessing long-term outcomes, and ensuring fair model performance across diverse populations.

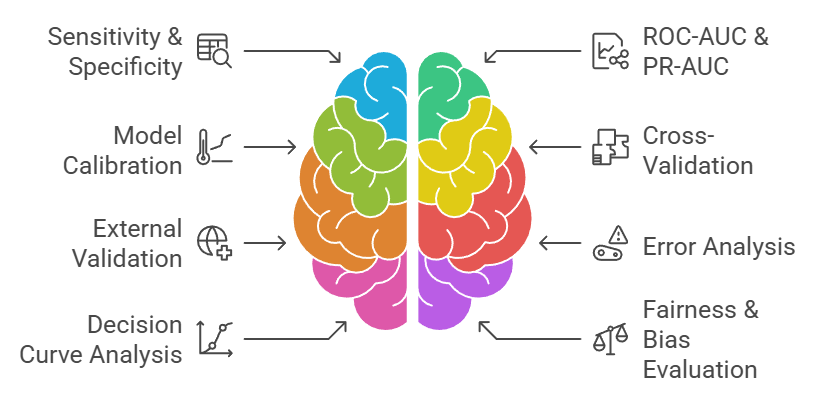

Traditional metrics like accuracy, precision, recall, and F1-score must often be complemented by healthcare-specific metrics such as sensitivity (the clinical term for recall), specificity, ROC-AUC, PR-AUC, calibration curves, survival metrics, decision curves, and cost-sensitive evaluations.

Model validation in healthcare also involves evaluating robustness across population groups, testing performance on external datasets, performing cross-validation, and integrating domain-specific constraints.

Techniques such as stratified validation, temporal validation, prospective validation, and real-world clinical trials ensure that models are reliable beyond controlled datasets.

Model calibration becomes essential because probability outputs must reflect true clinical likelihoods; for example, the predicted probability of sepsis or heart failure must be trustworthy.

Due to the high risk of harm from incorrect predictions, healthcare model evaluation emphasizes interpretability, fairness analysis, and error analysis to reduce bias and support safe deployment.

Ultimately, model evaluation and validation ensure that predictive systems are not only accurate but clinically relevant, ethical, safe, and aligned with patient care outcomes.

Comprehensive Evaluation Framework for Clinical AI Models

1. Importance of Sensitivity & Specificity in Healthcare Models

Sensitivity (true positive rate) and specificity (true negative rate) are crucial metrics because they directly map to clinical risks. High sensitivity ensures that patients with diseases are correctly identified, reducing the chance of dangerous false negatives.

High specificity ensures that healthy individuals are not incorrectly labeled as sick, preventing unnecessary tests and anxiety.

Depending on the medical context, such as cancer screening, sepsis alerts, or infectious disease detection, clinicians prioritize sensitivity or specificity differently.

For deadly diseases, missing a case can be catastrophic, making sensitivity critical. These metrics provide far more meaningful insight than accuracy when dealing with imbalanced clinical datasets, where positive cases are rare but life-threatening.
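To make the contrast with accuracy concrete, here is a minimal sketch using only the Python standard library. The cohort is hypothetical (1,000 patients, 2% prevalence), and the "model" simply predicts that everyone is healthy; the function name `sensitivity_specificity` is an illustrative choice, not part of any library.

```python
def sensitivity_specificity(y_true, y_pred):
    """Return (sensitivity, specificity) for binary labels, 1 = disease present."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    # Guard against cohorts with no positives or no negatives.
    sensitivity = tp / (tp + fn) if (tp + fn) else float("nan")
    specificity = tn / (tn + fp) if (tn + fp) else float("nan")
    return sensitivity, specificity


# Hypothetical screening cohort: 20 diseased patients out of 1,000.
y_true = [1] * 20 + [0] * 980
# A trivial model that labels every patient as healthy.
y_pred = [0] * 1000

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
sens, spec = sensitivity_specificity(y_true, y_pred)

print(f"accuracy={accuracy:.2f} sensitivity={sens:.2f} specificity={spec:.2f}")
```

In this toy cohort the trivial model reaches 98% accuracy while detecting none of the diseased patients, which is why accuracy alone can be misleading on imbalanced clinical data.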

2. Role of ROC-AUC and PR-AUC in Imbalanced Healthcare Data

Many clinical datasets are highly imbalanced; for example, only a small percentage of patients may develop complications or rare diseases.

ROC-AUC measures the model's ability to discriminate between classes across thresholds, while PR-AUC focuses specifically on precision and recall, making it ideal for rare event prediction.

PR-AUC helps evaluate how well a model identifies high-risk patients without generating excessive false alarms. Reported together, these metrics summarize performance across thresholds even when class distribution is skewed, although ROC-AUC on its own can look optimistic when positive cases are very rare.

Clinicians often rely on ROC-AUC and PR-AUC to understand overall model discrimination and usefulness in real-world risk assessment.
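As a rough illustration of how the two summaries are computed, the following standard-library sketch implements ROC-AUC as the probability that a randomly chosen positive outranks a randomly chosen negative, and PR-AUC as average precision (one common way to summarize the precision-recall curve). The labels and scores are made up, and the average-precision helper assumes no tied scores. In practice, `sklearn.metrics.roc_auc_score` and `sklearn.metrics.average_precision_score` serve the same purpose.

```python
def roc_auc(y_true, scores):
    """Probability that a random positive scores above a random negative (ties count 0.5)."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def average_precision(y_true, scores):
    """Mean of the precision values at the rank of each positive (assumes no tied scores)."""
    ranked = sorted(zip(scores, y_true), key=lambda pair: pair[0], reverse=True)
    hits, precisions = 0, []
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            precisions.append(hits / rank)
    return sum(precisions) / len(precisions)


# Hypothetical cohort: 2 complications among 10 patients.
y_true = [0, 0, 0, 0, 0, 0, 0, 0, 1, 1]
scores = [0.05, 0.10, 0.15, 0.20, 0.25, 0.30, 0.35, 0.80, 0.60, 0.90]

auc = roc_auc(y_true, scores)
ap = average_precision(y_true, scores)
print(f"ROC-AUC={auc:.4f} average precision={ap:.4f}")
```

A single high-scoring negative lowers average precision much more than ROC-AUC in this example, reflecting how PR-based summaries penalize false alarms among the top-ranked patients.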

3. Model Calibration & Clinical Probability Reliability

Calibration evaluates whether a model's predicted probabilities reflect real-world risk.

For example, if a sepsis prediction model assigns a 30% risk, clinicians need confidence that roughly 30 out of 100 similar patients will indeed develop sepsis.

Poorly calibrated models can lead to over-treatment or missed interventions. Calibration plots, Brier scores, and reliability curves are used to assess probability accuracy.

Good calibration is essential in triage systems, risk calculators, and early warning tools because clinicians base decisions on risk thresholds. A well-calibrated model improves trust, usability, and patient safety.
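The calibration checks described in this section can be sketched with the standard library alone. The Brier score is the mean squared difference between predicted probability and observed outcome, and a reliability table compares mean predicted risk with the observed event rate per probability bin. The data and function names here are hypothetical; `sklearn.metrics.brier_score_loss` and `sklearn.calibration.calibration_curve` offer comparable functionality.

```python
def brier_score(y_true, y_prob):
    """Mean squared error between predicted probabilities and 0/1 outcomes (lower is better)."""
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)


def reliability_table(y_true, y_prob, n_bins=5):
    """Return (mean predicted risk, observed event rate, count) for each non-empty bin."""
    bins = [[] for _ in range(n_bins)]
    for y, p in zip(y_true, y_prob):
        # Equal-width bins over [0, 1]; p == 1.0 falls into the last bin.
        idx = min(int(p * n_bins), n_bins - 1)
        bins[idx].append((y, p))
    rows = []
    for members in bins:
        if members:
            mean_pred = sum(p for _, p in members) / len(members)
            observed = sum(y for y, _ in members) / len(members)
            rows.append((mean_pred, observed, len(members)))
    return rows


# Hypothetical predictions: the 30% group is well calibrated (3 of 10 events),
# while the 80% group is overconfident (only 5 of 10 events).
y_prob = [0.3] * 10 + [0.8] * 10
y_true = [1] * 3 + [0] * 7 + [1] * 5 + [0] * 5

brier = brier_score(y_true, y_prob)
table = reliability_table(y_true, y_prob)
for mean_pred, observed, count in table:
    print(f"predicted={mean_pred:.2f} observed={observed:.2f} n={count}")
```

Plotting the first two columns of the table against each other gives the reliability curve; points far from the diagonal mark risk ranges where the model's probabilities should not be taken at face value.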

4. Cross-Validation and Temporal Validation for Clinical Stability

Standard K-fold cross-validation is not always appropriate for healthcare data due to temporal changes in patient populations and workflows.

Temporal validation, which trains on past data and tests on future data, provides a more realistic simulation of clinical deployment.

Stratified cross-validation preserves the proportion of rare disease cases in every fold, so that no fold is left with too few positive cases to train or evaluate on.

Repeated validation helps assess stability and reduces the risk of overfitting to specific patient groups. This ensures the model generalizes well to new patients and clinical settings.
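A minimal sketch of the two splitting strategies, using only the standard library. The record layout (`patient_id`, `admitted`, `label`), the cutoff date, and both helper names are assumptions made for illustration. The stratified helper assigns indices round-robin without shuffling, which a real pipeline would normally add. scikit-learn provides `StratifiedKFold` and `TimeSeriesSplit` in `sklearn.model_selection` for production use.

```python
from datetime import date


def temporal_split(records, cutoff):
    """Train on admissions strictly before the cutoff, test on admissions on or after it."""
    train = [r for r in records if r["admitted"] < cutoff]
    test = [r for r in records if r["admitted"] >= cutoff]
    return train, test


def stratified_folds(labels, k):
    """Assign indices to k folds so each fold keeps roughly the same class proportions."""
    folds = [[] for _ in range(k)]
    for cls in sorted(set(labels)):
        class_indices = [i for i, y in enumerate(labels) if y == cls]
        for position, i in enumerate(class_indices):
            folds[position % k].append(i)
    return folds


# Hypothetical admissions spanning three years.
records = [
    {"patient_id": 1, "admitted": date(2021, 3, 1), "label": 0},
    {"patient_id": 2, "admitted": date(2021, 11, 15), "label": 1},
    {"patient_id": 3, "admitted": date(2022, 6, 30), "label": 0},
    {"patient_id": 4, "admitted": date(2022, 12, 31), "label": 0},
    {"patient_id": 5, "admitted": date(2023, 1, 1), "label": 1},
    {"patient_id": 6, "admitted": date(2023, 8, 20), "label": 0},
]
train, test = temporal_split(records, cutoff=date(2023, 1, 1))

# Hypothetical rare-disease labels: 6 positives among 30 patients, split into 3 folds.
labels = [1] * 6 + [0] * 24
folds = stratified_folds(labels, k=3)
positives_per_fold = [sum(labels[i] for i in fold) for fold in folds]
print(len(train), len(test), positives_per_fold)
```

The temporal split guarantees that no test admission precedes a training admission, while the stratified folds each retain the cohort's 20% positive rate.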

5. External Validation Across Hospitals and Populations

A model trained on data from one hospital or region may not perform well on other populations due to differences in demographics, equipment, workflows, or disease patterns.

External validation tests the model on datasets from multiple institutions, ensuring robustness and fairness. This step prevents models from being biased toward specific age groups, ethnicities, or comorbidity profiles.

Without external validation, even highly accurate models may perform poorly in real-world deployments. Evidence of external validation is generally expected before an AI system is accepted for clinical use.

6. Error Analysis and Clinical Risk Interpretation

Beyond measuring performance, healthcare AI requires deep error analysis to understand why false positives or false negatives occur. Each type of error carries different clinical consequences.

For example, failing to detect sepsis is far more dangerous than incorrectly flagging it. Error patterns often reveal underlying bias, missing variables, or population segmentation issues.

Clinicians and data scientists work together to interpret these patterns and refine models.

Error analysis is essential for improving safety, reducing unintended harm, and ensuring clinical decision support tools function reliably.

7. Decision Curve Analysis for Real-World Clinical Benefit

Decision curve analysis (DCA) evaluates the net clinical benefit of using a model across different probability thresholds.

This helps determine whether a model actually improves patient outcomes compared to existing clinical judgment or standard care.

DCA highlights the trade-offs between intervention benefits and unnecessary harm.

It is especially useful in screening tasks, risk stratification, and resource-limited healthcare settings.

Models with high accuracy may still offer little real-world benefit if they do not improve decision-making—DCA reveals these gaps clearly.
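Net benefit at a threshold probability p_t is commonly defined as TP/N - (FP/N) * p_t / (1 - p_t), where the odds term weights false positives by how much harm an unnecessary intervention is assumed to carry relative to a missed case. The sketch below applies that formula to a small hypothetical cohort and compares the model with the "treat everyone" strategy ("treat no one" has a net benefit of zero by definition). Function names and data are illustrative.

```python
def net_benefit(y_true, y_prob, threshold):
    """Net benefit of treating patients whose predicted risk is at or above the threshold."""
    n = len(y_true)
    tp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 1)
    fp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 0)
    # False positives are weighted by the odds of the threshold probability.
    return tp / n - (fp / n) * threshold / (1 - threshold)


def net_benefit_treat_all(y_true, threshold):
    """Net benefit of intervening on every patient regardless of predicted risk."""
    prevalence = sum(y_true) / len(y_true)
    return prevalence - (1 - prevalence) * threshold / (1 - threshold)


# Hypothetical cohort of 10 patients, 3 of whom have the condition.
y_true = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
y_prob = [0.90, 0.70, 0.20, 0.60, 0.30, 0.10, 0.10, 0.05, 0.05, 0.05]

nb_model = net_benefit(y_true, y_prob, threshold=0.5)
nb_all = net_benefit_treat_all(y_true, threshold=0.5)
print(f"model={nb_model:.2f} treat_all={nb_all:.2f} treat_none=0.00")
```

Repeating the calculation over a range of thresholds and plotting the three strategies gives the decision curve; the model is useful only in the threshold range where its curve lies above both alternatives.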

8. Fairness and Bias Evaluation for Ethical Healthcare AI

Healthcare models must be evaluated for fairness across gender, age, ethnicity, socioeconomic status, and comorbidity groups.

Bias in predictions can cause unequal treatment or worsen health disparities.

Fairness metrics such as demographic parity, equal opportunity, subgroup AUC, and error rate differences help assess whether models behave consistently across populations.

Bias evaluation ensures the system does not unfairly disadvantage vulnerable groups. Regulatory bodies increasingly require fairness monitoring for healthcare AI deployment.
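Two of the fairness metrics named in this section can be sketched as simple gaps between subgroups: the demographic parity difference (gap in the rate of positive predictions) and the equal opportunity difference (gap in true positive rate). The groups "A" and "B", the data, and the helper name `group_rates` are hypothetical, and the sketch uses only the standard library.

```python
def group_rates(y_true, y_pred, groups):
    """Per-group selection rate (share flagged positive) and true positive rate."""
    stats = {}
    for g in sorted(set(groups)):
        idx = [i for i, member in enumerate(groups) if member == g]
        positives = [i for i in idx if y_true[i] == 1]
        selection_rate = sum(y_pred[i] for i in idx) / len(idx)
        tpr = sum(y_pred[i] for i in positives) / len(positives) if positives else float("nan")
        stats[g] = {"selection_rate": selection_rate, "tpr": tpr}
    return stats


# Hypothetical cohort: two groups of 10 patients, each with 4 true cases.
groups = ["A"] * 10 + ["B"] * 10
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0] * 2
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0] + [1, 0, 0, 0, 0, 0, 0, 0, 0, 0]

stats = group_rates(y_true, y_pred, groups)
parity_gap = stats["A"]["selection_rate"] - stats["B"]["selection_rate"]
opportunity_gap = stats["A"]["tpr"] - stats["B"]["tpr"]
print(f"demographic parity gap={parity_gap:.2f} equal opportunity gap={opportunity_gap:.2f}")
```

Here the model detects 75% of true cases in group A but only 25% in group B, a gap that an aggregate metric over the whole cohort would hide.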