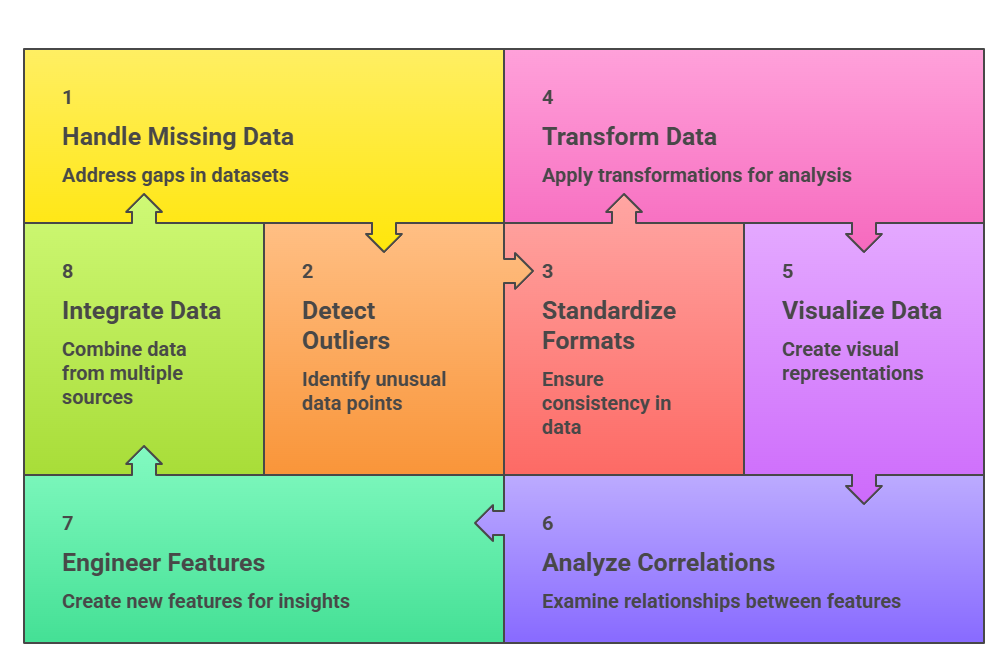

Cleaning and exploring health datasets is one of the most critical steps in healthcare analytics, as medical data is often incomplete, inconsistent, unstructured, and collected from multiple systems such as EHRs, lab systems, imaging devices, and wearable sensors.

Before any meaningful analysis or modelling, data scientists must thoroughly preprocess the dataset to remove errors, fill missing values, standardise formats, and ensure clinical accuracy.

Healthcare data also contains noise from manual entry, device malfunctions, and differing documentation styles, making data cleaning essential for reliable insights.

Exploratory Data Analysis (EDA) helps uncover hidden patterns, detect outliers, identify clinical trends, and highlight data quality issues.

Through virtualisation, summary statistics, and correlation analysis, EDA supports better understanding of patient populations, disease patterns, resource usage, and treatment outcomes.

Techniques such as distribution analysis, time-series exploration, and cohort segmentation help data scientists evaluate the structure and behaviour of healthcare data.

Because healthcare decisions often rely on high-stakes insights, clean and well-explored datasets ensure that subsequent models whether for diagnosis, prediction, or optimisation—are accurate, trustworthy, and clinically meaningful.

Effective preprocessing and EDA allow healthcare organisations to identify potential risks, personalise care strategies, and improve operational efficiency.

In the era of AI and digital health, mastering these techniques is essential for transforming raw medical data into actionable healthcare intelligence.

Healthcare Data Analysis: Cleaning, Exploration, and Feature Design

1. Handling Missing and Incomplete Data

Missing values are extremely common in healthcare datasets due to incomplete patient records, skipped tests, and inconsistent documentation.

Techniques such as mean/median imputation, interpolation, and KNN imputation are used to fill gaps while preserving data integrity.

In some cases, advanced methods like multiple imputation or predictive modelling help estimate missing values accurately.

Data scientists must also distinguish between missing-at-random and systematic missingness to avoid biased results.

Clinical judgment is crucial—for example, missing lab results may indicate the test was unnecessary or unavailable. Proper handling of missing data improves the reliability of downstream analysis and ensures models reflect real patient conditions.

2. Detecting and Managing Outliers

Outliers in healthcare data may represent errors, extreme physiological values, or true clinical anomalies. Identifying them involves statistical techniques such as z-scores, IQR ranges, or visual inspection through box plots and scatterplots.

Rather than blindly removing outliers, healthcare analysts must evaluate whether they represent meaningful medical events, such as dangerously high blood pressure or rare lab abnormalities.

Sometimes outliers are caused by device malfunctions or entry errors, requiring correction or removal.

Accurate outlier handling ensures that predictive models are not distorted and that clinical conclusions remain valid. Understanding outliers also helps reveal important patient subgroups or unusual disease patterns.

3. Standardising Data Formats and Units

Healthcare datasets often contain inconsistent measurements, varied date formats, or conflicting terminology.

For example, temperature may be recorded in Celsius in one system and Fahrenheit in another, or medications may use different naming conventions. Standardisation techniques convert all data into unified formats, ensuring compatibility across datasets.

Mapping to standard vocabularies like SNOMED, ICD-10, or LOINC also enhances interoperability. Standardisation makes merging and analysing data easier and prevents errors caused by misaligned units or formats.

This step is essential for large-scale analytics, multi-hospital studies, and AI model deployment.

4. Data Transformation and Normalisation

Transformation techniques such as normalisation, scaling, log transformation, and encoding help prepare diverse medical variables for analysis.

Normalisation ensures that variables with large ranges, such as lab values or financial data, do not dominate statistical models.

Categorical variables—like diagnosis types or treatment categories are encoded into numerical forms suitable for machine learning.

Transformation also helps address skewed distributions common in health data, such as hospital stay length or medication usage.

These techniques improve the performance, accuracy, and interpretability of analytical models.

5. Exploratory Visualisations and Pattern Discovery

Visualisation is essential for understanding healthcare datasets and identifying trends or anomalies. Tools like histograms, heat-maps, time-series charts, and cohort plots reveal relationships between clinical variables.

Visualisations help detect seasonality in diseases, demographic patterns, treatment effectiveness, and risk factors. For example, plotting blood glucose levels over time may reveal diabetic trends or medication impacts.

Good visual exploration helps clinicians interpret insights quickly and supports data-driven decision-making. It transforms complex datasets into clear, actionable findings.

6. Correlation and Feature Relationship Analysis

Exploring correlations between variables helps identify important clinical relationships, such as links between lifestyle metrics and chronic conditions.

Correlation matrices, pair plots, and feature importance analyses highlight how different variables influence each other.

In healthcare, correlations can uncover risk factors, medication effects, or comorbidities that impact patient outcomes.

Analysts must also look for spurious or misleading correlations due to confounding variables. Understanding feature interactions helps in building better predictive models and guiding medical decision support systems.

7. Handling Missing Data Strategically

Missing values are common in healthcare datasets due to incomplete patient histories, skipped lab tests, or system errors.

Techniques such as mean/median imputation, KNN-based imputation, regression-based filling, or using domain-specific rules help recover information without distorting clinical patterns.

Analysts must inspect the pattern of missingness to distinguish between MCAR, MAR, and MNAR, which influences how biases may propagate into models.

In many cases, removing missing data blindly can lead to loss of critical minority insights, especially in rare disease datasets. Visualisation tools like missingness maps or correlation heat-maps help identify variables most affected.

Advanced methods such as multiple imputation or deep-learning imputation (e.g., autoencoders) are increasingly used for better accuracy.

8. Detecting and Treating Outliers in Clinical Measurements

Outliers in healthcare data may represent measurement errors or genuinely critical clinical events, making thoughtful detection essential.

Methods such as IQR analysis, z-scores, and isolation forests help identify abnormal patterns in variables such as glucose levels, heart rate, or medication doses.

Instead of removing outliers immediately, analysts must distinguish between true clinical anomalies (e.g., hypertensive crisis) and erroneous device readings.

Outlier handling may involve transformation, capping, or smoothing techniques depending on data sensitivity. Visualisation using box-plots and density curves helps understand how extreme values influence distribution.

Proper outlier treatment ensures predictive models remain stable and reflective of real-world patient conditions.

9. Normalisation and Standardization of Medical Variables

Healthcare datasets often combine features measured on drastically different scales, such as BMI, cholesterol, blood pressure, medication dosage, and imaging-derived metrics.

Normalising or standardizing these features helps algorithms like k-means, logistic regression, and neural networks perform optimally.

Standardization ensures fair weighting, preventing larger-scale variables from overshadowing clinically important but numerically smaller variables.

Additionally, techniques such as min–max scaling, z-score scaling, and robust scaling improve convergence during model training.

In clinical research, maintaining interpretability while scaling data is crucial, so reverse transformations are often used to make findings meaningful to healthcare professionals.

10. Feature Engineering for Clinical Insight Extraction

Feature engineering transforms raw medical data into meaningful representations that enhance analytical results.

In healthcare, this may include deriving risk scores, time-interval features, comorbidity indexes, or aggregated vitals over time.

Creating clinically relevant features often requires collaboration with domain experts to ensure that transformations reflect biological and medical logic.

Temporal feature engineering is especially critical in EHR data where trends, fluctuations, or episode-based segments hold diagnostic value.

Tools like sliding windows and sequence embeddings modernise the extraction process. Well-designed features significantly improve predictive model performance and clinical decision-making.

11. Data Integration from Multiple Healthcare Systems

Healthcare data frequently originates from various departments—labs, imaging, pharmacy, billing, wearables, and EHR systems leading to fragmentation.

Integration techniques help unify these datasets by aligning patient identifiers, timestamps, variable definitions, and formats.

Challenges include inconsistent coding, missing metadata, and device-specific measurement variations.

Data harmonization using mapping tables, standard terminologies, and ETL pipelines ensures compatibility. I

ntegrated datasets yield more holistic insights, supporting longitudinal studies and accurate clinical predictions.

As healthcare increasingly adopts IoT devices and telemedicine platforms, scalable integration strategies are becoming essential for high-quality analytics.