Legal regulations and patient data consent form the foundation of responsible healthcare data science, ensuring that sensitive medical information is collected, processed, and used in ways that protect individual rights and public trust.

With the rise of electronic health records (EHRs), AI diagnostic tools, cloud-based hospital systems, and large-scale predictive models, safeguarding patient privacy has become more critical than ever.

Laws such as HIPAA (USA), GDPR (EU), and country-specific health data protection acts govern how healthcare organizations store, share, and analyze patient data.

These regulations define clear guidelines for data security, anonymity, accountability, and breach reporting to prevent misuse or unauthorized access.

Patient data consent is equally important because it ensures that individuals understand how their health information will be used—whether for treatment, research, AI model training, or health analytics.

Modern consent frameworks emphasize transparency, patient autonomy, and ongoing control over data usage.

Concepts like informed consent, dynamic consent, and opt-in/opt-out models empower patients while enabling ethical innovation in healthcare AI.

Maintaining legal compliance not only avoids penalties but also supports models that are trustworthy, explainable, and aligned with ethical medical practices.

In today’s data-driven healthcare environment, understanding legal frameworks and obtaining proper patient consent are essential for developing responsible analytics systems, ensuring fairness, and protecting public confidence in AI-enabled healthcare solutions.

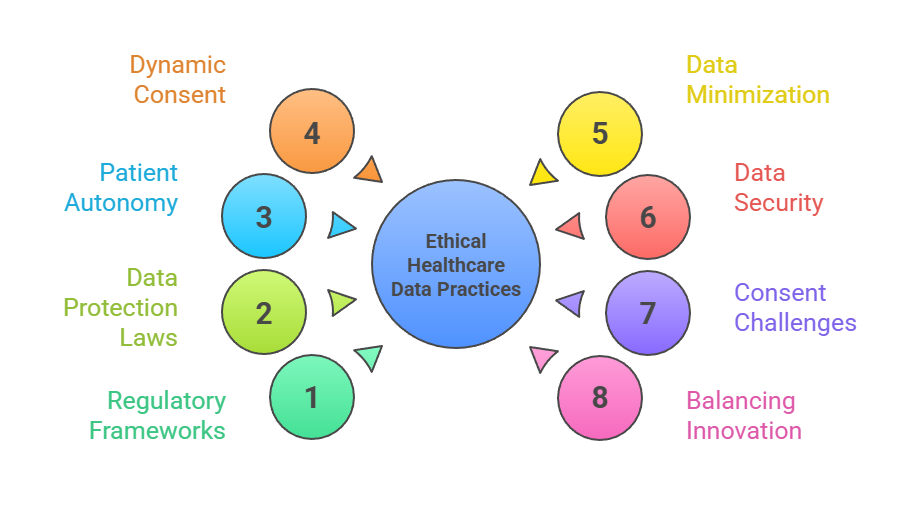

Ethical Healthcare Data Practices

1. Importance of Regulatory Frameworks in Healthcare Data Science

Legal regulations establish boundaries for how healthcare data can be collected, stored, and processed, ensuring patient rights are protected throughout the data lifecycle.

These frameworks address confidentiality, data integrity, secure transfer protocols, audit trails, and accountability mechanisms.

In the absence of such rules, sensitive data could be misused for discrimination, insurance denial, financial exploitation, or unethical research practices.

Regulations also help standardize practices across institutions, allowing smoother and safer data sharing for clinical research and AI modeling.

Robust legal frameworks thus serve as safeguards that balance innovation with patient protection.

2. HIPAA, GDPR and Global Health Data Protection Laws

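HIPAA (Health Insurance Portability and Accountability Act) in the U.S. focuses on safeguarding protected health information (PHI). It requires access controls and safeguards such as encryption, and it defines de-identification methods (Safe Harbor and Expert Determination) for data that will be used outside those protections.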

GDPR, applied in the European Union, is broader in scope: it treats health data as a special category of personal data and grants individuals rights over their data, including the rights to access it, have it erased, or restrict its processing.

Many countries have enacted comparable data protection laws that also cover health data, such as India's Digital Personal Data Protection Act and the UK's Data Protection Act, which brings national rules closer to a shared international baseline.

These laws ensure that healthcare data science projects operate within safe, lawful boundaries.

3. Ensuring Patient Autonomy Through Informed Consent

Informed consent ensures patients understand what data is being collected, why it is needed, who will access it, and how it will be used. This process upholds autonomy and makes data collection ethically sound.

Consent forms must be clear, accessible, and free from technical jargon so patients can make meaningful decisions.

In AI applications, consent must include information about potential model training, data sharing with research teams, and long-term storage.

Informed consent strengthens the patient–provider relationship by building trust and encouraging transparency in digital health practices.
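
The four questions that informed consent answers (what data, why, who, and how long) can be captured as a structured record that software checks before any access. The following is a minimal sketch, not a prescribed schema: `ConsentRecord`, `is_permitted`, and all field names are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import FrozenSet


@dataclass(frozen=True)
class ConsentRecord:
    """One patient's informed-consent answers (hypothetical schema)."""
    patient_id: str
    data_categories: FrozenSet[str]  # what data is collected
    purposes: FrozenSet[str]         # why it is needed and how it will be used
    recipients: FrozenSet[str]       # who may access it
    signed_on: date                  # when consent was given
    retention_until: date            # how long the data may be stored


def is_permitted(record: ConsentRecord, category: str, purpose: str,
                 recipient: str, on: date) -> bool:
    """Allow a use only if every element was covered by the signed consent."""
    return (
        category in record.data_categories
        and purpose in record.purposes
        and recipient in record.recipients
        and record.signed_on <= on <= record.retention_until
    )


consent = ConsentRecord(
    patient_id="P-001",
    data_categories=frozenset({"lab_results", "imaging"}),
    purposes=frozenset({"treatment", "model_training"}),
    recipients=frozenset({"care_team", "research_team"}),
    signed_on=date(2024, 1, 15),
    retention_until=date(2029, 1, 15),
)
```

Anything not listed in the record is denied by default, which mirrors the idea that consent covers only what the patient was actually told about.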

4. Dynamic Consent and Modern Models of Patient Data Permission

Dynamic consent allows patients to continuously manage their data rights, opting in or out of specific uses over time.

This is particularly valuable in AI-driven healthcare, where new research uses may emerge long after initial data collection.

Digital portals enable patients to update their preferences whenever needed, ensuring ongoing participation control.

Dynamic consent improves engagement, reduces ethical conflicts, and enhances trust.

It also supports precision medicine, where evolving datasets are essential for training personalized predictive models.
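
One way to picture dynamic consent is as a time-ordered ledger of opt-in and opt-out decisions, where the most recent decision for a given purpose applies. The sketch below assumes that model; `ConsentEvent`, `ConsentLedger`, and the purpose names are hypothetical, and a real patient portal would add authentication, persistence, and an audit trail.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import List


@dataclass(frozen=True)
class ConsentEvent:
    purpose: str     # e.g. "model_training" (illustrative label)
    granted: bool    # True = opt-in, False = opt-out
    at: datetime     # when the patient made the decision


@dataclass
class ConsentLedger:
    patient_id: str
    events: List[ConsentEvent] = field(default_factory=list)

    def record(self, purpose: str, granted: bool, at: datetime) -> None:
        """Append a new decision; earlier decisions are kept as history."""
        self.events.append(ConsentEvent(purpose, granted, at))

    def allows(self, purpose: str, at: datetime) -> bool:
        """The latest decision at or before `at` wins; no decision means no consent."""
        relevant = [e for e in self.events if e.purpose == purpose and e.at <= at]
        if not relevant:
            return False
        return max(relevant, key=lambda e: e.at).granted


ledger = ConsentLedger("P-001")
ledger.record("model_training", True, datetime(2023, 3, 1))
ledger.record("model_training", False, datetime(2024, 6, 1))  # patient later opts out
```

Keeping the full history rather than overwriting a flag makes it possible to answer whether a given use was covered at the time it happened.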

5. Data Minimization, Anonymization, and De-identification Requirements

Legal regulations increasingly promote data minimization, that is, collecting only what is necessary for a specific purpose, to reduce privacy risks.

Techniques such as anonymization, tokenization, and de-identification are essential for removing personally identifiable information (PII) before using data for analytics or AI development.

Properly anonymized datasets minimize the risk of re-identification while still supporting valuable insights.

These practices ensure compliance with laws and help organizations maintain ethical standards in data-driven research.
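
The combination of minimization, tokenization, and generalization can be sketched in a few lines. This is an illustration under stated assumptions, not a compliance recipe: the field names, the `ALLOWED_FIELDS` set, and the ISO `YYYY-MM-DD` date format are hypothetical, and keyed tokenization is pseudonymization, which on its own does not make a dataset anonymous under any particular law.

```python
import hashlib
import hmac

# Minimization: only these source fields are read (hypothetical field names).
ALLOWED_FIELDS = {"patient_id", "birth_date", "diagnosis_code", "lab_value"}


def tokenize(value: str, key: bytes) -> str:
    """Replace an identifier with a keyed HMAC-SHA256 token.

    The same input and key always give the same token, so records can still
    be linked, but the token cannot be reversed without the secret key.
    """
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def deidentify(record: dict, key: bytes) -> dict:
    """Drop unneeded fields, tokenize the ID, and coarsen the birth date."""
    kept = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    return {
        "patient_token": tokenize(kept["patient_id"], key),
        # Generalization: keep only the year (assumes "YYYY-MM-DD" strings).
        "birth_year": int(kept["birth_date"][:4]),
        "diagnosis_code": kept["diagnosis_code"],
        "lab_value": kept["lab_value"],
    }


raw = {
    "patient_id": "MRN-001",
    "name": "Jane Doe",          # direct identifier, dropped
    "address": "1 Main St",      # direct identifier, dropped
    "birth_date": "1984-06-02",
    "diagnosis_code": "E11.9",
    "lab_value": 7.2,
}
clean = deidentify(raw, key=b"demo-key-keep-secret")
```

In practice the key would be stored separately from the dataset, and the remaining fields would still need a re-identification risk review before release.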

6. Legal Responsibilities for Data Security and Breach Management

Healthcare providers and data processors must implement robust security measures, such as encryption, access controls, authentication protocols, and regular audits, to prevent data breaches.

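Legal regulations typically require prompt reporting of breaches, transparent communication to affected individuals, and corrective actions to prevent recurrence. For example, GDPR requires notifying the supervisory authority within 72 hours of becoming aware of a breach where feasible, and HIPAA requires notifying affected individuals without unreasonable delay and no later than 60 days after discovery.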

Breaches can lead to severe financial penalties, operational downtime, and a long-term loss of public trust.

Strong legal accountability ensures that organizations prioritize data security as a fundamental responsibility.
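
Access controls and audits can reinforce each other: every access decision is logged, and each log entry includes the hash of the previous one so that later tampering is detectable. The sketch below assumes a simple role-to-permission table; `ROLE_PERMISSIONS`, `AuditLog`, and `authorize` are hypothetical names, and a production system would also need secure storage and real authentication.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical role-based permissions.
ROLE_PERMISSIONS = {
    "clinician": {"read_record", "write_record"},
    "analyst": {"read_deidentified"},
}


def _digest(body: dict) -> str:
    """Hash an entry's content deterministically."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log in which each entry stores the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    def append(self, user, role, action, allowed, at):
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {"user": user, "role": role, "action": action,
                "allowed": allowed, "at": at.isoformat(), "prev_hash": prev_hash}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute every hash and check that the chain links are intact."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


def authorize(log, user, role, action, at=None):
    """Decide on an access request and log the decision, allowed or denied."""
    at = at or datetime.now(timezone.utc)
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    log.append(user, role, action, allowed, at)
    return allowed
```

Logging denied requests as well as granted ones matters for audits, since repeated denials are often the first visible sign of attempted misuse.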

7. Consent Challenges in AI and Big Data Healthcare Projects

AI projects often rely on large, diverse datasets that may have been collected for purposes different from the models’ intended use.

This creates challenges in obtaining valid consent, especially when historical or multi-institutional data is involved.

Additionally, patients may not fully understand the implications of AI training or secondary data use.

Ensuring consent remains valid and ethically sound requires clear communication, ongoing updates, and adherence to privacy-by-design principles.

These challenges highlight the need for evolving consent frameworks in modern healthcare.
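
For secondary use, a practical first step is to separate records whose recorded consent already covers the new purpose from those that need re-consent or another lawful basis. The sketch below assumes each record carries a `consented_purposes` set; that field and the function name `partition_by_consent` are hypothetical.

```python
def partition_by_consent(records, new_purpose):
    """Split records into those usable for `new_purpose` and those that are not.

    Records with no recorded purposes are treated as not consented.
    """
    usable, needs_reconsent = [], []
    for rec in records:
        purposes = rec.get("consented_purposes", set())
        if new_purpose in purposes:
            usable.append(rec)
        else:
            needs_reconsent.append(rec)
    return usable, needs_reconsent


historical = [
    {"id": "A1", "consented_purposes": {"treatment", "model_training"}},
    {"id": "B2", "consented_purposes": {"treatment"}},
    {"id": "C3"},  # legacy record with no consent metadata
]
usable, needs_reconsent = partition_by_consent(historical, "model_training")
```

Treating missing consent metadata as a refusal is a conservative default that suits historical and multi-institutional data, where the original terms are often unclear.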

8. Balancing Innovation with Patient Privacy and Legal Compliance

Healthcare organizations must balance the need for data-driven innovation with strict adherence to legal and ethical obligations.

While AI, predictive analytics, and personalized medicine rely on large datasets, these advancements must not compromise privacy or patient rights.

Legal compliance encourages responsible innovation by promoting transparency, fairness, and secure data-sharing practices.