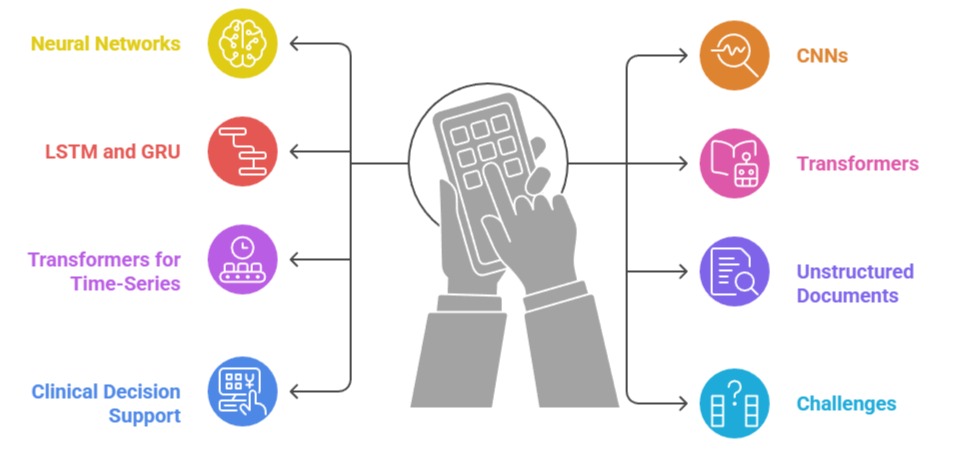

Neural networks and transformer-based architectures have become fundamental tools in modern healthcare AI, enabling highly accurate analysis of clinical text, medical records, physiological signals, and time-series patient data.

Traditional statistical models often struggle to capture complex, nonlinear patterns present in healthcare data, whereas neural networks can automatically learn hierarchical representations that reflect real-world clinical complexity.

This makes them highly effective for diagnosing diseases, predicting risks, understanding patient trajectories, and processing large volumes of unstructured information.

Clinical text such as doctors’ notes, discharge summaries, pathology reports, and radiology interpretations is rich in insights but difficult to analyze using rule-based methods.

Transformer models like BERT, BioBERT, and ClinicalBERT excel because they understand context, medical terminology, and sentence structure, enabling tasks such as entity extraction, summarization, and clinical sentiment analysis.

Meanwhile, time-series patient data from wearables, ICU monitors, and continuous lab measurements require models capable of capturing temporal dependencies and long-term patterns.

Neural networks such as LSTMs, GRUs, CNN-LSTMs, and attention-based transformers provide accurate predictions for sepsis detection, arrhythmia classification, deterioration prediction, and chronic disease monitoring.

Deep Learning Models for Clinical Prediction and Analysis

1. Neural Networks for Learning Complex Healthcare Patterns

Neural networks excel at identifying nonlinear relationships that exist in clinical datasets, which often include complex lab interactions, comorbidities, and physiological indicators.

Their layered architecture allows models to learn increasingly abstract features, from raw signals to high-level medical concepts, enabling accurate disease prediction and risk stratification.

Dense neural networks are widely used for structured EHR data where relationships between variables are not straightforward.

They scale well to large datasets and can be retrained or fine-tuned as new patient information becomes available.

Their flexibility makes them valuable for tasks such as diagnosis classification, treatment response prediction, and modeling patient outcomes.

Neural networks have become a core engine behind modern automated healthcare analytics.
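As a minimal sketch of the layered idea described above, the following pure-Python forward pass sends three structured features through one hidden ReLU layer and a sigmoid output. The feature names, weights, and biases are hypothetical values chosen for illustration; a real model would learn its parameters from data.

```python
import math


def relu(x):
    return x if x > 0.0 else 0.0


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def dense(inputs, weights, biases, activation):
    """One fully connected layer: activation(W @ inputs + b)."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def predict_risk(features):
    """Two-layer network mapping 3 scaled features to a score in (0, 1)."""
    # Hypothetical, hand-picked parameters (not trained).
    w_hidden = [[0.8, -0.5, 0.3], [-0.4, 0.9, 0.6]]
    b_hidden = [0.1, -0.2]
    w_out = [[1.2, 0.7]]
    b_out = [-0.5]
    hidden = dense(features, w_hidden, b_hidden, relu)
    return dense(hidden, w_out, b_out, sigmoid)[0]


# Assumed inputs: standardized age, lactate, and heart rate.
risk = predict_risk([0.5, 1.2, -0.3])
```

The ReLU in the hidden layer is what makes the mapping nonlinear: here the first hidden unit is switched off entirely for this patient, so only the second unit contributes to the output.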

2. Role of CNNs in Healthcare Time-Series and Signal Analysis

Convolutional Neural Networks (CNNs), originally designed for image processing, also perform exceptionally well in analyzing physiological signals like ECG, EEG, and respiratory waveforms.

Their ability to extract local patterns makes them ideal for identifying peaks, anomalies, or recurring patterns that indicate clinical abnormalities.

CNNs reduce manual feature engineering by learning filters directly from raw signals, simplifying workflows while improving accuracy.

They are widely used in arrhythmia detection, sleep stage classification, and neurological disorder analysis.

When combined with temporal models like LSTMs, CNNs can capture both local waveform features and longer-range temporal patterns, enhancing prediction performance.

This makes CNNs highly effective in real-time monitoring settings such as ICUs and remote patient tracking.
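To make the idea of local pattern extraction concrete, here is a small sketch of the sliding-filter operation that a 1D convolutional layer applies to a signal. The toy trace and the hand-set spike filter are assumptions for illustration; in a trained CNN the filter values are learned rather than written by hand.

```python
def conv1d(signal, kernel):
    """Valid-mode 1D cross-correlation, the operation CNN layers apply."""
    k = len(kernel)
    return [
        sum(signal[i + j] * kernel[j] for j in range(k))
        for i in range(len(signal) - k + 1)
    ]


def peak_positions(signal, kernel, threshold):
    """Signal indices where the filter response exceeds the threshold."""
    response = conv1d(signal, kernel)
    offset = len(kernel) // 2  # map a window start to the window center
    return [i + offset for i, r in enumerate(response) if r > threshold]


# Toy ECG-like trace: near-flat baseline with two sharp spikes (made-up values).
ecg = [0.0, 0.1, 0.0, 1.0, 0.1, 0.0, 0.0, 0.1, 1.1, 0.0, 0.0]
# Hand-set "spike detector"; responds when a sample exceeds its neighbors.
spike_filter = [-0.5, 1.0, -0.5]
peaks = peak_positions(ecg, spike_filter, threshold=0.5)
```

With these values `peaks` is `[3, 8]`, the two positions where the trace spikes. A real model would stack many such filters and learn them jointly with the classifier.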

3. LSTM and GRU Networks for Sequential Clinical Data

LSTMs and GRUs are specifically designed to capture long-term dependencies in time-series data, which is crucial for understanding patient trajectories, medication effects, and chronic disease progression.

They retain information over longer periods, allowing them to model trends such as gradual deterioration or recovery patterns.

These models are particularly useful for early warning systems that predict sepsis, cardiac arrest, or readmission risk. Their sequential learning approach mirrors how clinicians interpret patient history over time.

With suitable preprocessing, such as resampling, masking, or time-gap features, LSTM-based models can process irregularly sampled and high-dimensional data, making them well-suited for EHR time-series, wearable device data, and lab result sequences.
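The way a gated recurrent unit carries information forward can be sketched with a single scalar GRU cell. This is an illustrative toy, not a production layer: the weights in `params` and the `lactate_trend` values are hypothetical, and the update convention used here (z near 1 adopts the new candidate) is one of two in common use, since some libraries swap the roles of z and 1 - z.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def gru_step(x, h, p):
    """One scalar GRU update; p holds hypothetical weights."""
    z = sigmoid(p["wz"] * x + p["uz"] * h + p["bz"])  # update gate
    r = sigmoid(p["wr"] * x + p["ur"] * h + p["br"])  # reset gate
    h_cand = math.tanh(p["wh"] * x + p["uh"] * (r * h) + p["bh"])  # candidate
    # z near 0 keeps the old state, z near 1 adopts the candidate.
    return (1.0 - z) * h + z * h_cand


def run_gru(sequence, p, h0=0.0):
    """Feed a sequence through the cell and return every hidden state."""
    states, h = [], h0
    for x in sequence:
        h = gru_step(x, h, p)
        states.append(h)
    return states


params = {
    "wz": 1.0, "uz": 0.5, "bz": 0.0,
    "wr": 1.0, "ur": 0.5, "br": 0.0,
    "wh": 1.5, "uh": 1.0, "bh": 0.0,
}
# Made-up, normalized lab values that rise over time.
lactate_trend = [0.1, 0.2, 0.4, 0.7, 1.0]
states = run_gru(lactate_trend, params)
```

The hidden state rises step by step with the input trend, which is the mechanism that lets such a cell summarize gradual deterioration. Pushing the update-gate bias strongly negative makes the cell hold its previous state almost unchanged, which is how long-term memory is retained.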

4. Transformers for Clinical Text Understanding

Transformer architectures revolutionized natural language processing by enabling context-aware understanding of text without the step-by-step sequential processing that RNNs rely on.

In healthcare, transformers such as BERT, ClinicalBERT, BlueBERT, and BioGPT are used to extract medical entities, identify diagnoses, summarize reports, and analyze clinical notes with high precision.

Their attention mechanisms help models focus on the most important words or phrases, mimicking how clinicians read and interpret medical documents.

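Transformers can analyze entire medical histories or multi-page reports, but not in a single pass by default: BERT-style models accept at most 512 tokens and self-attention cost grows quadratically with sequence length, so long documents are usually split into chunks or handled by long-context variants such as Longformer.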

They can significantly improve medical coding accuracy, documentation quality, and clinical decision support.
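BERT-style encoders accept a bounded number of tokens per input (512 for the original BERT), so multi-page reports are commonly split into overlapping windows before encoding. The sketch below shows one way to do that. The function name `chunk_tokens`, its parameters, and the placeholder tokens are assumptions for illustration; a real pipeline would use the model's own subword tokenizer and reserve room for special tokens.

```python
def chunk_tokens(tokens, max_len=512, overlap=128):
    """Split a token list into overlapping windows of at most max_len tokens.

    Consecutive windows share `overlap` tokens so that an entity near a
    boundary appears whole in at least one window.
    """
    if not 0 <= overlap < max_len:
        raise ValueError("overlap must be non-negative and smaller than max_len")
    step = max_len - overlap
    chunks = []
    start = 0
    while True:
        chunks.append(tokens[start:start + max_len])
        if start + max_len >= len(tokens):
            break  # this window already reaches the end of the document
        start += step
    return chunks


# Placeholder tokens standing in for a tokenized discharge summary.
note = [f"tok{i}" for i in range(1000)]
chunks = chunk_tokens(note, max_len=512, overlap=128)
```

For this 1000-token note the function yields three windows of 512, 512, and 232 tokens. Per-window predictions then need to be merged, for example by de-duplicating entities found in the overlapping regions.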

5. Transformers for Time-Series Data Through Attention Mechanisms

Transformers are increasingly applied to time-series healthcare data due to their ability to capture both short-term and long-term dependencies using self-attention.

Unlike RNN-based models, transformers process all time steps simultaneously, enabling faster training and better understanding of complex temporal relationships.

This is particularly beneficial for ICU monitoring, wearable data analysis, and forecasting disease progression.

Attention mechanisms help identify which time points are clinically meaningful, such as sudden spikes in heart rate or drops in oxygen saturation.

Transformers often outperform LSTMs on large datasets, making them an emerging standard in advanced healthcare prediction models.
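The self-attention step described in this section can be sketched in a few lines of plain Python. To keep the example short it uses the raw inputs as queries, keys, and values, whereas a real transformer applies learned projections first; the vitals below are made-up, standardized values.

```python
import math


def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values):
    """Scaled dot-product attention; returns (outputs, weights)."""
    d = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Every time step is scored against this query, with no recurrence.
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys
        ]
        w = softmax(scores)
        weights.append(w)
        outputs.append(
            [sum(wt * v[j] for wt, v in zip(w, values)) for j in range(len(values[0]))]
        )
    return outputs, weights


# Each row is one time step: [heart rate z-score, SpO2 z-score].
# Step 2 is a simulated event: heart rate spike with an oxygen drop.
vitals = [[0.0, 0.1], [0.1, 0.0], [2.5, -2.0], [0.2, 0.1]]
outputs, weights = self_attention(vitals, vitals, vitals)
```

Each row of `weights` sums to 1 and shows how much one time step draws on every other step. In this toy example the row for the abnormal step puts almost all of its weight on that step itself.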

6. Handling Unstructured Medical Documents at Scale

Neural networks and transformers enable automated analysis of unstructured medical documents, which are commonly estimated to make up around 80% of healthcare data.

Using NLP techniques, models can extract symptoms, medications, lab results, and clinical impressions without manual chart review.

This reduces the administrative burden on clinicians while improving data accessibility for research and AI systems.

Transformers understand abbreviations, clinical jargon, and contextual meanings, enabling more accurate document classification and information retrieval.

Large-scale text processing supports population health management, epidemiology studies, and real-time clinical decision support.
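Entity extraction models of the kind described above typically emit one label per token, often in the BIO scheme (B- begins an entity, I- continues it, O is outside). The sketch below shows only the post-processing step that turns such labels into entity spans. The tokens, tags, and label names are hypothetical stand-ins for real model output.

```python
def bio_to_spans(tokens, tags):
    """Group BIO-tagged tokens into (entity_type, text) spans."""
    spans = []
    current_type, current_tokens = None, []
    for token, tag in zip(tokens, tags):
        if tag.startswith("B-"):
            # A new entity starts; close any entity that was still open.
            if current_tokens:
                spans.append((current_type, " ".join(current_tokens)))
            current_type, current_tokens = tag[2:], [token]
        elif tag.startswith("I-") and current_type == tag[2:]:
            current_tokens.append(token)
        else:
            # "O" or an inconsistent I- tag closes any open entity.
            if current_tokens:
                spans.append((current_type, " ".join(current_tokens)))
            current_type, current_tokens = None, []
    if current_tokens:
        spans.append((current_type, " ".join(current_tokens)))
    return spans


# Hypothetical model output for one sentence of a clinical note.
tokens = ["Patient", "reports", "chest", "pain", ",", "started", "metoprolol", "25", "mg"]
tags = ["O", "O", "B-SYMPTOM", "I-SYMPTOM", "O", "O", "B-MEDICATION", "B-DOSE", "I-DOSE"]
entities = bio_to_spans(tokens, tags)
```

Here `entities` contains a symptom ("chest pain"), a medication ("metoprolol"), and a dose ("25 mg"), which is the structured form that downstream research or decision support systems can query.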

7. Enhancing Clinical Decision Support with Deep Learning Models

Deep learning models integrate various data streams (text, vitals, imaging, genetics) to offer holistic predictive insights for diagnosis, prognosis, and treatment planning.

Neural networks can recognize subtle patterns that are difficult for humans to detect, enabling earlier identification of clinical risks.

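Transformers can aid interpretability through attention visualization, which shows clinicians the inputs a prediction weighted most heavily, although attention weights are not always a faithful explanation of why a prediction was made.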

These models support real-time decision-making in critical care by continuously updating risk scores and alerts.

Their integration into EHR systems represents a major advancement in precision medicine and personalized treatment.
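One simple way to turn a continuously updated risk score into alerts is to require the score to stay high for several updates before firing, which limits alarms caused by a single noisy reading. The sketch below is an assumption about how such logic could look, not a description of any specific system; the threshold, the persistence count, and the scores are illustrative and not clinically validated.

```python
def alert_indices(risk_scores, threshold=0.8, consecutive=3):
    """Return the update indices at which an alert fires.

    An alert fires once the score has been at or above `threshold` for
    `consecutive` updates in a row, and re-arms only after the score
    drops below the threshold again.
    """
    alerts, run, armed = [], 0, True
    for i, score in enumerate(risk_scores):
        if score >= threshold:
            run += 1
            if armed and run >= consecutive:
                alerts.append(i)
                armed = False  # avoid repeating the alert on every update
        else:
            run, armed = 0, True
    return alerts


# Made-up stream of model risk scores, one per monitoring update.
scores = [0.2, 0.85, 0.4, 0.82, 0.9, 0.95, 0.97, 0.5, 0.81, 0.88, 0.9]
alerts = alert_indices(scores)
```

With these values the isolated high reading at index 1 is ignored, and alerts fire at indices 5 and 10, each after three consecutive high scores.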

8. Challenges and Considerations in Using Deep Learning for Healthcare Data

While powerful, neural networks and transformers require large, high-quality datasets and are sensitive to missing data, class imbalance, and noise.

Their black-box nature raises questions about interpretability and trust in clinical settings.

Deployment requires careful validation, fairness assessment, and bias analysis to ensure safe and equitable performance.

Additionally, training large transformer models demands significant computational resources and strict privacy safeguards due to sensitive patient data.

Despite these challenges, ongoing advancements in explainable AI and efficient architectures are accelerating their adoption in healthcare.
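The fairness assessment mentioned above often starts with a per-subgroup comparison of a standard metric. The sketch below computes sensitivity (true positive rate) for each group and the gap between the best and worst group. The labels, predictions, and group names are invented for illustration, and sensitivity is only one of several metrics a real audit would examine.

```python
from collections import defaultdict


def sensitivity_by_group(y_true, y_pred, groups):
    """True positive rate per subgroup; groups with no positives are omitted."""
    true_pos = defaultdict(int)
    positives = defaultdict(int)
    for t, p, g in zip(y_true, y_pred, groups):
        if t == 1:
            positives[g] += 1
            if p == 1:
                true_pos[g] += 1
    return {g: true_pos[g] / positives[g] for g in positives}


# Invented labels (1 = condition present), predictions, and subgroup tags.
y_true = [1, 1, 0, 1, 1, 0, 1, 1]
y_pred = [1, 1, 0, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

rates = sensitivity_by_group(y_true, y_pred, groups)
gap = max(rates.values()) - min(rates.values())
```

In this toy data the model catches two of three true cases in group A but only one of three in group B, a gap that would warrant investigation before deployment.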