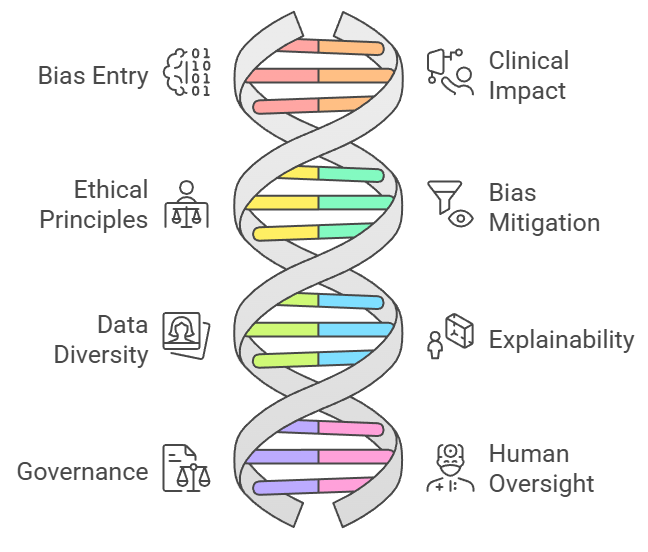

Ethics and bias in healthcare AI models are critical concerns because AI systems influence decisions that directly affect patient lives, clinical outcomes, and access to care.

Healthcare AI often relies on historical medical data, which may contain existing inequalities, demographic imbalances, or patterns of systemic discrimination.

When models are trained on such data, they can inadvertently learn and even amplify these biases, leading to unfair outcomes such as misdiagnosis in underrepresented populations, unequal treatment recommendations, or inaccurate risk scores.

Ensuring ethical AI usage involves not only technical fairness methods but also a deeper understanding of clinical context, societal values, and patient rights.

Healthcare AI must align with principles of equity, transparency, accountability, and patient-centered care. This requires continuous monitoring, robust validation across diverse patient groups, clear documentation of model limitations, and clinician involvement in evaluation.

Explainability plays a key role, as clinicians and patients need to understand how predictions are generated, especially in sensitive domains such as triage, disease classification, radiology, and mental health assessment.

Ethical deployment also requires compliance with data protection laws, safeguarding patient privacy, and preventing the misuse of sensitive medical information.

With AI increasingly embedded in diagnostics, decision support systems, and risk prediction tools, addressing bias and fairness is essential to ensure trust, safety, and responsible use.

A proactive ethical framework ensures that AI enhances healthcare quality without compromising equity or patient well-being.

Responsible AI Design and Deployment in Healthcare

1. Understanding How Bias Enters Healthcare AI Systems

Bias can enter AI models through data collection, feature selection, sampling methods, or embedded assumptions in clinical documentation.

Historical data often reflects inequalities, such as lower healthcare access for certain ethnic or socioeconomic groups, which AI systems may inadvertently learn.

Models trained on skewed datasets may underperform for underrepresented groups, producing inaccurate diagnoses or risk estimates.

Even seemingly neutral variables can encode demographic patterns that reinforce disparities; for example, postal code or past healthcare spending can act as a proxy for race or income.

Understanding these pathways helps build safeguards and ensures fairness at every stage of model development.
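One simple screening step for proxy variables is to measure how strongly a candidate feature correlates with membership in a demographic group. The sketch below computes a Pearson (point-biserial) correlation in plain Python. The spending figures, the group indicator, and the 0.5 review threshold are all hypothetical. A low correlation does not rule out proxy effects, since combinations of features can still encode group membership.

```python
from math import sqrt

def point_biserial(feature, group):
    """Pearson correlation between a numeric feature and a 0/1 group indicator.

    Assumes neither list is constant (otherwise the denominator is zero).
    """
    n = len(feature)
    mean_x = sum(feature) / n
    mean_g = sum(group) / n
    cov = sum((x - mean_x) * (g - mean_g) for x, g in zip(feature, group))
    var_x = sum((x - mean_x) ** 2 for x in feature)
    var_g = sum((g - mean_g) ** 2 for g in group)
    return cov / sqrt(var_x * var_g)

# Hypothetical toy data: past yearly healthcare spending (in $1000s) and a
# 0/1 indicator for membership in a historically underserved group.
spending = [8.0, 7.5, 9.0, 8.5, 3.0, 2.5, 3.5, 4.0]
underserved = [0, 0, 0, 0, 1, 1, 1, 1]

r = point_biserial(spending, underserved)
if abs(r) > 0.5:  # hypothetical threshold for flagging a feature for review
    print(f"Possible proxy feature: r = {r:.2f}")
```

A strongly negative value here means spending largely tracks group membership, so a model using it could reproduce historical under-treatment even without seeing the group label directly.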

2. Impact of Bias on Clinical Decision-Making and Patient Safety

Biased AI models can negatively influence clinical decisions by providing inconsistent or inaccurate outputs for specific patient groups.

This may result in delayed diagnoses, incorrect treatment recommendations, inappropriate risk stratification, or unequal allocation of medical resources.

In high-stakes settings like emergency care or cancer detection, even small biases can lead to life-threatening consequences.

When clinicians unknowingly rely on biased predictions, patients can be harmed, and once such failures come to light, trust in AI systems deteriorates. Addressing bias is therefore essential for maintaining patient safety, improving medical accuracy, and ensuring equitable treatment delivery.

3. Ethical Principles for Fair and Responsible Healthcare AI

Healthcare AI must follow ethical principles such as justice, beneficence, autonomy, and non-maleficence.

Justice ensures fairness across demographic groups, while beneficence and non-maleficence require models to maximize benefit and minimize harm.

Patient autonomy demands transparency so individuals understand how AI-based decisions affect their care.

Ethical frameworks guide model design, evaluation, and deployment, ensuring that AI aligns with societal values and medical standards.

These principles encourage responsible innovation and safeguard vulnerable populations from unintended harm.

4. Techniques to Detect and Mitigate Bias in AI Models

Various techniques help identify and reduce bias, such as fairness metrics (equal opportunity, demographic parity), stratified validation, adversarial debiasing, and re-sampling methods.
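The two fairness metrics named above can be computed with a stratified, per-group evaluation. The sketch below is a minimal plain-Python version on hypothetical labels and predictions: demographic parity compares the rate of positive predictions across groups, and equal opportunity compares true positive rates (the share of actual cases the model catches). Libraries such as Fairlearn offer comparable metrics; this standalone version only illustrates the definitions.

```python
def rate(values):
    """Fraction of 1s in a list of 0/1 predictions."""
    return sum(values) / len(values)

def fairness_report(y_true, y_pred, groups):
    """Per-group selection rate and true positive rate, plus the largest gaps.

    Assumes every group has at least one positive case (y_true == 1).
    """
    report = {}
    for g in sorted(set(groups)):
        idx = [i for i, grp in enumerate(groups) if grp == g]
        report[g] = {
            # P(prediction = 1 | group): compared for demographic parity
            "selection_rate": rate([y_pred[i] for i in idx]),
            # P(prediction = 1 | true label = 1, group): compared for equal opportunity
            "tpr": rate([y_pred[i] for i in idx if y_true[i] == 1]),
        }
    sel = [m["selection_rate"] for m in report.values()]
    tpr = [m["tpr"] for m in report.values()]
    return report, max(sel) - min(sel), max(tpr) - min(tpr)

# Hypothetical labels and predictions for two patient groups.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

report, dp_gap, eo_gap = fairness_report(y_true, y_pred, groups)
print(report)
print(f"demographic parity difference: {dp_gap:.2f}")
print(f"equal opportunity difference: {eo_gap:.2f}")
```

In this toy data, group B has a true positive rate of 0.5 against 1.0 for group A, so half of group B's actual cases are missed: the kind of gap that would show up clinically as delayed diagnoses.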

Data augmentation can improve representation of minority groups, while model constraints can enforce fairness-aware learning.
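Re-sampling, listed among the techniques above, can be as simple as randomly duplicating records from smaller groups until every group is the same size. The sketch below assumes records are dictionaries with a hypothetical `"group"` field; it balances group sizes only, not the label mix within each group.

```python
import random
from collections import Counter, defaultdict

def oversample_groups(records, group_key, seed=0):
    """Randomly duplicate records from smaller groups until every group
    matches the size of the largest group."""
    rng = random.Random(seed)  # fixed seed so the resampling is reproducible
    by_group = defaultdict(list)
    for rec in records:
        by_group[rec[group_key]].append(rec)
    target = max(len(recs) for recs in by_group.values())
    balanced = []
    for recs in by_group.values():
        balanced.extend(recs)
        # Draw with replacement to fill the shortfall for this group.
        balanced.extend(rng.choice(recs) for _ in range(target - len(recs)))
    return balanced

# Hypothetical training records: six from group A, two from group B.
records = [{"group": "A", "id": i} for i in range(6)] + \
          [{"group": "B", "id": i} for i in range(6, 8)]
balanced = oversample_groups(records, "group")
print(Counter(rec["group"] for rec in balanced))
```

Resampling should be applied only to the training split, never to validation or test data, and heavy duplication of a few records can cause overfitting to those patients, which is one reason collecting more representative data is usually preferable.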

Post-hoc corrections, such as recalibrating scores or adjusting decision thresholds, can reduce bias in predictions before outputs are presented to clinicians.
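One form of post-hoc correction is choosing a separate decision threshold for each group so that every group reaches the same target true positive rate. The sketch below is a simplified illustration of that idea, not a full equalized-odds post-processing method; the scores, groups, and the 0.8 target are hypothetical. Using group membership at decision time can raise its own legal and ethical questions, so any such adjustment needs clinical and governance review.

```python
def threshold_for_tpr(scores, labels, target_tpr):
    """Highest threshold (predict positive when score >= threshold) whose
    true positive rate reaches target_tpr on this data."""
    pos = sorted((s for s, y in zip(scores, labels) if y == 1), reverse=True)
    if not pos:
        raise ValueError("need at least one positive case to set a threshold")
    for i, t in enumerate(pos, start=1):
        # A threshold of t flags at least the i highest-scoring positives.
        if i / len(pos) >= target_tpr:
            return t
    return pos[-1]

def group_thresholds(scores, labels, groups, target_tpr=0.8):
    """Compute one threshold per group for the same target TPR."""
    thresholds = {}
    for g in set(groups):
        s = [sc for sc, grp in zip(scores, groups) if grp == g]
        y = [lb for lb, grp in zip(labels, groups) if grp == g]
        thresholds[g] = threshold_for_tpr(s, y, target_tpr)
    return thresholds

# Hypothetical risk scores where the model systematically under-scores group B.
scores = [0.9, 0.8, 0.7, 0.3, 0.6, 0.5, 0.4, 0.2]
labels = [1, 1, 0, 0, 1, 1, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
print(group_thresholds(scores, labels, groups))
```

With a single global threshold of 0.8, neither positive case in group B would be flagged; the per-group thresholds catch both groups' positive cases at the cost of treating scores differently by group.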

Continuous monitoring during deployment helps confirm that models remain fair as patient populations evolve.
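Monitoring can reuse the same per-group metrics on recent deployment data. The sketch below compares the between-group true positive rate gap in a recent window against a gap measured at validation time and raises an alert when it widens by more than a tolerance. The window format, baseline, and 0.05 tolerance are hypothetical; in practice confirmed outcomes often arrive with a delay, so windows must be defined on cases whose true labels are known.

```python
from collections import defaultdict

def true_positive_rate(pairs):
    """pairs: list of (y_true, y_pred); returns None if there are no positives."""
    flagged = [p for t, p in pairs if t == 1]
    return sum(flagged) / len(flagged) if flagged else None

def tpr_gap_alert(window, baseline_gap, tolerance=0.05):
    """Compare the between-group TPR gap in a recent window to a baseline.

    window: iterable of (group, y_true, y_pred) tuples with confirmed outcomes.
    Returns (current_gap, alert_flag).
    """
    by_group = defaultdict(list)
    for group, y_true, y_pred in window:
        by_group[group].append((y_true, y_pred))
    rates = [r for r in (true_positive_rate(p) for p in by_group.values())
             if r is not None]
    if len(rates) < 2:
        return None, False  # not enough groups with positive cases to compare
    gap = max(rates) - min(rates)
    return gap, gap > baseline_gap + tolerance

# Hypothetical recent window: the model now misses most group B cases.
window = [("A", 1, 1), ("A", 1, 1),
          ("B", 1, 1), ("B", 1, 0), ("B", 1, 0), ("B", 0, 0)]
gap, alert = tpr_gap_alert(window, baseline_gap=0.10)
print(f"current TPR gap: {gap:.2f}, alert: {alert}")
```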

Combining technical methods with clinical expertise creates robust, equitable AI systems.

5. Importance of Diverse and Representative Healthcare Data

AI fairness depends heavily on the diversity and quality of training data.

Collecting datasets that span a wide range of ages, ethnicities, genders, and socioeconomic backgrounds helps models learn the clinical variation found in real patient populations.

Representative data improves the accuracy of diagnostic tools, risk scoring systems, and treatment prediction models across all populations.

Without such diversity, models generalize poorly and may perpetuate health disparities.

Ensuring data inclusiveness is a foundational step toward ethical AI development.
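A basic representation check compares each group's share of the training data with its share of the population the model will serve. The sketch below flags groups whose dataset share falls below a chosen fraction of their reference share. The age bands, counts, reference shares, and the 0.8 cutoff are hypothetical; real reference figures would come from the intended deployment population.

```python
def representation_gaps(dataset_counts, reference_shares, min_ratio=0.8):
    """Flag groups whose share of the dataset is less than min_ratio times
    their share of the reference population.

    Returns {group: dataset_share / reference_share} for flagged groups.
    """
    total = sum(dataset_counts.values())
    flagged = {}
    for group, ref_share in reference_shares.items():
        share = dataset_counts.get(group, 0) / total
        ratio = share / ref_share
        if ratio < min_ratio:
            flagged[group] = round(ratio, 2)
    return flagged

# Hypothetical training-set counts and reference population shares.
counts = {"18-39": 500, "40-64": 400, "65+": 100}
reference = {"18-39": 0.40, "40-64": 0.35, "65+": 0.25}
print(representation_gaps(counts, reference))
```

Here patients aged 65+ make up 10% of the data but 25% of the reference population, so the group is flagged. Matching population shares is a floor rather than a goal: small groups may need more than proportional representation for reliable per-group performance estimates.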

6. Explainability and Transparency in Ethical AI Deployment

Explainability enables clinicians to understand how AI arrives at predictions, which is crucial for trust and accountability.

Transparent models allow users to evaluate whether outputs align with medical reasoning and whether biases may be influencing results.

Tools like SHAP, LIME, and attention visualizations provide insights into model behavior.
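SHAP and LIME require their own libraries, so as a dependency-free stand-in the sketch below uses permutation importance, a different and simpler model-agnostic technique: shuffle one feature's values and measure how much accuracy drops. It gives a global view of which inputs the model relies on, not the per-patient explanations SHAP or LIME provide. The toy model and feature values are hypothetical.

```python
import random

def accuracy(model, X, y):
    """Fraction of rows where the model's prediction matches the label."""
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)

def permutation_importance(model, X, y, n_repeats=20, seed=0):
    """Average accuracy drop when each feature column is randomly shuffled."""
    rng = random.Random(seed)
    base = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and the label
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(base - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances

# Hypothetical model that only looks at feature 0 (e.g. a normalized lab value).
def model(row):
    return 1 if row[0] > 0.5 else 0

X = [[0.9, 0.1], [0.8, 0.7], [0.2, 0.4], [0.1, 0.9], [0.7, 0.3], [0.3, 0.6]]
y = [1, 1, 0, 0, 1, 0]
print(permutation_importance(model, X, y))
```

A clinician reviewing this output can check whether the features the model relies on make medical sense; a high importance for a demographic proxy would be a signal to investigate bias.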

Transparency also supports regulatory compliance, informed consent processes, and ethical evaluation. Explainable AI strengthens clinician confidence and enhances the safe integration of AI into healthcare workflows.

7. Governance, Accountability and Legal Compliance

Ethical AI requires strong governance frameworks that define responsibilities for model validation, monitoring, and error handling.

Accountability ensures that developers, institutions, and clinicians understand their roles when AI systems influence patient outcomes.

Compliance with regulations such as HIPAA, GDPR, and emerging AI-specific laws ensures patient privacy and protects against unauthorized data use.

Ethical oversight committees and documentation standards help ensure models remain safe, fair, and legally compliant throughout their lifecycle.
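Documentation standards can be made checkable by storing model documentation as structured data. The sketch below is loosely modeled on the "model card" idea of publishing intended use, limitations, and per-subgroup performance alongside a model; every field name, the example values, and the sign-off rule are hypothetical illustrations rather than a regulatory template.

```python
from dataclasses import dataclass

@dataclass
class ModelCard:
    name: str
    intended_use: str
    out_of_scope_uses: list[str]
    training_data: str
    subgroup_metrics: dict[str, dict[str, float]]
    known_limitations: list[str]
    owner: str          # accountable team or person
    last_reviewed: str  # ISO date of the most recent oversight review

    def missing_items(self, required_groups):
        """Return documentation gaps that should block governance sign-off."""
        gaps = [f"no metrics for subgroup {g!r}"
                for g in required_groups if g not in self.subgroup_metrics]
        if not self.known_limitations:
            gaps.append("known limitations not documented")
        return gaps

# Hypothetical card for an illustrative readmission-risk model.
card = ModelCard(
    name="readmission-risk-v1",
    intended_use="Decision support for discharge planning; not for denying care.",
    out_of_scope_uses=["pediatric patients"],
    training_data="Adult inpatient records from a single hospital system.",
    subgroup_metrics={"female": {"tpr": 0.81}, "male": {"tpr": 0.84}},
    known_limitations=["Not validated outside the training hospital system."],
    owner="Clinical AI governance team",
    last_reviewed="2024-01-15",
)
print(card.missing_items(["female", "male", "65+"]))
```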

8. Human Oversight and Avoiding Overreliance on AI Tools

AI should support, not replace, clinical judgment. Human oversight ensures that clinicians validate model outputs, apply contextual understanding, and intervene when predictions seem questionable.

Overreliance on AI can lead to automation bias, where clinicians trust inaccurate outputs without scrutiny.

Embedding human-in-the-loop review processes helps maintain balance between AI insights and clinical expertise.
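A human-in-the-loop process can be partly encoded as routing rules that decide when a clinician must review an AI output before it is acted on. The sketch below sends scores in an uncertain middle band, and any prediction for a subgroup where validation was weaker, to mandatory review. The band limits, group names, and return strings are hypothetical; every output remains advisory either way.

```python
def route_prediction(prob, group, uncertain_band=(0.3, 0.7),
                     review_groups=frozenset()):
    """Decide how an AI risk score is handled before it reaches a decision.

    All outputs stay advisory; this only decides whether a mandatory
    clinician review is triggered first.
    """
    low, high = uncertain_band
    if low <= prob <= high:
        return "clinician_review: uncertain score"
    if group in review_groups:
        return "clinician_review: subgroup with weaker validation"
    return "advisory: show with explanation"

# Hypothetical usage: group "B" had weaker validation results.
print(route_prediction(0.55, "A"))
print(route_prediction(0.92, "B", review_groups={"B"}))
print(route_prediction(0.92, "A", review_groups={"B"}))
```

Routing rules like these reduce automation bias only if review is genuine; audit logs of how often clinicians override the model are one way to check that.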

This approach reinforces patient safety and ensures AI enhances, rather than dictates, medical decision-making.
