Recurrent Neural Networks (RNNs) and transformer architectures are two foundational deep learning approaches used for modeling sequential data in healthcare.

Many forms of medical information, such as patient histories, clinical notes, diagnostic timelines, ICU monitoring signals, and wearable sensor streams, are inherently sequential, requiring models that can capture temporal relationships.

RNN-based models, especially LSTM and GRU networks, were for years the dominant tools for time-series and text processing because they carry a hidden state forward from previous inputs, enabling them to learn patterns across time.

These networks have been applied extensively in predicting disease progression, detecting anomalies in physiological signals, forecasting patient deterioration, and extracting insights from medical documentation.

Transformers represent the next major advancement. They replace recurrence with self-attention, allowing the model to understand relationships between distant data points more effectively and process entire sequences simultaneously.

In healthcare, transformers power models like ClinicalBERT and BioGPT, enabling precise clinical text understanding, medical coding, summarization, and extraction of symptoms or treatments from unstructured notes.

For time-series data, transformer-based architectures often outperform traditional RNNs when large datasets are available, because self-attention can relate distant time steps directly and weight the most informative temporal features.

Together, RNNs and transformers provide a comprehensive toolkit for analyzing sequential healthcare data.

They enhance clinical decision support, improve real-time monitoring systems, and enable more accurate predictive analytics across clinical environments. Their use is central to building intelligent, adaptive, and trustworthy healthcare AI systems.

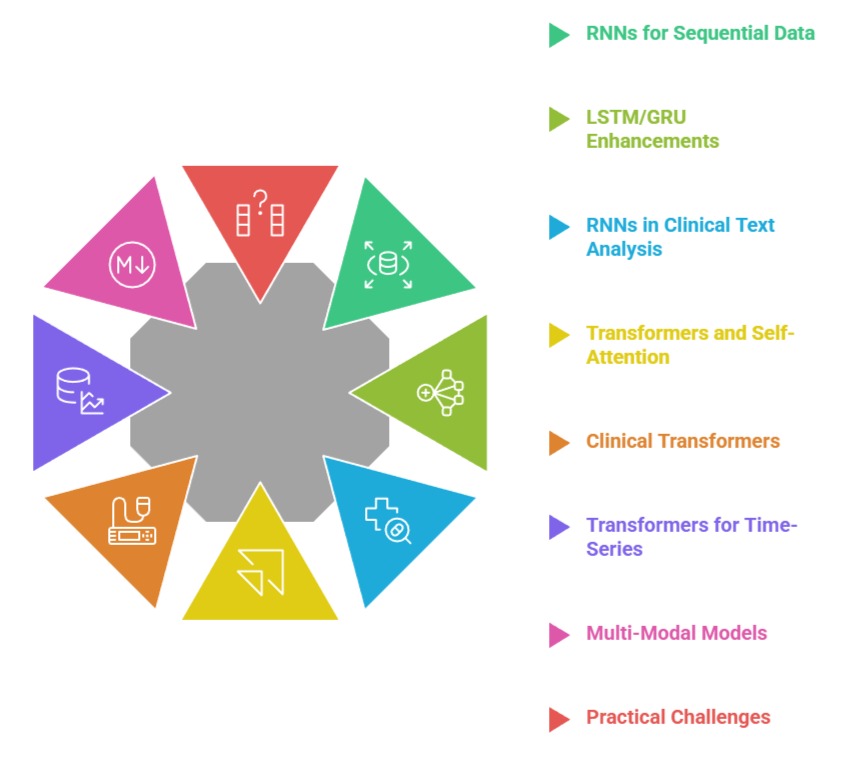

Sequence Modeling Architectures in Healthcare AI

1. Strength of RNNs in Modeling Sequential Healthcare Data

RNNs are explicitly designed to handle sequences, making them well-suited for healthcare data where the order of events matters—such as medication administration, symptom progression, or lab test patterns.

They maintain a hidden memory that updates with each new time step, enabling them to learn temporal dependencies that traditional neural networks cannot capture.
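
The hidden-memory update can be made concrete with a minimal sketch of a simple (Elman-style) recurrent layer in NumPy. The feature count, hidden size, and random weights below are illustrative assumptions, not values from any clinical model.

```python
import numpy as np

def rnn_forward(x_seq, W_xh, W_hh, b_h):
    """Run a simple tanh RNN over a sequence and return every hidden state."""
    h = np.zeros(W_hh.shape[0])        # hidden memory starts empty
    states = []
    for x_t in x_seq:                  # one time step at a time, in order
        # New memory mixes the current input with the previous memory.
        h = np.tanh(W_xh @ x_t + W_hh @ h + b_h)
        states.append(h)
    return np.stack(states)

rng = np.random.default_rng(0)
n_steps, n_features, hidden_size = 5, 3, 4   # e.g. 3 vitals over 5 readings (hypothetical)
x_seq = rng.normal(size=(n_steps, n_features))
W_xh = rng.normal(scale=0.5, size=(hidden_size, n_features))
W_hh = rng.normal(scale=0.5, size=(hidden_size, hidden_size))
b_h = np.zeros(hidden_size)

states = rnn_forward(x_seq, W_xh, W_hh, b_h)
print(states.shape)  # (5, 4): one hidden vector per time step
```

Each row of `states` depends on every earlier input through `h`, which is what lets the network treat a patient record as an ordered sequence rather than as independent rows.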

In clinical workflows, RNNs allow models to interpret patient history as a continuous narrative instead of isolated data points.

This makes them effective for tasks like readmission prediction, treatment outcome modeling, and early warning system development.

Real-world EHR data arrives at uneven intervals. Plain RNNs assume evenly spaced steps, so irregular sampling is usually handled by feeding the elapsed time between observations as an extra input or by using time-aware recurrent variants. Although newer models outperform them in some tasks, RNNs remain reliable for many structured and moderately sized datasets.
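
One common way to expose uneven sampling to a recurrent model is to append the time elapsed since the previous observation as an extra feature. The sketch below assumes timestamps in hours. The helper name `add_time_deltas` and the lab values are hypothetical.

```python
import numpy as np

def add_time_deltas(timestamps_hours, values):
    """Append hours-since-previous-observation as an extra feature column."""
    timestamps_hours = np.asarray(timestamps_hours, dtype=float)
    values = np.asarray(values, dtype=float)
    # The first observation has no predecessor, so its delta is 0.
    deltas = np.diff(timestamps_hours, prepend=timestamps_hours[0])
    return np.column_stack([values, deltas])

# Hypothetical lab draws at uneven intervals (hours since admission).
timestamps = [0.0, 1.5, 2.0, 8.0]
lab_values = [[2.1], [2.4], [2.2], [3.9]]

features = add_time_deltas(timestamps, lab_values)
print(features.shape)  # (4, 2): original value plus time gap
```

The model then sees both the measurement and how stale the previous one was, so a six-hour gap is no longer indistinguishable from a thirty-minute one.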

2. LSTM and GRU Enhancements for Long-Term Clinical Dependencies

Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU) networks address the limitations of simple RNNs by incorporating gates that manage the flow of information.

This allows them to retain long-term context, which is especially critical in healthcare where symptoms may evolve slowly or where early indicators play an important role in later outcomes.
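
As an illustration of how gates manage the flow of information, here is a minimal sketch of a single GRU step in NumPy. The parameter names and sizes are assumptions. The final blend follows the convention where an update gate near 1 keeps the old state; some references swap the roles of `z` and `1 - z`.

```python
import numpy as np

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def gru_step(x_t, h_prev, p):
    """One GRU step; p is a dict of weight matrices and bias vectors."""
    z = sigmoid(p["W_z"] @ x_t + p["U_z"] @ h_prev + p["b_z"])  # update gate
    r = sigmoid(p["W_r"] @ x_t + p["U_r"] @ h_prev + p["b_r"])  # reset gate
    # Candidate state: the reset gate decides how much old memory to consult.
    h_cand = np.tanh(p["W_h"] @ x_t + p["U_h"] @ (r * h_prev) + p["b_h"])
    # z close to 1 carries the old state forward; z close to 0 overwrites it.
    return z * h_prev + (1.0 - z) * h_cand

rng = np.random.default_rng(1)
n_features, hidden_size = 3, 4               # illustrative sizes
params = {}
for gate in ("z", "r", "h"):
    params[f"W_{gate}"] = rng.normal(scale=0.5, size=(hidden_size, n_features))
    params[f"U_{gate}"] = rng.normal(scale=0.5, size=(hidden_size, hidden_size))
    params[f"b_{gate}"] = np.zeros(hidden_size)

h_prev = rng.normal(size=hidden_size)
x_t = rng.normal(size=n_features)
h_new = gru_step(x_t, h_prev, params)
print(h_new.shape)  # (4,)
```

Because the update gate can stay close to 1 for many steps, an early indicator stored in the state can survive until it becomes relevant, which is the long-term retention described above.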

These models have been widely applied to analyzing ICU monitoring data, predicting sepsis hours in advance, and modeling multi-visit patient trajectories.

LSTMs and GRUs are also highly adaptable to multi-modal sequences, incorporating vitals, labs, notes, and medication histories into unified prediction systems.

Their structure reduces vanishing gradient issues, enabling deeper and more robust clinical models. They continue to serve as strong baselines for time-series modeling in healthcare applications.

3. RNNs in Clinical Text Analysis and Document Interpretation

While transformers dominate modern NLP, RNNs still offer value in scenarios where data is limited or domain-specific, or where lightweight deployment is required.

Bidirectional LSTMs enhance context understanding by processing text in both forward and backward directions, allowing the model to interpret medical terminology more effectively.
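
The bidirectional idea can be sketched as two recurrent passes over the same tokens, one left to right and one right to left, with their states concatenated per position. For brevity this sketch uses simple tanh cells in place of LSTM cells, and the token embeddings are random stand-ins.

```python
import numpy as np

def run_rnn(x_seq, W_xh, W_hh):
    """Simple tanh RNN (stand-in for an LSTM) returning all hidden states."""
    h = np.zeros(W_hh.shape[0])
    out = []
    for x_t in x_seq:
        h = np.tanh(W_xh @ x_t + W_hh @ h)
        out.append(h)
    return np.stack(out)

def bidirectional(x_seq, fwd, bwd):
    """Concatenate forward-pass and backward-pass states at each position."""
    h_fwd = run_rnn(x_seq, *fwd)
    # Read the sequence in reverse, then flip back so positions line up.
    h_bwd = run_rnn(x_seq[::-1], *bwd)[::-1]
    return np.concatenate([h_fwd, h_bwd], axis=1)

rng = np.random.default_rng(2)
n_tokens, embed_dim, hidden_size = 6, 8, 5   # illustrative sizes
tokens = rng.normal(size=(n_tokens, embed_dim))  # stand-in token embeddings
fwd = (rng.normal(scale=0.5, size=(hidden_size, embed_dim)),
       rng.normal(scale=0.5, size=(hidden_size, hidden_size)))
bwd = (rng.normal(scale=0.5, size=(hidden_size, embed_dim)),
       rng.normal(scale=0.5, size=(hidden_size, hidden_size)))

out = bidirectional(tokens, fwd, bwd)
print(out.shape)  # (6, 10): forward and backward states side by side
```

Each token's representation now reflects both the words before it and the words after it, which helps when a modifier follows the term it qualifies.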

These models can extract symptoms, diagnoses, medications, and other entities from clinical notes accurately, especially when the available data does not support training a large transformer.

RNNs also serve as interpretable components in hybrid architectures used for clinical coding, document classification, and narrative summarization.

Their lower computational cost makes them suitable for resource-constrained hospitals and edge healthcare devices where efficiency is essential.

4. Introduction to Transformers and Self-Attention in Healthcare

Transformers revolutionized sequence modeling by introducing self-attention, which allows the model to identify important relationships across an entire sequence without relying on incremental memory updates.
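
A minimal sketch of single-head scaled dot-product self-attention shows how every position is compared with every other position in one matrix operation. The projection matrices and input below are random placeholders, and real transformers add multiple heads, residual connections, and normalization that are omitted here.

```python
import numpy as np

def softmax(scores, axis=-1):
    scores = scores - scores.max(axis=axis, keepdims=True)  # numerical stability
    e = np.exp(scores)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(X, W_q, W_k, W_v):
    """Single-head scaled dot-product self-attention."""
    Q, K, V = X @ W_q, X @ W_k, X @ W_v
    # Pairwise relevance of every position to every other position.
    scores = Q @ K.T / np.sqrt(K.shape[-1])
    weights = softmax(scores, axis=-1)     # each row sums to 1
    return weights @ V, weights

rng = np.random.default_rng(3)
seq_len, d_model, d_head = 6, 8, 4          # illustrative sizes
X = rng.normal(size=(seq_len, d_model))     # stand-in token or time-step embeddings
W_q = rng.normal(scale=0.3, size=(d_model, d_head))
W_k = rng.normal(scale=0.3, size=(d_model, d_head))
W_v = rng.normal(scale=0.3, size=(d_model, d_head))

context, weights = self_attention(X, W_q, W_k, W_v)
print(context.shape, weights.shape)  # (6, 4) (6, 6)
```

Row `i` of `weights` says how strongly position `i` draws on each other position, so the first and last elements of a long sequence can interact directly without passing through intermediate states.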

This architecture enhances performance in long documents like discharge summaries, detailed patient histories, and multi-page radiology reports.

Transformers can capture subtle contextual details such as negations (“no signs of infection”) or temporal hints (“recent worsening of symptoms”), leading to more reliable text interpretation.

Their parallel processing capabilities enable faster training and deployment across large clinical datasets.

Transformers have become central to state-of-the-art NLP applications in healthcare, powering medical chatbots, documentation automation, and clinical information extraction pipelines.

5. Clinical Transformers for Text: BERT, BioBERT, ClinicalBERT, BioGPT

Domain-specific transformer models trained on medical literature and clinical notes provide superior understanding of healthcare terminology.

BioBERT and ClinicalBERT, for example, significantly outperform generic language models in identifying medical conditions, mapping relationships between symptoms and diagnoses, and interpreting unstructured EHR data.

These models support tasks such as named entity recognition, summarization, medication extraction, and clinical question answering. BioGPT extends capabilities by generating medically coherent text, supporting research automation and clinical document drafting.

These transformers reduce clinician workload and enhance data accessibility, enabling scalable solutions in clinical research and hospital settings.

6. Transformers for Time-Series: Capturing Long-Range Temporal Patterns

Time-series transformers apply self-attention mechanisms to physiological signals, wearable sensor data, and ICU vitals, enabling more accurate predictions compared to traditional RNNs.

Transformers capture both short-term fluctuations and long-term clinical trends, supporting complex forecasting tasks like cardiac event prediction, respiratory failure detection, and deterioration monitoring.

Rather than stepping through a signal one reading at a time, they attend to all time steps in parallel, which lets the model identify critical time points that influence outcomes.

This can aid interpretability through attention heatmaps, which highlight the segments of patient data the model weighted most heavily. Their scalability makes transformers well suited to large-scale, continuous monitoring platforms and digital health ecosystems.
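
Because self-attention looks at all time steps at once, it has no built-in sense of order, so time-series transformers typically add a positional signal to each reading. The sketch below uses the fixed sinusoidal encoding from the original transformer design as one possible choice. Learned or timestamp-based encodings are also common, and the vitals array is a random stand-in.

```python
import numpy as np

def sinusoidal_positions(n_steps, d_model):
    """Fixed sinusoidal position encodings, shape (n_steps, d_model)."""
    if d_model % 2 != 0:
        raise ValueError("d_model must be even for this sketch")
    positions = np.arange(n_steps)[:, None]
    dims = np.arange(0, d_model, 2)[None, :]
    angles = positions / np.power(10000.0, dims / d_model)
    enc = np.zeros((n_steps, d_model))
    enc[:, 0::2] = np.sin(angles)   # even feature indices
    enc[:, 1::2] = np.cos(angles)   # odd feature indices
    return enc

rng = np.random.default_rng(4)
n_steps, d_model = 12, 8                          # illustrative sizes
vitals_embedded = rng.normal(size=(n_steps, d_model))  # stand-in embedded vitals

enc = sinusoidal_positions(n_steps, d_model)
model_input = vitals_embedded + enc               # order is now encoded in the input
print(model_input.shape)  # (12, 8)
```

With this addition, two identical readings taken at different times produce different inputs, which lets attention distinguish an early spike from a late one.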

7. Unified Multi-Modal Models Combining Text and Time-Series Data

Modern healthcare AI systems increasingly integrate multiple data types—clinical text, lab results, vitals, imaging metadata, and signal patterns.

RNNs and transformers can be combined in hybrid architectures where text is processed by transformers while time-series data is modeled using LSTMs or transformer-based encoders.
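
One simple way to combine the two branches is late fusion: each encoder produces a fixed-size vector, the vectors are concatenated, and a small prediction head scores the result. In the sketch below the encoder outputs are random placeholders and `fuse_and_score` is a hypothetical helper, so it shows only the fusion step, not a trained model.

```python
import numpy as np

def fuse_and_score(text_vec, series_vec, w, b):
    """Concatenate two modality embeddings and apply a logistic head."""
    fused = np.concatenate([text_vec, series_vec])
    logit = fused @ w + b
    return 1.0 / (1.0 + np.exp(-logit))   # risk score between 0 and 1

rng = np.random.default_rng(5)
text_vec = rng.normal(size=8)     # placeholder for a transformer note embedding
series_vec = rng.normal(size=4)   # placeholder for an LSTM final hidden state
w = rng.normal(scale=0.1, size=12)  # head weights (untrained, illustrative)
b = 0.0

risk = fuse_and_score(text_vec, series_vec, w, b)
print(0.0 < risk < 1.0)  # True
```

Late fusion keeps the encoders independent, so either branch can be swapped or retrained on its own. Architectures that let the modalities attend to each other earlier are an alternative when cross-modal interactions matter.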

Such multi-modal systems provide deep insights into patient health by correlating narrative notes with physiological changes.

These models enhance clinical decision support by predicting risks more accurately and offering context-aware recommendations.

They are also used in AI-driven triage tools, personalized treatment planning systems, and holistic patient monitoring platforms.

8. Practical Challenges, Data Quality Issues, and Deployment Considerations

Using RNNs and transformers in healthcare requires high-quality data, as errors or missing values can undermine model reliability. Transformers especially require large datasets, making them sensitive to data scarcity and imbalanced patient populations.
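
A common preprocessing step for missing values in clinical time series is to forward-fill the last known reading and pass the model a mask marking which values were actually observed. The sketch below is one such approach under stated assumptions: the helper name, the `default` used for leading gaps, and the readings are all hypothetical.

```python
import numpy as np

def forward_fill_with_mask(series, default=0.0):
    """Forward-fill NaNs per column; return filled data and an observed mask."""
    series = np.asarray(series, dtype=float)
    observed = ~np.isnan(series)
    filled = series.copy()
    # Gaps at the very start have nothing to carry forward, so use a default.
    filled[0, np.isnan(filled[0])] = default
    for t in range(1, len(filled)):
        missing = np.isnan(filled[t])
        filled[t, missing] = filled[t - 1, missing]
    return filled, observed.astype(float)

# Hypothetical readings: columns are heart rate and SpO2, NaN means not recorded.
readings = [[80.0, 97.0],
            [np.nan, 96.0],
            [np.nan, np.nan],
            [85.0, np.nan]]

filled, mask = forward_fill_with_mask(readings)
model_input = np.concatenate([filled, mask], axis=1)
print(model_input.shape)  # (4, 4): filled values plus observed flags
```

Keeping the mask alongside the filled values lets the model distinguish a measured value from a carried-forward one, and in clinical data the fact that a test was not ordered can itself be informative.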

Model interpretability is a major concern, and healthcare-grade explainability tools are required to ensure clinician trust.

Computational cost must also be managed, as transformer models may demand powerful hardware, and any infrastructure that processes patient data needs strict privacy safeguards.

Ethical considerations including bias, fairness, and transparency are essential when deploying models that influence clinical decisions.

Despite these challenges, ongoing advances in efficient transformers and explainable deep learning are lowering many deployment barriers.