A/B testing is one of the most widely used experimentation techniques in modern marketing, allowing businesses to compare two versions of a marketing element—such as an email subject line, website layout, ad creative, pricing model, or CTA button—to determine which version performs better.

It is a controlled and data-driven method where one version (A: the control) is tested against a modified version (B: the treatment) to identify which delivers higher engagement, conversions, or revenue outcomes.

A/B testing helps marketers eliminate guesswork by revealing what truly influences customer behavior and what improvements create measurable business impact.

This method is particularly powerful in digital marketing because platforms like Google Ads, Meta Ads, CRM systems, website optimization tools, and email automation platforms support A/B testing natively.

It enables marketers to optimize campaigns, refine messaging, personalize experiences, and continuously improve performance through incremental improvements.

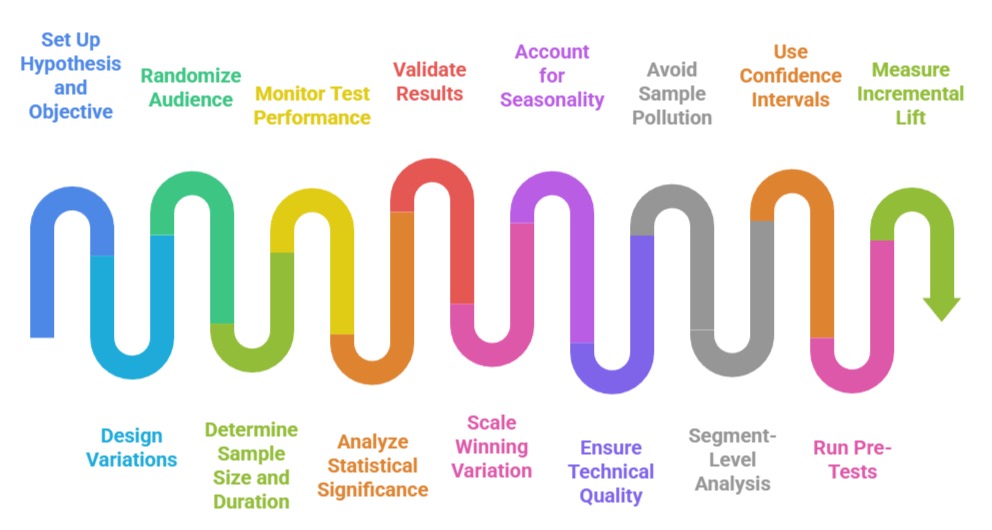

Conducting an effective A/B test requires following a structured process—defining a clear hypothesis, selecting the right audience, calculating sample size, ensuring proper randomization, and measuring the right KPIs.

Analyzing A/B test results involves evaluating statistical significance, confidence intervals, conversion lift, and practical business impact.

Proper analysis ensures marketers do not rely on noisy or premature results, reducing the risk of scaling ineffective strategies.

By combining rigorous methodology with timely execution, A/B testing empowers organizations to make high-impact decisions, enhance customer satisfaction, and maximize ROI in a competitive and fast-changing marketing landscape.

Practical A/B Testing for Marketing Optimization

1. Setting Up a Clear Hypothesis and Test Objective

Every A/B test must begin with a specific hypothesis that articulates what change is being tested and what outcome is expected.

For Example, “Changing the CTA color from blue to orange will increase click-through rate by 10%.”

A defined hypothesis ensures the experiment has a clear direction and measurable goal.

It prevents marketers from running random tests without understanding the impact they’re trying to create.

It also guides the selection of primary metrics, target audience, and variation design.

A well-formulated hypothesis improves test quality, reduces confusion during analysis, and ensures results contribute to meaningful strategic insights.

2. Designing the Variations and Ensuring Proper Implementation

Variation design involves creating two versions—A (control) and B (treatment)—that differ only in the specific element being tested.

Keeping changes minimal ensures the test isolates the impact of the variable.

Implementation accuracy is crucial because incorrect placement of elements, tracking errors, or inconsistent rendering across devices can invalidate results.

Marketers often run a QA step to verify that both versions display correctly across browsers, mobile devices, and user segments before the test goes live.

Proper implementation reduces the risk of technical bias and strengthens the validity of the insights derived.

3. Randomizing the Audience and Maintaining Split Integrity

Audiences should be randomly divided so that each user has an equal chance of seeing either variation.

Randomization reduces systematic bias and ensures the two groups behave similarly except for the tested variable.

Maintaining split integrity means ensuring users do not see both versions, which could distort behavior.

Platform-based A/B testing tools automatically handle this, but manual setups require careful engineering.

Randomization ensures that differences in outcomes reflect true causal effects and not differences in audience characteristics, making statistical analysis more accurate and trustworthy.

4. Determining Sample Size and Establishing Experiment Duration

A/B tests require sufficient sample size to detect real differences between variations.

Too-small sample sizes may produce results that seem significant but are due to random chance.

Statistical calculators help marketers estimate the minimum number of users needed based on baseline conversion rate, expected lift, and confidence level.

Experiment duration also affects result validity—running tests too short may capture temporary spikes, whereas too long might expose users to losing variations unnecessarily.

Establishing proper duration helps balance reliability with efficiency, ensuring decisions are made at the right time.

5. Monitoring Test Performance and Avoiding Early Stopping Bias

Throughout the experiment, marketers monitor tracking accuracy, data consistency, and potential anomalies such as unexpected traffic surges or platform glitches.

However, they must avoid stopping tests prematurely based on early trends, as initial results often fluctuate.

Early stopping introduces statistical bias and increases the likelihood of false positives.

Monitoring the test without acting impulsively ensures that variations receive enough exposure to produce stable, reliable results.

Maintaining discipline during the test is crucial for ensuring the final outcome reflects genuine customer behavior rather than random fluctuations.

6. Analyzing Statistical Significance and Interpreting Results

After the test concludes, marketers evaluate whether one variation outperformed the other with statistical significance.

Key measures include p-values, confidence intervals, conversion lift, and absolute improvement.

Analysts also check for consistency across segments, such as device type or acquisition channel, to ensure the result isn’t skewed.

Interpretation should consider both statistical and practical significance—sometimes a variation may show improvement but not large enough to justify implementation costs.

Proper analysis ensures results are actionable and aligned with business objectives, rather than just mathematically interesting.

7. Validating Results with Secondary and Guardrail Metrics

Beyond the primary metric (like CTR or conversion rate), secondary metrics and guardrail metrics help evaluate broader performance.

For example, a new landing page layout may increase conversions but also increase bounce rate or reduce average order value.

Guardrail metrics ensure no unintended negative impacts occur while optimizing for the primary goal.

This holistic approach safeguards long-term customer experience and revenue health.

Validating results across multiple metrics ensures marketers implement changes that truly benefit overall business performance.

8. Scaling the Winning Variation and Documenting Learnings

Once a variation is declared the winner, marketers roll it out to the full audience or integrate it into broader marketing strategies.

It is also important to document the test setup, results, interpretation, and learnings so future experiments can build on the knowledge gained.

Documentation helps organizations avoid redundant tests, refine testing strategies, and strengthen their culture of experimentation.

Scaling insights ensures that successful variations generate maximum business impact and contribute to continuous, data-driven optimization across campaigns and channels.

9. Accounting for Seasonality and External Influences During Testing

Marketing performance is strongly affected by seasonality, events, holidays, competition, and market conditions.

If an A/B test runs during a festive sale, payday cycle, or sudden spike in demand, the results may not reflect normal user behavior.

Marketers must ensure tests run during stable, representative periods or adjust for anomalies using historical baselines.

Ignoring seasonality can lead to implementing strategies that only perform well under temporary conditions.

Careful planning ensures the test results reflect intrinsic behavioral differences rather than external fluctuations. This improves the reliability of conclusions and protects the business from misleading insights.

10. Ensuring Technical Quality Through Pre-Test QA and Debugging

Before launching an A/B test, thorough quality assurance is essential to confirm all elements load correctly across platforms, devices, browsers, and screen sizes.

Technical issues such as tracking code failures, slow-loading variations, or misaligned layouts can bias users and invalidate results.

QA steps include checking analytics triggers, validating event firing, reviewing heatmaps, and simulating user journeys.

This step is particularly important for high-traffic websites and apps where even minor technical errors can influence thousands of users. Ensuring technical stability makes the experiment fair and strengthens trust in the resulting insights.

11. Avoiding Sample Pollution and Cross-Exposure Between Variations

Sample pollution occurs when users see both A and B variations, undermining the validity of the test.

This is common when users access multiple devices, clear cookies, or encounter caching errors. To prevent cross-exposure, marketers use server-side testing, user-login-based randomization, or persistent identifiers.

Pollution can also occur when email tests are forwarded to others or when ad platforms optimize delivery unevenly.

Ensuring that each user is exposed to only one version helps maintain test purity and makes the results statistically defensible.

12. Segment-Level Analysis for Deeper Customer Insights

While aggregate results provide a general conclusion, segment-level analysis reveals how different customer groups respond.

For instance, variation B may perform better overall, but variation A might perform better among older users or high-intent returning customers.

Analyzing segments such as device type, demographics, geography, traffic source, and customer lifetime value helps uncover hidden patterns.

These insights are invaluable for personalized marketing strategies and can lead to more targeted, effective implementations.

Segment-level analysis transforms a simple A/B test into a rich source of actionable intelligence.

13. Using Confidence Intervals and Bayesian Approaches for Better Insights

Traditional A/B testing relies on frequentist methods such as p-values, but modern marketing teams increasingly adopt Bayesian methods.

Bayesian A/B testing provides intuitive probability-based insights such as “Variation B has a 90% probability of being better than A.

Confidence intervals offer a range of possible outcomes rather than a single-point estimate, giving a clearer picture of uncertainty.

These methods allow marketers to make decisions faster, especially in dynamic environments like paid advertising.

Adopting advanced statistical frameworks improves decision quality and reduces the risk of false positives.

14. Running Pre-Tests and Holdout Experiments for Validation

Before a full-scale A/B test, marketers sometimes run a pre-test (pilot test) on a small audience to validate tracking, sample size calculations, or variation quality.

Additionally, running a holdout experiment—where a small portion of the audience receives the original experience after the test—helps validate whether the improvement sustains over time.

Holdout groups are widely used in email marketing and app personalization to measure the real incremental uplift.

These validation mechanisms ensure that test results are not only significant but also reliable and scalable for future campaigns.

15. Measuring Incremental Lift and True Business Impact

A/B testing should not only identify statistical winners but also determine the actual business impact in terms of incremental revenue, customer lifetime value, or reduced acquisition cost.

Incremental lift analysis measures the difference in outcomes directly caused by the variation. For example, a test may increase CTR but fail to improve actual purchases.

Evaluating full-funnel results—awareness, engagement, conversion, retention—provides a more complete picture of performance.

Incremental lift ensures that marketing decisions are grounded in real economic value, not just surface-level metrics.

Class Sessions

Sales Campaign

We have a sales campaign on our promoted courses and products. You can purchase 1 products at a discounted price up to 15% discount.