Experimental design in marketing refers to a structured approach used to test how different marketing changes—such as ad creatives, landing pages, pricing strategies, email subject lines, user journeys, or discount offers—affect customer behavior.

It helps marketers move away from intuition-based decisions and rely on empirical evidence that clearly shows what works and what doesn’t.

By applying principles of statistics, sampling, and hypothesis testing, experimental design allows businesses to isolate causal relationships, meaning they can identify which specific marketing change leads to a measurable improvement in outcomes such as sales, clicks, engagement, or retention.

A well-designed marketing experiment produces results that are unbiased, reliable, and applicable beyond the test sample.

It aims to control confounding variables, randomly assign users to treatment groups, and ensure that all groups being tested are comparable.

This scientific method of testing is now widely adopted across digital marketing platforms like Google Ads, Meta Ads, e-commerce sites, apps, and email automation tools.

Whether a company wants to optimize its website conversion rate, identify the most effective promotional strategy, or test new product features, experimental design provides the framework for making data-driven business decisions.

It reduces risk by validating ideas before full-scale implementation and helps marketers continuously improve results through iterative testing.

Ultimately, it empowers organizations to maximize ROI, allocate budgets effectively, and strengthen customer experiences based on real behavioral evidence.

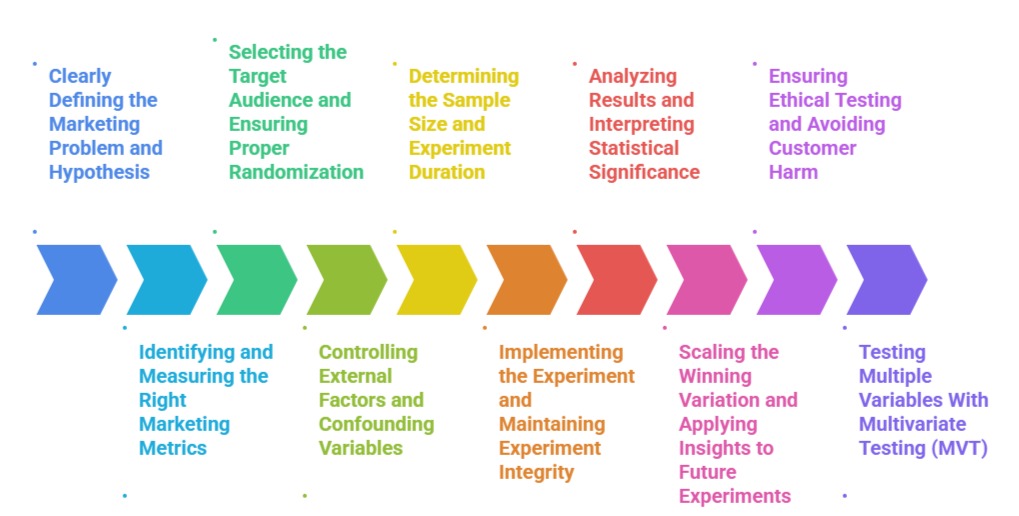

Designing and Running Marketing Experiments

1. Clearly Defining the Marketing Problem and Hypothesis

The first step in experimental design is articulating a clear marketing question—such as evaluating whether a new ad creative increases click-through rate compared to the existing one.

A well-crafted hypothesis specifies what change is expected and why, helping set a measurable direction for the experiment.

This ensures the experiment is not based on vague assumptions but grounded in specific business goals like boosting conversions, improving engagement, or reducing churn.

A precise hypothesis also helps determine which metrics will be tracked and how results will be evaluated later. In marketing, common hypotheses might involve testing color changes on CTAs, promotional variations, or personalization strategies.

By defining the problem early, marketers avoid running unfocused experiments.

2. Identifying and Measuring the Right Marketing Metrics

Choosing the correct performance metric ensures the experiment evaluates the outcome that matters most to the marketing objective.

For example, an email A/B test may focus on open rate or click-through rate depending on the intended behavior.

Marketing experiments often track primary metrics (e.g., conversions), secondary metrics (e.g., time on site), and guardrail metrics (e.g., bounce rate) to ensure that a winning variation does not negatively impact other aspects of performance.

Selecting metrics aligned with the business goal helps avoid misleading interpretations and ensures the experiment’s success is judged accurately.

Using only vanity metrics can lead marketers to make decisions that appear positive but do not drive ROI.
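The distinction between primary and guardrail metrics can be made concrete with a small sketch. The `VariantStats` class, the function names, and the 2-percentage-point tolerance on bounce rate are all illustrative assumptions, not values from any particular platform.

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    """Aggregated counts for one variation (hypothetical structure)."""
    visitors: int
    conversions: int
    bounces: int


def conversion_rate(stats: VariantStats) -> float:
    # Primary metric: share of visitors who converted.
    return stats.conversions / stats.visitors


def bounce_rate(stats: VariantStats) -> float:
    # Guardrail metric: share of visitors who left immediately.
    return stats.bounces / stats.visitors


def passes_guardrail(control: VariantStats, treatment: VariantStats,
                     max_bounce_increase: float = 0.02) -> bool:
    # Assumed rule: the treatment may not raise bounce rate by more
    # than max_bounce_increase (absolute) over the control.
    return bounce_rate(treatment) - bounce_rate(control) <= max_bounce_increase


if __name__ == "__main__":
    control = VariantStats(visitors=10_000, conversions=500, bounces=4_000)
    treatment = VariantStats(visitors=10_000, conversions=560, bounces=4_100)
    print("primary (control, treatment):",
          conversion_rate(control), conversion_rate(treatment))
    print("guardrail ok:", passes_guardrail(control, treatment))
```

Keeping the guardrail check separate from the primary metric makes it harder to declare a winner on conversions alone while another aspect of performance quietly degrades.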

3. Selecting the Target Audience and Ensuring Proper Randomization

A valid marketing experiment requires selecting the appropriate segment of customers and randomly assigning them into control and treatment groups.

Randomization reduces bias and ensures that differences in results are due to the tested variable rather than unrelated factors.

This process is essential across marketing platforms—email tools randomly split lists, ad platforms split audience segments, and websites use randomized visitor assignment.

Proper randomization makes the groups comparable on average before the experiment starts, strengthening the causal validity of results.

A poorly randomized experiment may lead to false conclusions that misrepresent customer behavior.
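One common way to implement randomized assignment in a custom script is to hash a stable user identifier together with an experiment name. The sketch below assumes each user has a string ID; the function name `assign_variant` and the experiment label are hypothetical. Hashing keeps the assignment effectively random across users while guaranteeing that the same user always sees the same variation.

```python
import hashlib


def assign_variant(user_id: str, experiment: str,
                   variants=("control", "treatment")) -> str:
    """Deterministically map a user to a variant using a salted hash."""
    # Salting with the experiment name keeps assignments independent
    # across different experiments run on the same users.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


if __name__ == "__main__":
    counts = {"control": 0, "treatment": 0}
    for i in range(10_000):
        counts[assign_variant(f"user-{i}", "cta_color_test")] += 1
    # The two counts should be close to an even split.
    print(counts)
```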

4. Controlling External Factors and Confounding Variables

In marketing experiments, external influences—such as seasonality, competitor promotions, holidays, or traffic spikes—can distort results if not controlled.

A sound experimental design tries to reduce the effect of such confounders through consistent timing, uniform delivery, and equivalent exposure for all groups.

Additionally, in a simple A/B test, changing only one variable at a time helps isolate its exact influence.

Marketers must also account for platform algorithms (e.g., ad delivery optimization) that can unintentionally bias results.

Controlling these factors makes results more trustworthy and easier to generalize to real-world decisions.

5. Determining the Sample Size and Experiment Duration

Sample size directly affects the statistical reliability of a marketing experiment.

Too small a sample leads to inconclusive or misleading results, while too large a sample wastes resources and risks exposing customers to suboptimal experiences longer than necessary.

Statistical power calculations are often used to determine the appropriate sample size based on the baseline rate, the smallest effect worth detecting, the significance level, and the desired power.

Marketers must also determine the ideal experiment duration—long enough to gather sufficient data but short enough to remain actionable. Seasonality and fluctuations in daily traffic must be considered to avoid premature conclusions.
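As a rough illustration of a power calculation, the sketch below uses the standard normal-approximation formula for comparing two conversion rates with a two-sided test. The function names, the default 5% significance level and 80% power, and the rule of rounding the duration up to whole weeks are assumptions chosen for illustration.

```python
import math
from statistics import NormalDist


def sample_size_per_group(baseline_rate: float, mde_abs: float,
                          alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed per group to detect an absolute
    change of mde_abs in a conversion rate (two-sided test)."""
    p1 = baseline_rate
    p2 = baseline_rate + mde_abs
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


def duration_in_days(n_per_group: int, daily_visitors: int,
                     n_groups: int = 2) -> int:
    """Days needed to reach the sample size, rounded up to whole weeks
    so each weekday is represented equally (an assumed convention)."""
    days = math.ceil(n_per_group * n_groups / daily_visitors)
    return math.ceil(days / 7) * 7


if __name__ == "__main__":
    n = sample_size_per_group(baseline_rate=0.05, mde_abs=0.01)
    print("visitors per group:", n)
    print("days at 2,000 visitors/day:", duration_in_days(n, 2_000))
```

Halving the detectable effect roughly quadruples the required sample, which is why the smallest effect worth detecting should be agreed on before the test starts.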

6. Implementing the Experiment and Maintaining Experiment Integrity

Once the design is finalized, marketers deploy the experiment through platforms like Meta Ads A/B testing tools, website testing tools, marketing automation platforms, or custom scripts. (Google Optimize, formerly a common choice, was discontinued by Google in September 2023.)

Maintaining integrity means ensuring that users are not accidentally exposed to multiple variations and that all variations receive fair exposure.

Monitoring implementation is crucial because technical errors—such as incorrect tracking code placement or inconsistent ad delivery—can compromise the experiment.

Ensuring consistent experience across devices, browsers, and customer segments strengthens data quality and improves the reliability of findings.
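One way to check that variations received fair exposure is a sample ratio mismatch (SRM) check: a chi-square goodness-of-fit test comparing the observed group sizes with the intended split. This is a technique added here for illustration, not one the steps above prescribe. The sketch covers the two-group case only, and the function name and the 0.001 alert threshold are assumptions.

```python
import math


def srm_p_value(n_control: int, n_treatment: int,
                expected_control_share: float = 0.5) -> float:
    """P-value of a chi-square test (1 degree of freedom) that the
    observed split matches the intended split."""
    total = n_control + n_treatment
    expected_control = total * expected_control_share
    expected_treatment = total * (1 - expected_control_share)
    chi2 = ((n_control - expected_control) ** 2 / expected_control
            + (n_treatment - expected_treatment) ** 2 / expected_treatment)
    # For 1 degree of freedom, the chi-square survival function
    # reduces to erfc(sqrt(chi2 / 2)).
    return math.erfc(math.sqrt(chi2 / 2))


if __name__ == "__main__":
    p = srm_p_value(5_000, 5_400)
    # Assumed convention: a very small p-value suggests a delivery or
    # tracking problem worth investigating before trusting the results.
    print("possible mismatch" if p < 0.001 else "split looks fine", p)
```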

7. Analyzing Results and Interpreting Statistical Significance

After collecting data, marketers analyze results using statistical techniques such as confidence intervals, p-values, and lift calculations.

The goal is to determine whether the observed performance differences are statistically significant or could plausibly be due to random chance.

Interpretation must go beyond simply identifying a winner; marketers need to understand why the change worked and how it impacts broader customer behavior.

This analysis also involves checking for unintended side effects, validating data quality, and ensuring guardrail metrics remain healthy.

A thoughtful analysis ensures the experiment leads to actionable insights rather than premature decisions.
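The three quantities mentioned above (lift, p-value, and confidence interval) can be computed for a conversion-rate test with a two-proportion z-test. The sketch below is one standard approach under a normal approximation, not the only valid analysis; the function name and the example counts are made up for illustration, and it assumes both groups have at least some conversions.

```python
import math
from statistics import NormalDist


def analyze_ab(conv_a: int, n_a: int, conv_b: int, n_b: int,
               alpha: float = 0.05) -> dict:
    """Compare conversion rates of control (a) and treatment (b)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    diff = p_b - p_a

    # Pooled standard error for the test of "no difference".
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se_pooled = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = diff / se_pooled
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))

    # Unpooled standard error for the confidence interval of the difference.
    se_diff = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)

    return {
        "relative_lift": diff / p_a,
        "p_value": p_value,
        "ci": (diff - z_crit * se_diff, diff + z_crit * se_diff),
    }


if __name__ == "__main__":
    result = analyze_ab(conv_a=500, n_a=10_000, conv_b=600, n_b=10_000)
    print(result)
```

A confidence interval that excludes zero tells the same story as a small p-value, but it also shows the range of plausible effect sizes, which matters when deciding whether the change is worth scaling.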

8. Scaling the Winning Variation and Applying Insights to Future Experiments

Once a variation proves successful, the insights are used to scale it across the entire audience or integrate it into long-term strategy.

Marketers also document findings, test conditions, and learnings to support future campaigns and continuous optimization.

Organizations benefit significantly when experimentation becomes part of a systematic test-and-learn culture.

Winning ideas can be adapted to other channels, and failed ideas provide valuable lessons for future hypotheses.

Scaling insights ensures the organization maximizes ROI from each experiment and builds cumulative knowledge over time.

9. Ensuring Ethical Testing and Avoiding Customer Harm

Ethics is a crucial part of marketing experimentation, especially when tests directly influence customer choices, pricing, or communication.

Ethical testing ensures that experiments do not mislead customers, expose them to harmful content, or manipulate them unfairly.

Marketers must avoid using dark patterns, deceptive messages, or discriminatory segmentation.

Transparency in experimentation, especially in areas like personalized pricing or behavioral nudges, helps maintain customer trust.

Ethical testing also ensures compliance with laws such as GDPR, CCPA, and platform privacy rules.

Following ethical guidelines not only protects customers but also strengthens the credibility of experiment results.

10. Testing Multiple Variables with Multivariate Testing (MVT)

Multivariate testing allows marketers to test several elements at once—such as headline, image, color, CTA, and layout—to see which combination performs best.

Unlike a standard A/B test, which typically compares versions that differ in a single element, MVT evaluates interactions between elements, offering deeper insights into user behavior.

This method is useful for large websites, landing pages, and apps where design variations can meaningfully affect conversion rates.

However, MVT requires large traffic volumes and more complex statistical analysis.

When executed correctly, it helps marketers optimize entire user experiences instead of just isolated components.
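The traffic requirement follows from simple counting: in a full-factorial design, every combination of element options becomes its own test cell. The sketch below enumerates the cells for a hypothetical page; the element names, options, and per-cell visitor count are illustrative assumptions.

```python
from itertools import product


def full_factorial(elements: dict) -> list:
    """Return every combination of element options as a list of dicts."""
    names = list(elements)
    return [dict(zip(names, combo))
            for combo in product(*(elements[name] for name in names))]


def total_traffic_needed(elements: dict, visitors_per_cell: int) -> int:
    # Each combination needs its own adequately sized sample.
    return len(full_factorial(elements)) * visitors_per_cell


if __name__ == "__main__":
    page = {
        "headline": ["Save time", "Save money"],
        "image": ["product", "lifestyle", "none"],
        "cta": ["Buy now", "Start free trial"],
    }
    cells = full_factorial(page)
    print(len(cells), "combinations; first:", cells[0])
    print("visitors needed:", total_traffic_needed(page, 8_000))
```

Adding one more option to any element multiplies the number of cells, so the required traffic grows much faster than the number of ideas being tested.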

11. Sequential Testing and Bandit Algorithms for Faster Decisions

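Sequential testing allows marketers to analyze results at planned interim points rather than waiting for a fixed sample size, so experiments can stop earlier when the evidence is strong. The stopping thresholds must be adjusted for these repeated looks, because repeatedly checking an unadjusted p-value inflates the false-positive rate.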

Bandit algorithms, used in modern ad platforms and optimization tools, dynamically shift traffic toward better-performing variations in real time.

This reduces the opportunity cost of sending users to losing variations and accelerates learning.

These approaches are particularly useful for fast-moving marketing environments such as e-commerce or paid advertising.

They optimize results automatically while still maintaining statistical validity when properly calibrated.
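To show how a bandit shifts traffic, the sketch below simulates Thompson sampling, one widely used bandit algorithm, with a Beta posterior per variation. It is a toy simulation under assumed "true" conversion rates and is not a description of how any specific ad platform allocates traffic; all names are hypothetical.

```python
import random


def thompson_sampling(true_rates, n_visitors, seed=0):
    """Simulate Thompson sampling and return visitors sent to each arm."""
    rng = random.Random(seed)
    k = len(true_rates)
    successes = [0] * k
    failures = [0] * k

    for _ in range(n_visitors):
        # Draw a plausible conversion rate for each arm from its
        # Beta(successes + 1, failures + 1) posterior.
        draws = [rng.betavariate(successes[i] + 1, failures[i] + 1)
                 for i in range(k)]
        arm = max(range(k), key=lambda i: draws[i])

        # Simulate whether this visitor converts on the chosen arm.
        if rng.random() < true_rates[arm]:
            successes[arm] += 1
        else:
            failures[arm] += 1

    return [successes[i] + failures[i] for i in range(k)]


if __name__ == "__main__":
    # Assumed true rates, used only to drive the simulation.
    pulls = thompson_sampling([0.04, 0.06], n_visitors=20_000)
    print("visitors per variation:", pulls)
```

Because traffic is deliberately uneven, the weaker variation ends up with less data, so estimates of its performance are less precise than they would be in an evenly split test.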

12. Considering Seasonality and Market Conditions in Experiment Planning

Marketing performance shifts based on seasonality, competitive activity, economic trends, and consumer sentiment.

For example, user responsiveness may differ during festivals, holidays, or sale periods.

Ignoring such variations can lead to misleading results and inappropriate generalizations.

A well-designed experiment considers the timing, traffic fluctuations, and market trends before deployment.

It may also require normalizing results or comparing performance against historical baselines.

Incorporating these adjustments ensures that decisions reflect real customer behavior rather than temporary market noise.
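A simple way to compare against a historical baseline is to check how far the control group's rate during the test deviates from its usual level. The sketch below is a minimal illustration; the function names and the 20% tolerance are assumptions, and a real analysis would usually look at more than a single rate.

```python
def baseline_deviation(control_rate: float, historical_rate: float) -> float:
    """Relative deviation of the control group's rate during the test
    from the historical rate for a comparable period."""
    return (control_rate - historical_rate) / historical_rate


def is_atypical_period(control_rate: float, historical_rate: float,
                       tolerance: float = 0.20) -> bool:
    # Assumed rule of thumb: flag the test window if the control group
    # itself is more than `tolerance` away from its usual level.
    return abs(baseline_deviation(control_rate, historical_rate)) > tolerance


if __name__ == "__main__":
    # Hypothetical figures: control converts at 8% during a sale week
    # against a historical 5%.
    print(baseline_deviation(0.08, 0.05))
    print("atypical period:", is_atypical_period(0.08, 0.05))
```

If the control group is far from its usual level, the measured lift may still be valid for that period, but it should be generalized to ordinary weeks with caution.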

Key Elements of Experimental Design for Marketing
