In modern Business Intelligence (BI) and data analytics, handling large datasets and real-time data streams has become essential for gaining timely insights and maintaining a competitive advantage.

Large datasets originate from sources such as transaction logs, web analytics, sensors, and social media, while real-time streams deliver continuous data flows that require immediate processing. Properly managing both types demands specialized tools, architectures, and techniques to ensure scalability, accuracy, and low latency.

Working with Large Datasets

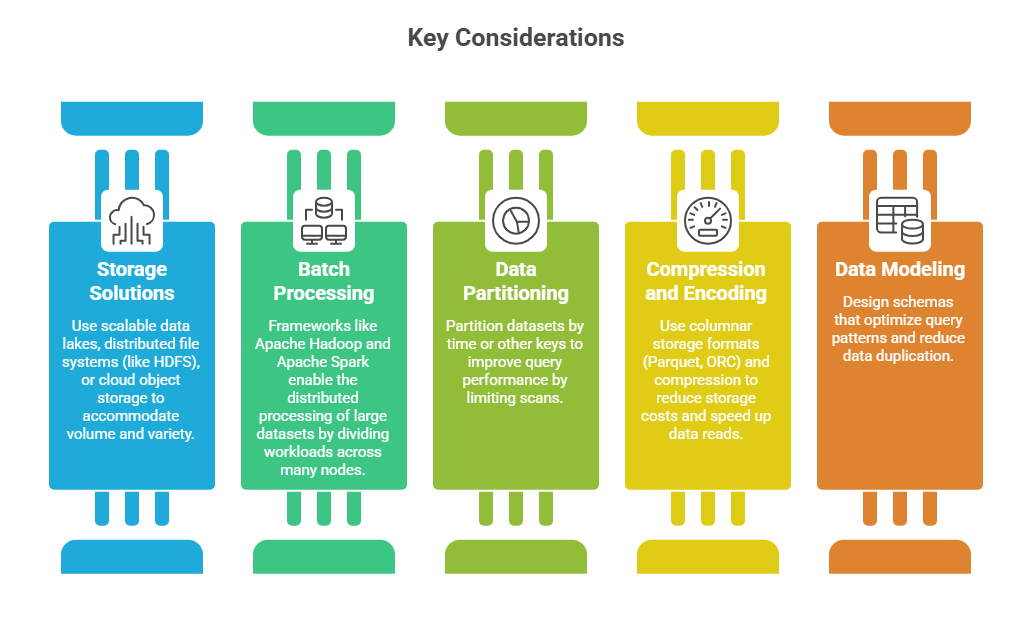

Large datasets involve vast amounts of structured and unstructured data that require robust infrastructure and optimized processing techniques.
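One common processing technique for data that does not fit in memory is to read it in fixed-size chunks and keep only running totals. The sketch below is illustrative only: the `region` and `amount` columns, the helper names, and the tiny in-memory sample are assumptions, not part of any specific BI tool.

```python
import csv
import io
from collections import defaultdict
from itertools import islice


def iter_chunks(rows, chunk_size):
    """Yield lists of at most chunk_size rows from any iterable."""
    rows = iter(rows)
    while True:
        chunk = list(islice(rows, chunk_size))
        if not chunk:
            return
        yield chunk


def revenue_by_region(csv_file, chunk_size=2):
    """Sum the hypothetical 'amount' column per 'region', one chunk at a time."""
    totals = defaultdict(float)
    reader = csv.DictReader(csv_file)
    for chunk in iter_chunks(reader, chunk_size):
        # Only the current chunk and the running totals are held in memory.
        for row in chunk:
            totals[row["region"]] += float(row["amount"])
    return dict(totals)


# Tiny in-memory stand-in for a large transaction log (made-up values).
sample = io.StringIO("region,amount\nnorth,10.0\nsouth,5.5\nnorth,2.5\n")
totals = revenue_by_region(sample)
print(totals)
```

In practice the same pattern is usually delegated to a distributed engine or a columnar store; the point here is only that the aggregation never needs the full dataset at once.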

Real-Time Data Streams

Real-time or streaming data is continuous, high-velocity data generated by various sources, requiring immediate processing and analysis.

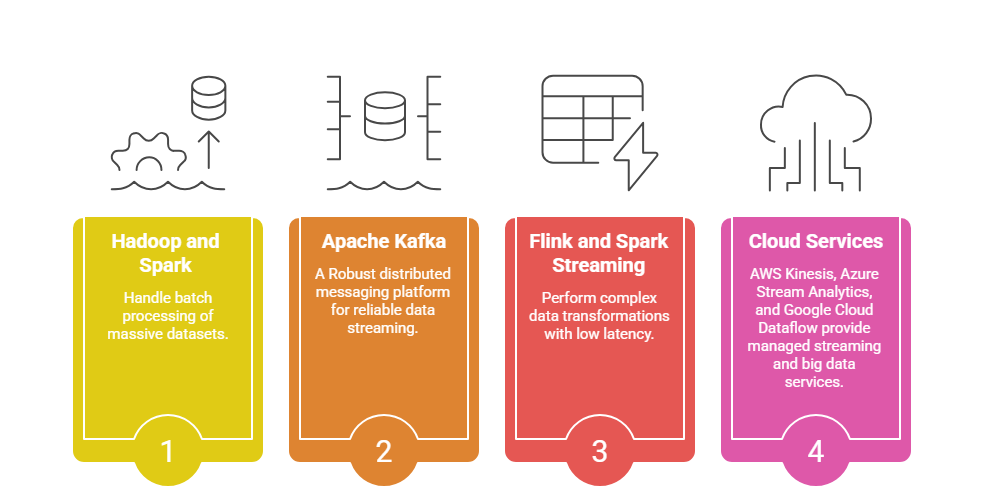

1. Stream Processing Engines: Apache Kafka ingests and buffers event streams, while engines such as Apache Flink and Spark Streaming process them with low latency.

2. Event-Driven Architectures: Systems that react to data events as they arrive, supporting real-time analytics and alerting.

3. Windowing and State Management: Techniques to aggregate streaming data over time intervals or sessions for meaningful analysis.

4. Fault Tolerance: Ensures data processing resilience through checkpointing and message replay mechanisms.
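To make the windowing and state management idea concrete, here is a minimal sketch of a tumbling (fixed, non-overlapping) window count in plain Python. The event tuples, the 60-second window, and the function name are assumptions made for illustration; engines such as Flink or Spark Streaming provide their own windowing APIs and also deal with late or out-of-order events, which this sketch ignores.

```python
from collections import defaultdict


def tumbling_window_counts(events, window_seconds):
    """Count events per (window_start, key) for fixed, non-overlapping windows.

    `events` is an iterable of (timestamp_in_seconds, key) pairs.
    The `counts` dictionary plays the role of the operator's state.
    """
    counts = defaultdict(int)
    for timestamp, key in events:
        # Align each event to the start of the window that contains it.
        window_start = (timestamp // window_seconds) * window_seconds
        counts[(window_start, key)] += 1
    return dict(counts)


# Hypothetical event stream: (seconds since start, event type).
events = [(0, "click"), (12, "click"), (59, "view"), (61, "click"), (119, "view")]
counts = tumbling_window_counts(events, window_seconds=60)
print(counts)
```

A fault-tolerant engine would periodically checkpoint state like `counts` together with the input position, so that after a failure it can restore the state and replay only the events that arrived since the checkpoint.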

Integration of Large Datasets and Streaming

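Many BI solutions need both historical and real-time analytics. Two common designs address this: the Lambda architecture, which combines batch and stream processing, and the Kappa architecture, which relies on stream processing alone.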

Lambda Architecture: Uses batch processing for comprehensive historical analysis and stream processing for real-time insights.

Kappa Architecture: Simplifies the design by using only stream processing, handling both historical and real-time data by replaying a durable event log.
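The difference between the two designs can be sketched with a toy event log. Everything here is hypothetical (the `LOG` contents, the `batch_up_to` cutoff, and the function names); it only illustrates that Lambda merges two separately computed views at query time, while Kappa derives one view by replaying the log through a single code path.

```python
from collections import Counter

# Hypothetical append-only event log: (offset, product) pairs.
LOG = [(0, "a"), (1, "b"), (2, "a"), (3, "c"), (4, "a")]


def count_products(events):
    """Count how often each product appears in the given events."""
    return Counter(product for _, product in events)


def lambda_query(log, batch_up_to):
    """Lambda: merge a precomputed batch view with a speed-layer view."""
    # Batch layer: everything before the last batch run.
    batch_view = count_products(e for e in log if e[0] < batch_up_to)
    # Speed layer: events that arrived after the last batch run.
    speed_view = count_products(e for e in log if e[0] >= batch_up_to)
    return batch_view + speed_view


def kappa_query(log):
    """Kappa: rebuild the view by replaying the whole log through one job."""
    view = Counter()
    for _, product in log:
        view[product] += 1
    return view


lambda_result = lambda_query(LOG, batch_up_to=3)
kappa_result = kappa_query(LOG)
print(lambda_result == kappa_result)
```

Both paths arrive at the same counts; the trade-off is that Lambda maintains two codebases (batch and speed), whereas Kappa depends on the log being retained long enough to replay.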

Tools and Technologies

Best Practices

To maintain efficient and resilient data systems, consider the practices listed below. These guidelines help strengthen data integrity, scalability, and operational control.

1. Continuously monitor data quality and pipeline performance.

2. Optimize data ingestion rates and implement backpressure handling in streams.

3. Use schema evolution strategies to manage changing data formats.

4. Plan for data security, privacy, and compliance at scale.

5. Ensure scalability by leveraging cloud elasticity and distributed architectures.
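As an illustration of the backpressure point above, the following sketch uses a bounded in-process queue: when the consumer falls behind, the producer blocks instead of letting the buffer grow without limit. The queue size, event count, and function names are assumptions chosen for the example; real streaming platforms expose their own flow-control settings.

```python
import queue
import threading


def producer(q, n_events):
    """Emit n_events integers, then a None sentinel to signal completion."""
    for i in range(n_events):
        # put() blocks while the queue is full, which slows the producer
        # down to the consumer's pace (backpressure).
        q.put(i)
    q.put(None)


def consumer(q, results):
    """Process items until the sentinel arrives."""
    while True:
        item = q.get()
        if item is None:
            break
        results.append(item * 2)  # stand-in for real processing


# A small bound keeps at most two unprocessed events in flight.
buffer = queue.Queue(maxsize=2)
results = []
t_prod = threading.Thread(target=producer, args=(buffer, 10))
t_cons = threading.Thread(target=consumer, args=(buffer, results))
t_prod.start()
t_cons.start()
t_prod.join()
t_cons.join()
print(results)
```

Blocking is only one possible policy; depending on the use case, a pipeline might instead drop, sample, or spill excess events to durable storage.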
