Running a single Docker container on a local machine is straightforward.

But in the real world, modern applications are rarely a single container. They are composed of many containers running across multiple servers, and they need to scale dynamically, recover from failures automatically, and communicate with each other reliably.

Managing all of this manually is not just difficult; it is practically impossible at any meaningful scale. This is precisely why container orchestration exists.

Orchestration is the automated management of containerized applications across a cluster of machines, handling deployment, scaling, networking, load balancing, and recovery without requiring constant human intervention.

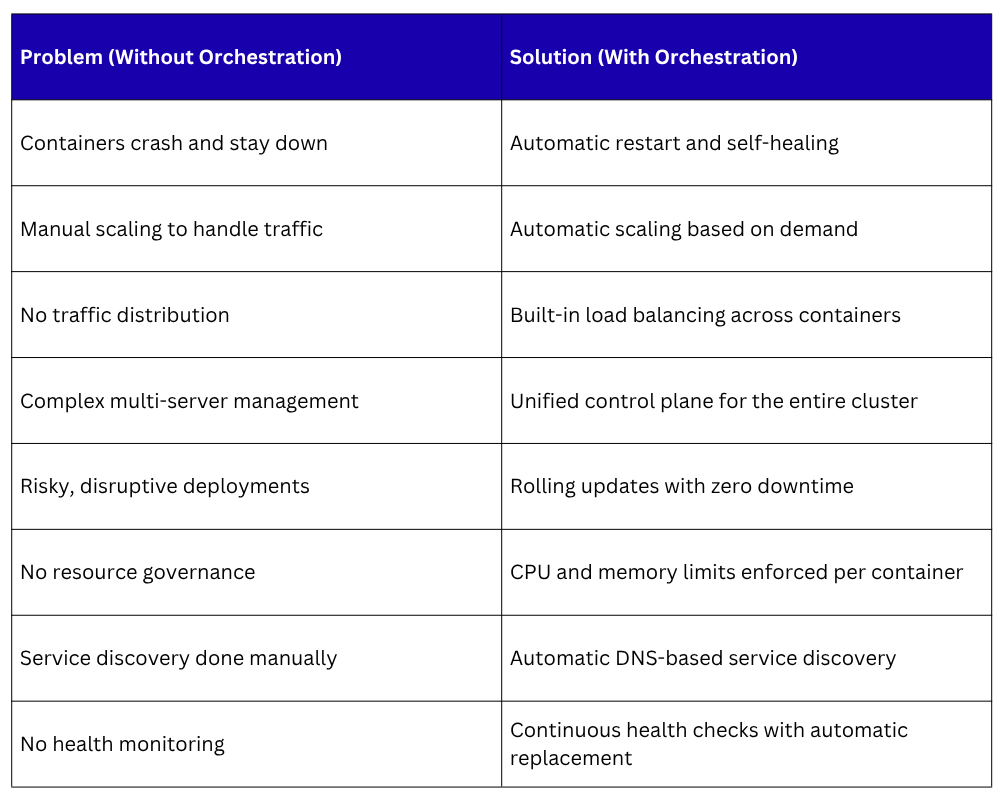

The Limitations of Running Containers Manually

Running containers with docker run works well for a single application on a single machine. The moment an application grows beyond that (more services, more traffic, more servers), manual container management begins to fail in predictable and serious ways.

1. No Automatic Recovery

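If a container crashes, it stays down until someone restarts it, unless a restart policy was set when it was started (for example, docker run --restart). Even then, a restart policy only works on the same machine: if the server itself fails, nothing brings the container back elsewhere. In a production environment, this means downtime: users experience errors until an engineer intervenes.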

2. Manual Scaling

When traffic increases and an application needs more capacity, someone must manually start additional containers, configure load balancing to include them, and then manually remove them when traffic drops. This is slow, imprecise, and does not respond to real-time demand.

3. No Load Balancing

Docker does not automatically distribute incoming traffic across multiple containers running the same application. Without a separate load balancer configured manually, all traffic hits a single container — creating a bottleneck and a single point of failure.

4. Multi-Host Complexity

Running containers across multiple servers introduces enormous complexity. Which container runs on which server? How do containers on different servers communicate? How is the cluster's overall resource usage balanced? These questions have no simple manual answers.

5. Deployment Coordination

Updating an application that runs as many containers across many servers requires careful coordination — old containers must be replaced with new ones without causing downtime. Doing this manually is slow and error-prone.

6. No Resource Management

Without orchestration, there is no automatic management of how CPU and memory are allocated across containers and servers. One container might consume all the resources on a server, starving others — or servers might sit mostly idle while others are overloaded.

What Orchestration Solves

Container orchestration platforms address every limitation of manual container management — automatically, reliably, and at scale.

Key Capabilities Provided by Orchestration

1. Automated Scheduling

When a new container needs to run, the orchestration platform automatically decides which server in the cluster has the available resources to run it, based on CPU, memory, and other constraints. Engineers simply declare what should run; the platform decides where.
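The placement decision can be pictured with a toy scheduler. This is only a sketch under simple assumptions: the Node class, the schedule function, and the "most free CPU wins" rule are illustrative choices, not how any particular platform ranks servers.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    name: str
    free_cpu: float   # CPU cores not yet reserved
    free_mem_mb: int  # memory not yet reserved, in MiB


def schedule(nodes: list[Node], cpu: float, mem_mb: int) -> Optional[Node]:
    """Reserve resources on the fitting node with the most free CPU."""
    # Filter: keep only nodes that can satisfy the whole request.
    candidates = [n for n in nodes if n.free_cpu >= cpu and n.free_mem_mb >= mem_mb]
    if not candidates:
        return None  # nothing fits; the container would have to wait
    # Score: an illustrative rule that prefers the least loaded node.
    chosen = max(candidates, key=lambda n: n.free_cpu)
    chosen.free_cpu -= cpu
    chosen.free_mem_mb -= mem_mb
    return chosen


nodes = [Node("node-a", 1.0, 2048), Node("node-b", 4.0, 8192)]
placed = schedule(nodes, cpu=2.0, mem_mb=1024)
print(placed.name if placed else "pending")  # node-b
```

The caller only states what the container needs; which node is chosen falls out of the filter and score steps.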

2. Self-Healing

One of the most valuable capabilities of orchestration is self-healing. The platform continuously monitors the health of every container.

If a container crashes, it is automatically restarted. If an entire server fails, the containers that were running on it are automatically rescheduled on healthy servers — with no manual intervention required.
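Self-healing is often described as a reconciliation loop: compare the declared state with what is actually running, then close the gap. The sketch below shows one pass of such a loop. The reconcile function, the container names, and the naming scheme for replacements are all hypothetical.

```python
def reconcile(desired: int, running: list[str], healthy: set[str],
              next_id: int) -> tuple[list[str], int]:
    """Drop unhealthy containers, then start replacements up to `desired`."""
    kept = [c for c in running if c in healthy]
    while len(kept) < desired:
        kept.append(f"web-{next_id}")  # stand-in for starting a new container
        next_id += 1
    return kept, next_id


running = ["web-0", "web-1", "web-2"]
healthy = {"web-0", "web-2"}  # web-1 has crashed
running, next_id = reconcile(3, running, healthy, next_id=3)
print(running)  # ['web-0', 'web-2', 'web-3']
```

A real platform runs this kind of comparison continuously, which is why a crashed container or a failed server is replaced without anyone issuing a command.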

3. Horizontal Scaling

Orchestration platforms can automatically scale the number of running container instances up or down based on metrics like CPU usage, memory consumption, or incoming request volume.

When traffic spikes, more instances are started. When traffic drops, excess instances are removed — optimizing resource usage and cost.
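As a rough illustration, the scaling decision can be expressed as a proportional rule: scale the instance count by the ratio of the observed metric to its target. Kubernetes documents a rule of this shape for its Horizontal Pod Autoscaler. The function name, the clamping bounds, and the sample numbers below are assumptions made for the sketch.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Proportional scaling: replicas grow with the metric-to-target ratio."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp so a metric spike or lull cannot scale without bound or to zero.
    return max(min_replicas, min(max_replicas, raw))


# 4 instances at 90% average CPU, with a 60% target
print(desired_replicas(4, 90, 60))  # 6
# the same 4 instances at 30% average CPU
print(desired_replicas(4, 30, 60))  # 2
```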

4. Rolling Deployments and Rollbacks

When deploying a new version of an application, orchestration platforms replace old containers with new ones gradually and in a controlled sequence, ensuring that some instances of the application are always running and serving users throughout the update.

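If the new version has problems, the platform can roll back to the previous version. Depending on the platform and its configuration, the rollback is either triggered by an operator with a single command or happens automatically when the update fails its health checks.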
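The gradual replacement can be sketched as a loop that swaps instances in small batches, so that the rest of the fleet keeps serving traffic at every step. The rolling_update function and the batch_size parameter are illustrative names. Real platforms also wait for each new instance to pass health checks before continuing, which this sketch omits.

```python
from typing import Iterator


def rolling_update(instances: list[str], new_version: str,
                   batch_size: int = 1) -> Iterator[list[str]]:
    """Yield the state of the fleet after each batch has been replaced."""
    current = list(instances)
    for start in range(0, len(current), batch_size):
        end = min(start + batch_size, len(current))
        for i in range(start, end):
            current[i] = new_version  # stand-in for stop old, start new
        yield list(current)


steps = list(rolling_update(["v1", "v1", "v1", "v1"], "v2", batch_size=2))
for state in steps:
    print(state)
# ['v2', 'v2', 'v1', 'v1']
# ['v2', 'v2', 'v2', 'v2']
```

Rolling back is the same procedure run in the other direction, with the previous version as the target.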

5. Service Discovery and Load Balancing

In a containerized environment, containers start and stop frequently and their IP addresses change constantly.

Orchestration platforms provide built-in service discovery: containers find each other by name rather than IP address. Built-in load balancing distributes traffic evenly across all healthy instances of a service automatically.
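A minimal sketch of both ideas together: a registry maps a stable service name to whatever addresses currently back it, and each lookup hands out the next address in round-robin order. The ServiceRegistry class, the service name, and the addresses are hypothetical. Real platforms typically implement this through DNS and network-level proxies rather than an in-process object.

```python
class ServiceRegistry:
    """Maps a service name to its current instances, served round-robin."""

    def __init__(self) -> None:
        self._endpoints: dict[str, list[str]] = {}
        self._next: dict[str, int] = {}

    def register(self, service: str, addresses: list[str]) -> None:
        # Called whenever the set of healthy instances changes.
        self._endpoints[service] = list(addresses)
        self._next[service] = 0

    def resolve(self, service: str) -> str:
        addresses = self._endpoints[service]
        index = self._next[service] % len(addresses)
        self._next[service] = index + 1
        return addresses[index]


registry = ServiceRegistry()
registry.register("payments", ["10.0.0.4:8080", "10.0.1.7:8080"])
picks = [registry.resolve("payments") for _ in range(3)]
print(picks)  # ['10.0.0.4:8080', '10.0.1.7:8080', '10.0.0.4:8080']
```

Callers only ever use the name "payments"; when instances move and their addresses change, only the registry entry is updated.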

6. Configuration and Secret Management

Orchestration platforms provide secure ways to inject configuration values and sensitive secrets — such as database passwords and API keys — into containers at runtime, without hardcoding them into images or exposing them in logs.
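From the application's side, runtime injection usually means reading the value from the environment instead of from the image. The sketch below assumes the platform exposes the secret as an environment variable. The variable name DB_PASSWORD and the read_secret helper are hypothetical, and many platforms can also deliver secrets as mounted files.

```python
import os
from typing import Mapping


def read_secret(name: str, env: Mapping[str, str] = os.environ) -> str:
    """Return a required secret from the environment, failing loudly if absent."""
    value = env.get(name)
    if not value:
        # Report only the variable name; never log the secret value itself.
        raise RuntimeError(f"required secret {name} is not set")
    return value


# In a container this would read os.environ; a dict stands in for it here.
password = read_secret("DB_PASSWORD", env={"DB_PASSWORD": "example-only"})
print(len(password))  # 12
```

Because the value arrives at runtime, the same image can run in development, staging, and production with different credentials.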

7. Storage Management

Orchestration platforms manage persistent storage for containers, automatically attaching the right storage volumes to the right containers, even when containers move between servers.

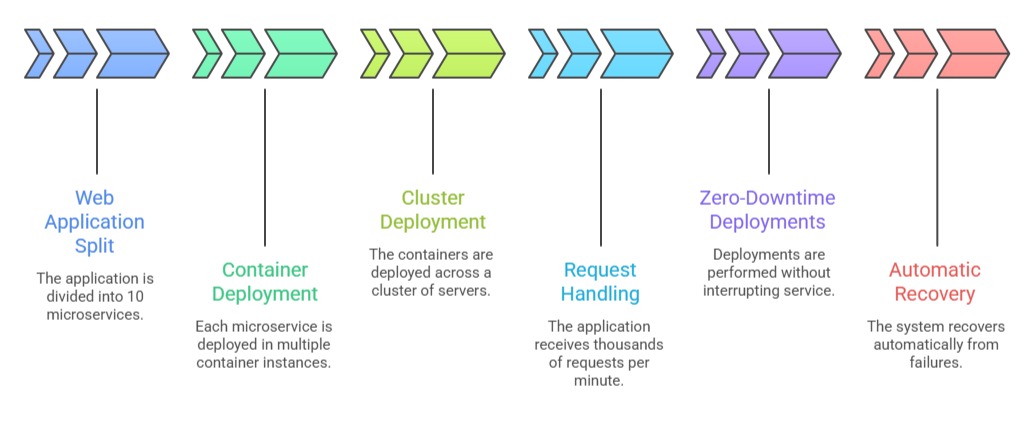

The Scale at Which Orchestration Becomes Essential

To make the need for orchestration concrete, consider what a real-world production application might involve:

Managing this manually (deciding where each container runs, monitoring their health, restarting failures, coordinating deployments, and balancing traffic) would require a dedicated team working around the clock.

With orchestration, all of it is handled automatically by the platform.

The Rise of Microservices and Why It Amplifies the Need

The shift toward microservices architecture, where applications are broken into many small, independently deployable services, has made orchestration not just useful but fundamental.

In a microservices application:

1. Each service is developed, deployed, and scaled independently.

2. Services communicate with each other over a network, requiring reliable service discovery.

3. Different services have different scaling needs; a payment service might need more instances than a notification service.

4. Deployments happen frequently and must not affect other services.

5. Failures in one service must not cascade to bring down others.

All of these requirements demand the automated, dynamic, and intelligent management that only an orchestration platform can provide.

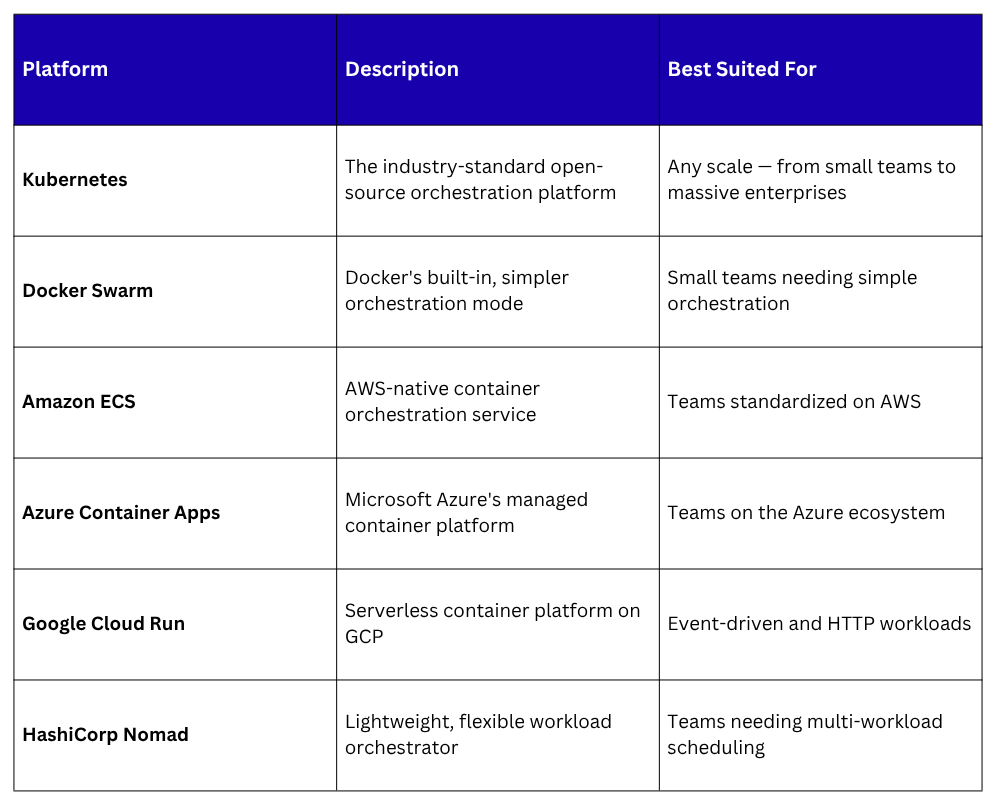

Container Orchestration Platforms

Several container orchestration platforms exist, each designed to address the challenges described above:

Kubernetes has emerged as the clear industry standard, supported by every major cloud provider, adopted by organizations of all sizes, and backed by one of the largest open-source communities in the world.

It is the orchestration platform that the remainder of this module focuses on.

Orchestration and DevOps

Orchestration is not just an operational tool; it is a natural extension of DevOps principles:

1. Automation: Orchestration automates deployment, scaling, and recovery, eliminating manual operational toil.

2. Reliability: Self-healing and rolling deployments ensure applications remain available through failures and updates.

3. Continuous Delivery: CI/CD pipelines deploy directly to orchestration platforms, making frequent and safe releases practical.

4. Infrastructure as Code: Orchestration configurations are defined in code files, version-controlled, and reviewed like any other code change.

5. Observability: Orchestration platforms expose health, resource, and performance data that feeds into monitoring and alerting systems.
