Deep Learning

Deep Learning is a subset of machine learning that focuses on algorithms inspired by the structure and function of the human brain, called artificial neural networks. Unlike traditional machine learning methods that rely heavily on manual feature extraction, deep learning models automatically learn hierarchical representations from raw data through multiple layers of neurons. Each layer transforms the input data into increasingly abstract features, enabling the model to capture complex patterns and relationships that are difficult to identify manually. Deep learning is particularly effective for high-dimensional data such as images, audio, and text, where conventional algorithms struggle to perform well.

At its core, deep learning involves stacking multiple layers of interconnected neurons, including input layers, hidden layers, and output layers. Each neuron computes a weighted sum of its inputs plus a bias and passes the result through a non-linear activation function, allowing the network to approximate non-linear mappings between inputs and outputs. Training a deep learning model involves feeding it large amounts of data, computing predictions through a forward pass, measuring errors with a loss function, and adjusting the weights using backpropagation and an optimization algorithm. This iterative learning process enables deep networks to achieve remarkable performance on tasks like image recognition, speech processing, natural language understanding, and autonomous systems.
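Here is a minimal plain-Python sketch of a single artificial neuron that makes the computation above concrete, with no framework involved. The input values, weights, bias, and the choice of a sigmoid activation are illustrative assumptions and do not come from the text. In practice, a framework such as Keras or PyTorch performs this computation for whole layers at once.

```python
import math

def sigmoid(z):
    """Logistic activation: squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, bias):
    """One artificial neuron: weighted sum of the inputs plus a bias,
    followed by a non-linear activation."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(z)

# Illustrative values (assumed): z = 0.2 - 0.3 - 0.4 + 0.1 = -0.4
output = neuron(inputs=[0.5, -1.0, 2.0], weights=[0.4, 0.3, -0.2], bias=0.1)
print(round(output, 4))  # sigmoid(-0.4) is about 0.4013
```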

Deep learning has become a cornerstone of modern artificial intelligence because of its ability to learn features automatically, generalize from complex data, and scale efficiently with large datasets and computational resources. By leveraging powerful hardware like GPUs and frameworks such as TensorFlow and PyTorch, deep learning models can solve problems that were previously considered intractable, opening new possibilities in computer vision, natural language processing, robotics, and healthcare. Its capacity to model complex, non-linear relationships makes deep learning an essential approach in fields where data is abundant and patterns are intricate.

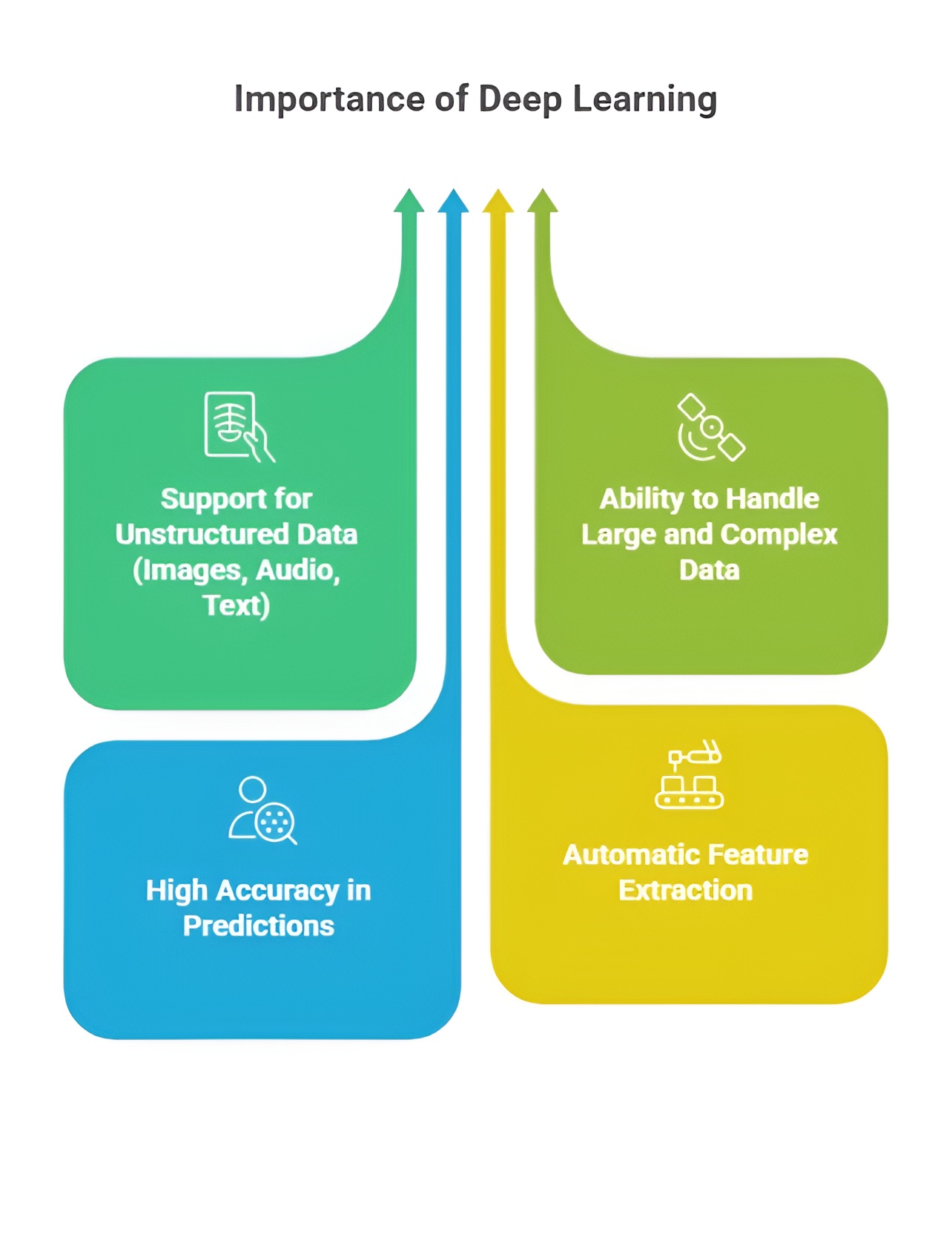

Importance of Deep Learning

Deep learning is a branch of machine learning that uses neural networks with multiple layers to automatically learn patterns and representations from large amounts of data. It enables computers to perform complex tasks such as image recognition, natural language processing, and speech understanding with high accuracy. Its ability to model intricate relationships in data makes it a cornerstone of modern artificial intelligence applications.

1. Automatic Feature Extraction

Deep learning greatly reduces the need for manual feature engineering, which is a key limitation of traditional machine learning methods. Through multiple layers of neurons, deep networks automatically learn hierarchical representations of data, capturing low-level features in early layers and complex patterns in deeper layers. For example, in image recognition, early layers tend to detect edges and textures, while deeper layers respond to shapes, objects, and even contextual relationships. This ability to extract meaningful features automatically improves model performance and reduces dependency on domain-specific knowledge.

2. Handling High-Dimensional Data

Traditional algorithms often struggle with high-dimensional datasets, such as images, videos, or genomic data, because of the curse of dimensionality. Deep learning models are designed to process and learn from large-scale, high-dimensional data efficiently. The multiple hidden layers in neural networks enable the extraction of informative patterns without manually reducing dimensionality. This capability allows applications like medical imaging, satellite imagery analysis, and autonomous vehicle perception to leverage raw data directly for accurate predictions.

3. Non-Linear Modeling Capability

One of the most important aspects of deep learning is its ability to model complex, non-linear relationships between inputs and outputs. Linear models can only represent linear relationships. Deep neural networks, by contrast, can approximate highly intricate functions because each layer combines a weighted sum with a non-linear activation function. Without these activations, any stack of layers would collapse into a single linear transformation. This capability makes deep learning well suited to tasks such as natural language understanding, speech recognition, and complex time-series forecasting, where relationships are rarely linear or obvious.
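The classic XOR example shows the role of the activation function, since no single linear model can represent XOR. The sketch below assumes a tiny two-neuron hidden layer with ReLU activations and hand-picked weights. These weights are chosen for illustration and are not learned. If you remove the activation, the same network collapses into one linear function that gets XOR wrong.

```python
def relu(z):
    return max(0.0, z)

def xor_net(x1, x2):
    # Hidden layer: two ReLU neurons with hand-picked (not learned) weights.
    h1 = relu(1.0 * x1 + 1.0 * x2 + 0.0)
    h2 = relu(1.0 * x1 + 1.0 * x2 - 1.0)
    # Output layer: a plain weighted sum of the hidden activations.
    return 1.0 * h1 - 2.0 * h2

def linear_net(x1, x2):
    # Same weights with the activation removed: the layers collapse into
    # one linear function, h1 - 2*h2 = -x1 - x2 + 2.
    h1 = x1 + x2
    h2 = x1 + x2 - 1.0
    return h1 - 2.0 * h2

table = {(a, b): xor_net(a, b) for a in (0, 1) for b in (0, 1)}
print(table)  # {(0, 0): 0.0, (0, 1): 1.0, (1, 0): 1.0, (1, 1): 0.0}
```

With ReLU, the four outputs match XOR. The linear version reduces to -x1 - x2 + 2, which returns 2 for input (0, 0). No purely linear function can reproduce XOR.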

4. Scalability with Large Datasets

Deep learning thrives on large amounts of data, which is increasingly available in the modern digital age. As data volumes grow, deep networks can scale their complexity and learn richer representations, often improving model accuracy and robustness. This scalability allows deep learning models to surpass traditional machine learning approaches in performance for tasks like image classification, object detection, and recommendation systems, where millions of examples can be used for training.

5. Real-Time Decision Making

Deep learning models can be deployed to make real-time predictions and decisions, which is crucial in applications like autonomous driving, robotics, and financial trading. Once trained, a model needs only a forward pass to produce an output, so it can process inputs rapidly and respond dynamically to changing environments. This speed comes from the fact that inference is a fixed sequence of matrix operations, which GPUs and other accelerators execute efficiently.

6. Robustness to Noisy Data

Deep neural networks can generalize well even in the presence of noisy, incomplete, or unstructured data, which often degrades the performance of traditional machine learning methods. Techniques like dropout, batch normalization, and weight regularization (such as L2 weight decay) improve the robustness of models and help them extract relevant features while ignoring irrelevant noise. This makes deep learning particularly effective in real-world scenarios, such as speech recognition in noisy environments or object detection under varying lighting conditions.
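Dropout is the simplest of the techniques above to show in code. The sketch below is a plain-Python version of the common "inverted dropout" formulation, and that choice of variant is an assumption. During training, each activation is zeroed with probability p and the survivors are scaled by 1/(1 - p). At inference time, inputs pass through unchanged. The seed and sizes are arbitrary.

```python
import random

def dropout(activations, p, training, rng):
    """Inverted dropout: during training, zero each activation with probability p
    and scale the survivors by 1/(1 - p) so the expected value is unchanged.
    At inference time the input passes through untouched."""
    if not training or p == 0.0:
        return list(activations)
    keep = 1.0 - p
    return [a / keep if rng.random() < keep else 0.0 for a in activations]

rng = random.Random(0)  # fixed seed so the example is reproducible
acts = [1.0] * 10_000
train_out = dropout(acts, p=0.5, training=True, rng=rng)
eval_out = dropout(acts, p=0.5, training=False, rng=rng)

# Roughly half the units are dropped, yet the mean activation stays close to 1.0.
print(train_out.count(0.0), sum(train_out) / len(train_out))
```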

7. Versatility Across Domains

Deep learning is highly versatile and has been successfully applied to a wide range of domains. In computer vision, it enables facial recognition, medical diagnosis, and autonomous vehicle navigation. In natural language processing, it supports machine translation, sentiment analysis, and chatbots. In audio processing, it powers speech recognition and music classification. Its adaptability across modalities is a major reason for its widespread adoption in AI applications.

8. Enabling Advanced AI Applications

Deep learning forms the backbone of state-of-the-art AI systems, including self-driving cars, virtual assistants, and robotics. By learning complex patterns from large datasets, deep networks enable machines to perform tasks that require perception, reasoning, and decision-making, which were previously considered achievable only by humans. This capability is pushing the boundaries of what artificial intelligence can accomplish, creating new possibilities across industries.

9. Integration with Modern Hardware and Frameworks

Deep learning models can efficiently leverage high-performance computing resources, such as GPUs and TPUs, for accelerated training and inference. Frameworks like TensorFlow, PyTorch, and Keras provide tools for constructing complex networks, automatic differentiation, and distributed training. This integration makes it feasible to train very deep networks on massive datasets, which was previously impractical, and allows organizations to deploy deep learning solutions at scale.

10. Continuous Improvement with Data

Deep learning systems can keep improving as more data becomes available. Traditional models often plateau with a fixed set of hand-crafted features. Deep networks, by contrast, can be retrained or fine-tuned on new data to refine their internal representations and capture emerging patterns. This helps models stay effective and adaptable in dynamic environments, such as real-time recommendation systems or adaptive autonomous systems.

Deep learning is therefore critical in modern AI because it automates feature learning, handles complex and high-dimensional data, scales with large datasets, enables real-time and robust decision-making, and supports advanced AI applications across domains. Its combination of hierarchical representation learning, non-linear modeling, and integration with modern computational resources makes it a transformative technology in artificial intelligence.

Deep Learning with Python

Deep Learning is a specialized branch of Machine Learning that focuses on building neural networks with multiple layers to model highly complex patterns and representations in data. Unlike traditional Machine Learning, which often relies on handcrafted features, deep learning automatically learns high-level abstractions from raw data, making it suitable for tasks such as image recognition, natural language processing, speech recognition, and autonomous systems. Python is the most widely used language for deep learning due to its simplicity, readability, and extensive ecosystem of libraries including TensorFlow, Keras, and PyTorch. These libraries provide flexible and powerful tools for constructing, training, testing, and deploying neural network models efficiently.

1. Introduction to Neural Networks

Neural networks are computational models inspired by the structure and function of the human brain. They consist of interconnected nodes called neurons arranged in layers. Each neuron receives inputs, applies a linear transformation combined with an activation function, and passes the output to the next layer. During training, the network adjusts connection weights to minimize prediction errors using algorithms like backpropagation and gradient descent. Neural networks are capable of approximating complex, non-linear functions and can be applied to a wide variety of tasks. Python simplifies neural network implementation through libraries that provide built-in functions for defining layers, forward propagation, backward propagation, weight initialization, and gradient optimization.
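The training procedure described above covers the forward pass, error measurement, backpropagation, and gradient descent. It can be sketched for the smallest possible network: one sigmoid neuron learning the logical AND function. The dataset, learning rate, epoch count, and use of binary cross-entropy loss are all assumptions chosen for illustration. With sigmoid plus binary cross-entropy, the gradient of the loss with respect to the neuron's pre-activation simplifies to (prediction - target), which keeps the backward pass to a few lines.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

# Toy dataset (assumed): logical AND
X = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (1.0, 1.0)]
Y = [0.0, 0.0, 0.0, 1.0]

w = [0.0, 0.0]
b = 0.0
lr = 1.0  # learning rate (assumed value)
losses = []

for _ in range(5000):
    grad_w = [0.0, 0.0]
    grad_b = 0.0
    total_loss = 0.0
    for (x1, x2), y in zip(X, Y):
        # Forward pass
        p = sigmoid(w[0] * x1 + w[1] * x2 + b)
        # Binary cross-entropy loss for this example
        total_loss += -(y * math.log(p) + (1 - y) * math.log(1 - p))
        # Backward pass: for sigmoid + BCE, dLoss/dz simplifies to (p - y)
        dz = p - y
        grad_w[0] += dz * x1
        grad_w[1] += dz * x2
        grad_b += dz
    n = len(X)
    losses.append(total_loss / n)
    # Gradient descent step: move each parameter against its averaged gradient
    w = [wi - lr * gi / n for wi, gi in zip(w, grad_w)]
    b -= lr * grad_b / n

predictions = [round(sigmoid(w[0] * x1 + w[1] * x2 + b)) for x1, x2 in X]
print(predictions)  # [0, 0, 0, 1]
```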

2. Types of Neural Networks

2.1 Feedforward Neural Networks (FNN)

Feedforward Neural Networks are the simplest type of neural network. In FNNs, information flows in a single direction from the input layer, through one or more hidden layers, to the output layer. There are no loops or cycles, meaning the network does not retain memory of past inputs. FNNs are primarily used for regression and classification problems, such as predicting house prices or categorizing emails. Deep feedforward networks with multiple hidden layers can learn complex non-linear relationships between input and output data. Python allows easy creation of FNNs using Keras Sequential models or PyTorch’s nn.Sequential, enabling straightforward definition of layer sequences and automatic gradient computation.
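A feedforward pass is just a chain of fully connected layers. The plain-Python sketch below assumes a hypothetical 3-4-2 network with made-up weights, ReLU in the hidden layer, and softmax on the output to turn scores into class probabilities. It mirrors what a Keras Sequential model or a PyTorch nn.Sequential of linear layers computes, with no training involved.

```python
import math

def dense(inputs, weights, biases, activation):
    """Fully connected layer: each output neuron = activation(weighted sum + bias).
    weights[j] holds the incoming weights of output neuron j."""
    return [activation(sum(x * w for x, w in zip(inputs, row)) + b)
            for row, b in zip(weights, biases)]

def relu(z):
    return max(0.0, z)

def identity(z):
    return z

def softmax(logits):
    # Subtract the max before exponentiating for numerical stability.
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def feedforward(x):
    # Hypothetical 3-input -> 4-hidden -> 2-class network with made-up weights.
    h = dense(x,
              [[0.2, -0.1, 0.4], [0.5, 0.3, -0.2], [-0.3, 0.8, 0.1], [0.1, 0.1, 0.1]],
              [0.0, 0.1, -0.1, 0.05], relu)
    logits = dense(h, [[0.3, -0.2, 0.5, 0.1], [-0.4, 0.6, 0.2, 0.3]], [0.0, 0.0], identity)
    return softmax(logits)

probs = feedforward([1.0, 2.0, 3.0])
print(probs)  # two class probabilities that sum to 1
```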

2.2 Convolutional Neural Networks (CNN)

Convolutional Neural Networks are designed specifically for spatial and image data. CNNs consist of convolutional layers that automatically extract spatial features like edges, textures, and shapes. Pooling layers reduce spatial dimensions to improve computational efficiency and help reduce overfitting. Well-known CNN architectures include LeNet, AlexNet, VGGNet, ResNet, and U-Net, used for tasks such as digit recognition, object classification, image segmentation, and medical imaging analysis. CNNs are extensively used in applications like image classification, object detection, facial recognition, and autonomous vehicle perception. Keras provides pre-defined layers such as Conv2D, MaxPooling2D, and Flatten, and PyTorch offers the equivalents nn.Conv2d, nn.MaxPool2d, and nn.Flatten. Both frameworks also include activation functions for constructing CNNs easily.
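The two core CNN operations can be written out directly. This sketch assumes a single-channel image, a stride of 1 with no padding, and a hand-written vertical-edge kernel, whereas a real CNN learns many kernels per layer. Like most deep learning libraries, the code computes cross-correlation, meaning the kernel is not flipped.

```python
def conv2d(image, kernel):
    """'Valid' 2D convolution (cross-correlation): slide the kernel over the
    image and take a weighted sum at every position where it fully fits."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [[sum(image[i + di][j + dj] * kernel[di][dj]
                 for di in range(kh) for dj in range(kw))
             for j in range(out_w)]
            for i in range(out_h)]

def max_pool2d(fmap, size=2):
    """Non-overlapping max pooling: keep the largest value in each size x size window."""
    return [[max(fmap[i + di][j + dj] for di in range(size) for dj in range(size))
             for j in range(0, len(fmap[0]) - size + 1, size)]
            for i in range(0, len(fmap) - size + 1, size)]

# 5x6 image: dark left half (0), bright right half (1), i.e. a vertical edge.
image = [[0, 0, 0, 1, 1, 1] for _ in range(5)]
# Hand-written vertical-edge kernel (illustrative; a CNN would learn its kernels).
kernel = [[-1, 1],
          [-1, 1]]

feature_map = conv2d(image, kernel)  # 4x5 map that is non-zero only at the edge
pooled = max_pool2d(feature_map)     # 2x2 summary that still records the edge
print(feature_map)
print(pooled)
```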

2.3 Recurrent Neural Networks (RNN)

Recurrent Neural Networks are designed for sequential data, where the current output depends on previous inputs. RNNs maintain hidden states that store information about past elements in a sequence, making them suitable for time series forecasting, speech recognition, language modeling, and stock price prediction. RNN variants include:

- simple RNNs for short sequences;
- LSTMs (Long Short-Term Memory), which use gates to capture long-term dependencies;
- GRUs (Gated Recurrent Units), a simplified form of the LSTM;
- Bidirectional RNNs, which process sequences in both directions;
- Sequence-to-Sequence models for translation and text generation.

In Python, Keras provides the SimpleRNN, LSTM, and GRU layers, and PyTorch provides nn.RNN, nn.LSTM, and nn.GRU. Both frameworks handle the forward and backward computations and offer utilities for working with sequences.
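The hidden-state mechanism can be sketched in a few lines. This assumes a simple (Elman-style) RNN with a scalar input, a scalar hidden state, and illustrative weights. Real layers such as Keras SimpleRNN or PyTorch nn.RNN use vectors and weight matrices, but the recurrence h_t = tanh(w_x * x_t + w_h * h_{t-1} + b) has the same form.

```python
import math

def rnn_forward(sequence, w_x, w_h, b, h0=0.0):
    """Scalar simple RNN: h_t = tanh(w_x * x_t + w_h * h_{t-1} + b).
    The same weights are reused at every time step; h carries memory forward."""
    h = h0
    states = []
    for x in sequence:
        h = math.tanh(w_x * x + w_h * h + b)
        states.append(h)
    return states

# A single "event" at t = 1, followed by zeros (illustrative input).
states = rnn_forward([1.0, 0.0, 0.0, 0.0], w_x=1.0, w_h=0.5, b=0.0)
no_memory = rnn_forward([1.0, 0.0, 0.0, 0.0], w_x=1.0, w_h=0.0, b=0.0)
print(states)     # the first input still influences every later state, fading each step
print(no_memory)  # with w_h = 0 the network forgets immediately
```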

2.4 Transformers

Transformers are advanced neural networks primarily used for natural language processing (NLP) and, more recently, for vision tasks. Unlike RNNs, transformers do not process a sequence one element at a time. Instead, they use self-attention mechanisms to capture relationships between all elements of a sequence simultaneously. Transformer models include BERT for contextual embeddings and sentence understanding, GPT for text generation and conversational AI, T5 for versatile text-to-text tasks, and Vision Transformers (ViT) for image classification. Transformers generally outperform traditional RNNs on long sequences because they can be parallelized, scale well, and capture global dependencies directly. Python libraries like Hugging Face Transformers, TensorFlow, and PyTorch provide pre-trained models and tokenizers for fine-tuning and deployment.
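Self-attention can be sketched in plain Python as single-head scaled dot-product attention, softmax(QK^T / sqrt(d_k))V. The token vectors and identity projection matrices below are assumptions made for readability. Real transformers learn the projections, use many heads, and add positional information.

```python
import math

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

def transpose(m):
    return [list(col) for col in zip(*m)]

def softmax(row):
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    s = sum(exps)
    return [e / s for e in exps]

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention:
    softmax(Q K^T / sqrt(d_k)) V, with Q = x W_q, K = x W_k, V = x W_v."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    scores = matmul(q, transpose(k))  # every token scored against every token
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights

# Three 2-dimensional token vectors and identity projections (assumed).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]
out, weights = self_attention(x, identity, identity, identity)
print(weights[0])  # attention of token 0 over all three tokens
```

Each token's output is a weighted average of all the value vectors, computed in a single step rather than by walking through the sequence. Here the first token [1, 0] gives equal weight to itself and to [1, 1], which share the same dot product with it, and less weight to [0, 1].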

2.5 Autoencoders

Autoencoders are neural networks used for unsupervised feature learning, dimensionality reduction, and data reconstruction. They consist of an encoder that compresses input data into a latent space and a decoder that reconstructs the original input. Variants include Denoising Autoencoders for removing noise, Sparse Autoencoders for learning efficient representations, and Variational Autoencoders (VAE) for generative modeling. Autoencoders are applied in image compression, anomaly detection, and generative tasks. Python implementation is simplified through Keras and PyTorch, which provide high-level APIs for defining encoder and decoder architectures and performing training and reconstruction.
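A deliberately tiny linear autoencoder illustrates the encoder/decoder idea and its use for anomaly detection. It compresses 2-D points to a 1-D code and back. The weights are hand-picked on the assumption that normal data lies on the line x1 = x2, and they stand in for weights a real autoencoder would learn. Inputs that do not fit that pattern reconstruct poorly.

```python
def encode(x):
    # Encoder: compress a 2-D point to a 1-D latent code (hand-picked weights 0.5, 0.5).
    return 0.5 * x[0] + 0.5 * x[1]

def decode(z):
    # Decoder: map the latent code back to 2-D (hand-picked weights 1.0, 1.0).
    return [1.0 * z, 1.0 * z]

def reconstruction_error(x):
    """Mean squared error between the input and its reconstruction."""
    x_hat = decode(encode(x))
    return sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)

normal = [2.0, 2.0]    # fits the pattern the "trained" autoencoder captures
anomaly = [2.0, -2.0]  # does not fit it
print(reconstruction_error(normal), reconstruction_error(anomaly))  # 0.0 4.0
```

A high reconstruction error is the usual signal for flagging an input as anomalous.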

3. Training and Testing Neural Networks

Training a neural network involves four steps:

1. Feed in input data.
2. Perform a forward pass to compute outputs.
3. Calculate a loss function to measure prediction error.
4. Perform a backward pass (backpropagation) to compute gradients, which an optimizer uses to update the weights.

Training runs over multiple epochs (full passes through the training data) until the model reaches satisfactory performance. Testing evaluates the model on unseen data to estimate how well it generalizes and to detect overfitting. Keras provides utilities such as model.fit() and model.evaluate(), while PyTorch users typically write custom training loops that give them full control over the training and testing workflow.
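The following plain-Python sketch shows a full train-then-test workflow. It assumes synthetic data from y = 2x + 1 with small Gaussian noise, an 80/20 train/test split, a linear model, and per-example gradient descent on squared error. It is a stand-in for what model.fit() and model.evaluate() automate, not the Keras implementation.

```python
import random

rng = random.Random(42)  # fixed seed so the run is reproducible

# Synthetic data (assumed): y = 2x + 1 plus small Gaussian noise, x in [0, 1)
data = [(x, 2.0 * x + 1.0 + rng.gauss(0.0, 0.05)) for x in (i / 50 for i in range(50))]
rng.shuffle(data)
train, test = data[:40], data[40:]  # hold out 20% as unseen test data

def mse(w, b, rows):
    """Mean squared error of the linear model y_hat = w * x + b on some rows."""
    return sum((w * x + b - y) ** 2 for x, y in rows) / len(rows)

w, b, lr = 0.0, 0.0, 0.1
for _ in range(500):  # each iteration is one epoch: a full pass over the training set
    for x, y in train:
        error = (w * x + b) - y  # forward pass and residual
        # Backward pass: gradients of error**2 with respect to w and b
        w -= lr * 2 * error * x
        b -= lr * 2 * error

train_loss = mse(w, b, train)
test_loss = mse(w, b, test)  # evaluated on examples never used for updates
print(f"w={w:.3f} b={b:.3f} train_mse={train_loss:.4f} test_mse={test_loss:.4f}")
```

A test error close to the training error suggests the model generalizes. A test error much larger than the training error is the typical symptom of overfitting.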

4. Optimizers and Loss Functions

Optimizers are algorithms that update neural network weights to minimize the loss function. Common optimizers include Stochastic Gradient Descent (SGD), Adam, RMSProp, and others. Loss functions measure the difference between predicted outputs and true labels, guiding the optimizer during training. Examples include Mean Squared Error (MSE) for regression, Binary Crossentropy for binary classification, and Categorical Crossentropy for multi-class classification. Python libraries provide built-in implementations for both optimizers and loss functions, allowing efficient and reliable training of deep learning models with minimal manual computation.
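The losses named above, together with two optimizer update rules, fit in a short plain-Python sketch. The Adam betas are the commonly used defaults (beta1 = 0.9, beta2 = 0.999). The learning rate, step counts, and the toy objective f(w) = (w - 3)^2 are assumptions for illustration. The first optimizer is plain gradient descent, which is what SGD reduces to when the gradient is computed exactly rather than estimated from a random mini-batch.

```python
import math

def mse(y_true, y_pred):
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def binary_crossentropy(y_true, p_pred, eps=1e-12):
    # Clip probabilities so that log(0) never occurs.
    return -sum(t * math.log(max(p, eps)) + (1 - t) * math.log(max(1 - p, eps))
                for t, p in zip(y_true, p_pred)) / len(y_true)

def categorical_crossentropy(one_hot, probs, eps=1e-12):
    # For one sample: only the probability assigned to the true class contributes.
    return -sum(t * math.log(max(p, eps)) for t, p in zip(one_hot, probs))

def sgd_minimize(grad, w, lr=0.1, steps=500):
    for _ in range(steps):
        w -= lr * grad(w)  # step against the gradient
    return w

def adam_minimize(grad, w, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8, steps=500):
    m = v = 0.0
    for t in range(1, steps + 1):
        g = grad(w)
        m = beta1 * m + (1 - beta1) * g      # running mean of gradients
        v = beta2 * v + (1 - beta2) * g * g  # running mean of squared gradients
        m_hat = m / (1 - beta1 ** t)         # bias correction for the zero start
        v_hat = v / (1 - beta2 ** t)
        w -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return w

grad_f = lambda w: 2 * (w - 3.0)  # gradient of the toy loss (w - 3)^2
print(mse([1.0, 2.0], [1.5, 2.0]))                              # 0.125
print(categorical_crossentropy([0, 1, 0], [0.2, 0.7, 0.1]))     # -log(0.7)
print(sgd_minimize(grad_f, 0.0), adam_minimize(grad_f, 0.0))    # both approach 3.0
```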

Deep Learning with Python provides a powerful and flexible framework for designing intelligent systems capable of understanding complex data types such as images, sequences, text, and structured data. Developers can explore architectures such as FNNs, CNNs, RNNs, Transformers, and Autoencoders, and master training workflows, optimizers, and loss functions. With these skills, they can build accurate and scalable AI solutions for real-world problems. Python’s extensive ecosystem, combined with deep learning’s ability to automatically extract high-level features, makes it essential for modern applications in healthcare, finance, autonomous systems, natural language processing, computer vision, and robotics.

Libraries Used in Deep Learning with Python

Deep Learning in Python relies on a variety of libraries and frameworks that simplify building, training, and deploying neural networks. These libraries provide optimized implementations for tensor operations, GPU acceleration, pre-defined layers, models, and utilities for data preprocessing, visualization, and evaluation. Each library has its unique strengths and applications, making Python the most popular language for deep learning.

1. TensorFlow

TensorFlow is an open-source library developed by Google for building and deploying deep learning models. It provides tools for creating computation graphs, automatic differentiation, and GPU/TPU acceleration, which allow for efficient training of large neural networks. TensorFlow includes Keras as a high-level API for defining layers, models, and training workflows with minimal code. It supports deployment across desktop and cloud environments, mobile and embedded devices (TensorFlow Lite), and production model servers (TensorFlow Serving). TensorFlow is widely used in image recognition, NLP, speech processing, and reinforcement learning.

2. Keras

Keras is a high-level neural network API. Keras 3 can run on top of TensorFlow, JAX, or PyTorch, and earlier versions also supported Theano and CNTK as backends. It is designed to simplify the process of building deep learning models by providing intuitive classes for layers, models, optimizers, and loss functions. Keras allows quick prototyping and experimentation with neural networks without delving into low-level tensor operations. Its primary applications include image classification, text classification, sequence modeling, and generative models.

3. PyTorch

PyTorch is an open-source deep learning framework originally developed by Facebook (now Meta) that emphasizes dynamic computation graphs and ease of experimentation. TensorFlow 1.x required building a static graph before running it. PyTorch instead constructs the computational graph on the fly as the code executes, making it flexible for research and development. PyTorch supports GPU acceleration and has a rich ecosystem including TorchVision for computer vision, TorchText for NLP, and TorchAudio for audio processing. It is widely used in research, academic projects, and industrial applications, especially where custom models or dynamic architectures are required.

4. NumPy

NumPy is a fundamental library for numerical computing in Python and is widely used in deep learning for handling arrays, matrices, and basic mathematical operations. Deep learning workflows rely on NumPy for data preprocessing, reshaping, and vectorized computations, and both TensorFlow and PyTorch can convert their tensors to and from NumPy arrays. Although NumPy does not provide neural network functionality by itself, it is essential for managing and transforming input data before feeding it into models.

5. Pandas

Pandas is a library for data manipulation and analysis, particularly for structured tabular data. It provides data structures like DataFrames and tools for cleaning, filtering, merging, and transforming datasets, which are essential steps before training deep learning models. Pandas is commonly used in data preprocessing, feature engineering, and handling datasets for supervised learning tasks.

6. Matplotlib and Seaborn

Matplotlib and Seaborn are visualization libraries in Python. Matplotlib provides low-level plotting capabilities for line charts, scatter plots, and histograms, while Seaborn builds on it for advanced statistical visualizations. Visualizing training loss, accuracy, and model predictions helps in understanding the model’s performance and debugging issues. These libraries are essential for analyzing data distributions and model outcomes in deep learning workflows.

7. OpenCV

OpenCV is an open-source computer vision library used for image and video processing. In deep learning, it is often used for reading, resizing, normalizing, and augmenting images before feeding them into convolutional neural networks (CNNs). OpenCV provides Python bindings (the cv2 module), allowing seamless integration with TensorFlow, Keras, and PyTorch for computer vision applications like object detection, facial recognition, and real-time video analysis.

8. Scikit-learn

Scikit-learn is a Python library for machine learning and data preprocessing that complements deep learning workflows. It provides tools for standardization, normalization, feature selection, dimensionality reduction, and model evaluation metrics. Although scikit-learn is not designed for deep learning, it is widely used for preprocessing data, splitting datasets, and evaluating neural network models.
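The preprocessing steps mentioned above follow a pattern worth getting right: split the data first, then compute scaling statistics from the training data only, so that no information from the test set leaks into training. The helpers below are hypothetical plain-Python stand-ins for scikit-learn's train_test_split and StandardScaler, which also standardizes with the population standard deviation. They are not the library's code.

```python
import random
import statistics

def train_test_split(rows, test_fraction, seed):
    """Hypothetical helper: shuffle a copy of the rows and split off a test set."""
    rows = list(rows)
    random.Random(seed).shuffle(rows)
    n_test = int(len(rows) * test_fraction)
    return rows[n_test:], rows[:n_test]

def fit_standardizer(values):
    # Learn mean and population standard deviation from the training data only.
    return statistics.fmean(values), statistics.pstdev(values)

def standardize(values, mean, std):
    return [(v - mean) / std for v in values]

values = [float(v) for v in range(1, 101)]  # illustrative feature column
train, test = train_test_split(values, test_fraction=0.2, seed=0)
mean, std = fit_standardizer(train)
train_scaled = standardize(train, mean, std)  # mean 0, std 1 by construction
test_scaled = standardize(test, mean, std)    # reuses the training statistics
print(round(mean, 2), round(std, 2))
```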

9. Hugging Face Transformers

Hugging Face Transformers is a library for state-of-the-art NLP models, including BERT, GPT, RoBERTa, T5, and many others. It allows fine-tuning pre-trained transformer models for custom NLP tasks like text classification, translation, summarization, and question answering. Hugging Face simplifies working with transformers in Python and integrates seamlessly with PyTorch and TensorFlow.

10. TensorBoard

TensorBoard is a visualization toolkit that ships with TensorFlow and can also be used from PyTorch through torch.utils.tensorboard. It is used for monitoring training progress, visualizing computation graphs, and tracking metrics like loss and accuracy. It helps in debugging, optimizing model architectures, and comparing experiments, which makes it especially valuable for large-scale model development and performance analysis.

Python’s deep learning ecosystem integrates these libraries, providing a comprehensive toolkit for building neural networks, processing data, performing numerical computations, visualizing results, and deploying models in real-world applications. Libraries like TensorFlow, Keras, PyTorch, and Hugging Face handle neural network training, while NumPy, Pandas, Matplotlib, OpenCV, and Scikit-learn support data preparation, manipulation, and visualization, ensuring end-to-end deep learning solutions are achievable in Python.

Types of Deep Learning in Python

Deep Learning in Python can be categorized into several types based on the neural network architecture and the kind of data they are designed to process. Each type is suited to specific tasks, from image recognition to sequence modeling, and Python provides libraries such as TensorFlow, Keras, PyTorch, and Hugging Face Transformers to implement these architectures efficiently.

1. Feedforward Neural Networks (FNN)

Feedforward Neural Networks are the most basic type of neural network, where information flows only in one direction—from input to output—without any cycles or feedback loops. FNNs are used for regression and classification tasks, where the goal is to map input features to output predictions. They consist of input layers, hidden layers, and output layers, with neurons connected through weighted edges. Deep feedforward networks with multiple hidden layers can capture complex non-linear relationships. Python allows implementing FNNs easily using Keras Sequential models or PyTorch nn.Sequential, providing built-in layers and activation functions.

2. Convolutional Neural Networks (CNN)

Convolutional Neural Networks are specialized for spatial and image data, leveraging convolutional layers to extract features such as edges, textures, and patterns. Pooling layers reduce dimensionality, making computation more efficient. CNNs are extensively used in image classification, object detection, facial recognition, and medical imaging. Variants include LeNet, AlexNet, VGGNet, ResNet, and U-Net, each optimized for specific image processing tasks. Python frameworks provide ready-made CNN layers. Keras offers Conv2D, MaxPooling2D, and Flatten, PyTorch offers nn.Conv2d, nn.MaxPool2d, and nn.Flatten, and both include activation functions like ReLU.

3. Recurrent Neural Networks (RNN)

Recurrent Neural Networks are designed for sequential data, such as text, speech, or time series, where current outputs depend on previous inputs. Standard RNNs maintain hidden states to store past information, but they struggle with long-term dependencies. Variants like LSTM (Long Short-Term Memory) and GRU (Gated Recurrent Unit) address this by using gating mechanisms to retain relevant information over long sequences. RNNs are widely used in language modeling, text generation, speech recognition, and stock price prediction. Python allows defining RNNs through layers like SimpleRNN, LSTM, and GRU in Keras, or nn.RNN, nn.LSTM, and nn.GRU in PyTorch.
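The gating mechanism can be sketched as one step of an LSTM cell with scalar input and state. The gate parameters are illustrative assumptions. A large forget-gate bias keeps the forget gate near 1, and the input gate opens only for large inputs. As a result, a value written at the first step survives twenty steps of zero input largely intact.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h_prev, c_prev, p):
    """One step of a scalar LSTM cell.
    p maps each gate name to (input weight, recurrent weight, bias)."""
    def gate(name, act):
        wx, wh, b = p[name]
        return act(wx * x + wh * h_prev + b)
    f = gate("forget", sigmoid)       # how much of the old cell state to keep
    i = gate("input", sigmoid)        # how much new information to write
    g = gate("candidate", math.tanh)  # the candidate new information
    o = gate("output", sigmoid)       # how much of the cell state to expose
    c = f * c_prev + i * g            # additive cell-state update
    h = o * math.tanh(c)
    return h, c

params = {  # illustrative values, not learned
    "forget": (0.0, 0.0, 10.0),   # large bias: forget gate stays near 1, so memory is kept
    "input": (10.0, 0.0, -5.0),   # opens only when the input is large
    "candidate": (1.0, 0.0, 0.0),
    "output": (0.0, 0.0, 0.0),
}

h, c = 0.0, 0.0
for x in [1.0] + [0.0] * 20:  # one signal, then twenty steps of silence
    h, c = lstm_step(x, h, c, params)
print(round(c, 4), round(h, 4))  # the cell state still remembers the first input
```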

4. Transformers

Transformers are advanced neural networks designed primarily for natural language processing (NLP) but are increasingly used for images and other data types. They use self-attention mechanisms to capture relationships between all elements in a sequence simultaneously, enabling parallel processing and long-term dependency modeling. Transformers include models such as BERT, GPT, T5, and Vision Transformers (ViT) for text and image tasks. Python libraries like Hugging Face Transformers, TensorFlow, and PyTorch provide pre-trained models and utilities for fine-tuning on custom datasets.

5. Autoencoders

Autoencoders are unsupervised neural networks used for dimensionality reduction, feature learning, and data reconstruction. They consist of an encoder that compresses the input into a latent representation and a decoder that reconstructs the original data. Variants include Denoising Autoencoders for noise removal, Sparse Autoencoders for efficient representation, and Variational Autoencoders (VAE) for generative modeling. Autoencoders are applied in image compression, anomaly detection, and generative tasks, and can be implemented in Python using Keras or PyTorch.

6. Generative Adversarial Networks (GANs)

Generative Adversarial Networks are composed of two neural networks—a generator and a discriminator—that compete in a game-theoretic manner. The generator creates fake data, while the discriminator attempts to distinguish real from fake. This architecture is widely used in image synthesis, deepfake generation, and data augmentation. GANs can be implemented in Python using TensorFlow, Keras, and PyTorch, allowing researchers and developers to create high-quality synthetic data for various applications.
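The competition between the two networks is expressed through their loss functions. The sketch below computes the standard discriminator loss and the widely used "non-saturating" generator loss from discriminator output probabilities. The generator and discriminator networks themselves and the alternating training loop are omitted, and the probability values are made up.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy for the discriminator: push D(real) toward 1
    and D(fake) toward 0. Inputs are discriminator output probabilities."""
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Non-saturating generator loss: low when the discriminator rates fakes as real."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)

# A confident discriminator: real rated 0.9, fake rated 0.1 (made-up numbers).
print(discriminator_loss([0.9, 0.9], [0.1, 0.1]), generator_loss([0.1, 0.1]))
# A fooled discriminator rates fakes as 0.9, so the generator loss drops.
print(generator_loss([0.9]))
# A perfectly confused discriminator (0.5 everywhere) has loss 2 ln 2, about 1.386.
print(discriminator_loss([0.5], [0.5]))
```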

7. Deep Reinforcement Learning (DRL)

Deep Reinforcement Learning combines deep learning with reinforcement learning. An agent learns to make sequential decisions by interacting with an environment and uses a neural network to estimate values or choose actions. Over time, the agent learns to maximize a cumulative reward signal. DRL is used in autonomous vehicles, robotics, game AI, and resource optimization. In Python, environment toolkits such as OpenAI Gym (now maintained as Gymnasium) are commonly combined with libraries such as Stable-Baselines3, TF-Agents for TensorFlow, and TorchRL for PyTorch to build and train DRL models.
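At the core of value-based reinforcement learning is an update toward a target of reward plus discounted future value. To keep the sketch short and runnable, it uses tabular Q-learning on a hypothetical five-cell corridor. This is a swapped-in, simpler technique: deep RL methods such as DQN replace the table with a neural network but use the same target. The environment, rewards, and hyperparameters are assumptions for illustration.

```python
import random

N_STATES = 5        # corridor cells 0..4; reaching cell 4 ends the episode with reward 1
ACTIONS = (-1, +1)  # move left or move right

def step(state, action):
    nxt = min(max(state + action, 0), N_STATES - 1)
    reward = 1.0 if nxt == N_STATES - 1 else 0.0
    return nxt, reward, nxt == N_STATES - 1

def q_learning(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # the Q-table: one value per (state, action)
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: usually exploit the best-known action, sometimes explore;
            # ties are broken at random.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                a = rng.randrange(2)
            else:
                a = 0 if q[state][0] > q[state][1] else 1
            nxt, reward, done = step(state, ACTIONS[a])
            # TD target: immediate reward plus the discounted best value of the next state
            target = reward if done else reward + gamma * max(q[nxt])
            q[state][a] += alpha * (target - q[state][a])
            state = nxt
    return q

q = q_learning()
greedy_policy = [ACTIONS[0] if row[0] > row[1] else ACTIONS[1] for row in q[:-1]]
print(greedy_policy)  # the learned policy moves right toward the reward
```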

Python provides a comprehensive ecosystem for implementing all types of deep learning architectures, from basic feedforward networks to complex transformers, GANs, and reinforcement learning models. Each type has specialized applications in fields such as computer vision, natural language processing, sequence modeling, generative modeling, and intelligent agent development. By leveraging Python libraries, developers can rapidly prototype, train, and deploy deep learning models, making deep learning both accessible and scalable for real-world applications.

Class Sessions

1- Introduction to Python

2- Basic Syntax and Variables

3- Basic Input & Output

4- Control Flow Statements

5- Introduction to Python in AI

6- Libraries supported in Python for AI

7- OpenCV

8- Scikit-image

9- Mediapipe

10- Introduction to TensorFlow

11- Introduction to PyTorch

12- Machine Learning in Python

13- Deep Learning in Python

14- Introduction to Robotics and Automation Applications in Python