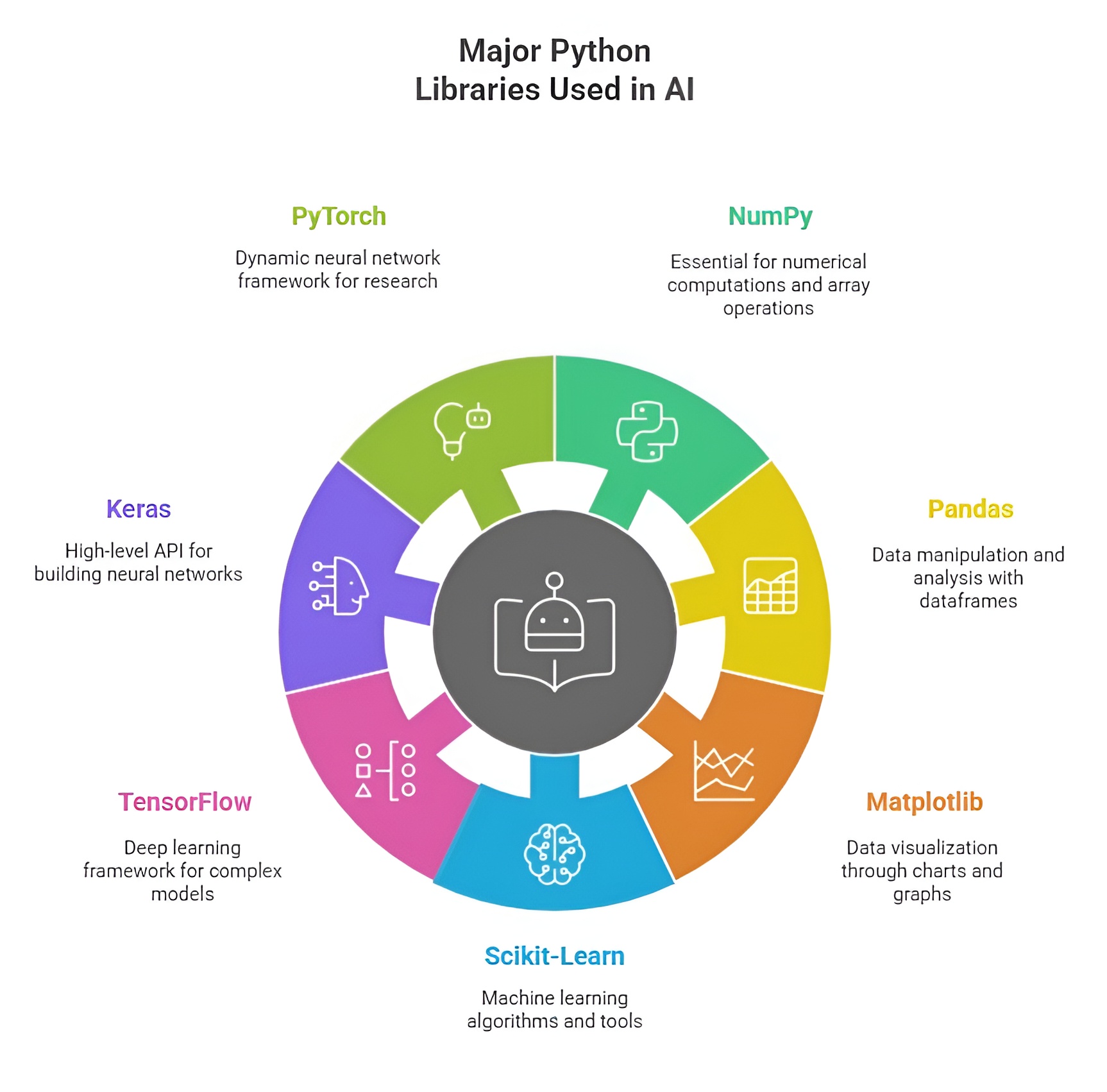

Major Python Libraries Used in AI

Python is the leading language for AI because of its simplicity, readability, and rich ecosystem of specialized libraries. These libraries are tailored to different AI tasks such as machine learning, deep learning, computer vision, natural language processing, and data analysis. Organizing Python libraries according to their main application areas helps in understanding their purpose and selecting the right tools for specific AI projects.

1) Machine Learning Libraries

Python offers several powerful libraries for machine learning that simplify model development, training, and evaluation. Key libraries include scikit-learn for traditional ML algorithms, XGBoost and LightGBM for gradient boosting, and TensorFlow or PyTorch for deep learning models. These libraries provide built-in functions for preprocessing, feature selection, model training, and evaluation. Overall, Python’s machine learning libraries enable efficient, flexible, and scalable AI solutions for a wide range of applications.

Scikit-learn

Scikit-learn is a versatile Python library for machine learning and statistical modeling. It provides tools for classification, regression, clustering, dimensionality reduction, and preprocessing. Scikit-learn allows developers to implement machine learning algorithms without building models from scratch, making it ideal for predictive modeling, recommendation systems, and anomaly detection. Its integration with NumPy and Pandas ensures efficient handling of datasets and preprocessing workflows.
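As a minimal sketch of how this looks in practice, assuming scikit-learn is installed, the snippet below chains preprocessing (`StandardScaler`) and a classifier (`LogisticRegression`) into one pipeline and evaluates it on the Iris dataset bundled with the library. The split ratio and random seed are arbitrary choices for illustration.

```python
# A minimal scikit-learn workflow: preprocessing + classification in one Pipeline.
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# load_iris ships with scikit-learn, so no download is needed.
X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Scale the features, then fit a logistic-regression classifier on the scaled data.
model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
model.fit(X_train, y_train)

# Evaluate on the held-out split.
accuracy = accuracy_score(y_test, model.predict(X_test))
print(f"Test accuracy: {accuracy:.2f}")
```

Because the scaler lives inside the pipeline, it is fitted only on the training split, which avoids leaking test-set statistics into training.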

NumPy

NumPy is the foundational library for numerical computing in Python and is widely used in machine learning. It provides multi-dimensional arrays and mathematical functions that enable efficient computation on large datasets. NumPy is used for matrix operations, linear algebra, and statistical analysis, all of which are essential for training and deploying machine learning algorithms.
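The following sketch illustrates the kind of vectorized linear algebra NumPy is used for: standardizing features and fitting a linear model by least squares. The data is synthetic, generated from an assumed relation y = 3*x0 - 2*x1 + 0.5 with no noise, so the recovered coefficients can be checked.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

# 200 synthetic samples with 2 features; targets follow y = 3*x0 - 2*x1 + 0.5 exactly.
X = rng.normal(size=(200, 2))
y = X @ np.array([3.0, -2.0]) + 0.5

# Standardize each column with vectorized mean/std (no Python loops).
X_std = (X - X.mean(axis=0)) / X.std(axis=0)

# Append a column of ones for the intercept and solve the least-squares problem.
A = np.column_stack([X, np.ones(len(X))])
coef, *_ = np.linalg.lstsq(A, y, rcond=None)
print(coef)  # approximately 3, -2, 0.5
```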

Pandas

Pandas is a library for data manipulation and analysis. It provides data structures like DataFrames and Series to handle structured data efficiently. Pandas is used in AI workflows to clean datasets, handle missing values, and perform transformations needed for model training. Its integration with other libraries like Matplotlib and Scikit-learn makes it indispensable for machine learning projects.
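A small sketch of the cleaning steps described above, on a hypothetical five-row table: numeric gaps are filled with column medians and a categorical column is one-hot encoded so it can be passed to a model. Median imputation is one common choice among several, not the only correct one.

```python
import numpy as np
import pandas as pd

# A small hypothetical dataset with missing values and a categorical column.
df = pd.DataFrame({
    "age": [34, np.nan, 29, 41, np.nan],
    "income": [52000, 61000, np.nan, 75000, 58000],
    "city": ["Paris", "Lyon", "Paris", None, "Lyon"],
})

# Fill numeric gaps with each column's median.
numeric_cols = ["age", "income"]
df[numeric_cols] = df[numeric_cols].fillna(df[numeric_cols].median())

# Mark unknown categories explicitly, then one-hot encode for model training.
df["city"] = df["city"].fillna("Unknown")
features = pd.get_dummies(df, columns=["city"], dtype=int)

print(features)
```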

2) Deep Learning Libraries

Python provides specialized libraries for deep learning that facilitate building and training neural networks efficiently. Popular libraries include TensorFlow and Keras for designing complex architectures, and PyTorch for dynamic computation and flexibility in research. These libraries support GPU acceleration, automatic differentiation, and pre-trained models for faster development. Overall, Python’s deep learning libraries enable creating advanced AI systems for tasks like image recognition, natural language processing, and reinforcement learning.

TensorFlow

TensorFlow is an open-source deep learning framework developed by Google. It allows developers to design and train neural networks for tasks like image recognition, natural language processing, and reinforcement learning. TensorFlow runs on CPUs, GPUs, and TPUs, and it provides Keras as a high-level API for easier model building and deployment. Its versatility makes it suitable for large-scale AI applications in research and industry.
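A minimal sketch of TensorFlow's low-level API, assuming TensorFlow 2.x: it lists the GPUs TensorFlow can see and uses `tf.GradientTape` to compute a derivative automatically. The function being differentiated is an arbitrary example.

```python
import tensorflow as tf

# List any GPUs TensorFlow can see (an empty list means CPU-only execution).
print("GPUs:", tf.config.list_physical_devices("GPU"))

# Automatic differentiation: d/dx (x**2 + 2x) at x = 3 is 2*3 + 2 = 8.
x = tf.Variable(3.0)
with tf.GradientTape() as tape:
    y = x ** 2 + 2.0 * x  # operations on x are recorded by the tape
grad = tape.gradient(y, x)
print(float(grad))  # 8.0
```

The same mechanism computes gradients for every weight in a neural network during training.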

PyTorch

PyTorch is an open-source deep learning library originally developed by Facebook's AI Research lab (now Meta AI). It builds computational graphs dynamically as the code runs, so models can use ordinary Python control flow and be modified between runs, which is ideal for research and experimentation. PyTorch supports tensor computations, automatic differentiation, and GPU acceleration. It is widely used in computer vision, natural language processing, and reinforcement learning due to its flexibility and ease of use.
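The sketch below, assuming a recent PyTorch release, fits a one-variable linear model to synthetic data generated from y = 2x + 1. It shows device selection, automatic differentiation via `loss.backward()`, and a standard training loop; the learning rate and step count are illustrative choices.

```python
import torch
from torch import nn

torch.manual_seed(0)
# Use a GPU when one is available; otherwise fall back to the CPU.
device = "cuda" if torch.cuda.is_available() else "cpu"

# Synthetic data for y = 2x + 1 (no noise), shaped (N, 1) as nn.Linear expects.
x = torch.linspace(-1, 1, 100, device=device).unsqueeze(1)
y = 2 * x + 1

model = nn.Linear(1, 1).to(device)
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)
loss_fn = nn.MSELoss()

for step in range(500):
    optimizer.zero_grad()           # clear gradients from the previous step
    loss = loss_fn(model(x), y)     # the graph is recorded as this line runs
    loss.backward()                 # autograd computes gradients for weight and bias
    optimizer.step()                # gradient-descent update

weight, bias = model.weight.item(), model.bias.item()
print(f"weight={weight:.3f}, bias={bias:.3f}")  # close to 2 and 1
```

Because the graph is rebuilt on every forward pass, the loop body could contain ordinary `if` statements or loops that depend on the data.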

Keras

Keras is a high-level neural network API that ships with TensorFlow as tf.keras; since Keras 3, it can also run on JAX and PyTorch backends. It allows developers to quickly prototype and train deep learning models with minimal code. Keras takes a modular approach to building neural networks, letting developers combine layers, optimizers, and loss functions efficiently. It is widely used for image classification, time-series prediction, and NLP tasks.
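A minimal Keras sketch, assuming TensorFlow 2.x (or Keras 3 with the TensorFlow backend): layers are stacked in a `Sequential` model, and `compile()` attaches the optimizer and loss. The dataset is random toy data with labels derived from the signs of two features, so the numbers carry no meaning beyond showing the workflow.

```python
import numpy as np
from tensorflow import keras

# Hypothetical toy data: 200 samples, 4 features, 3 classes.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 4)).astype("float32")
# Label = number of positive values among the first two features (0, 1, or 2).
y = (X[:, 0] > 0).astype("int64") + (X[:, 1] > 0).astype("int64")

# Stack layers, then choose an optimizer and a loss in compile().
model = keras.Sequential([
    keras.Input(shape=(4,)),
    keras.layers.Dense(16, activation="relu"),
    keras.layers.Dense(3, activation="softmax"),
])
model.compile(optimizer="adam", loss="sparse_categorical_crossentropy", metrics=["accuracy"])
history = model.fit(X, y, epochs=5, batch_size=32, verbose=0)

probs = model.predict(X[:5], verbose=0)
print(probs.shape)  # (5, 3): one probability per class for each sample
```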

3) Computer Vision Libraries

Python offers several libraries for computer vision that enable processing, analyzing, and interpreting visual data. Key libraries include OpenCV for image and video manipulation, PIL/Pillow for basic image processing, and scikit-image for advanced image analysis. These libraries provide tools for feature extraction, object detection, image filtering, and transformation. Overall, Python’s computer vision libraries simplify developing applications in fields like robotics, surveillance, medical imaging, and augmented reality.

OpenCV

OpenCV (Open Source Computer Vision Library) specializes in image and video processing. It provides tools for tasks such as object detection, facial recognition, motion tracking, and video analysis. OpenCV can be combined with deep learning libraries like TensorFlow and PyTorch to build AI-powered visual recognition systems. Its extensive functions for image manipulation and analysis make it a key library in computer vision applications.

PIL (Python Imaging Library)

PIL is used for opening, manipulating, and saving images in various formats. The original PIL is no longer maintained; today it is provided by Pillow, an actively maintained fork that keeps the same PIL import name. It supports tasks like image resizing, cropping, and filtering, which are essential preprocessing steps in computer vision pipelines. PIL is often used alongside OpenCV for image processing tasks in AI projects.
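A sketch of a typical preprocessing chain with Pillow. The 640x480 solid-color image stands in for a real photo, and the 256/224 sizes are assumptions borrowed from common image-classification setups rather than requirements.

```python
from io import BytesIO

from PIL import Image, ImageFilter

# Hypothetical input: a solid-color 640x480 RGB image standing in for a photo.
original = Image.new("RGB", (640, 480), color=(200, 120, 40))

# Typical preprocessing: resize, center-crop, denoise, convert to grayscale.
resized = original.resize((256, 256))
left = top = (256 - 224) // 2
cropped = resized.crop((left, top, left + 224, top + 224))  # box is (left, upper, right, lower)
processed = cropped.filter(ImageFilter.GaussianBlur(radius=1)).convert("L")

# Save to an in-memory PNG and read it back, as one would with a file path.
buffer = BytesIO()
processed.save(buffer, format="PNG")
buffer.seek(0)
reloaded = Image.open(buffer)
print(reloaded.size, reloaded.mode)  # (224, 224) L
```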

4) Natural Language Processing (NLP) Libraries

Python provides powerful libraries for Natural Language Processing (NLP) to analyze, process, and understand human language. Popular libraries include NLTK for text processing and linguistic analysis, spaCy for efficient NLP pipelines, and Hugging Face Transformers for state-of-the-art language models. These libraries support tokenization, sentiment analysis, named entity recognition, and machine translation. Overall, Python’s NLP libraries enable building intelligent applications like chatbots, language translators, and text analytics systems.

NLTK (Natural Language Toolkit)

NLTK is a Python library for text processing and NLP tasks. It provides tools for tokenization, stemming, parsing, tagging, and semantic reasoning. NLTK is widely used for educational purposes, research, and building NLP applications such as chatbots, text analysis, and sentiment detection.
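A short NLTK sketch of tokenization, stemming, and frequency counting. It uses `wordpunct_tokenize` and `PorterStemmer`, which work without downloading extra NLTK data (`word_tokenize`, by contrast, needs the Punkt tokenizer data). The sample sentence is invented.

```python
from nltk import FreqDist
from nltk.stem import PorterStemmer
from nltk.tokenize import wordpunct_tokenize

text = "The chatbot was answering questions while users were asking more questions."

# wordpunct_tokenize is regex-based; keep alphabetic tokens and lowercase them.
tokens = [t.lower() for t in wordpunct_tokenize(text) if t.isalpha()]

# Reduce words to their stems, e.g. "asking" -> "ask", "questions" -> "question".
stemmer = PorterStemmer()
stems = [stemmer.stem(t) for t in tokens]

# Count how often each stem occurs.
freq = FreqDist(stems)
print(freq.most_common(1))
```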

spaCy

spaCy is an industrial-strength NLP library optimized for performance and efficiency. It provides pipelines for tasks such as named entity recognition, part-of-speech tagging, dependency parsing, and text classification. spaCy is used in real-world AI applications where speed and accuracy are critical, such as automated customer support systems and information extraction tools.
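A runnable sketch, assuming spaCy v3: a blank English pipeline plus a rule-based `entity_ruler` recognizes entities from hand-written patterns. In production one would typically load a trained pipeline (for example `en_core_web_sm`, downloaded separately) for statistical named entity recognition; the company and product names here are hypothetical.

```python
import spacy

# A blank English pipeline: tokenizer only, no model download required.
nlp = spacy.blank("en")

# Add a rule-based entity recognizer driven by explicit patterns.
ruler = nlp.add_pipe("entity_ruler")
ruler.add_patterns([
    # Phrase pattern: matches the exact token sequence "Acme Corp".
    {"label": "ORG", "pattern": "Acme Corp"},
    # Token pattern: the word "widget" (any case) followed by a number-like token.
    {"label": "PRODUCT", "pattern": [{"LOWER": "widget"}, {"LIKE_NUM": True}]},
])

doc = nlp("Acme Corp shipped Widget 42 to three new customers.")
for ent in doc.ents:
    print(ent.text, ent.label_)
```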

Hugging Face Transformers

Hugging Face Transformers provides pre-trained state-of-the-art models for NLP tasks, including BERT, GPT, RoBERTa, and T5. It allows developers to perform text classification, summarization, translation, and generation without training models from scratch. Hugging Face Transformers accelerates NLP development and is widely adopted in research and production AI systems.

5) Data Visualization Libraries

Python offers a variety of libraries for creating visual representations of data to aid analysis and interpretation. Popular libraries include Matplotlib for flexible plotting, Seaborn for statistical visualizations, Plotly for interactive plots, and Bokeh for web-based dashboards. These libraries support charts, graphs, heatmaps, and 3D visualizations with extensive customization options. Overall, Python’s data visualization libraries help communicate insights effectively and make complex data more understandable.

Matplotlib

Matplotlib is a Python library for creating static, animated, and interactive visualizations. It is used in AI to visualize datasets, model performance, and training metrics. Visualization is crucial for understanding patterns, debugging algorithms, and interpreting results in machine learning and deep learning workflows.
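A minimal sketch of plotting training metrics with Matplotlib. The loss values are synthetic curves generated from a formula, not results from a real model, and the output filename is arbitrary.

```python
import matplotlib

matplotlib.use("Agg")  # non-interactive backend: render to files, no window needed
import matplotlib.pyplot as plt
import numpy as np

# Synthetic loss curves for illustration only (not real training results).
epochs = np.arange(1, 21)
train_loss = np.exp(-epochs / 6) + 0.05
val_loss = np.exp(-epochs / 6) + 0.05 + 0.002 * epochs

fig, ax = plt.subplots(figsize=(6, 4))
ax.plot(epochs, train_loss, label="training loss")
ax.plot(epochs, val_loss, label="validation loss")
ax.set_xlabel("epoch")
ax.set_ylabel("loss")
ax.set_title("Training vs. validation loss")
ax.legend()
fig.savefig("loss_curves.png", dpi=100)
```

A widening gap between training and validation loss, as drawn here, is the usual visual cue for overfitting.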

Seaborn

Seaborn is built on Matplotlib and provides high-level statistical visualizations. It simplifies the creation of complex plots like heatmaps, violin plots, and regression plots. Seaborn is often used alongside Matplotlib in AI projects to present data insights in a visually appealing and informative manner.
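A sketch of a Seaborn correlation heatmap on a hypothetical three-feature table, where `feature_b` is deliberately constructed to correlate with `feature_a`. `sns.heatmap` draws onto a regular Matplotlib Axes, so Matplotlib calls can still adjust the figure.

```python
import matplotlib

matplotlib.use("Agg")  # render to a file without opening a window
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd
import seaborn as sns

# Hypothetical feature table; feature_b is built to correlate with feature_a.
rng = np.random.default_rng(0)
a = rng.normal(size=300)
df = pd.DataFrame({
    "feature_a": a,
    "feature_b": 0.8 * a + rng.normal(scale=0.5, size=300),
    "feature_c": rng.normal(size=300),
})

# One call draws an annotated correlation heatmap on a Matplotlib Axes.
corr = df.corr()
ax = sns.heatmap(corr, annot=True, fmt=".2f", cmap="coolwarm", vmin=-1, vmax=1)
ax.set_title("Feature correlations")
plt.tight_layout()
plt.savefig("correlations.png")
```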

Python provides a wide range of libraries tailored to different AI domains. For machine learning, libraries like Scikit-learn, NumPy, and Pandas provide robust tools for data processing and modeling. Deep learning relies on TensorFlow, PyTorch, and Keras for building and training neural networks. Computer vision applications use OpenCV and PIL for image and video processing, while NLP depends on NLTK, spaCy, and Hugging Face Transformers. Data visualization is supported by Matplotlib and Seaborn. Organizing libraries by AI domain helps developers choose the right tools for specific tasks and ensures efficient development of intelligent systems.

Importance of Python Libraries

Python libraries form the backbone of AI development, providing pre-built functions, tools, and frameworks that simplify the implementation of complex algorithms. These libraries reduce development time, minimize coding errors, and allow AI developers to focus on problem-solving rather than low-level programming. The importance of Python libraries lies in their ability to accelerate research, enhance productivity, and enable the deployment of robust, scalable AI applications across fields such as healthcare, finance, robotics, and natural language processing.

1) Simplifying Complex AI Tasks

Python libraries provide ready-to-use modules for machine learning, deep learning, computer vision, and natural language processing. Without these libraries, AI developers would need to manually implement complex algorithms, which is time-consuming and prone to errors. For example, TensorFlow and PyTorch provide built-in functions for neural network layers, optimization techniques, and backpropagation, enabling developers to train deep learning models efficiently. Libraries like Scikit-learn simplify the implementation of machine learning algorithms such as regression, classification, and clustering. By abstracting complexity, Python libraries make AI development accessible to both beginners and experts.

2) Accelerating Development and Prototyping

Rapid prototyping is critical in AI research and development because AI solutions require experimentation with different algorithms, architectures, and hyperparameters. Python libraries allow developers to build, test, and iterate models quickly. Keras, for instance, provides a high-level interface for designing neural networks, while Scikit-learn enables quick evaluation of multiple machine learning algorithms. Libraries for data manipulation (Pandas, NumPy) and visualization (Matplotlib, Seaborn) further accelerate experimentation by making data preprocessing and result analysis straightforward. This speed of development allows researchers to focus on optimizing models and discovering innovative solutions rather than writing code from scratch.
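To illustrate quick comparison of algorithms, here is a sketch that evaluates three scikit-learn classifiers with 5-fold cross-validation on the breast-cancer dataset bundled with the library. Because the estimators share the same `fit`/`predict` interface, swapping models means changing one dictionary entry; the model choices and hyperparameters are illustrative.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.tree import DecisionTreeClassifier

X, y = load_breast_cancer(return_X_y=True)  # bundled dataset, no download

# The estimators share one interface, so they are interchangeable in the loop below.
candidates = {
    "logistic_regression": make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000)),
    "decision_tree": DecisionTreeClassifier(random_state=0),
    "random_forest": RandomForestClassifier(n_estimators=100, random_state=0),
}

scores = {}
for name, estimator in candidates.items():
    # 5-fold cross-validation; the default score for classifiers is accuracy.
    scores[name] = cross_val_score(estimator, X, y, cv=5).mean()
    print(f"{name}: {scores[name]:.3f}")
```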

3) Enhancing Accuracy and Performance

Python libraries are optimized for high-performance computation, supporting GPU acceleration, efficient matrix operations, and parallel processing. Libraries like TensorFlow, PyTorch, and NumPy provide fast numerical computations, enabling large-scale AI models to train more efficiently. OpenCV provides efficient image and video processing, and PIL efficient image handling, both critical in computer vision applications. Faster computation lets developers train larger models on more data and run more experiments in the same time, which in turn helps them reach higher accuracy and build reliable, scalable AI systems.

4) Standardization and Consistency

Python libraries provide standardized APIs and methodologies for implementing AI algorithms, which promotes consistency across projects. For example, Scikit-learn estimators share a common fit/predict/transform interface, and spaCy organizes NLP processing into pipelines of components. Using such libraries encourages best practices and reduces variability in code quality. This standardization is important for collaboration in AI research and for maintaining reproducibility of experiments. It allows multiple developers to work on the same project seamlessly while maintaining clear, readable, and maintainable code.

5) Facilitating Integration and Scalability

Python libraries are designed to integrate seamlessly with other tools, frameworks, and platforms. Deep learning libraries like TensorFlow and PyTorch can be combined with computer vision libraries like OpenCV, or NLP libraries like Hugging Face Transformers, to create multi-functional AI applications. Python’s libraries also support deployment across platforms, including cloud environments, web applications, and edge devices. This integration capability ensures that AI solutions can scale from small prototypes to large, real-world applications efficiently.

6) Supporting Visualization and Interpretation

Interpreting AI model results is crucial for making data-driven decisions and understanding model behavior. Python libraries like Matplotlib and Seaborn enable detailed visualization of datasets, model outputs, and training metrics. Visualization helps in identifying patterns, diagnosing errors, and communicating insights effectively. Without these libraries, understanding complex AI models, especially deep learning networks, would be much more challenging and time-consuming.

7) Encouraging Community Collaboration and Innovation

Python libraries are open-source and maintained by large, active communities. This ensures continuous updates, improvements, and the addition of new features to support the latest AI techniques. Community-contributed libraries also provide tutorials, pre-trained models, and research-ready tools, which accelerate innovation. Developers can build on existing libraries to create novel AI solutions, fostering collaboration and knowledge sharing across industries and academic research.

Python libraries are crucial for AI because they simplify complex tasks, accelerate development and prototyping, enhance performance and accuracy, provide standardization, facilitate integration and scalability, support visualization, and promote community-driven innovation. They empower AI developers to focus on designing intelligent solutions instead of reinventing algorithms, making Python the preferred language for AI research, application, and real-world deployment.

Uses of Python Libraries in the Real World

Python libraries are the backbone of modern AI applications in the real world. They provide pre-built modules, tools, and frameworks that simplify the implementation of complex algorithms, enabling developers to focus on solving practical problems. These libraries are widely used across industries such as healthcare, finance, e-commerce, education, robotics, and entertainment. By leveraging Python libraries, developers can build efficient, scalable, and accurate AI systems that drive innovation and automation in real-world scenarios.

1) Machine Learning Applications

Scikit-learn in Predictive Modeling

Scikit-learn is widely used in predictive analytics, which helps businesses forecast trends, detect anomalies, and make data-driven decisions. For example, e-commerce companies use Scikit-learn to predict customer behavior, recommend products, and optimize marketing strategies. Financial institutions apply it to detect fraudulent transactions and assess credit risk. The pre-built algorithms in Scikit-learn reduce development time and provide reliable, tested methods for real-world machine learning tasks.
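Anomaly detection of the kind used for fraud screening can be sketched with scikit-learn's `IsolationForest`. The transactions below are synthetic (amount, hour-of-day) pairs with three planted outliers, and the `contamination` value is an assumption about how rare anomalies are, not a recommended setting.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

rng = np.random.default_rng(42)
# Hypothetical routine transactions: amounts around 50, mostly in the afternoon.
normal = np.column_stack([rng.normal(50, 15, 500), rng.normal(14, 3, 500)])
# A few planted unusual ones: very large amounts in the middle of the night.
unusual = np.array([[950.0, 3.0], [1200.0, 2.0], [800.0, 4.0]])
transactions = np.vstack([normal, unusual])

# contamination = assumed share of anomalies; points isolated in few splits score as outliers.
detector = IsolationForest(contamination=0.01, random_state=0)
labels = detector.fit_predict(transactions)  # -1 = anomaly, 1 = normal

flagged = transactions[labels == -1]
print(f"{len(flagged)} transactions flagged")
```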

NumPy and Pandas in Data Analysis

NumPy and Pandas are essential for handling and analyzing large datasets. In healthcare, these libraries are used to process patient records, medical images, and lab data to identify patterns and predict disease outcomes. In business, they help analyze sales, inventory, and customer data to optimize operations. By enabling efficient data manipulation and numerical computation, these libraries are critical for any AI application that depends on data-driven insights.
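A sketch of a routine business-analysis step on a hypothetical sales table: revenue is computed with vectorized column arithmetic, then aggregated per region with `groupby`.

```python
import pandas as pd

# Hypothetical sales records.
sales = pd.DataFrame({
    "region": ["North", "South", "North", "East", "South", "North"],
    "product": ["A", "A", "B", "B", "B", "A"],
    "units": [10, 4, 7, 3, 8, 5],
    "price": [2.5, 2.5, 4.0, 4.0, 4.0, 2.5],
})

# Vectorized column arithmetic (NumPy under the hood), then a grouped summary.
sales["revenue"] = sales["units"] * sales["price"]
summary = (
    sales.groupby("region")
    .agg(total_units=("units", "sum"), total_revenue=("revenue", "sum"))
    .sort_values("total_revenue", ascending=False)
)
print(summary)
```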

2) Deep Learning Applications

TensorFlow and Keras in Image Recognition

TensorFlow and Keras are widely used for deep learning applications such as medical image analysis, facial recognition, and autonomous vehicle vision systems. Hospitals use these libraries to build AI models that detect tumors in MRI scans. Security companies use them for facial recognition systems to enhance safety. The combination of GPU acceleration and high-level APIs makes these libraries highly effective for real-time, large-scale AI applications.

PyTorch in Research and NLP Models

PyTorch is used extensively in AI research and natural language processing (NLP) applications. It powers AI tools for machine translation, sentiment analysis, and chatbots. For example, social media platforms use PyTorch-based models to filter content, recommend posts, and improve user engagement. Its dynamic computation graphs make PyTorch suitable for developing experimental AI models and rapidly testing new algorithms in real-world applications.

3) Computer Vision Applications

OpenCV in Surveillance and Automation

OpenCV is used for object detection, motion tracking, and video analysis. In retail, it enables automated checkout systems and customer behavior analysis. In manufacturing, OpenCV-based systems monitor production lines for defects. Its ability to process images and videos efficiently makes it indispensable in AI applications that require real-time visual understanding.

PIL in Image Preprocessing

PIL (Python Imaging Library) is used for preprocessing images before they are fed into AI models. In e-commerce, PIL helps resize, crop, and optimize product images for recommendation systems. In healthcare, it is used to preprocess medical scans for analysis in AI-driven diagnostic tools.

4) Natural Language Processing Applications

NLTK in Text Analysis

NLTK is used to process and analyze textual data in AI applications. News agencies use it for automated content summarization and keyword extraction. Educational platforms use NLTK for sentiment analysis on student feedback to improve learning systems. It helps AI systems extract meaningful insights from text efficiently.

spaCy in Enterprise NLP

spaCy is used in enterprise applications for named entity recognition, information extraction, and document classification. Businesses use spaCy to automatically analyze contracts, extract important clauses, and streamline document management. Its speed and accuracy make it suitable for large-scale real-world applications where text processing is critical.

Hugging Face Transformers in Language Models

Hugging Face Transformers powers state-of-the-art NLP applications such as chatbots, virtual assistants, and question-answering systems. Companies use these pre-trained models to implement conversational AI, automate customer support, and generate human-like text for content creation. Because the models come pre-trained, developers can deploy advanced NLP capabilities without the extensive computational resources required to train such models from scratch.

5) Data Visualization Applications

Matplotlib and Seaborn in Business Insights

Matplotlib and Seaborn are used to visualize complex data and model results. Financial analysts use these libraries to plot stock trends and risk assessments. Healthcare professionals use them to visualize patient data trends and treatment outcomes. Visualization enables decision-makers to interpret AI model outputs clearly and act on the resulting insights.

6) Robotics and Automation Applications

ROS and PyRobot Integration

Python tools such as ROS (Robot Operating System), used from Python through its client libraries rospy and rclpy, and PyRobot are used to implement AI in robotics and automation. They enable autonomous navigation, object manipulation, and human-robot interaction. For example, warehouse robots use AI libraries to navigate efficiently, pick and place items, and collaborate with human workers. Python's libraries make these robotics applications robust, flexible, and easier to develop.

Python libraries have extensive uses in real-world AI applications across multiple domains. Machine learning, deep learning, computer vision, NLP, data visualization, and robotics all rely on specialized Python libraries to implement practical solutions efficiently. Scikit-learn, TensorFlow, Keras, PyTorch, OpenCV, NLTK, spaCy, Hugging Face Transformers, Matplotlib, and Pandas enable developers to build intelligent systems that are accurate, scalable, and deployable. Their pre-built tools, optimized functions, and integration capabilities make Python the most practical and powerful language for real-world AI development.