AWS offers over 200 services — databases, machine learning, security, analytics, and much more. That number can feel overwhelming at first. But the truth is, the vast majority of what you will build on AWS starts with just five core services.

These five services are the foundation of almost every cloud application on AWS. Whether you are deploying a simple website or building a complex DevOps pipeline, you will use these again and again.

Service 1 — Amazon EC2

EC2 (Elastic Compute Cloud) gives you virtual servers, called instances, that run in the cloud. You choose the operating system, the amount of CPU and memory, the storage, and the network configuration.

Once launched, it behaves just like a physical server, except it lives on AWS infrastructure and you can start or stop it whenever you want.

Think of EC2 as renting a computer that lives in an AWS data centre. You have full control over it.

Key Concepts in EC2

1. Instances: An instance is a single virtual server. You can run one instance or thousands simultaneously, depending on your needs.

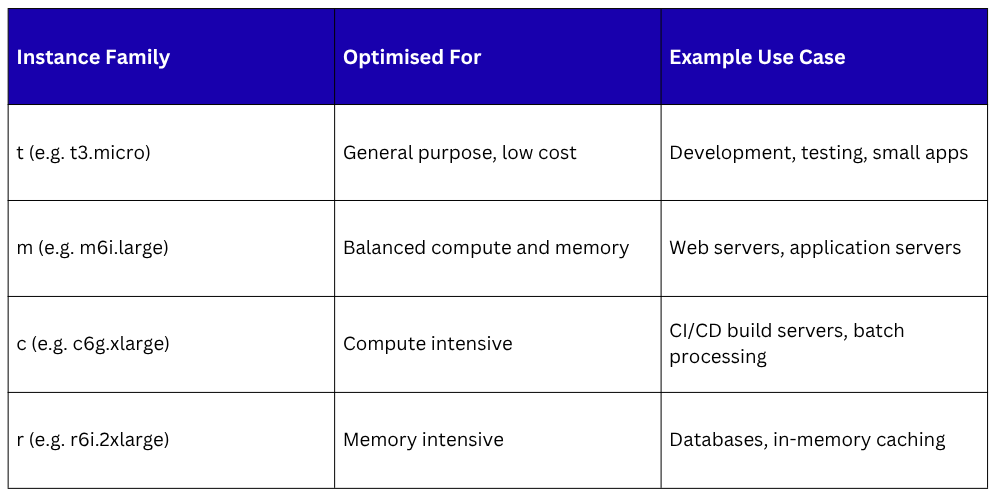

Instance Types

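AWS offers different instance types optimised for different workloads. The naming convention tells you what the instance is built for. In t3.micro, for example, t is the family (burstable general purpose), 3 is the generation, and micro is the size. Other common families include m (general purpose), c (compute optimised), and r (memory optimised).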
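To make the naming convention concrete, the Python sketch below splits a name such as c6g.xlarge into four parts: family, generation, optional attribute letters (for example g for an AWS Graviton processor or d for local NVMe instance storage), and size. The family table is a small illustrative subset. The regular expression is an assumption that covers common names, not every type AWS offers.

```python
import re
from dataclasses import dataclass

# Illustrative subset of family prefixes; AWS offers many more families.
FAMILIES = {
    "t": "burstable general purpose",
    "m": "general purpose",
    "c": "compute optimised",
    "r": "memory optimised",
    "i": "storage optimised",
    "g": "accelerated computing (GPU)",
    "p": "accelerated computing (GPU)",
}

# family letters, generation number, optional attribute letters, then ".size"
_NAME_RE = re.compile(r"([a-z]+)(\d+)([a-z-]*)\.([a-z0-9-]+)")


@dataclass
class InstanceType:
    family: str
    generation: int
    attributes: str  # e.g. "g" = Graviton CPU, "d" = local NVMe storage
    size: str        # e.g. "micro", "large", "2xlarge"

    @property
    def purpose(self) -> str:
        return FAMILIES.get(self.family, "not in this sketch's table")


def parse_instance_type(name: str) -> InstanceType:
    """Split a name such as 'c6g.xlarge' into its parts."""
    match = _NAME_RE.fullmatch(name)
    if match is None:
        raise ValueError(f"unrecognised instance type name: {name!r}")
    family, generation, attributes, size = match.groups()
    return InstanceType(family, int(generation), attributes, size)


if __name__ == "__main__":
    for name in ["t3.micro", "m5.large", "c6g.xlarge", "r5d.2xlarge"]:
        it = parse_instance_type(name)
        print(f"{name}: {it.purpose}, generation {it.generation}, "
              f"attributes {it.attributes or 'none'}, size {it.size}")
```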

2. AMI — Amazon Machine Image

An AMI is a template that contains the operating system and pre-installed software for your instance. When you launch an EC2 instance, you choose an AMI. AWS provides standard AMIs — Amazon Linux, Ubuntu, Windows — and you can also create your own custom AMIs.

3. Security Groups

A Security Group acts like a firewall for your EC2 instance. You define rules that control which traffic is allowed in and out — for example, allowing HTTP traffic on port 80 but blocking everything else.
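Security groups contain only allow rules. Traffic that matches no rule is dropped. Because security groups are stateful, responses to allowed inbound traffic are let back out automatically. The Python sketch below models only the inbound side of that logic. The `InboundRule` class, the `is_allowed` helper, and the example addresses (taken from the reserved documentation ranges) are hypothetical and not part of any AWS API.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class InboundRule:
    protocol: str   # "tcp" or "udp" in this sketch
    from_port: int
    to_port: int
    cidr: str       # source range; "0.0.0.0/0" means any IPv4 address


def is_allowed(rules, protocol: str, port: int, source_ip: str) -> bool:
    """Return True if some allow rule matches; anything else is dropped."""
    source = ipaddress.ip_address(source_ip)
    for rule in rules:
        if (rule.protocol == protocol
                and rule.from_port <= port <= rule.to_port
                and source in ipaddress.ip_network(rule.cidr)):
            return True
    return False  # security groups have no deny rules: no match means denied


# Hypothetical web-server group: HTTP from anywhere, SSH only from an admin range.
web_sg = [
    InboundRule("tcp", 80, 80, "0.0.0.0/0"),
    InboundRule("tcp", 22, 22, "203.0.113.0/24"),
]

if __name__ == "__main__":
    print(is_allowed(web_sg, "tcp", 80, "198.51.100.7"))    # True
    print(is_allowed(web_sg, "tcp", 22, "198.51.100.7"))    # False
    print(is_allowed(web_sg, "tcp", 3306, "203.0.113.5"))   # False
```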

4. Key Pairs

To connect securely to a Linux EC2 instance, you use a key pair — a public key stored on the instance and a private key you keep on your machine. This is how SSH access works on AWS.

5. Elastic IP

By default, an EC2 instance releases its public IPv4 address when you stop it and receives a new one when you start it again. An Elastic IP is a fixed, static public IP address that you can attach to your instance so it always keeps the same address.

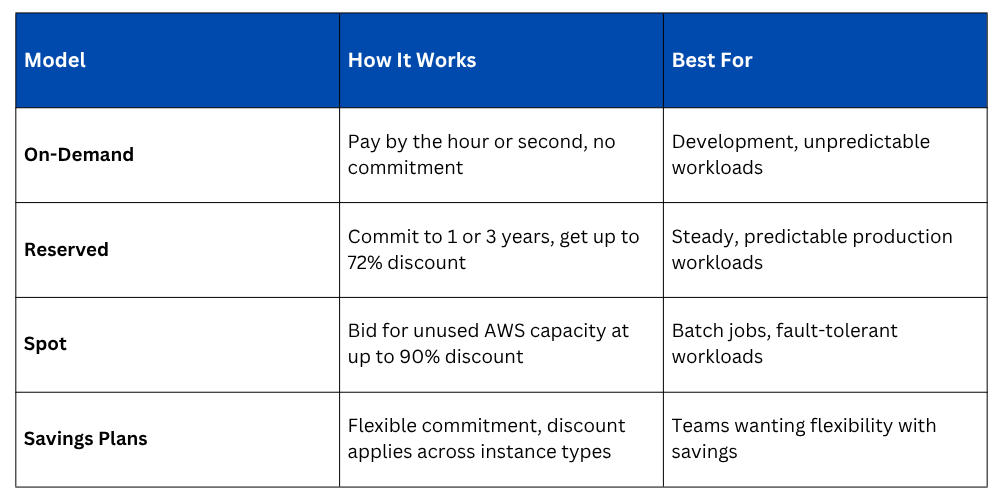

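EC2 Pricing Models

The main pricing options are:

- On-Demand Instances: pay by the second or hour with no commitment.
- Reserved Instances and Savings Plans: lower rates in exchange for a one- or three-year commitment.
- Spot Instances: spare AWS capacity at a steep discount, which AWS can reclaim with a two-minute warning.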

How EC2 fits into DevOps

1. Host your application servers, build servers, and CI/CD agents.

2. Run self-hosted tools like Jenkins or SonarQube.

3. Create custom environments for testing and staging.

4. Automate instance management using Infrastructure as Code tools like Terraform or AWS CDK.

Service 2 — Amazon S3

S3 (Simple Storage Service) is object storage. You can store any type of file, such as documents, images, videos, application binaries, database backups, and log files, and retrieve it from anywhere, at any time, at virtually unlimited scale.

You do not manage any servers or disks. You just upload files and S3 handles everything else.

Key Concepts in S3

1. Buckets: A bucket is a container for your files. Every file you store in S3 must live inside a bucket. Bucket names must be globally unique across all of AWS — no two customers can have a bucket with the same name.

2. Objects: An object is the file you store in S3 — along with its metadata. Every object has a unique key, which is essentially its file path within the bucket.

Example:

Bucket name: my-devops-project-artifacts

Object key: builds/v1.2.3/app.zip

Full path: s3://my-devops-project-artifacts/builds/v1.2.3/app.zip

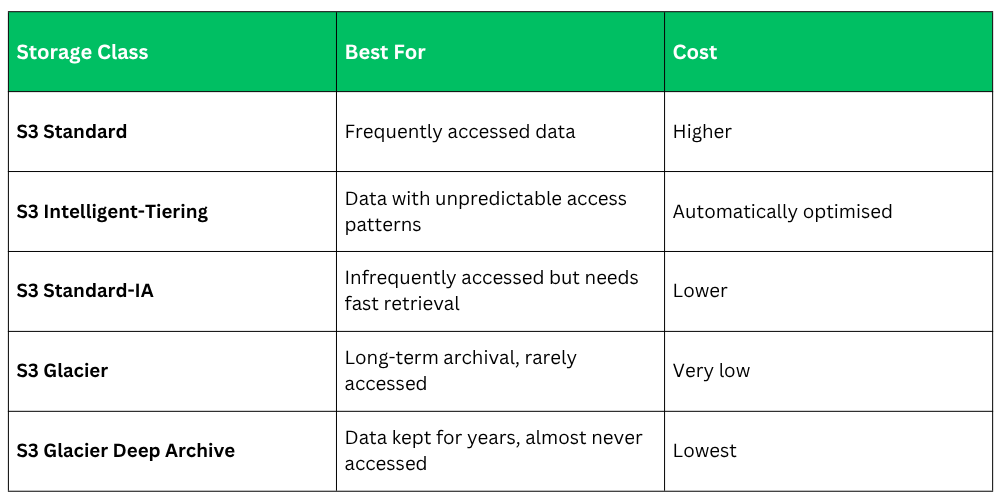

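3. Storage Classes: S3 offers different storage classes depending on how frequently you access your data:

- S3 Standard: for frequently accessed data.
- S3 Standard-IA (Infrequent Access) and S3 One Zone-IA: cheaper to store, but you pay a fee each time you retrieve data.
- S3 Intelligent-Tiering: moves objects between tiers automatically based on how often they are accessed.
- S3 Glacier classes (Instant Retrieval, Flexible Retrieval, Deep Archive): for archives, with cheaper storage in exchange for slower or more expensive retrieval.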

4. Versioning: S3 can keep multiple versions of the same file. If you overwrite or accidentally delete a file, you can restore a previous version. This is very useful for storing deployment artifacts and configuration files.

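5. S3 Bucket Policies and ACLs: You control access to your S3 buckets mainly through bucket policies. These are JSON-based rules that define who can read, write, or delete objects. ACLs (access control lists) are an older mechanism for granting access to individual buckets and objects. New buckets have ACLs disabled by default, and AWS recommends using policies instead. By default, all buckets are private.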
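The bucket/key split from the example above takes only a few lines of Python. The `split_s3_uri` and `is_valid_bucket_name` helpers are hypothetical, and the name check covers only a subset of AWS's published bucket naming rules.

```python
import re
from typing import Tuple

# A subset of the rules for general purpose bucket names (not exhaustive):
# 3-63 characters; lowercase letters, digits, dots, hyphens;
# must start and end with a letter or digit.
_BUCKET_RE = re.compile(r"[a-z0-9][a-z0-9.-]{1,61}[a-z0-9]")
_IP_LIKE_RE = re.compile(r"\d+\.\d+\.\d+\.\d+")


def is_valid_bucket_name(name: str) -> bool:
    return (
        _BUCKET_RE.fullmatch(name) is not None
        and ".." not in name                     # no adjacent dots
        and _IP_LIKE_RE.fullmatch(name) is None  # must not look like an IP address
    )


def split_s3_uri(uri: str) -> Tuple[str, str]:
    """Split 's3://bucket/key' into (bucket, key).

    The key is everything after the first '/', slashes included: S3 has no
    real folders, so 'builds/v1.2.3/' is simply part of the object's key.
    """
    prefix = "s3://"
    if not uri.startswith(prefix):
        raise ValueError(f"not an s3:// URI: {uri!r}")
    bucket, _, key = uri[len(prefix):].partition("/")
    if not is_valid_bucket_name(bucket):
        raise ValueError(f"invalid bucket name: {bucket!r}")
    return bucket, key


if __name__ == "__main__":
    bucket, key = split_s3_uri(
        "s3://my-devops-project-artifacts/builds/v1.2.3/app.zip")
    print(bucket)  # my-devops-project-artifacts
    print(key)     # builds/v1.2.3/app.zip
    print(is_valid_bucket_name("My_Bucket"))  # False: uppercase and underscore
```

The S3 console displays key prefixes such as builds/v1.2.3/ as folders, but the bucket itself stores a flat set of keys.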

How S3 fits into DevOps

1. Store build artifacts produced by your CI/CD pipeline.

2. Host static websites directly from an S3 bucket.

3. Store Terraform state files for Infrastructure as Code.

4. Archive application logs for long-term storage and compliance.

5. Underpin container workflows: Amazon ECR, AWS's Docker image registry, stores its image data in S3.

6. Serve as a source stage in AWS CodePipeline.

Service 3 — AWS IAM

IAM is the security backbone of your entire AWS account. It lets you define and manage:

1. Who can access your AWS account — users, applications, and services.

2. What they are allowed to do — read, write, delete, deploy, and so on.

3. Which resources they can access — specific S3 buckets, specific EC2 instances, specific services.

Everything in AWS goes through IAM. Every API call, every CLI command, every automated process — IAM checks whether it is allowed before anything happens.

Key Concepts in IAM

1. Users: An IAM User is an identity inside your AWS account that represents a person or an application. Each user has their own credentials: a username and password for the console, or access keys for programmatic access via the CLI or API.

2. Groups: A Group is a collection of IAM Users. Instead of assigning permissions to each user individually, you assign permissions to a group and add users to it. For example, a "Developers" group might have permissions to access EC2 and S3, but not billing or IAM settings.

3. Roles: A Role is a set of permissions that can be assumed — temporarily — by a user, an application, or an AWS service. Roles are extremely important in DevOps because they allow services like EC2 or Lambda to interact with other AWS services securely, without using hardcoded credentials.

For example, if your EC2 instance needs to read from an S3 bucket, you attach an IAM Role with S3 read permissions to the instance. The instance assumes the role automatically and receives temporary credentials, so no passwords or access keys are stored on the server.

4. Policies: A Policy is a JSON document that defines what is allowed or denied. Policies are attached to users, groups, or roles.

A Simple Example of an IAM Policy:
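As a minimal sketch, consider a hypothetical policy that grants full S3 access to the artifacts bucket from the S3 section but explicitly denies deletes. The Python below writes that policy as a dict. It then applies a simplified version of IAM's evaluation order: an explicit Deny wins, otherwise any matching Allow grants access, and everything else is implicitly denied. The `evaluate` helper is hypothetical. Real IAM evaluation also considers conditions, resource-based policies, permissions boundaries, and organization-level policies, which this sketch ignores. Passing several policies in the list mimics a user whose own policies and group policies all apply together.

```python
import json
from fnmatch import fnmatchcase

# Hypothetical policy: full S3 access to the artifacts bucket, but no deletes.
artifacts_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": "s3:*",
            "Resource": [
                "arn:aws:s3:::my-devops-project-artifacts",
                "arn:aws:s3:::my-devops-project-artifacts/*",
            ],
        },
        {
            "Effect": "Deny",
            "Action": "s3:DeleteObject",
            "Resource": "arn:aws:s3:::my-devops-project-artifacts/*",
        },
    ],
}


def _as_list(value):
    # "Action" and "Resource" may be a single string or a list of strings.
    return value if isinstance(value, list) else [value]


def _matches(patterns, value, case_sensitive=True):
    # fnmatch-style '*' and '?' wildcards approximate IAM's wildcard matching.
    if not case_sensitive:
        value = value.lower()
        patterns = [p.lower() for p in patterns]
    return any(fnmatchcase(value, p) for p in patterns)


def evaluate(policies, action, resource):
    """Simplified IAM decision: explicit Deny > Allow > implicit deny."""
    allowed = False
    for policy in policies:
        for statement in policy["Statement"]:
            # IAM action names are case-insensitive; resource ARNs are not.
            if (_matches(_as_list(statement["Action"]), action, case_sensitive=False)
                    and _matches(_as_list(statement["Resource"]), resource)):
                if statement["Effect"] == "Deny":
                    return "Deny"  # an explicit deny always wins
                allowed = True
    return "Allow" if allowed else "Deny (implicit)"


if __name__ == "__main__":
    print(json.dumps(artifacts_policy, indent=2))
    obj = "arn:aws:s3:::my-devops-project-artifacts/builds/v1.2.3/app.zip"
    print(evaluate([artifacts_policy], "s3:GetObject", obj))        # Allow
    print(evaluate([artifacts_policy], "s3:DeleteObject", obj))     # Deny
    print(evaluate([artifacts_policy], "ec2:StartInstances", obj))  # Deny (implicit)
```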
